Walk into any commercial data center and you will feel it immediately: a cold that seems excessive, almost theatrical, like someone left the freezer door open in a warehouse the size of a football field. The servers are running fine. The hardware is not melting. And yet the thermostat reads somewhere between 65 and 68 degrees Fahrenheit, sometimes lower. The American Society of Heating, Refrigerating and Air-Conditioning Engineers (ASHRAE) recommends an intake temperature range of 64.4 to 80.6 degrees Fahrenheit (18 to 27 degrees Celsius) for modern server equipment. Most facilities run near the bottom of that range, far below its ceiling. The question worth asking is not why data centers need cooling. The question is why they need so much more of it than the equipment actually requires.

Tech companies have a long history of building systems that appear optimized for one purpose while actually serving a very different one. The overcooled server room is one of the most expensive and least examined examples of this pattern.

The Spec Says One Thing, the Industry Does Another

In 2008, ASHRAE published guidelines stating that data centers could safely operate at much higher temperatures than the industry norm. Modern server hardware is tested to function at inlet temperatures up to 80.6 degrees Fahrenheit, the top of that recommended range. Some hyperscale operators, including Google and Facebook (now Meta), began running their facilities at higher temperatures years ago. Google has reported that some of its data centers operate at temperatures that would have been considered reckless by conventional standards just a decade earlier.

The energy savings from raising the thermostat even a few degrees are not trivial. For every degree Fahrenheit you raise the set point in a data center, you reduce cooling energy consumption by roughly two to four percent. At industrial scale, across the thousands of megawatts that global data centers consume, that number becomes staggering. The Lawrence Berkeley National Laboratory estimated that U.S. data centers consumed approximately 70 billion kilowatt-hours of electricity in 2014. Cooling accounts for somewhere between 30 and 40 percent of that load in traditional facilities.
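
To make the scale concrete, here is a rough back-of-the-envelope sketch in Python that uses only the figures quoted above. It assumes the two to four percent saving compounds with each degree, which is a simplification, and the five-degree raise and the function names are illustrative choices rather than anything taken from ASHRAE or the Berkeley Lab estimate.

```python
# Back-of-the-envelope estimate using the figures quoted in the text.
# Assumption: the per-degree saving compounds multiplicatively.

US_DATA_CENTER_KWH = 70e9  # approximate annual U.S. total cited above


def cooling_kwh_after_raise(cooling_kwh, degrees_f, saving_per_degree):
    """Cooling energy left after raising the set point by degrees_f."""
    return cooling_kwh * (1.0 - saving_per_degree) ** degrees_f


def national_savings_kwh(cooling_share, degrees_f, saving_per_degree):
    """Annual kWh saved nationally for a given cooling share and raise."""
    baseline = US_DATA_CENTER_KWH * cooling_share
    return baseline - cooling_kwh_after_raise(baseline, degrees_f, saving_per_degree)


RAISE_F = 5  # hypothetical set point increase, in degrees Fahrenheit

for share in (0.30, 0.40):        # cooling share of total load
    for saving in (0.02, 0.04):   # saving per degree Fahrenheit
        saved = national_savings_kwh(share, RAISE_F, saving)
        print(f"share={share:.0%} saving={saving:.0%}/degF -> "
              f"{saved / 1e9:.1f} billion kWh saved per year")
```

Under these assumptions, even a modest five-degree raise works out to a few billion kilowatt-hours a year across the low and high ends of the quoted ranges.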

So why hasn’t the industry broadly adopted higher operating temperatures? The answer involves liability, legacy thinking, and a structural incentive problem that is hiding in plain sight.

The Liability Trap Nobody Talks About

The most honest explanation you will get from a data center operations manager, usually off the record, goes something like this: nobody ever got fired for keeping the room cold. Telling a client that a server failed while the facility was running at 78 degrees Fahrenheit is a career-threatening conversation. Telling them the same failure happened at 65 degrees is practically a non-issue. The cold is not engineering. It is insurance against blame.

This is a classic principal-agent problem dressed up as a technical standard. The person making the cooling decision (the operations manager or facilities engineer) is not the person paying the electricity bill (the client or the data center owner’s shareholders). The incentives are misaligned, and the gap between recommended operating temperatures and actual practice is the direct result.

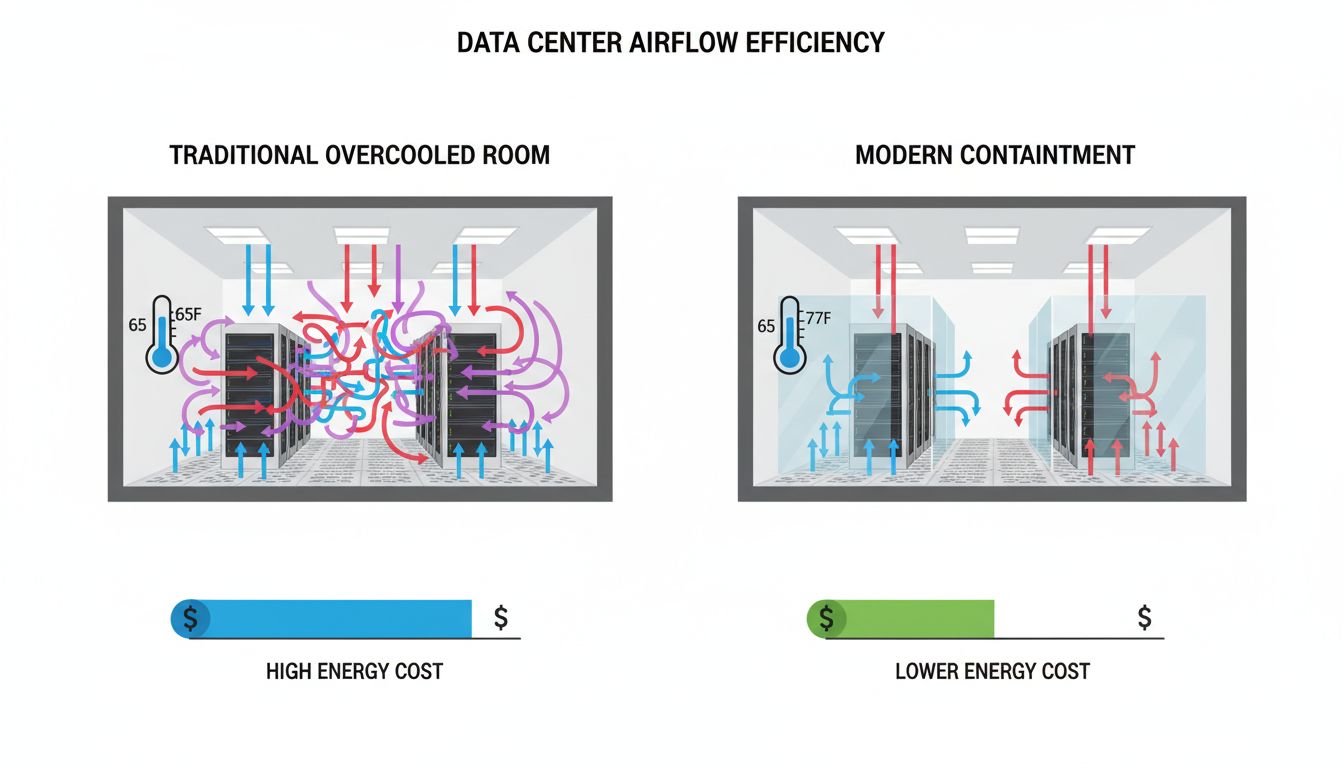

What makes this particularly interesting is how the overcooling norm became self-reinforcing. Equipment vendors began designing cooling infrastructure rated for the colder operating range. Contracts were written with uptime guarantees that implicitly assumed colder temperatures. Insurance policies followed the same logic. Each layer of the industry built on assumptions established by the previous one, and nobody went back to question whether the foundation was actually necessary.

This is the same mechanism behind a surprising number of tech industry practices that look like technical decisions but are actually organizational ones. Software bugs are sometimes left unfixed for reasons that have nothing to do with engineering difficulty and everything to do with who bears the cost of fixing them versus who bears the risk of leaving them alone.

The Energy Cost Is Enormous and Largely Ignored

A mid-size enterprise data center running at 65 degrees instead of 75 degrees might spend an additional one to two million dollars per year on cooling energy, depending on location and facility size. Across the thousands of commercial colocation and enterprise data centers operating in the United States alone, the aggregate waste plausibly runs from hundreds of millions into billions of dollars annually, depending on electricity prices and how much of the national cooling load is actually avoidable.
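
How large the per-facility figure is depends heavily on facility size and electricity price. The following sketch shows the arithmetic for a ten-degree gap. Every input is a hypothetical placeholder: the facility loads, the 0.10 dollar per kilowatt-hour price, and the use of range midpoints are assumptions for illustration, not data from any operator.

```python
# Facility-level sketch. All inputs below are hypothetical placeholders.

HOURS_PER_YEAR = 8760


def annual_cooling_cost(total_load_kw, cooling_share, price_per_kwh):
    """Yearly cooling bill in dollars for a facility at constant load."""
    return total_load_kw * cooling_share * HOURS_PER_YEAR * price_per_kwh


def extra_cost_of_overcooling(total_load_kw, cooling_share, price_per_kwh,
                              degrees_colder, saving_per_degree):
    """Dollars per year spent by running degrees_colder below a warmer set point.

    cooling_share describes the facility at the colder set point; the warmer
    bill is derived by compounding the per-degree saving.
    """
    cold = annual_cooling_cost(total_load_kw, cooling_share, price_per_kwh)
    warm = cold * (1.0 - saving_per_degree) ** degrees_colder
    return cold - warm


for load_mw in (2, 5, 10, 20):  # hypothetical facility sizes
    extra = extra_cost_of_overcooling(
        total_load_kw=load_mw * 1000,
        cooling_share=0.35,       # midpoint of the 30 to 40 percent range
        price_per_kwh=0.10,       # assumed electricity price in dollars
        degrees_colder=10,        # 65 versus 75 degrees Fahrenheit
        saving_per_degree=0.03,   # midpoint of the 2 to 4 percent range
    )
    print(f"{load_mw:>3} MW facility: about ${extra:,.0f} extra per year")
```

The result scales linearly with both load and price, so the size of the facility and the local electricity rate dominate the answer.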

This is not a problem without a known solution. The hyperscalers figured it out. Google, Microsoft, and Amazon have invested heavily in precision cooling systems, containment architectures that separate hot and cold air more effectively, and in some cases, radical approaches like submerging servers in dielectric fluid or siting facilities in naturally cold climates where outside air can do most of the cooling work. Microsoft’s Project Natick placed a sealed data center module on the ocean floor off the coast of Scotland, using the surrounding seawater as a heat sink.

But these solutions require capital investment, organizational willingness to challenge embedded assumptions, and a client base sophisticated enough to accept a new operating standard. The enterprise market, where most of the waste occurs, has none of those things in abundance.

Why the Hyperscalers Broke the Pattern

The companies that actually changed their cooling practices share a common characteristic: they owned their infrastructure outright and operated at a scale where even marginal efficiency gains translated into hundreds of millions of dollars in savings. When you are building a one-gigawatt data center, a three percent reduction in cooling overhead is not an incremental improvement. It is a competitive advantage that compounds across every facility you operate.

This is a version of the same dynamic that plays out whenever a company is large enough to internalize costs that smaller operators externalize onto someone else. Tech giants have a long history of absorbing short-term losses to own structural advantages that smaller competitors cannot replicate. The hyperscaler approach to data center cooling is a quieter version of that same playbook.

For the rest of the industry, the barrier is not technical knowledge. The ASHRAE guidelines have been public for years. The barrier is the combination of legacy contracts, risk-averse clients, and the simple organizational reality that changing a standard that has never visibly failed is very hard to justify to the people who would have to approve the change.

What Comes Next

The pressure to change is building from an unexpected direction: artificial intelligence. The GPU clusters required for large-scale AI training generate significantly more heat per rack than traditional server infrastructure, and they do it in a far more concentrated space. Air cooling at any temperature is increasingly inadequate for the densest AI workloads. Liquid cooling, whether direct-to-chip or immersion-based, is becoming a practical necessity rather than an experimental curiosity.
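
The limit on air cooling can be illustrated with a steady-state heat balance, in which the heat removed equals air density times specific heat times volumetric flow times the temperature rise across the rack. The sketch below is a simplified model, not a facility design tool: the air properties are approximate room-condition values, and the rack powers and the 12 kelvin temperature rise are assumed examples.

```python
# Steady-state heat balance for air cooling: P = rho * c_p * V * delta_T.
# Air properties are approximate room-condition values; rack powers are
# hypothetical examples, not measurements.

AIR_DENSITY = 1.2            # kg per cubic metre
AIR_SPECIFIC_HEAT = 1005.0   # joules per kg per kelvin
CFM_PER_M3S = 2118.88        # cubic feet per minute in one cubic metre per second


def required_airflow_m3s(rack_power_w, delta_t_k):
    """Airflow needed to carry rack_power_w away at a given air temperature rise."""
    return rack_power_w / (AIR_DENSITY * AIR_SPECIFIC_HEAT * delta_t_k)


DELTA_T_K = 12.0  # assumed inlet-to-outlet temperature rise

for rack_kw in (8, 40, 100):
    flow = required_airflow_m3s(rack_kw * 1000, DELTA_T_K)
    print(f"{rack_kw:>3} kW rack: {flow:.2f} m^3/s ({flow * CFM_PER_M3S:,.0f} CFM)")
```

Airflow scales linearly with rack power in this model, so a rack drawing ten times the power needs ten times the air at the same temperature rise. A colder supply helps only to the extent that it allows a larger rise across the rack.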

The irony is that the AI boom may finally force the data center industry to modernize its cooling infrastructure, not because anyone decided to optimize for efficiency, but because the old approach simply cannot handle the new heat loads. The overcooled server room may not be reformed so much as made obsolete by hardware that generates more heat than cold air can remove regardless of how much of it you push through the room.

The hidden reason server rooms are kept colder than necessary turns out to be the same reason many entrenched industry practices persist long after the technical justification has expired: the cost of change is visible and immediate, while the cost of the status quo is diffuse, chronic, and easy to pass on to someone else. That is a lesson that extends well beyond the data center floor.