The first thing Amazon’s engineers figured out about page load time was that every 100 milliseconds of latency cost the company roughly 1% in sales. Google ran similar internal experiments and found comparable results. Both companies published these findings, cited them in engineering blogs, and used them to justify enormous investments in performance infrastructure.

So why does the rest of the industry move in the opposite direction?

Open a browser tab to almost any major consumer web app and run a performance audit. The results are reliably bad. Megabytes of JavaScript that block rendering. Third-party scripts from a dozen vendors firing before your content loads. Images delivered at three times the resolution a screen can display. These aren’t the symptoms of companies that lack resources. They’re the outputs of organizations that made choices, consciously or not, to accept slowness in exchange for something else.

Understanding what that something else is requires peeling back several layers of how product decisions actually get made at scale.

The Measurement Problem That Makes Speed Invisible

The core issue is that page load time is extraordinarily hard to connect to revenue in the short term. Amazon and Google can run rigorous experiments because they have the traffic volume to isolate single variables and measure outcomes within days. Most companies don’t have that luxury, and even those that do face a subtler problem: the damage from slowness accumulates gradually over time rather than appearing as a sharp, attributable revenue drop.

Users who bounce because a page took four seconds to load don’t file a complaint. They don’t show up in a customer churn report with “slow website” as the reason. They simply don’t come back, or they come back less often, and that signal gets diluted across hundreds of other explanatory variables. Product teams see an engagement metric trending slightly downward and debate whether it’s the new feature rollout, the seasonal change in traffic, or the competitor’s recent campaign. Almost nobody guesses it’s the 2.4 megabytes of analytics JavaScript loading on every page view.

When a problem doesn’t generate a clear, traceable cost, organizations don’t treat it as a priority. They treat it as a background condition.

Third-Party Scripts and the Vendor Accumulation Problem

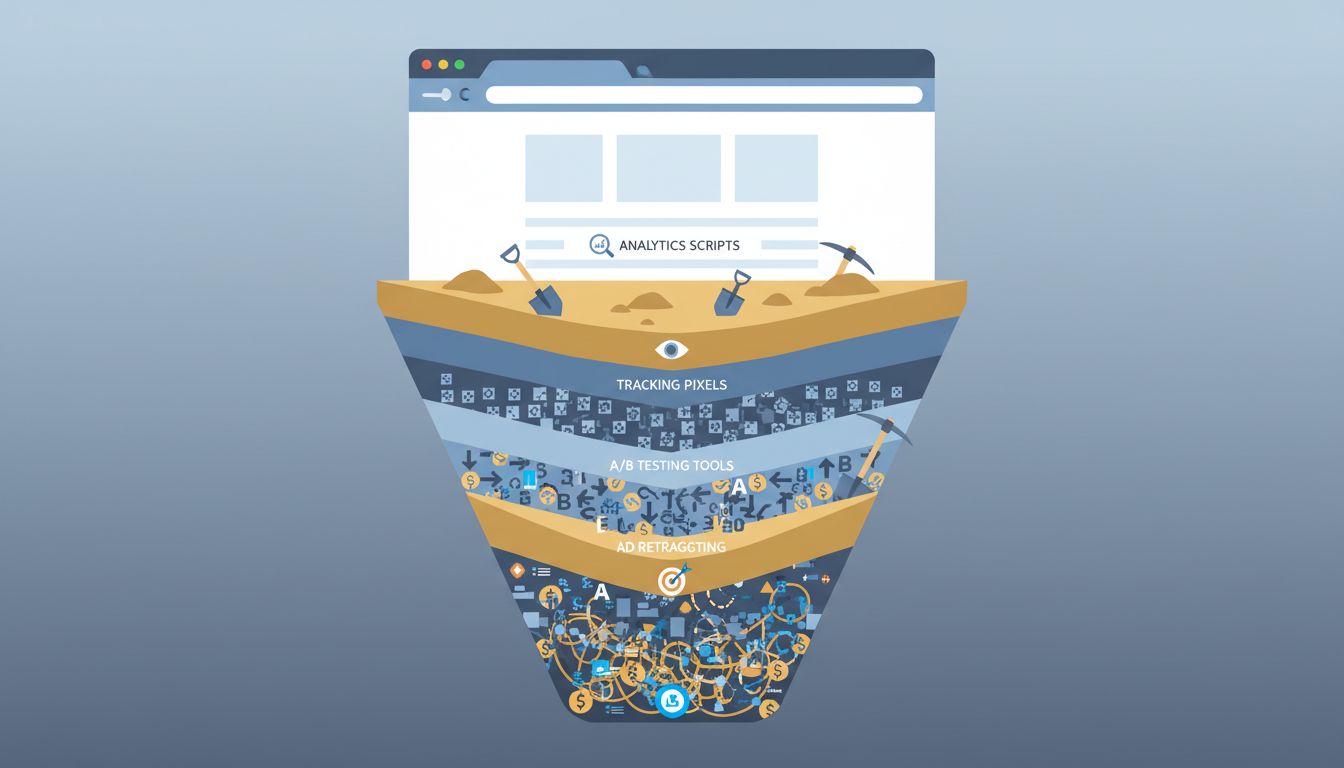

The single biggest driver of intentional slowness isn’t bad engineering. It’s a business process: the accumulation of third-party tracking, analytics, and advertising scripts that marketing, sales, and finance teams add to sites over years without a centralized accounting of what they cost.

Each individual addition seems reasonable. A/B testing software adds maybe 80 milliseconds of load time. A customer data platform adds another 120. A heat-mapping tool adds 60. A retargeting pixel adds 40. None of these numbers clears a threshold that triggers a serious performance review. But stack a dozen of them and you’ve added roughly a full second to median load time, ten times the 100-millisecond increment that Amazon tied to a 1% drop in sales, without any single team taking ownership of the decision.
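The arithmetic of that accumulation can be sketched in a few lines of Python. This is an illustration, not a measurement: the first four costs come from the paragraph above, the other eight vendors and their costs are invented placeholders, the 200-millisecond review threshold is hypothetical, and the sales estimate simply extrapolates the 1%-per-100-milliseconds figure linearly, which is a strong assumption. Summing costs also ignores scripts that load in parallel, so treat the total as a rough upper bound.

```python
# Per-script latency cost in milliseconds. The first four values come from
# the article; the remaining eight are invented placeholders so that the
# list reaches a dozen vendors.
SCRIPT_COST_MS = {
    "ab_testing": 80,
    "customer_data_platform": 120,
    "heat_mapping": 60,
    "retargeting_pixel": 40,
    "chat_widget": 150,
    "tag_manager": 90,
    "ad_exchange": 110,
    "session_replay": 100,
    "consent_banner": 70,
    "social_embed": 80,
    "attribution_sdk": 50,
    "survey_popup": 50,
}

# Hypothetical threshold above which a single script would trigger a review.
REVIEW_THRESHOLD_MS = 200


def total_added_latency_ms(costs: dict[str, int]) -> int:
    """Sum the per-script costs (assumes the costs simply add up)."""
    return sum(costs.values())


def scripts_over_threshold(costs: dict[str, int], threshold_ms: int) -> list[str]:
    """Return the scripts that would individually trigger a review."""
    return [name for name, ms in costs.items() if ms >= threshold_ms]


def estimated_sales_loss_pct(added_ms: float, pct_per_100ms: float = 1.0) -> float:
    """Linear extrapolation of the 1%-per-100ms figure (an assumption)."""
    return added_ms / 100 * pct_per_100ms


if __name__ == "__main__":
    total = total_added_latency_ms(SCRIPT_COST_MS)
    flagged = scripts_over_threshold(SCRIPT_COST_MS, REVIEW_THRESHOLD_MS)
    print(f"scripts flagged individually: {flagged}")
    print(f"total added latency: {total} ms")
    print(f"naive sales impact: {estimated_sales_loss_pct(total):.1f}%")
```

No script is flagged on its own, yet the total reaches a full second. A per-script review never sees the cost that only the whole page carries.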

The reason this keeps happening is structural. The team evaluating a heat-mapping tool is optimizing for better UX data. They’re not responsible for page load time and have no incentive to weigh the performance cost against the analytics benefit. The engineering team that might flag the issue often lacks the organizational standing to block a contract the VP of Marketing has already signed. The result is a commons problem: everyone takes a small piece, nobody pays the full cost.

This is where the deliberate part of the headline earns its keep. These decisions are made deliberately, in the sense that they reflect genuine priorities and real tradeoffs. They just aren’t made with performance explicitly on the table.

The A/B Test That Nobody Wants to Run

Here’s the test that would settle the question for most companies: take your ten heaviest third-party scripts, remove them from a random sample of 10% of users for 30 days, and measure conversion, retention, and revenue against the control group. This experiment would directly quantify what the script load is costing you.
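A minimal sketch of how such a holdout could be wired up, assuming users have a stable ID. The salt string, the function names, and the `GroupStats` type are all hypothetical, and a real experiment would also need a significance test and guardrail metrics, which are omitted here.

```python
import hashlib
from dataclasses import dataclass

# Share of users who receive pages without the heavy third-party scripts.
HOLDOUT_PERCENT = 10


def in_holdout(user_id: str, salt: str = "script-holdout-v1") -> bool:
    """Deterministically assign a user to the holdout via a salted hash.

    The same user always lands in the same group for the full 30 days,
    and changing the salt reshuffles the assignment for a new experiment.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # bucket in the range 0..99
    return bucket < HOLDOUT_PERCENT


@dataclass
class GroupStats:
    users: int
    conversions: int

    @property
    def conversion_rate(self) -> float:
        return self.conversions / self.users if self.users else 0.0


def relative_lift(holdout: GroupStats, control: GroupStats) -> float:
    """Relative change in conversion rate for the holdout versus control."""
    if control.conversion_rate == 0:
        raise ValueError("control group has no conversions to compare against")
    return holdout.conversion_rate / control.conversion_rate - 1.0


if __name__ == "__main__":
    # Made-up numbers purely to show the calculation.
    holdout = GroupStats(users=1_000, conversions=33)
    control = GroupStats(users=9_000, conversions=270)
    print(f"relative lift without scripts: {relative_lift(holdout, control):+.1%}")
```

Hashing the user ID rather than drawing a random number on each request keeps a user's experience consistent across sessions, which matters when the outcome metrics include retention.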

Almost no company runs it. The marketing and analytics teams whose tools would be in the test have no interest in sponsoring a study that might result in their budget being cut. The engineers who could design the experiment don’t control the business outcome metrics that would validate it. And leadership, presented with the proposal, typically sees a 30-day revenue experiment with uncertain upside as a lower priority than the roadmap items already committed to the board.

The test that would make performance legible to executives doesn’t get run because the incentive structure of large organizations actively discourages it.

When Slowness Is a Feature

Some companies almost certainly do introduce friction deliberately, though they rarely acknowledge it publicly. The clearest example is in onboarding and checkout flows, where a page that loads instantly can actually reduce conversion rates because users feel they haven’t had enough time to evaluate what they’re agreeing to. In certain contexts, particularly financial services and healthcare, slower apparent progress through a multi-step form creates a sense of system deliberation that builds trust.

More cynically, slowness can serve as a soft lock-in mechanism. If a platform throttles its export path so that a competitor’s import tool pulls your data at 50 records per second instead of 5,000, you are less likely to bother switching. This is adjacent to the deliberate friction that companies use to make APIs difficult to navigate: the barrier to exit is engineered into the product’s performance characteristics rather than its feature set.

Some platforms also appear to throttle performance selectively. Pages that users land on from paid search ads are sometimes faster than organic landing pages, presumably because the cost of a bounced paid click is directly measurable in a way that organic traffic isn’t. If this is intentional, it’s a telling illustration of the measurement principle above: speed investment flows toward performance that can be tied directly to dollars.

The Real Cost of Excessive Personalization

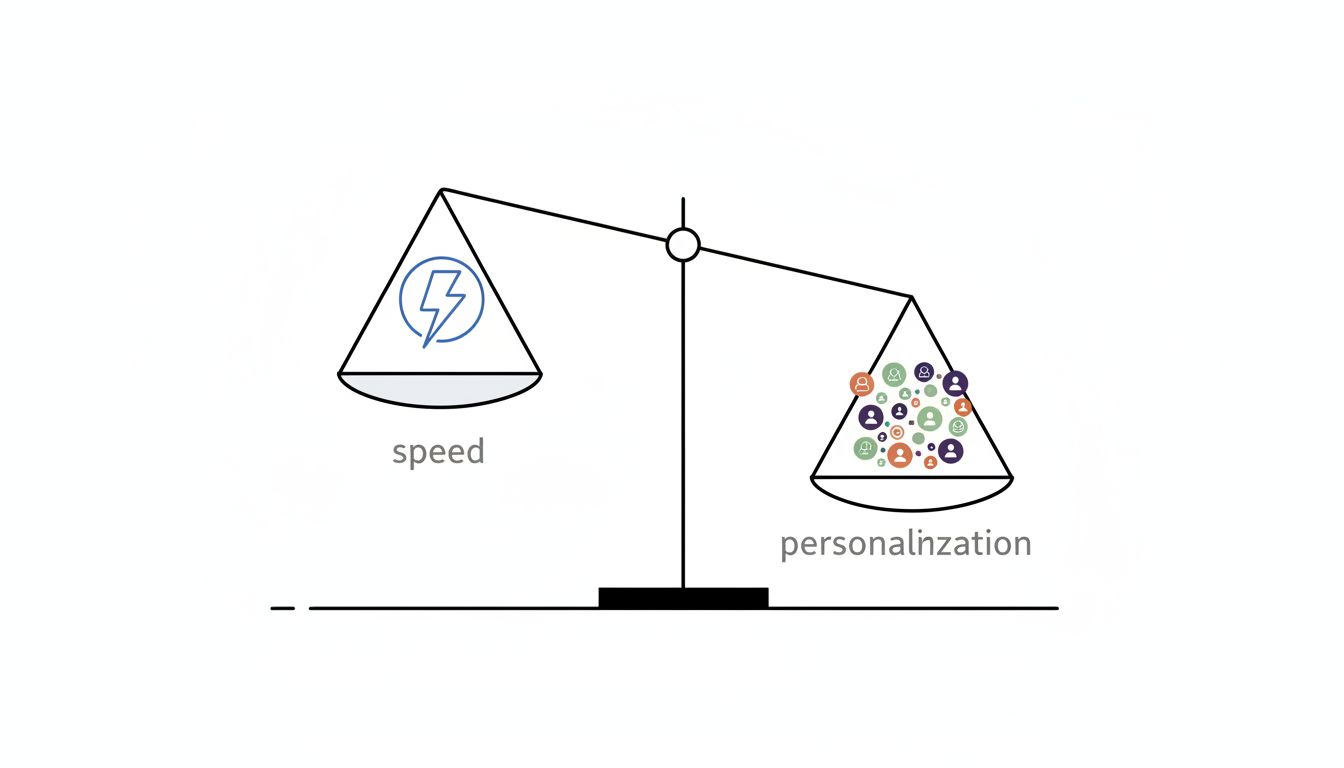

Modern web apps increasingly generate pages server-side using real-time data rather than serving cached, static content. This is partly because users and product teams genuinely want personalized experiences: a feed ranked by your behavior, recommendations based on your history, prices adjusted for your location or customer tier.

But the performance cost of personalization is large and often underestimated at the point of product design. A static page can be served from a content delivery network node a few milliseconds from the user. A fully personalized page has to be assembled on demand, pulling from multiple data sources, often with database queries that weren’t designed with sub-100-millisecond response times in mind.

The problem is that “add personalization” looks like a straightforward product improvement in a roadmap review. The performance implications only become visible when the engineering team does latency analysis, and by then the feature is already committed. Companies that have strong performance cultures (Google and Cloudflare are examples) build the performance cost into the design review. Companies without that culture treat it as an engineering implementation detail and discover the cost after launch, when fixing it competes with new features for engineering time.

Why Google’s Core Web Vitals Changed More Than Engineers Expected

In 2020, Google announced that page experience signals including loading speed, measured through a metric called Largest Contentful Paint, would become factors in search ranking. The effect on corporate behavior was immediate and significant, not because the ranking impact was enormous (it wasn’t, initially), but because it gave performance engineers a business case they could take to leadership.

Before Core Web Vitals, a performance engineer arguing for a two-week sprint to reduce JavaScript bundle size had to make an abstract case about user experience and indirect revenue effects. After Core Web Vitals, that same engineer could say: our site scores poorly on a metric Google uses for ranking, and here is what that costs us in organic traffic. The argument became legible to people who controlled budgets.
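To make “scores poorly” concrete, here is a small sketch that rates a set of field measurements. It assumes Google's published Largest Contentful Paint thresholds as I understand them (good at or under 2.5 seconds, poor above 4.0 seconds, judged at the 75th percentile of page loads). The function names and the sample values are hypothetical.

```python
import math

# Largest Contentful Paint thresholds in milliseconds, per Google's
# published guidance as understood here (an assumption worth re-checking).
GOOD_LCP_MS = 2500
POOR_LCP_MS = 4000


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty list of samples."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))  # 1-based rank
    return ordered[max(rank, 1) - 1]


def classify_lcp(samples_ms: list[float]) -> str:
    """Rate a page by the 75th percentile of its LCP samples."""
    p75 = percentile(samples_ms, 75)
    if p75 <= GOOD_LCP_MS:
        return "good"
    if p75 <= POOR_LCP_MS:
        return "needs improvement"
    return "poor"


if __name__ == "__main__":
    # Invented field data for a single page, in milliseconds.
    samples = [1200, 1800, 2100, 2400, 3100, 3900, 4600, 5200]
    print(classify_lcp(samples))
```

Rating the 75th percentile rather than the mean means one in four page loads can be slower than the reported figure, so a page whose median looks healthy can still fall short.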

This is the clearest evidence for the measurement hypothesis. Performance work didn’t become technically easier in 2020. The underlying engineering was the same. What changed was the availability of an external, authoritative metric that connected performance to a business outcome executives already cared about.

What This Means

The slow website problem is, at its core, an organizational incentive problem with some deliberate exploitation layered on top. Most of the slowness comes from decisions that weren’t made with performance in mind at all, not because engineers are lazy or companies are careless, but because the cost of slowness doesn’t show up cleanly in the metrics that drive decisions.

The genuinely deliberate cases (throttled competitors, friction as trust-building, and selective performance investment based on traffic source) are real but narrower than the headline framing suggests. They’re opportunistic choices made within a system that already doesn’t prioritize speed, not a comprehensive strategy to make products worse.

The companies that consistently ship fast products have solved an organizational problem as much as a technical one: they’ve found ways to make performance cost visible at the point where product and business decisions are made. Core Web Vitals did some of this externally. Strong internal performance cultures do it internally. Without either, the default organizational behavior is to accept slowness one vendor contract at a time, until users are waiting for pages to load on infrastructure that could serve them in a fraction of the time.