A 1% conversion rate would get a sales team fired in almost any traditional industry. In tech, it is often treated as a sign that the pricing strategy is working exactly as intended. This counterintuitive logic sits at the heart of how software companies, hardware makers, and platform businesses set prices, and understanding it reveals something fundamental about how the tech economy actually operates.

The conventional view of pricing is simple: set a price where supply meets demand, maximize the number of buyers, and grow revenue from volume. Many tech companies, particularly those selling software, have quietly abandoned this model, and the reasons are more calculated than most consumers realize. The math behind software subscription pricing is a good starting point for seeing how deliberately these numbers are chosen.

The Economics of Artificial Scarcity

Digital products cost almost nothing to reproduce. Once a piece of software is built, the marginal cost of delivering it to one more customer is effectively zero. This creates a pricing paradox: if you charged only what it costs to serve one additional user, the price would be close to zero. But a price near zero cannot recover the large fixed cost of building and maintaining the software, so it destroys the business model.

So tech companies manufacture scarcity through pricing. They set prices that the overwhelming majority of potential users will decline, not because they need to recoup production costs, but because exclusion itself creates value. When only 1% of a potential market buys a product at a high price point, that product signals something to its buyers: you are part of a smaller, more serious group. Enterprise software is the clearest example. A tool priced at $50,000 per year is not priced there because it costs $50,000 to run. It is priced there because a company willing to pay $50,000 is, almost by definition, a company that takes the product seriously, integrates it deeply, and is unlikely to churn.

Why High Failure Rates Protect the Business

There is a second, less obvious reason for pricing at near-market-failure levels: it filters out the customers who would cost the most to serve.

Tech companies learned years ago that a cheap or free customer base generates enormous support costs, feature requests that fragment product development, and churn rates that make revenue projections meaningless. When Basecamp, the project management company, raised prices and simplified its offerings, it did not lose revenue. It shed customers who required disproportionate support and retained those who integrated the product into their core operations.

This logic may also explain why enterprise software so often looks and feels terrible to use. The interface is not the selling point. The depth of integration, the switching cost, and the exclusivity of the buyer pool are the selling points. A confusing interface can even act as an additional filter, ensuring that only committed buyers make it through.

The filtering effect extends to product development as well. Companies that launch with missing features on purpose are running a version of the same strategy: the price (in this case, the price paid in usability) screens for users who are genuinely aligned with the product’s direction.

The Power User Math

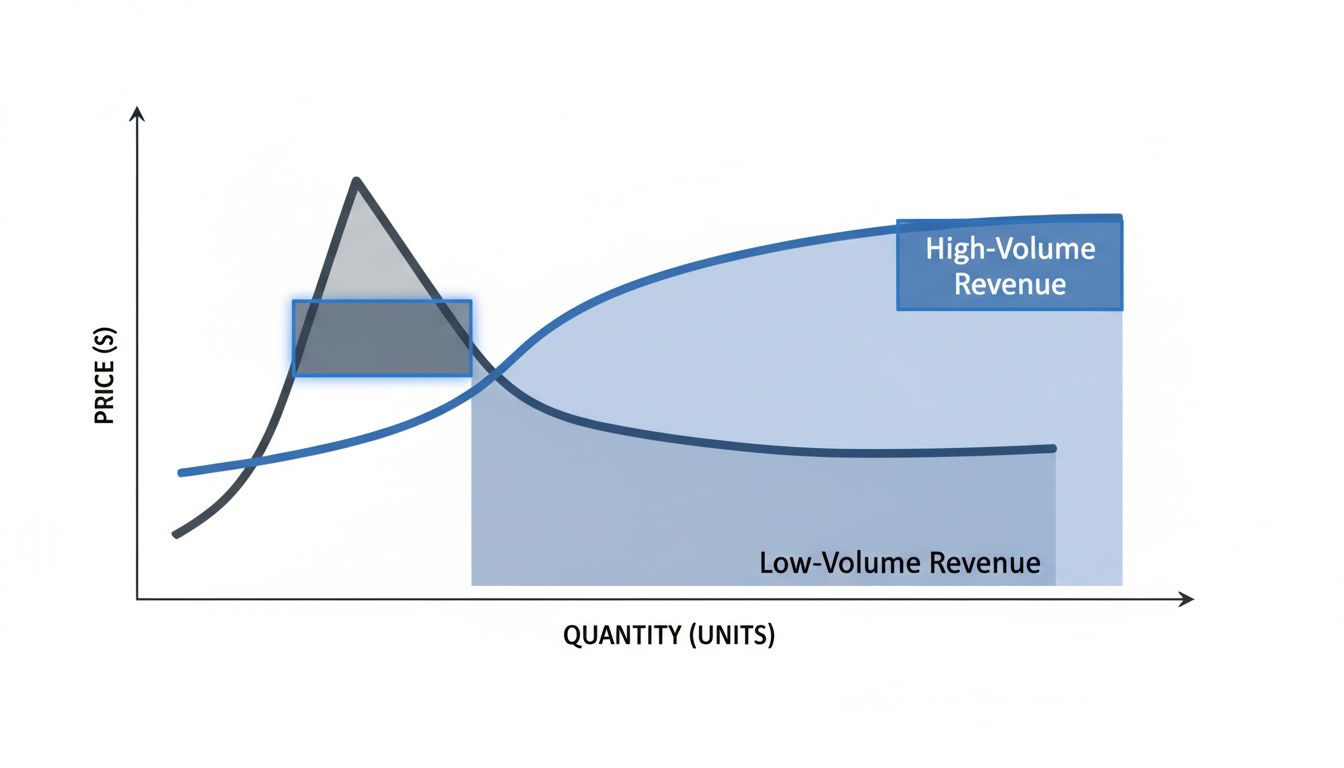

The 1% model depends on a specific calculation: the revenue from the small group of buyers must exceed what you would collect from a much larger group at a lower price. For many software products, this is not a close call.

Consider a productivity tool with a potential user base of 10 million people. If it converts 10% (1 million customers) at $10 per month, it generates $10 million in monthly recurring revenue. If it converts 1% (100,000 customers) at $150 per month, it generates $15 million. The smaller customer base brings in 50% more revenue from one-tenth as many customers, costs less to support, and tends to churn far less. The 99% who never converted were not a missed opportunity. They were correctly identified as the wrong customers.
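The comparison above reduces to multiplying paying customers by the monthly price. The minimal Python sketch below reproduces both figures and also computes the break-even price, meaning the monthly price at which the 1% tier would only match the 10% tier's revenue. It assumes conversion rates are whole percentages and ignores support costs and churn. These are illustrative simplifications, and the function names are hypothetical.

```python
def monthly_recurring_revenue(addressable_users: int, conversion_pct: int, monthly_price: int) -> int:
    """Paying customers multiplied by the monthly price (support costs and churn ignored)."""
    # Floor division drops any fractional customer; exact for the numbers used here.
    paying_customers = addressable_users * conversion_pct // 100
    return paying_customers * monthly_price


def breakeven_price(base_pct: int, base_price: int, premium_pct: int) -> float:
    """Monthly price at which the smaller premium cohort matches the base cohort's revenue.

    Revenue is equal when premium_pct * p == base_pct * base_price, so the
    size of the addressable market cancels out of the comparison.
    """
    return base_price * base_pct / premium_pct


ADDRESSABLE_USERS = 10_000_000  # potential users from the example in the text

volume_mrr = monthly_recurring_revenue(ADDRESSABLE_USERS, conversion_pct=10, monthly_price=10)
premium_mrr = monthly_recurring_revenue(ADDRESSABLE_USERS, conversion_pct=1, monthly_price=150)
threshold = breakeven_price(base_pct=10, base_price=10, premium_pct=1)

print(f"10% at $10/month: ${volume_mrr:,} MRR")    # 10% at $10/month: $10,000,000 MRR
print(f"1% at $150/month: ${premium_mrr:,} MRR")   # 1% at $150/month: $15,000,000 MRR
print(f"break-even premium price: ${threshold:,.0f}/month")  # break-even premium price: $100/month
```

Because the market size cancels out, the 1% tier out-earns the 10% tier at any price above $100 per month, however many potential users there are. At $150 its revenue is 50% higher, which is why the comparison is not a close call. A fuller model would subtract per-customer support cost and churn. If the argument above holds, those terms would tend to widen the gap rather than close it.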

This may also explain why tech companies often build internal tools that are dramatically better than what they sell to the public. The products sold externally are priced and scoped for a specific buyer profile. The tools used internally are optimized purely for performance, without the pricing architecture that shapes external products.

When the Strategy Backfires

The 99% failure rate model is not without risk. It assumes the 1% who buy are enough to sustain the business long-term, and it assumes the 99% who don’t buy will not find a cheaper alternative that captures enough of the value.

This is exactly what has happened in several markets. When a well-funded competitor enters at a dramatically lower price point and accepts lower margins, the high-price incumbent can find its buyer pool suddenly fragile. The customers who paid $50,000 a year because there was no alternative become candidates to switch the moment a $5,000 alternative appears that covers 80% of the use cases.

Platform companies often guard against this threat through a different mechanism entirely: they make the switching cost so high that even a dramatically cheaper competitor cannot pull customers away. But for companies that rely purely on pricing exclusivity without lock-in, the strategy has a structural vulnerability.

The companies most exposed are those that set high prices as a shortcut to perceived prestige rather than as a reflection of genuine switching costs or deep integration. Prestige pricing without product depth is just expensive until it isn’t.

What This Means for Buyers and Builders

For anyone buying technology, the 99% failure rate model is a useful lens. When a product seems inexplicably expensive for what it does, the price is often not about the product. It is about the population of buyers the company wants. That is not inherently manipulative, but it is worth understanding. You are not just buying features. You are buying membership in a specific buyer cohort, with all the support, integration depth, and product stability that cohort commands.

For anyone building technology, the lesson is harder. Pricing at the 99% failure level works when the 1% is genuinely the right customer for your product, when the revenue math is sound, and when you have either deep lock-in or a sufficiently differentiated product to resist cheaper competition. Pricing high to seem premium, without the underlying economics to support it, is a strategy that tends to work until it stops working very suddenly.

The surprising insight is not that tech companies price for failure. It is that for the best-run companies, failure at scale is not a bug in the pricing model. It is the product of it.