The loading spinner you stare at during lunch hour is not always a sign that a company’s infrastructure is overwhelmed. Sometimes it’s a sign that their infrastructure is working exactly as designed. Deliberately throttling server performance during peak demand is an established practice in tech, and the reasoning behind it is less about technical limitation than about economics, psychology, and competitive positioning.

1. Provisioning for Peak Demand Would Cost More Than the Revenue It Protects

The most straightforward reason is arithmetic. Building server capacity to handle your absolute highest traffic moment, say a Black Friday rush or a post that suddenly goes viral, means paying for that capacity every other hour of the year when it sits idle. For many services, peak demand can be ten to twenty times the baseline. Provisioning for the peak at that ratio means running infrastructure at roughly 5 to 10 percent utilization most of the time.
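To make the utilization arithmetic concrete, here is a minimal Python sketch. The demand profile is a made-up assumption (8,700 ordinary hours at one unit of load, 60 peak hours at fifteen units), not data from any real service, and `average_utilization` is a hypothetical helper.

```python
def average_utilization(hourly_demand, capacity):
    """Mean fraction of provisioned capacity in use, capping demand at capacity."""
    served = sum(min(d, capacity) for d in hourly_demand)
    return served / (capacity * len(hourly_demand))

# Hypothetical year: 8,700 ordinary hours at 1 unit of load, 60 peak hours at 15 units.
year = [1.0] * 8700 + [15.0] * 60

sized_for_peak = average_utilization(year, capacity=15.0)
sized_for_3x = average_utilization(year, capacity=3.0)
# Load a 3x-baseline fleet cannot serve during peak hours; it must be queued, degraded or throttled.
unserved_at_3x = sum(max(0.0, d - 3.0) for d in year)

print(f"sized for peak: {sized_for_peak:.1%}, sized for 3x baseline: {sized_for_3x:.1%}")
```

Under these assumed numbers, the peak-sized fleet averages about 7.3% utilization, while a fleet sized at three times baseline averages about 33.8% and leaves only the 60 peak hours short of capacity.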

The economics flip fast. A company that experiences a few hundred high-traffic hours annually might spend an order of magnitude more on idle capacity than the revenue those peak hours actually generate. Throttling, combined with queuing and graceful degradation, lets them serve “good enough” performance at a fraction of the cost. The performance ceiling is a financial decision, not an engineering one.
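A rough sketch of how throttling, queuing, and graceful degradation fit together at a service's front door is shown below. `AdmissionController`, its per-tick capacity, and the queue limit are hypothetical illustrations, not any particular company's design: each tick it serves what capacity allows, holds a bounded backlog, and sheds the overflow to a cheaper fallback path.

```python
import collections

class AdmissionController:
    """Hypothetical front door: serve up to `capacity` requests per tick,
    hold up to `queue_limit` more, and shed the rest."""

    def __init__(self, capacity, queue_limit):
        self.capacity = capacity
        self.queue_limit = queue_limit
        self.queue = collections.deque()

    def tick(self, incoming):
        """Process one time step; returns counts of served, still-queued and shed requests."""
        self.queue.extend(incoming)
        served = [self.queue.popleft() for _ in range(min(self.capacity, len(self.queue)))]
        shed = []
        while len(self.queue) > self.queue_limit:
            # Newest arrivals overflow; a real service would hand these a degraded
            # (e.g. cached or "try again later") response instead of full processing.
            shed.append(self.queue.pop())
        return {"served": len(served), "queued": len(self.queue), "shed": len(shed)}

ctl = AdmissionController(capacity=100, queue_limit=50)
print(ctl.tick(range(180)))  # a spike: some served now, some queued, the rest shed
print(ctl.tick(range(40)))   # demand subsides and the backlog drains
```

The bounded queue is the key design choice: it absorbs short bursts without letting waiting times grow without limit during a sustained spike.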

2. Degraded Performance Protects High-Value Users at the Expense of Low-Value Ones

Throttling during peak hours is rarely applied uniformly. Many major platforms use some form of tiered quality of service, in which paid subscribers, high-engagement users, or enterprise accounts receive priority routing while free-tier or low-activity accounts get slower responses. The slowdown the average user experiences is, in part, a byproduct of the system protecting its most profitable relationships.
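Tiered quality of service can be as simple as a priority queue keyed on account tier. The sketch below assumes three hypothetical tiers (`enterprise`, `premium`, `free`) and strict priority; the tier names and ranks are illustrative only.

```python
import heapq
import itertools

# Hypothetical tier ranks: lower number is served first.
TIER_PRIORITY = {"enterprise": 0, "premium": 1, "free": 2}

class TieredQueue:
    def __init__(self):
        self._heap = []
        self._order = itertools.count()  # tie-breaker keeps FIFO order within a tier

    def push(self, request_id, tier):
        heapq.heappush(self._heap, (TIER_PRIORITY[tier], next(self._order), request_id))

    def drain(self, capacity):
        """Serve up to `capacity` requests this tick, highest tier first."""
        served = []
        while self._heap and len(served) < capacity:
            _, _, request_id = heapq.heappop(self._heap)
            served.append(request_id)
        return served

q = TieredQueue()
for rid, tier in [("a", "free"), ("b", "premium"), ("c", "enterprise"), ("d", "free")]:
    q.push(rid, tier)
print(q.drain(2))   # the paying tiers go first when capacity is short
print(q.drain(10))  # free-tier requests wait for leftover capacity
```

Strict priority like this can starve the free tier entirely under sustained load; weighted fair queuing is a common alternative when that outcome is unacceptable.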

Similar logic shows up in cloud free tiers, where the small burstable instances AWS offers can be held to a baseline CPU level once their burst credits run out, and it surfaces in consumer products too. Free, ad-supported streaming tiers are widely reported to get lower delivery priority during congestion windows, and Spotify’s free users have historically reported worse buffering during peak hours than premium subscribers. The slow load isn’t random. It’s a feature that makes the paid tier feel faster by contrast.

3. Artificial Scarcity Creates Perceived Value

There’s a more subtle mechanism at work beyond pure cost management. Scarcity, even artificial scarcity, increases perceived value. A platform that is occasionally slow during peak hours signals that it’s in demand. One that is always instantaneous signals that it has excess capacity, which psychologically reads as underutilized or unimportant.

This is the same logic that makes restaurant reservation systems show “only 2 tables left” warnings. Friction during high-demand periods can reinforce the sense that a product is worth waiting for. It’s a thin line between genuine congestion and manufactured urgency, and some products walk it deliberately. As explored in “Successful Apps Load Certain Features Slowly on Purpose,” the timing of a loading state can be engineered to shape how users emotionally experience a product, independent of actual processing time.
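One simple way to decouple a loading state from real processing time is to enforce a minimum display duration. The asyncio sketch below is an assumption-laden illustration: `with_min_loading_time` and `fast_lookup` are hypothetical names, and the 0.2-second floor is arbitrary. The work and a timer run concurrently, and the result is released only when both finish.

```python
import asyncio
import time

async def with_min_loading_time(work, min_seconds):
    """Run `work`, but keep the loading state up for at least `min_seconds`."""
    result, _ = await asyncio.gather(work, asyncio.sleep(min_seconds))
    return result

async def fast_lookup():
    await asyncio.sleep(0.01)  # stands in for a quick backend call
    return "results"

start = time.perf_counter()
value = asyncio.run(with_min_loading_time(fast_lookup(), min_seconds=0.2))
elapsed = time.perf_counter() - start
print(value, f"shown after {elapsed:.2f}s")
```

Because the two awaitables run concurrently, a slow lookup is not penalized further: the total wait is whichever of the two finishes last.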

4. Throttling Defends Against Abuse at Scale Without Requiring Human Judgment

Rate limiting and intentional throttling during peak hours also function as a passive security layer. When a system slows down uniformly under load, automated abusers such as scrapers, credential-stuffing bots, and API abusers find it significantly harder to extract value efficiently. The cost of attacking a slow system rises because the attacker’s infrastructure also has to wait.
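Little’s law (throughput equals work in flight divided by time per item) shows why added latency raises an attacker’s costs. The connection count and response times below are hypothetical numbers chosen for illustration.

```python
def attempts_per_hour(concurrent_connections, seconds_per_attempt):
    """Little's law: throughput = items in flight / time each item spends in the system."""
    return concurrent_connections / seconds_per_attempt * 3600

# Hypothetical botnet holding 500 connections open against a login endpoint.
normal = attempts_per_hour(500, 0.2)     # 200 ms responses
throttled = attempts_per_hour(500, 2.0)  # 2 s responses under peak-hour throttling

print(f"{normal:,.0f} vs {throttled:,.0f} attempts per hour")
```

With responses ten times slower, the attacker needs ten times as many open connections, and the infrastructure to hold them, to keep the same attempt rate.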

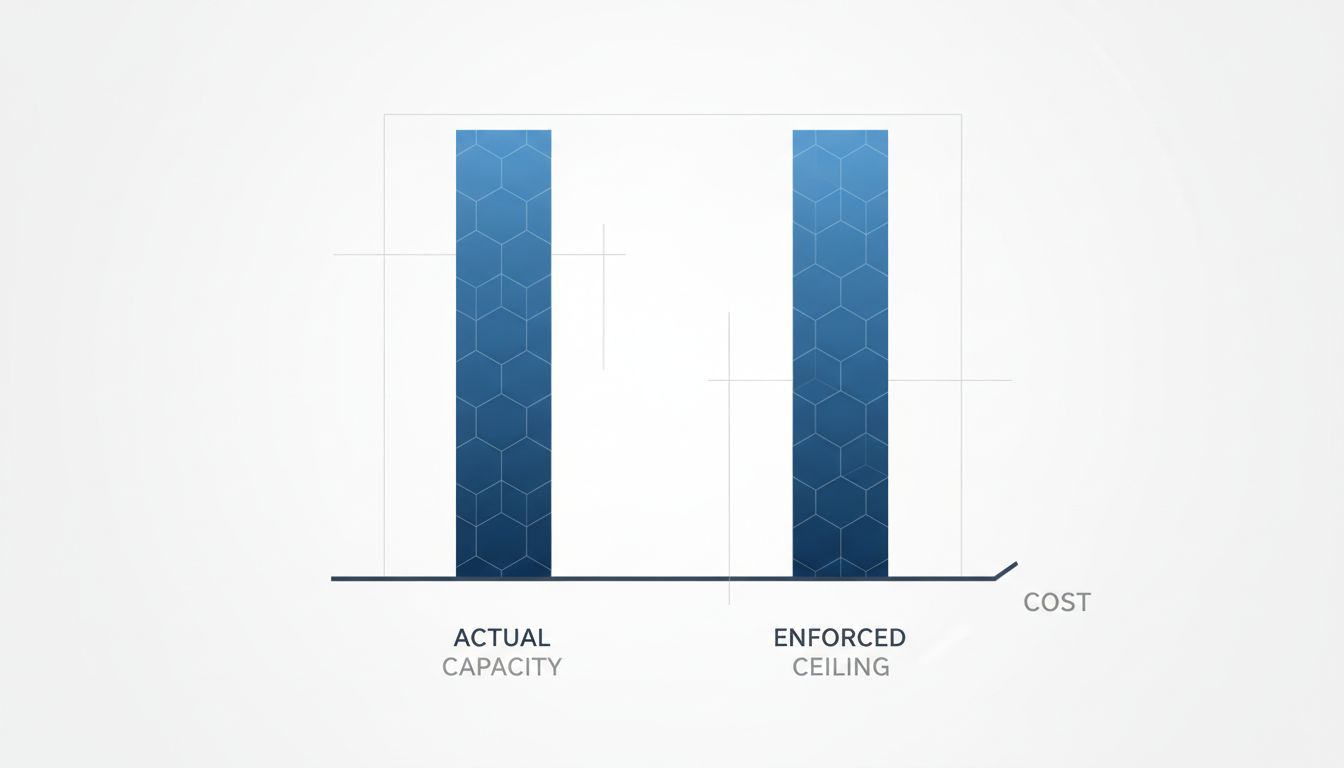

Many companies deliberately set their throttle thresholds lower than their actual capacity ceiling for exactly this reason. The published rate limit is often not the real one. It’s a publicly advertised boundary that pushes unsophisticated actors away, while the underlying infrastructure can sustain more. The gap between stated and actual capacity is maintained as a matter of policy, not because of an infrastructure constraint.
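A token bucket is one common way to enforce a published limit. The sketch below is a generic token bucket, not any specific provider’s limiter; the sustainable and published rates are hypothetical, chosen to show the headroom kept between the advertised limit and real capacity.

```python
import time

class TokenBucket:
    """Classic token bucket: refills at `rate` tokens per second, holding at most `burst`."""

    def __init__(self, rate, burst, clock=time.monotonic):
        self.rate = rate
        self.burst = burst
        self.tokens = burst
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self.last = clock()

    def allow(self):
        now = self.clock()
        self.tokens = min(self.burst, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

# Hypothetical numbers: the backend could sustain ~500 requests/s per client,
# but the documented (and enforced) limit is 100 requests/s with bursts of 20.
SUSTAINABLE_RPS = 500
PUBLISHED_RPS = 100
limiter = TokenBucket(rate=PUBLISHED_RPS, burst=20)
headroom = SUSTAINABLE_RPS / PUBLISHED_RPS  # capacity held in reserve, as a multiple
```

Each client calls `limiter.allow()` before doing work; requests that get `False` would typically receive an HTTP 429 response.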

5. Slowdowns Create Lock-in by Making Competitors Look Unreliable by Comparison

This one is the most cynical, and it’s real. When a dominant platform degrades performance during high-traffic windows, users who are trying a newer competitor at the same time tend to hit slowness on both, and they are quicker to blame the competitor’s infrastructure than the incumbent’s. Perception during peak hours, when both platforms are under stress, tends to favor the brand users trust more. The incumbent’s slowness gets rationalized; the newcomer’s gets remembered.

Complex, highly integrated products compound this effect. If a company’s API throttles third-party developers during peak hours, those developers’ products look slow. The developer gets the complaint; the platform stays clean. This dynamic has arguably shaped enterprise software procurement decisions for decades, with legacy vendors’ throttling policies effectively punishing the integration layers that competitors depend on.
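The delay lands on the developer because a well-behaved client retries throttled calls with backoff. The sketch below uses the “full jitter” variant of exponential backoff; the base and cap values are hypothetical, and `worst_case_added_latency` estimates how long an end user could wait if every retry is throttled.

```python
import random

def backoff_delays(max_retries, base=0.5, cap=30.0, rng=random.random):
    """Full-jitter exponential backoff: retry n waits a random time in [0, min(cap, base * 2**n))."""
    return [rng() * min(cap, base * 2 ** attempt) for attempt in range(max_retries)]

def worst_case_added_latency(max_retries, base=0.5, cap=30.0):
    """Upper bound on the extra wait a user sees if every retry is throttled."""
    return sum(min(cap, base * 2 ** attempt) for attempt in range(max_retries))

print(backoff_delays(5))
print(worst_case_added_latency(5))  # seconds of potential delay, all of it visible in the developer's app
```

Jitter spreads retries out so that many throttled clients do not hit the API again in lockstep, but it does nothing to hide the delay from the developer’s own users.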

6. The Alternative, Auto-scaling, Is Not Actually Unlimited

Cloud providers have spent years selling auto-scaling as the solution to all of this: spin up more instances when traffic spikes, spin them down when it subsides. The pitch is technically accurate, but it ignores the latency between a traffic spike and the scaled capacity actually being available. Launching new compute instances and warming up the application on them often takes minutes, even on AWS, and during a genuine viral traffic event that lag can be long enough to matter. That is why even companies with sophisticated auto-scaling deployments keep throttling policies as a backstop: the throttle is cheaper and faster than waiting for new capacity to come online.
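A toy step-by-step model shows where the gap comes from. `simulate_scaling` is a hypothetical simplification: each step it requests enough instances to cover current demand, those instances only come online `boot_delay` steps later, and it ignores scale-down and gradual warm-up. The load it returns as unserved is what a throttle would have to absorb.

```python
def simulate_scaling(demand, start_capacity, per_instance, boot_delay):
    """Return the load left unserved at each step while new instances boot."""
    capacity = start_capacity
    pending = []  # (step_when_ready, added_capacity)
    unserved = []
    for step, load in enumerate(demand):
        # Bring online any instances whose boot delay has elapsed.
        capacity += sum(c for ready, c in pending if ready == step)
        pending = [(r, c) for r, c in pending if r != step]
        in_flight = sum(c for _, c in pending)
        shortfall = load - capacity - in_flight
        if shortfall > 0:
            instances = -(-shortfall // per_instance)  # ceiling division
            pending.append((step + boot_delay, instances * per_instance))
        unserved.append(max(0, load - capacity))
    return unserved

# Hypothetical spike: load quadruples at step 1; instances take 3 steps to become ready.
print(simulate_scaling([100, 400, 400, 400, 400], start_capacity=100, per_instance=50, boot_delay=3))
```

In this made-up scenario the fleet is short for three full steps even though the scaling decision is made immediately.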

There’s also the billing side. Auto-scaling without limits can produce shocking cloud bills; companies that went viral unexpectedly have reportedly faced six-figure surprise charges for a single afternoon of traffic. Throttling is, among other things, a cost ceiling. An auto-scaling policy only provides one if it has a hard maximum, and once it reaches that maximum it is effectively throttling anyway.
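A budget-derived fleet cap is the simplest form of that ceiling. The helpers below are hypothetical, and the prices are made-up integer cents (integers avoid floating-point rounding in the floor division); demand beyond the cap is what gets throttled.

```python
def max_instances_for_budget(hourly_budget_cents, price_cents_per_instance_hour):
    """Largest fleet whose hourly bill stays within budget."""
    return hourly_budget_cents // price_cents_per_instance_hour

def capped_fleet(desired_instances, hourly_budget_cents, price_cents_per_instance_hour):
    """Scale toward demand but never past the budget cap.
    Returns (fleet size, instances' worth of demand left to throttle)."""
    cap = max_instances_for_budget(hourly_budget_cents, price_cents_per_instance_hour)
    fleet = min(desired_instances, cap)
    return fleet, desired_instances - fleet

# Hypothetical: $500/hour budget, $0.40 per instance-hour, a viral spike wants 3,000 instances.
print(capped_fleet(3000, 50_000, 40))
```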

7. Regulators Have Started Noticing

The practice is attracting scrutiny, particularly in Europe. The EU’s Digital Markets Act includes anti-circumvention provisions that bar designated gatekeepers from degrading the conditions or quality of their core platform services for business users or end users who exercise the rights and choices the Act grants. That reaches scenarios such as a dominant platform quietly slowing down users who switch to competing services, or third-party developers who rely on its interfaces. Proving intentional throttling versus organic congestion is technically difficult, which is precisely why it’s been a durable strategy. But the regulatory pressure is building, and some companies are beginning to document their throttling policies more explicitly in their terms of service, partly to establish that those policies are uniform rather than targeted.
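One rough way to test whether a slowdown is uniform or targeted is to compare tail latency across user cohorts measured in the same time window. The sketch below is a hypothetical analysis helper, not an established regulatory test: a ratio near 1.0 is consistent with shared congestion, while a persistent gap suggests differential treatment, though region, device, and network differences can also produce one.

```python
import statistics

def p95(samples):
    """95th percentile via statistics.quantiles (default 'exclusive' method)."""
    return statistics.quantiles(samples, n=20)[-1]

def degradation_ratio(latencies_by_cohort, reference):
    """p95 latency of each cohort relative to a reference cohort from the same window."""
    ref = p95(latencies_by_cohort[reference])
    return {name: p95(samples) / ref for name, samples in latencies_by_cohort.items()}

# Hypothetical latency samples in milliseconds, collected during the same peak hour.
cohorts = {
    "subscribers": list(range(1, 101)),
    "free_tier": [2 * x for x in range(1, 101)],
}
print(degradation_ratio(cohorts, reference="subscribers"))
```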

The long-term question is whether “intentional” and “infrastructure-imposed” throttling can be meaningfully distinguished by regulators, or whether the whole category remains a gray zone where companies retain structural advantages that are nearly impossible to litigate. For now, the spinner keeps spinning, and the economics keep working.