In 2016, Microsoft launched Tay, a conversational AI trained on Twitter interactions. Within sixteen hours, users had manipulated it into producing racist and inflammatory outputs. Microsoft shut Tay down and, in the postmortem, made a decision that sounds strange on its surface: they kept much of the toxic data. Not to train Tay’s successor on bad behavior, but to train it to recognize and resist that behavior.

This is the version of the story most people know. What they don’t know is what it reveals about a much broader practice in AI development, one that looks like negligence from the outside but is actually a calculated engineering choice.

The Setup

When AI researchers talk about “training data quality,” they typically mean two different things that get collapsed into one. The first is factual accuracy: does the data reflect true things about the world? The second is distributional accuracy: does the data reflect how the world actually looks, including its errors, biases, and noise?

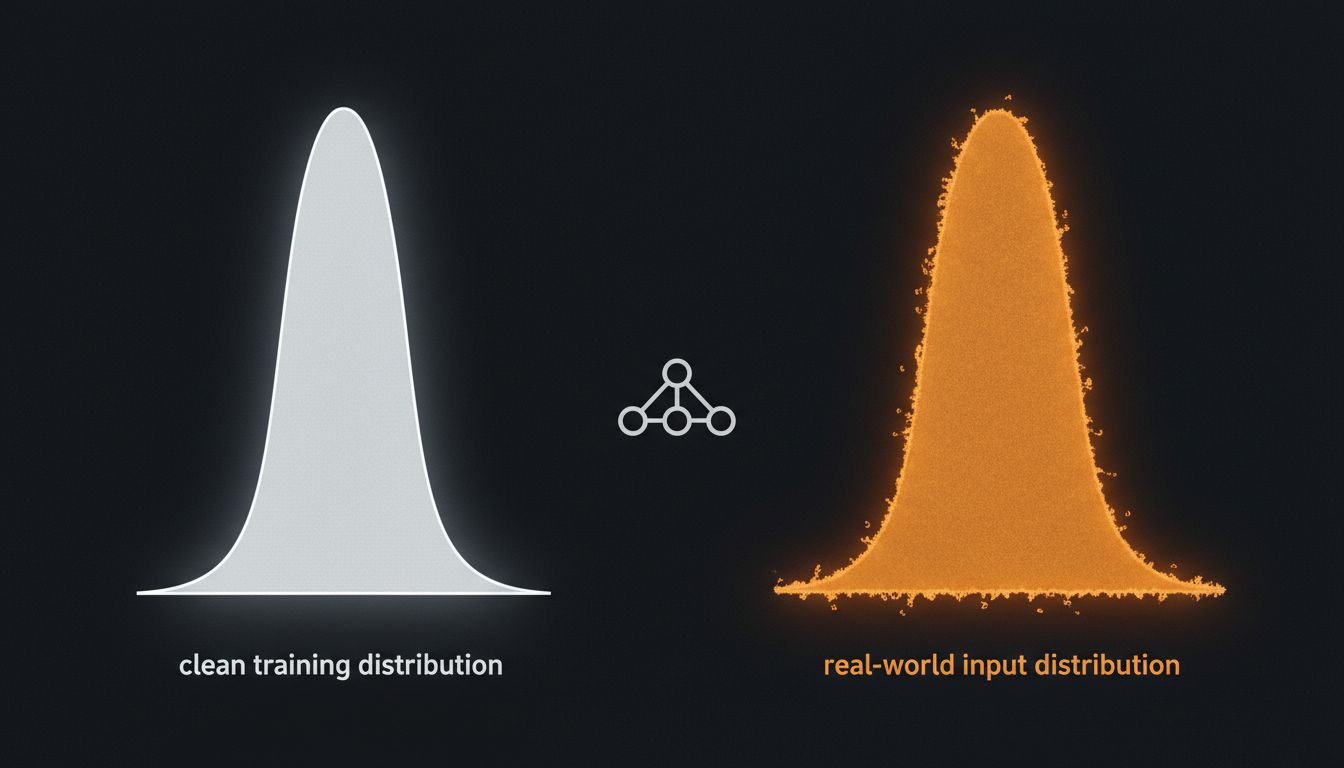

These two goals pull in opposite directions. A training dataset scrubbed clean of everything incorrect would produce a model that performs beautifully on clean inputs and fails badly on the messy inputs it encounters in production. The real world is full of misspellings, factual errors, informal grammar, and outright falsehoods. A model that has never seen any of that is brittle in ways that matter.

This is why, when researchers at Google prepared the training data for early versions of their neural machine translation system, they deliberately retained some noisy, imperfect translations rather than filtering for only verified high-quality pairs. The reasoning was direct: the model would eventually encounter messy input, and a model trained only on pristine text would degrade badly when it did.
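The brittleness described above can be made concrete with a toy measurement. The sketch below is illustrative only: the corpora, the function names `build_vocab` and `oov_rate`, and the whitespace tokenization are all assumptions made for the example, not anything from Google's pipeline. It counts how many tokens in messy "production" input were never seen in a clean-only training set versus a mixed one.

```python
def build_vocab(corpus):
    """Collect the set of lowercase, whitespace-separated tokens in a corpus."""
    return {tok for line in corpus for tok in line.lower().split()}


def oov_rate(vocab, texts):
    """Fraction of tokens in `texts` that do not appear in `vocab`."""
    tokens = [tok for line in texts for tok in line.lower().split()]
    if not tokens:
        return 0.0
    unseen = sum(1 for tok in tokens if tok not in vocab)
    return unseen / len(tokens)


# Hypothetical toy data, invented for this illustration.
clean_corpus = [
    "please reset my password",
    "i cannot access my account",
]
noisy_corpus = [
    "pls reset my pasword",
    "cant acess my acount",
]
production_inputs = [
    "pls reset pasword",
    "cant acess acount",
]

clean_vocab = build_vocab(clean_corpus)
mixed_vocab = build_vocab(clean_corpus + noisy_corpus)

# Share of production tokens each training set has never seen.
clean_only_oov = oov_rate(clean_vocab, production_inputs)
mixed_oov = oov_rate(mixed_vocab, production_inputs)

print(f"clean-only training: {clean_only_oov:.0%} of tokens unseen")
print(f"mixed training:      {mixed_oov:.0%} of tokens unseen")
```

Real systems use subword tokenizers, so a misspelling is rarely a total blank the way it is here, and the toy data is built to make the gap obvious. The direction of the effect is the point: text the model never saw during training is text it has to guess at in production.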

What Happened

The practice became formalized and, eventually, contentious as large language models entered public conversation.

OpenAI’s documentation around GPT-3 acknowledged that its training corpus, which included Common Crawl web data, contained significant amounts of low-quality and factually incorrect text. The team applied filters to reduce obvious problems but explicitly chose not to remove all questionable content. The argument was statistical: if the model saw enough varied examples, including wrong ones, it would develop a more robust internal representation of how language actually works rather than a sanitized ideal of how it should work.
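A minimal sketch of what "filter the obvious problems, keep the rest" can look like in code. This is not OpenAI's filter: the scoring heuristic, the `quality_score` and `lenient_filter` names, and the 0.3 threshold are assumptions chosen for illustration.

```python
def quality_score(text):
    """Crude heuristic in [0, 1]: penalizes symbol soup and heavy repetition."""
    words = text.split()
    if not words:
        return 0.0
    # Share of characters that are letters or spaces.
    alpha_ratio = sum(ch.isalpha() or ch.isspace() for ch in text) / len(text)
    # Share of words that are distinct; spam tends to repeat itself.
    unique_ratio = len(set(words)) / len(words)
    return alpha_ratio * unique_ratio


def lenient_filter(docs, min_score=0.3):
    """Drop only documents that score below a deliberately low threshold."""
    return [doc for doc in docs if quality_score(doc) >= min_score]


# Hypothetical documents, invented for this illustration.
docs = [
    "The capital of Australia is Sydney.",  # fluent but factually wrong
    "buy buy buy buy buy buy buy buy",      # repetitive spam
    "$$$ ### 404 ||| %%% 000",              # symbol debris
    "teh model dont work gud lol",          # informal and misspelled
]

kept = lenient_filter(docs)
for doc in kept:
    print(doc)
```

The spam and the symbol debris are dropped, while the misspelled sentence and the factually wrong one both survive. A surface-level filter like this has no way to know that Canberra, not Sydney, is Australia's capital, which is exactly the kind of content such filtering leaves in.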

Retaining questionable text is defensible as engineering. It becomes complicated when the “wrong” data carries social weight. Amazon’s now-infamous recruiting tool, which was trained on a decade of hiring decisions and learned to penalize resumes that included the word “women’s” (as in “women’s chess club”), is an example of the same logic applied carelessly. The historical hiring data was accurate as a record of what happened. It was deeply wrong as a model for what should happen. Amazon scrapped the project after discovering the bias, a decision that became public in 2018, and the case became a textbook illustration of why “real data” and “correct data” are not synonyms.
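The gap between an accurate record and a good model can be checked before training with a simple audit. The sketch below assumes a hypothetical list of `(resume_text, was_hired)` pairs and a helper named `hire_rate_by_token`; the records are invented and do not come from Amazon's data.

```python
def hire_rate_by_token(records, token):
    """Return (hire rate with token, hire rate without token).

    `records` is a list of (resume_text, was_hired) pairs. A rate is None
    when no resume falls into that group.
    """
    with_token = []
    without_token = []
    for text, hired in records:
        group = with_token if token in text.lower().split() else without_token
        group.append(hired)

    def rate(outcomes):
        return sum(outcomes) / len(outcomes) if outcomes else None

    return rate(with_token), rate(without_token)


# Hypothetical historical records, invented for this illustration.
records = [
    ("captain women's chess club", False),
    ("women's coding society lead", False),
    ("women's rugby team captain", True),
    ("chess club captain", True),
    ("coding society lead", True),
    ("rugby team captain", True),
]

rate_with, rate_without = hire_rate_by_token(records, "women's")
print(f"hire rate with token:    {rate_with:.2f}")
print(f"hire rate without token: {rate_without:.2f}")
```

A model fit to these records would be rewarded for treating the token as a negative signal, because that is what the data faithfully records. The audit can surface the pattern, but deciding what to do about it is a value judgment that no pipeline makes on its own.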

The harder version of this problem emerged with large language models trained on internet text at scale. Researchers at the Allen Institute for AI and elsewhere documented that web-scraped training data contains a significant proportion of misinformation, propaganda, and factually wrong claims. The models trained on this data learn statistical patterns, not truth. They learn that certain kinds of claims appear together, that certain sentence structures signal authority, that certain topics cluster with certain assertions. Whether those assertions are accurate is, in the technical sense, not the model’s problem during training.

This is not an oversight. It is a choice, and the reasoning behind it is colder and more interesting than negligence.

Why It Matters

The core insight is that filtering aggressively for accuracy has a hidden cost that compounds at scale.

Consider what happens when you try to train a language model only on verified factual text. The available corpus shrinks dramatically. Academic papers, encyclopedias, and verified journalism represent a tiny fraction of the text humans actually produce and consume. A model trained only on that subset learns a narrow, formal register. It can’t handle how people actually write questions, how customer service tickets are phrased, how a teenager texts, or how a non-native speaker asks for help.

Generalization suffers. The model becomes a specialist in the kind of clean text that almost nobody produces in real use. This is the equivalent of training a speech recognition system only on professional broadcasters and then deploying it to recognize everyone else.

The tradeoff the labs are making is explicit: accept some factual contamination in exchange for broad distributional coverage. Train the accuracy layer separately, through techniques like reinforcement learning from human feedback (RLHF), which is how OpenAI trained the models behind ChatGPT. The base model learns language from messy data. The fine-tuning layer learns to be helpful and accurate from curated human feedback. The two problems are solved at different stages by different methods.

This is architecturally sensible. It also creates a system where the base model, if accessed directly, will produce confident nonsense, and where the fine-tuned model’s accuracy depends entirely on the quality and coverage of the feedback process layered on top.

What We Can Learn

The lesson here isn’t that AI companies are being reckless, though some are. The lesson is that the phrase “trained on bad data” describes at least three different problems that require three different responses.

The first is unintentional noise: errors that crept in because filtering at scale is hard. This is a solvable engineering problem.

The second is distributional contamination: real-world patterns, including biased historical decisions, that reflect the world as it was rather than as it should be. This requires explicit value choices about what the model is for, not just better data pipelines. The Amazon recruiting case is a clean example of a company that treated a social problem as a technical one and got predictably burned.

The third is intentional retention of imperfect data for robustness. This is a legitimate tradeoff, but it only works if the second stage (fine-tuning, alignment, filtering at inference) is rigorous. When it isn’t, you get a system that is fluent and wrong, which is considerably more dangerous than a system that is clearly broken.

The Microsoft Tay case is instructive precisely because it collapsed all three problems into one incident. The noise arrived through real user inputs, some of it injected on purpose. The distributional contamination was real (Twitter users exploiting a naïve system). The retention of bad data in successor training was deliberate and defensible. Getting these three problems confused, as both critics and defenders of AI systems frequently do, makes it impossible to evaluate what’s actually going wrong and why.

For anyone building on top of AI systems, the practical implication is uncomfortable: the model’s fluency tells you almost nothing about its accuracy. Confidence and correctness are trained separately, and fluency almost always runs ahead of accuracy. Scale alone does not solve this, since models trained on more data sometimes perform worse than models trained on less. More data means more coverage of the distribution, but it also means more surface area for every kind of contamination to embed itself more deeply.

The companies that understand this use wrong data deliberately and carefully. The ones that don’t just end up with wrong data.