The story of a webpage loading is, on its surface, the most ordinary thing a computer does. But it is also one of the most architecturally ambitious feats in modern engineering, compressed into an interval short enough that users complain when it takes two seconds.

To understand why that matters, it helps to follow one specific request from the moment your finger leaves the Enter key.

The Setup

In July 2020, Cloudflare published a detailed post-mortem on an outage that took down a significant portion of its network for roughly 27 minutes. The cause was a BGP routing configuration change, the kind of quiet infrastructure edit that happens thousands of times a day across the internet. What made it interesting was how far the failure propagated, and how many layers of apparently robust systems failed in sequence because of it. Users who typed a URL during that window didn’t see an error message. They saw nothing. The browser just waited.

To understand why a BGP change could do that, you have to understand what actually happens when you type a URL.

What Happens

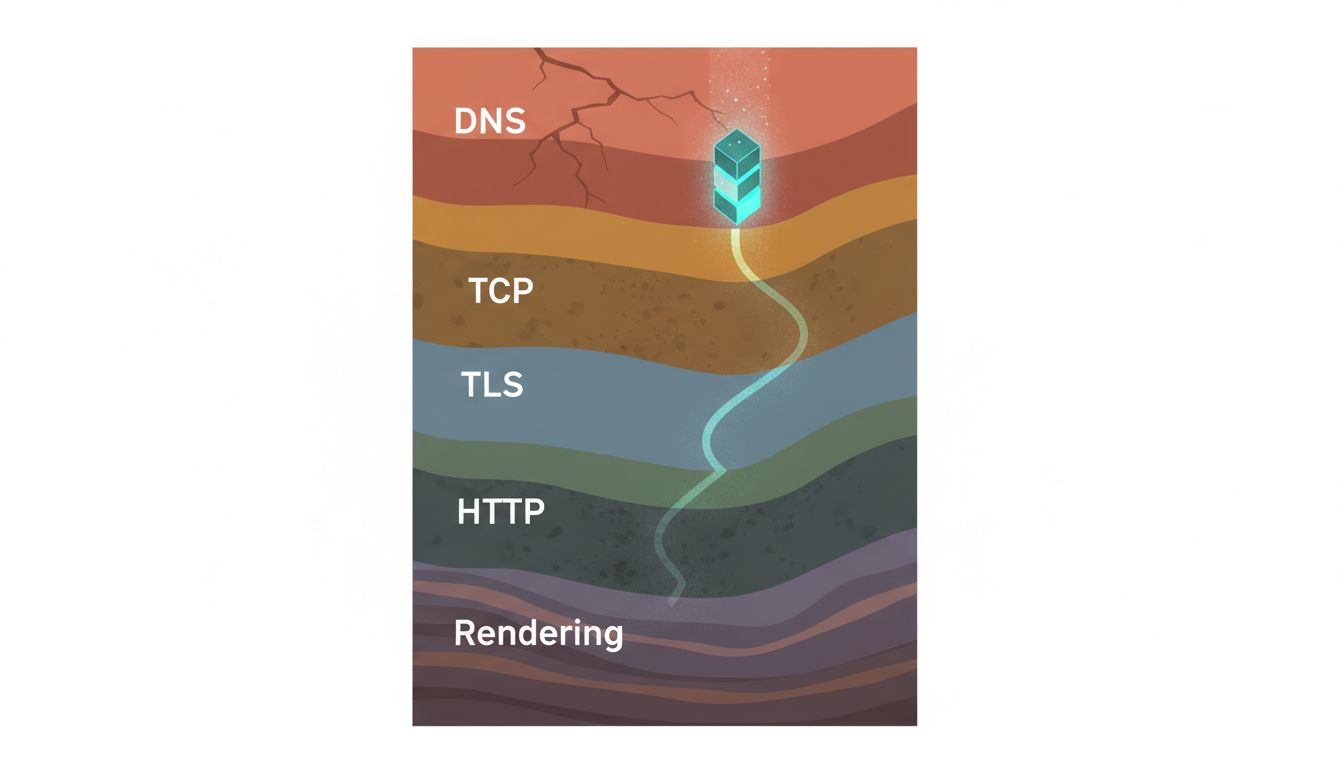

Your browser’s first job isn’t to fetch a page. It’s to figure out where to send the request. The URL you typed contains a domain name, and domain names are not addresses. They’re aliases. Your machine needs to convert www.example.com into an IP address it can actually route to, which means querying the Domain Name System.

This is where most people’s mental model of the process goes wrong. DNS is not a single server. It’s a hierarchy. Your machine checks its local cache first. If the record isn’t there, it asks a recursive resolver, usually operated by your ISP or a third-party provider like Google (8.8.8.8) or Cloudflare (1.1.1.1). If that resolver doesn’t have the answer cached, it walks the hierarchy one level at a time. A root server refers it to the servers for the top-level domain (.com), and those refer it to the domain’s own authoritative nameservers, which hold the actual record. The resolver hands the answer back to your machine, and both cache it for a time-to-live (TTL) set by whoever controls the domain.
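To make the hierarchy concrete, here is a minimal Python sketch of iterative resolution with a TTL cache. It does no networking. The server names (`root`, `tld-com`, `ns-example`), the `Referral`/`Answer` types, and the address 192.0.2.10 (from a documentation-only range) are hypothetical stand-ins. A real resolver would also cache the referrals themselves and retry across several servers at each level.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, Tuple, Union

@dataclass
class Referral:
    next_server: str   # "I don't know, ask this server instead"

@dataclass
class Answer:
    ip: str
    ttl: int           # seconds, chosen by whoever controls the zone

# Hypothetical in-memory hierarchy: each server knows only its own slice.
SERVERS: Dict[str, Dict[str, Union[Referral, Answer]]] = {
    "root": {"com.": Referral("tld-com")},
    "tld-com": {"example.com.": Referral("ns-example")},
    "ns-example": {"www.example.com.": Answer("192.0.2.10", ttl=3600)},
}

def ask(server: str, name: str) -> Union[Referral, Answer]:
    """Return the answer or referral that `server` holds for `name`."""
    for zone, record in SERVERS[server].items():
        if name == zone or name.endswith("." + zone):
            return record
    raise LookupError(f"{server} knows nothing about {name}")

class RecursiveResolver:
    def __init__(self, clock: Callable[[], float] = time.monotonic):
        self.clock = clock
        self.cache: Dict[str, Tuple[str, float]] = {}  # name -> (ip, expiry)
        self.upstream_queries = 0

    def resolve(self, name: str) -> str:
        cached = self.cache.get(name)
        if cached is not None and cached[1] > self.clock():
            return cached[0]                  # cache hit: no upstream traffic
        server = "root"                       # cache miss: start at the top
        while True:
            self.upstream_queries += 1
            record = ask(server, name)
            if isinstance(record, Answer):
                self.cache[name] = (record.ip, self.clock() + record.ttl)
                return record.ip
            server = record.next_server       # follow the referral down a level

resolver = RecursiveResolver()
print(resolver.resolve("www.example.com."), resolver.upstream_queries)
print(resolver.resolve("www.example.com."), resolver.upstream_queries)
```

The first lookup costs three upstream queries (root, .com, then the domain). The second is served from cache and costs none until the TTL expires.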

An uncached lookup typically completes in under 100 milliseconds. An answer already sitting in your machine’s local cache comes back in under a millisecond. The machinery is invisible, which is exactly why it surfaces so dramatically when it breaks.

With an IP address in hand, your browser opens a TCP connection. This is the three-way handshake: your machine sends a SYN packet, the server responds with SYN-ACK, and your machine acknowledges with ACK. The browser cannot send its request until the SYN-ACK comes back, so the handshake costs one full round trip. That round-trip time is determined largely by physical distance and network conditions. Frankfurt and Singapore are roughly 10,000 kilometers apart, and light in fiber covers about 200 kilometers per millisecond. Even a perfectly straight cable between them therefore imposes a floor of about 50 milliseconds each way, or 100 milliseconds per round trip, and real cable routes are longer than that. No software optimization removes that floor.
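The floor is simple arithmetic. This sketch assumes a signal speed of about 200 km per millisecond in fiber and a rounded 10,000 km straight-line distance from Frankfurt to Singapore. Real paths are longer, so treat the results as lower bounds, not predictions.

```python
# Assumption: light in optical fiber covers roughly 200 km per millisecond
# (about two-thirds of its speed in a vacuum).
FIBER_KM_PER_MS = 200.0

def min_one_way_ms(distance_km: float) -> float:
    """Lower bound on one-way propagation delay over a straight fiber run."""
    return distance_km / FIBER_KM_PER_MS

def min_handshake_ms(distance_km: float) -> float:
    """The client can't send its request until SYN-ACK returns: one round trip."""
    return 2 * min_one_way_ms(distance_km)

# Frankfurt to Singapore, rounded to an assumed 10,000 km great-circle distance.
print(min_one_way_ms(10_000))    # 50.0
print(min_handshake_ms(10_000))  # 100.0
```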

If the site uses HTTPS (and by 2024 the vast majority of web traffic does), your browser then negotiates a TLS handshake on top of the TCP connection. TLS 1.3, which most modern servers support, cut the full handshake from the two round trips TLS 1.2 requires to one. Saving that round trip was worth fighting for. It is paid on every new connection, and on a long path like Frankfurt to Singapore a single round trip costs on the order of 100 milliseconds or more.
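Counting round trips shows why one fewer matters. The following is a simplified model, not a measurement. It assumes a fresh connection and full handshakes (no session resumption or TLS 1.3 early data), and it ignores DNS and server processing time.

```python
from typing import Optional

# Round trips the TLS handshake needs on a fresh connection (full handshakes only).
TLS_ROUND_TRIPS = {None: 0, "1.2": 2, "1.3": 1}

def round_trips_to_first_byte(tls_version: Optional[str]) -> int:
    tcp = 1                               # SYN -> SYN-ACK; the ACK rides with the next message
    tls = TLS_ROUND_TRIPS[tls_version]    # None means plain HTTP, no TLS at all
    http = 1                              # request out, first response byte back
    return tcp + tls + http

def time_to_first_byte_ms(rtt_ms: float, tls_version: Optional[str]) -> float:
    return round_trips_to_first_byte(tls_version) * rtt_ms

for version in (None, "1.2", "1.3"):
    print(version, time_to_first_byte_ms(100, version))
```

At a 100 ms round trip, the model gives 400 ms before the first response byte over TLS 1.2 and 300 ms over TLS 1.3.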

Now, finally, your browser sends an HTTP request. The server receives it, processes it, and begins sending a response. For a modern web application, “processing” means the request is likely hitting a load balancer before it touches any application code, then routing to one of many possible backend servers, which may query a database or cache, assemble HTML (or serve a pre-rendered static file), and return it.
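Load balancers use several policies to pick a backend, and round robin is one of the simplest. This toy sketch assumes that policy and uses hypothetical backend names (`app-1` and so on). Production balancers also weigh current load, run active health checks, and may pin a user’s session to one backend.

```python
from typing import Iterable, List

class RoundRobinBalancer:
    """Hands each request to the next healthy backend in a fixed rotation."""

    def __init__(self, backends: Iterable[str]):
        self.backends: List[str] = list(backends)
        if not self.backends:
            raise ValueError("need at least one backend")
        self.healthy = set(self.backends)
        self._next = 0

    def mark_down(self, backend: str) -> None:
        self.healthy.discard(backend)

    def route(self) -> str:
        # Try each backend at most once per request, skipping unhealthy ones.
        for _ in range(len(self.backends)):
            backend = self.backends[self._next]
            self._next = (self._next + 1) % len(self.backends)
            if backend in self.healthy:
                return backend
        raise RuntimeError("no healthy backends")

lb = RoundRobinBalancer(["app-1", "app-2", "app-3"])
print([lb.route() for _ in range(4)])
lb.mark_down("app-2")
print([lb.route() for _ in range(3)])
```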

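The browser doesn’t wait for the entire response before it starts working. It parses HTML as it arrives, discovers references to CSS files, JavaScript files, images, and fonts, and begins fetching those in parallel. When a resource lives on an origin the browser hasn’t connected to yet, fetching it can mean a new DNS lookup (often answered from cache) and a new connection with its own handshakes. Requests to an origin with an open connection can reuse it. HTTP/1.1 keep-alive reuses a connection one request at a time, while HTTP/2 and HTTP/3 multiplex many requests over a single connection (for HTTP/3, a QUIC connection rather than a TCP one).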
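Whether a fetch pays for a new connection depends on its origin (scheme, host, and port). This sketch counts the connections a page would open if it requested every asset at once, under two simplifying assumptions. First, HTTP/2 and HTTP/3 use exactly one connection per origin, ignoring connection coalescing. Second, HTTP/1.1 carries one request at a time per connection, and browsers commonly cap parallel connections at six per host. The asset URLs are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple
from urllib.parse import urlsplit

Origin = Tuple[str, str, int]

def origin_of(url: str) -> Origin:
    """An origin is scheme + host + port; default ports are filled in."""
    parts = urlsplit(url)
    port = parts.port or {"http": 80, "https": 443}[parts.scheme]
    return (parts.scheme, parts.hostname, port)

def connection_plan(urls: Iterable[str], multiplexed: bool,
                    max_per_origin: int = 6) -> Dict[Origin, int]:
    """Connections opened per origin if every URL is requested at once.

    multiplexed=True models HTTP/2 or HTTP/3: one connection per origin.
    multiplexed=False models HTTP/1.1: one request in flight per connection,
    up to max_per_origin parallel connections."""
    requests_per_origin = Counter(origin_of(u) for u in urls)
    if multiplexed:
        return {o: 1 for o in requests_per_origin}
    return {o: min(n, max_per_origin) for o, n in requests_per_origin.items()}

assets = [
    "https://www.example.com/app.css",
    "https://www.example.com/app.js",
    "https://www.example.com/logo.png",
    "https://cdn.example.net/font.woff2",
]
print(sum(connection_plan(assets, multiplexed=False).values()))  # 4
print(sum(connection_plan(assets, multiplexed=True).values()))   # 2
```

Either way, the two hostnames mean two DNS lookups whenever neither name is already cached.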

The page isn’t “loaded” when the HTML arrives. It’s loaded when the browser has assembled the DOM, applied styles, executed render-blocking scripts, and painted pixels to your screen. A metric that tracks what users actually perceive is First Contentful Paint (FCP), the moment the first text or image appears. Everything before that point is infrastructure.

Why It Matters

The Cloudflare outage is instructive because it shows how a failure at the routing layer (BGP, which governs how networks announce reachable IP prefixes to each other) can make entire swaths of the internet unreachable before any application-layer redundancy has a chance to help. If the IP address can’t be reached, the TCP handshake never starts. If the TCP handshake never starts, TLS never negotiates. If TLS never negotiates, no HTTP request is ever sent. The failure silently consumes every layer above it.

This is the fundamental architecture of the web’s fragility: the stack is deeply sequential in its dependencies, even when each layer has redundancy built in. DNS has redundancy. BGP has redundancy. TCP detects and retransmits lost packets. TLS has certificate validation fallbacks. And yet an outage at the routing layer can make all of it irrelevant simultaneously.
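A back-of-the-envelope model shows why redundancy inside each layer doesn’t remove the sequential dependency. The sketch assumes independent failures and uses made-up availability figures purely for illustration. Redundancy raises a single layer’s availability, but a serial chain is never more available than its weakest layer. A routing failure that takes out every replica at once also behaves like a single copy.

```python
from math import prod

def redundant(availability: float, replicas: int) -> float:
    """A layer that works if any one of its independent replicas works."""
    return 1 - (1 - availability) ** replicas

def serial(*layers: float) -> float:
    """A chain where every layer must work, like DNS -> routing -> TCP -> TLS -> HTTP."""
    return prod(layers)

# Illustrative numbers only: every component is up 99.9% of the time.
component = 0.999

# DNS, TCP, TLS and HTTP each get two independent replicas...
replicated = redundant(component, 2)

# ...but suppose the routing layer's "redundant" paths share one failure mode,
# so they fail together and behave like a single copy.
routing = component

chain = serial(replicated, routing, replicated, replicated, replicated)
print(f"replicated layer: {replicated:.6f}")
print(f"whole chain:      {chain:.6f}")
```

However many replicas the other layers get, the whole chain stays just below the routing layer’s 0.999.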

Performance works the same way. Adding server-side caching helps. Using a CDN to co-locate content near users helps. Switching to HTTP/3’s QUIC transport (which eliminates TCP’s head-of-line blocking across multiplexed streams) helps. But if an uncached DNS resolution takes 300 milliseconds because the authoritative nameserver is slow, all of those downstream optimizations start from a 300-millisecond floor they can’t push below.

This is why companies like Fastly and Cloudflare have built businesses around DNS infrastructure, not just CDN delivery. The performance leverage at the DNS layer is disproportionate. Shaving 200 milliseconds off DNS resolution moves every subsequent step 200 milliseconds earlier, because nothing downstream can begin until that step completes.
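Because the stages run strictly in order, a delay in the first one shifts everything after it. This sketch makes that explicit. The 300 ms DNS figure echoes the slow-nameserver example above, while the other stage durations are hypothetical.

```python
from typing import Dict, List, Tuple

def timeline(stages: List[Tuple[str, float]]) -> Dict[str, Tuple[float, float]]:
    """Given (name, duration_ms) pairs that must run strictly one after another,
    return {name: (start_ms, end_ms)}."""
    clock = 0.0
    result = {}
    for name, duration in stages:
        result[name] = (clock, clock + duration)
        clock += duration
    return result

slow_dns = timeline([("dns", 300), ("tcp", 100), ("tls", 100), ("http", 150)])
fast_dns = timeline([("dns", 100), ("tcp", 100), ("tls", 100), ("http", 150)])

for name in slow_dns:
    shift = slow_dns[name][1] - fast_dns[name][1]
    print(f"{name}: finishes {shift:.0f} ms earlier with faster DNS")
```

Every stage, including the final HTTP response, finishes 200 ms earlier, even though only the DNS step changed.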

What We Can Learn

The practical lesson from tracing a URL request end to end is that performance and reliability are not only application problems. Often they are infrastructure problems that get misdiagnosed as application problems, because the application is where engineers spend most of their time.

A team that has spent months optimizing database queries and compressing assets may be mystified when their page load times don’t improve. The bottleneck may be 8,000 miles of fiber plus a DNS configuration last touched by an engineer who hasn’t logged into the account in years, such as a record still pointing at a distant origin or a TTL so short that resolvers rarely have the answer cached.

The second lesson is about failure modes. The internet’s layered architecture is its greatest strength and the source of its most confusing failures. When something breaks, the symptom appears at the top of the stack (the user sees nothing) while the cause is buried at the bottom (a routing table update, a TLS certificate expiry, a DNS misconfiguration). Debugging requires working backward through layers that most application developers have never had to think about. Your load balancer is not the only component deciding whether a user has a bad day. Every other component in this chain is deciding too, most of the time invisibly.
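One pragmatic way to work through the layers is to probe them in dependency order and stop at the first failure, since everything above it will fail too. This sketch uses only Python’s standard library (`socket`, `ssl`, `http.client`). The function names, the 5-second timeout, and the assumption that the site serves HTTPS on port 443 are illustrative choices. A probe from a single vantage point also can’t see routing problems that affect only other networks.

```python
import http.client
import socket
import ssl
from typing import Callable, List, Optional, Tuple

TIMEOUT_S = 5.0  # illustrative; tune for your environment

def check_dns(host: str) -> None:
    socket.getaddrinfo(host, 443, type=socket.SOCK_STREAM)

def check_tcp(host: str) -> None:
    socket.create_connection((host, 443), timeout=TIMEOUT_S).close()

def check_tls(host: str) -> None:
    ctx = ssl.create_default_context()  # verifies the certificate chain and hostname
    with socket.create_connection((host, 443), timeout=TIMEOUT_S) as sock:
        with ctx.wrap_socket(sock, server_hostname=host):
            pass

def check_http(host: str) -> None:
    conn = http.client.HTTPSConnection(host, timeout=TIMEOUT_S)
    try:
        conn.request("HEAD", "/")
        conn.getresponse()
    finally:
        conn.close()

Check = Callable[[str], None]
LAYERS: List[Tuple[str, Check]] = [
    ("dns", check_dns), ("tcp", check_tcp), ("tls", check_tls), ("http", check_http),
]

def first_failing_layer(host: str, layers: List[Tuple[str, Check]] = LAYERS
                        ) -> Optional[Tuple[str, Exception]]:
    """Probe each layer bottom-up; return (layer, error) for the first failure,
    or None if every layer succeeded."""
    for name, check in layers:
        try:
            check(host)
        except (OSError, http.client.HTTPException) as exc:
            # socket.gaierror, timeouts and ssl.SSLError are all OSError subclasses.
            return name, exc
    return None
```

Calling `first_failing_layer("www.example.com")` makes real network requests. Passing your own `layers` list lets you add probes or swap in fakes for testing.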

The third lesson is one of genuine appreciation. This entire sequence (DNS resolution, TCP handshake, TLS negotiation, HTTP request, server processing, asset fetching, rendering) often completes in under a second, and that is not an accident. It is the product of decades of engineering decisions, protocol refinements, and infrastructure investment by thousands of organizations that mostly don’t know each other. The ordinary magic of a webpage loading is, in that light, extraordinary. It’s just been abstracted into invisibility.