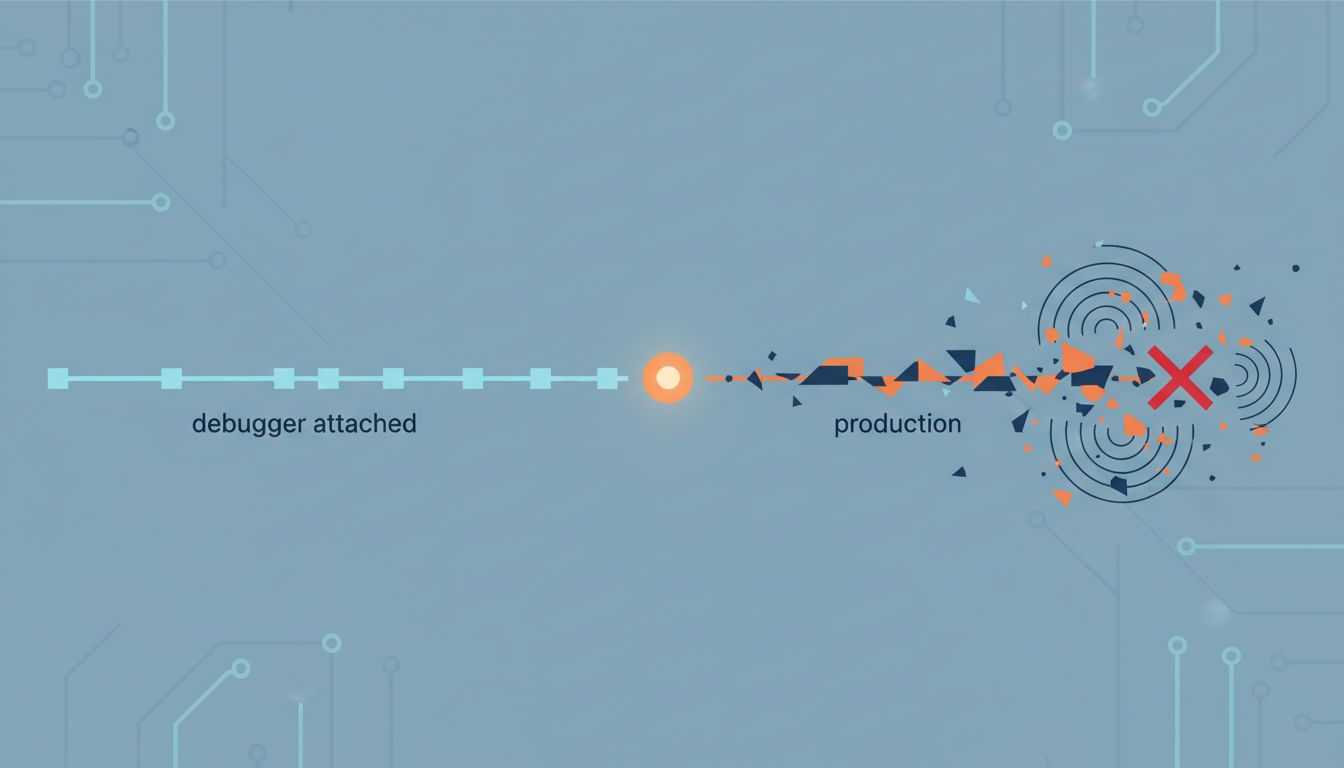

The Bug That Only Appears When No One Is Looking

The term “Heisenbug” dates to the early 1980s and was popularized by Jim Gray, then at Tandem Computers, in a 1985 paper on why computers fail. It is a nod to Werner Heisenberg, whose uncertainty principle is popularly (if loosely) linked to the observer effect: the act of observing a quantum system changes its state. The computing version works the same way. Attach a debugger to a misbehaving program, and the bug vanishes. Remove the debugger, and the crash returns. This is not magic. It is physics, timing, and the uncomfortable reality that computers are not the deterministic machines most people assume.

Heisenbugs are genuinely useful as a teaching device, because each one reveals a specific place where the abstraction we call “a running program” breaks down against the machinery underneath it.

1. Observation Changes the Timing, and Timing Is Everything

The most common class of Heisenbug lives in the gap between two threads of execution. Thread A reads a value, thread B writes a new one, and thread A reads again expecting the old value. This is a race condition, and it is extraordinarily sensitive to timing. When you add a debugger, the program slows down. Breakpoints, memory inspection, and single-stepping all insert delays that change the exact sequence in which threads interleave. The race condition that crashed your server at 3 AM disappears entirely under examination because the critical timing window has been stretched out of existence.
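The simplest way to see a race of this kind is a lost update on a shared counter. The sketch below is illustrative only: the function names `count_racy` and `count_atomic` are hypothetical, and the racy version is shown for contrast rather than as something to run in earnest.

```cpp
#include <atomic>
#include <thread>
#include <vector>

// Racy version, for contrast only. `++counter` on a plain long is a separate
// load, add, and store, so two threads can load the same value and one
// increment is lost. This is a data race (undefined behavior in C++): the
// result can differ between runs and may look correct under a debugger.
long count_racy(int threads, int iterations) {
    long counter = 0;
    std::vector<std::thread> workers;
    for (int t = 0; t < threads; ++t) {
        workers.emplace_back([&counter, iterations] {
            for (int i = 0; i < iterations; ++i) ++counter;
        });
    }
    for (auto& w : workers) w.join();
    return counter;
}

// Fixed version. fetch_add is a single atomic read-modify-write, so no
// increment can be lost however the threads interleave.
long count_atomic(int threads, int iterations) {
    std::atomic<long> counter{0};
    std::vector<std::thread> workers;
    for (int t = 0; t < threads; ++t) {
        workers.emplace_back([&counter, iterations] {
            for (int i = 0; i < iterations; ++i) {
                counter.fetch_add(1, std::memory_order_relaxed);
            }
        });
    }
    for (auto& w : workers) w.join();  // join makes all increments visible
    return counter.load();
}
```

Called from `main`, `count_atomic(4, 100000)` returns 400000 every time. `count_racy` with the same arguments will often return less, and how much less tends to change with machine load, optimization level, and whether a debugger is attached.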

This is not just an academic problem. The Therac-25 radiation therapy machine, which killed several patients in the mid-1980s, contained a race condition that manifested only when an operator typed fast enough to finish editing the treatment settings before the machine had completed setting up for the previous entry. Speed of human input was the trigger. No slow-motion test could reproduce it.

2. Memory Can Be in Multiple Places at Once

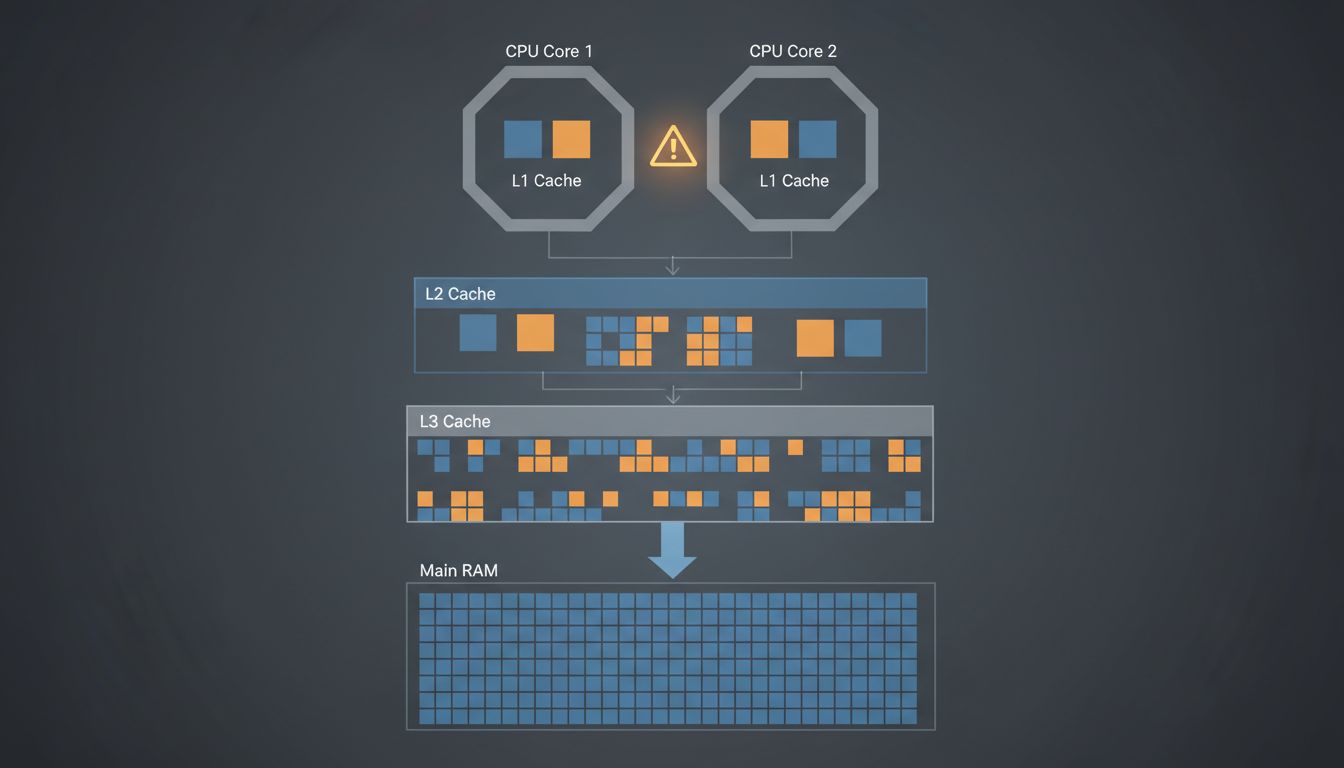

Modern CPUs do not read and write directly to RAM. Between the processor and memory sits a hierarchy of caches, typically three levels, each larger and slower than the one before it. When one CPU core writes a value, the write may sit in that core’s store buffer or private cache for a while before other cores can observe it, and separate writes can become visible in a different order than the program issued them. The rules for what other cores are allowed to see, and when, are defined by the memory model.

A Heisenbug rooted here is particularly nasty. Adding print statements to debug the problem tends to impose ordering as a side effect: the compiler cannot freely reorder memory accesses across a call it cannot see into, and output functions usually take an internal lock and make a system call, both of which act as memory barriers. The synchronization you added for debugging purposes accidentally fixes the underlying unsynchronized access. Remove the print statements and the bug returns. This is why “printf debugging” sometimes works and sometimes makes things worse: you are accidentally editing the memory ordering of your program while trying to observe it.
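The ordering that a print statement supplies by accident can be requested on purpose. The following is a minimal sketch, assuming C++11 atomics; `publish_and_consume` and the value 42 are made up for illustration. One thread writes ordinary data and then publishes a flag with release semantics, and the other waits on the flag with acquire semantics before reading the data.

```cpp
#include <atomic>
#include <thread>

// Hypothetical example: a producer publishes a payload and a consumer reads
// it. The release store and the acquire load form a pair: everything the
// producer wrote before the store is visible to the consumer after its load
// returns true.
int publish_and_consume() {
    int payload = 0;                 // plain, non-atomic data
    std::atomic<bool> ready{false};  // flag that guards the payload
    int seen = -1;

    std::thread producer([&] {
        payload = 42;                                  // 1. write the data
        ready.store(true, std::memory_order_release);  // 2. publish it
    });

    std::thread consumer([&] {
        while (!ready.load(std::memory_order_acquire)) {
            std::this_thread::yield();  // spin until the flag is published
        }
        seen = payload;  // safe: ordered after the producer's write
    });

    producer.join();
    consumer.join();
    return seen;
}
```

If `ready` were a plain `bool`, or if both operations used `std::memory_order_relaxed`, nothing would order the payload write against the flag, and the consumer could legally observe the flag as set while still reading a stale payload.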

3. The Compiler Is Not Your Transcriptionist

When you write code, you are not writing instructions for the CPU. You are writing instructions for the compiler, which then decides what instructions to actually issue. Modern compilers reorder operations, eliminate variables they consider redundant, and make assumptions about what your program can and cannot do. One common assumption: that unless a variable is marked volatile or atomic, no external agent will change it unexpectedly.

This creates a class of Heisenbug where the bug exists in the optimized binary but not in the debug build. Debug builds typically disable optimizations so that the compiled output maps cleanly to the source code, making it easier to step through. Enable optimizations, and the compiler rewrites your program in ways that break your informal threading assumptions. The code you read and the code that runs are different documents. This is not a flaw in compilers; it is an intended feature that programmers routinely underestimate.
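A classic instance is a stop flag polled in a loop. The sketch below is an assumption-laden illustration (the function `spin_until_stopped` is hypothetical), showing the form that survives optimization. In C++, `std::atomic` is the standard tool for a flag shared between threads; `volatile` alone does not provide atomicity or inter-thread ordering.

```cpp
#include <atomic>
#include <thread>

// Hypothetical worker loop that spins until another thread asks it to stop.
// Returns how many times the loop body ran.
unsigned long spin_until_stopped() {
    // If `stop` were a plain `bool`, the compiler could legally assume that
    // nothing changes it inside the loop, read it once, and spin forever in
    // an optimized build while the debug build appears to work.
    // std::atomic forces a real load on every iteration.
    std::atomic<bool> stop{false};
    std::atomic<bool> started{false};
    unsigned long iterations = 0;

    std::thread worker([&] {
        started.store(true);
        do {
            ++iterations;
        } while (!stop.load(std::memory_order_acquire));
    });

    while (!started.load()) std::this_thread::yield();  // wait for the worker
    stop.store(true, std::memory_order_release);        // request shutdown
    worker.join();  // after join, reading `iterations` here is safe
    return iterations;
}
```

The returned count varies from run to run, but the function always terminates, at any optimization level.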

4. The Operating System Has Its Own Agenda

Your program does not run continuously. The operating system interrupts it thousands of times per second to let other processes run, handle hardware events, and perform bookkeeping. From your program’s point of view, time is not a smooth river. It is a series of stops and starts, and the gaps between them are variable and largely unpredictable.

Heisenbugs that depend on wall-clock time are particularly sensitive to this. A program that assumes an operation completes within 10 milliseconds will fail intermittently whenever the scheduler decides to pause it for 15. Under a debugger, where timing is already distorted, these pauses look different and the failure never materializes. This is one reason that hard real-time systems such as aircraft flight controllers run real-time operating systems with bounded scheduling latency, and that latency-sensitive systems such as financial trading infrastructure often pin threads to dedicated cores, rather than trusting a general-purpose scheduler whose decisions are opaque.
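One way to see how loose the scheduler’s promises are is to measure them. This is a rough sketch, not a benchmark: the helper name `worst_overshoot` and the trial counts are assumptions. It asks for a short sleep several times and reports the largest amount by which the thread overslept.

```cpp
#include <chrono>
#include <thread>

// Hypothetical helper: request a sleep of `requested` several times and
// return the worst observed overshoot. sleep_for only promises to block for
// at least the requested time; how much longer is up to the scheduler.
std::chrono::microseconds worst_overshoot(std::chrono::milliseconds requested,
                                          int trials) {
    using clock = std::chrono::steady_clock;  // monotonic, unlike wall-clock time
    auto worst = std::chrono::microseconds::zero();
    for (int i = 0; i < trials; ++i) {
        const auto start = clock::now();
        std::this_thread::sleep_for(requested);
        const auto elapsed = clock::now() - start;
        const auto overshoot =
            std::chrono::duration_cast<std::chrono::microseconds>(elapsed - requested);
        if (overshoot > worst) worst = overshoot;
    }
    return worst;
}
```

Calling `worst_overshoot(std::chrono::milliseconds(10), 100)` on an idle machine and again under heavy load usually gives very different numbers, which is exactly the variability that a hard-coded 10 millisecond assumption ignores.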

5. The Hardware Itself Is Speculative

Modern processors do not execute your instructions in the order you wrote them. They predict which branches your code will take, execute instructions ahead of time, and throw away the results if the prediction was wrong. This “speculative execution” is the mechanism behind the Spectre vulnerability disclosed in 2018, which allowed attackers to read memory they were not supposed to access by exploiting the side effects of discarded speculative operations.

Spectre is, in a sense, the most elaborate Heisenbug ever documented, with the usual roles reversed. Instead of a bug that hides when observed, it is behavior that was supposed to be unobservable and is not: the speculative execution leaves traces in the CPU cache, and an attacker can measure those traces through timing even though the speculative results were officially discarded. The bug was not in software. It was in the design philosophy of hardware going back decades, and it affected virtually every modern processor from Intel, AMD, and ARM.

6. Heisenbugs Are an Argument for Better Tooling, Not More Heroism

The traditional response to a Heisenbug is patience and cleverness: add logging that perturbs timing as little as possible, isolate components, reproduce the conditions exactly. This works, but it takes a long time and relies heavily on the intuitions of senior engineers who have encountered these failure modes before.
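A common form of low-impact logging is an in-memory trace buffer that is dumped only after the failure. The sketch below is one possible shape, not a standard API: `TraceBuffer`, `kSlots`, and `trace_demo` are hypothetical names, and events are reduced to integer codes. Recording costs one atomic increment and one store, with no locks, allocation, or I/O, so it disturbs timing far less than a print statement, though not zero.

```cpp
#include <array>
#include <atomic>
#include <cstddef>
#include <cstdint>

// Hypothetical fixed-size trace buffer. Old entries are overwritten once the
// buffer wraps, so it always holds the most recent kSlots events.
class TraceBuffer {
public:
    static constexpr std::size_t kSlots = 1024;  // capacity is an assumption

    // Claim the next slot and store an event code in it.
    void record(std::uint32_t code) {
        const std::size_t i = next_.fetch_add(1, std::memory_order_relaxed);
        slots_[i % kSlots].store(code, std::memory_order_relaxed);
    }

    // Total number of events recorded so far (may exceed kSlots).
    std::size_t recorded() const {
        return next_.load(std::memory_order_relaxed);
    }

    // Event code stored for sequence number i, if not yet overwritten.
    std::uint32_t at(std::size_t i) const {
        return slots_[i % kSlots].load(std::memory_order_relaxed);
    }

private:
    std::array<std::atomic<std::uint32_t>, kSlots> slots_{};
    std::atomic<std::size_t> next_{0};
};

// Example use from a single thread: record three codes, read back the last.
std::uint32_t trace_demo() {
    TraceBuffer trace;
    trace.record(10);
    trace.record(20);
    trace.record(30);
    return trace.at(trace.recorded() - 1);
}
```

The shared `next_` counter is still a point of contention between cores; giving each thread its own buffer would perturb timing even less, at the cost of merging the traces afterward.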

The better long-term response is structural. Thread sanitizers, like the one built into Clang and GCC (enabled with -fsanitize=thread), detect data races at runtime without requiring the bug to manifest catastrophically. Address sanitizers catch use-after-free errors that only appear under specific allocation patterns. Formal verification tools can prove that certain race conditions cannot occur in a given design. None of these eliminate the underlying complexity of concurrent hardware, but they move the detection earlier in the process, before the bug reaches production and starts behaving like a ghost.

The deeper lesson of the Heisenbug is that computers are probabilistic machines wearing a deterministic costume. The costume holds most of the time, which is why software engineering works at all. But the costume has seams, and they show up exactly when you are least equipped to handle them: at 3 AM, under load, in production, where no one is watching.