Most developers have a folder in their brain labeled “deal with later” where compiler warnings go to live permanently. The build passes. The tests are green. Ship it. The warnings are just the compiler being overly cautious, right?

Wrong. Compiler warnings are one of the cheapest forms of static analysis available, and the cost of ignoring them compounds in ways that tend to become visible at the worst possible moment.

What Warnings Actually Are

A compiler warning is not a guess. It’s the result of a well-defined rule applied to your actual code, written by engineers who have collectively seen thousands of ways programs fail. When GCC or Clang flags an uninitialized variable, it’s not being paranoid. It’s telling you that a code path exists where that variable has no defined value, and the behavior you get depends on whatever bytes happen to be sitting in memory at runtime.
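As a small illustration of the kind of code path the compiler has in mind, here is a sketch in C++. The function names are hypothetical, and the exact diagnostic depends on compiler, flags, and optimization level (GCC reports this family under -Wmaybe-uninitialized, Clang under -Wsometimes-uninitialized).

```cpp
// Hypothetical example: map a log-level letter to a numeric level.

// Flawed: for any input other than 'h' or 'l', `level` is never assigned,
// so reading it on return is undefined behavior.
int level_unchecked(char c) {
    int level;
    if (c == 'h') {
        level = 3;
    } else if (c == 'l') {
        level = 1;
    }
    return level;  // the warning is about the path where neither branch ran
}

// Fixed: every path assigns a value, here through an explicit default.
int level_checked(char c) {
    int level = 0;  // assumed default for unrecognized input
    if (c == 'h') {
        level = 3;
    } else if (c == 'l') {
        level = 1;
    }
    return level;
}
```

Both versions agree for 'h' and 'l'. They differ only on the path the warning describes, which is exactly why the bug tends to survive testing.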

The distinction between an error and a warning is largely a matter of policy. Errors are patterns the compiler refuses to compile. Warnings are patterns the compiler could refuse to compile but doesn’t, usually because there are legitimate uses that look syntactically similar to the dangerous ones. That distinction tells you nothing about severity at runtime.

Consider signed/unsigned integer comparison warnings in C and C++. They look boring. They look like the compiler being pedantic. But comparing a signed value of -1 to an unsigned value produces a result that surprises almost everyone the first time they encounter it. The signed operand is implicitly converted to unsigned before the comparison, and -1 as a 32-bit two’s-complement integer is 0xFFFFFFFF, which as unsigned is 4,294,967,295. So -1 < 1u evaluates to false, and your loop that was supposed to stop at the end of a buffer now has a very different termination condition.
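A minimal sketch of that surprise in C++, using two hypothetical helper functions. The first is the pattern -Wsign-compare flags in GCC and Clang; the second is one possible way to make the comparison say what it means.

```cpp
// Flagged by -Wsign-compare: `value` is implicitly converted to unsigned
// before the comparison, so a negative value becomes a very large one.
bool below_limit_naive(int value, unsigned limit) {
    return value < limit;
}

// One possible fix: handle the negative case explicitly, then compare
// two unsigned values.
bool below_limit_safe(int value, unsigned limit) {
    return value < 0 || static_cast<unsigned>(value) < limit;
}
```

With these definitions, below_limit_naive(-1, 4u) is false while below_limit_safe(-1, 4u) is true. For non-negative inputs the two functions agree.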

The Volume Problem and How Teams Create It

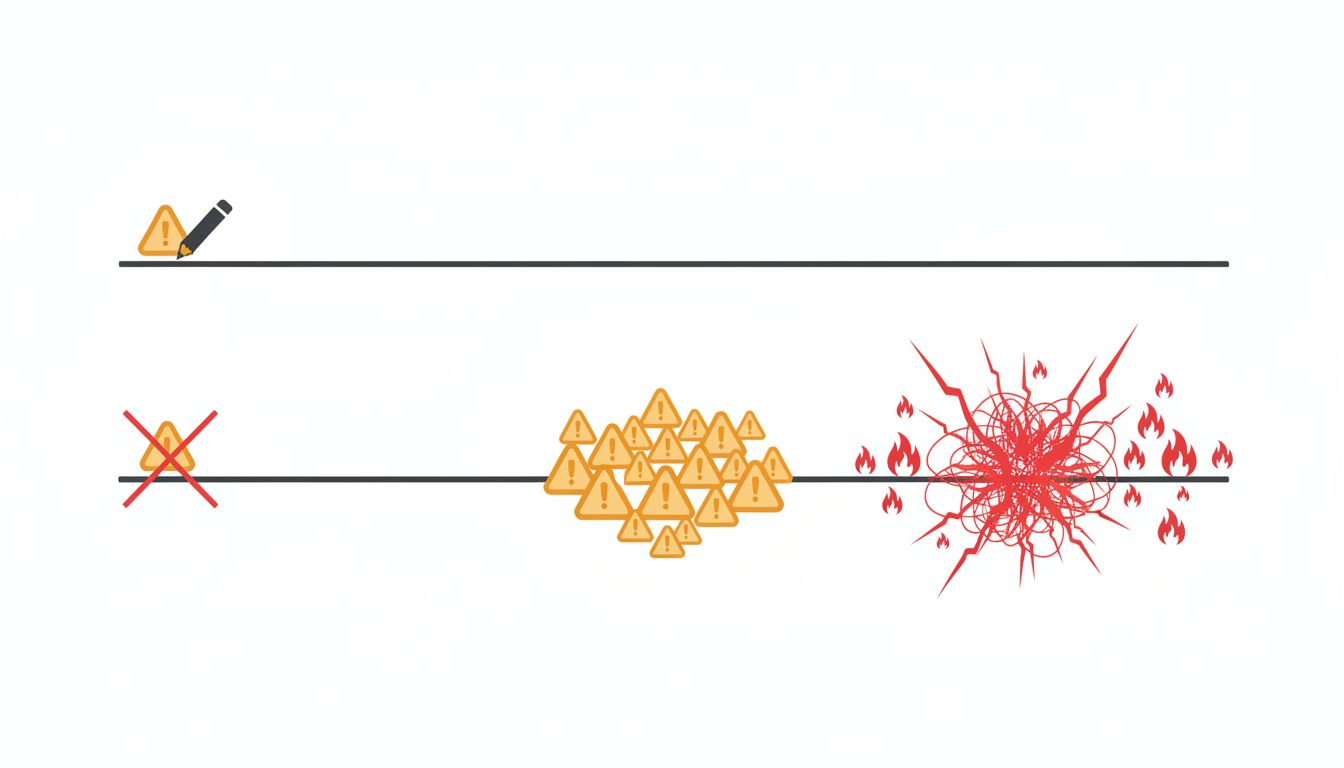

Here’s how warning debt accumulates: a project starts clean, someone adds code that generates a warning, nobody fixes it, the warning gets normalized, and within a few months the build output has hundreds of warnings that everyone ignores. At that point, a new critical warning has no signal value. It blends into the noise.

This is the same dynamic that makes alert fatigue dangerous in production monitoring, and it operates by the same mechanism. When every alert fires, no alert matters. The piece “What 1970s air traffic control teaches about alerts” covers this pattern in operational systems, but the principle applies equally to build output: a signal system that cries wolf constantly stops being a signal system.

The fix is not complicated, just uncomfortable. Treat warnings as errors. In GCC and Clang, -Werror does this. In MSVC, /WX. Yes, this means your build breaks when a new warning appears. That’s the point. A warning that breaks the build gets fixed. A warning that merely appears in build output gets ignored.

If you inherit a codebase with existing warning debt, the path forward is -Werror combined with -Wno-error= exclusions for specific warning types you haven’t addressed yet, then methodically removing those exclusions as you clean up each category. It’s tedious but tractable.
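One way this incremental approach might look, sketched as a C++ file with the build commands in comments. The file name legacy.cpp and both functions are hypothetical, and -Wunused-parameter merely stands in for whichever warning category is still on your backlog.

```cpp
// legacy.cpp (hypothetical file)
//
// Step 1: make every warning fatal except the category not yet cleaned up:
//   g++ -std=c++17 -Wall -Wextra -Werror -Wno-error=unused-parameter -c legacy.cpp
// The unused parameter in scale() is still reported, but only as a warning.
//
// Step 2: once the code is fixed, drop the exclusion so regressions break the build:
//   g++ -std=c++17 -Wall -Wextra -Werror -c legacy.cpp

// Before cleanup: `legacy_mode` is never read, which triggers -Wunused-parameter.
int scale(int value, int legacy_mode) {
    return value * 2;
}

// After cleanup: the parameter is left unnamed to show it is ignored on purpose.
int scale_clean(int value, int /*legacy_mode*/) {
    return value * 2;
}
```

The same pattern repeats per category: add one -Wno-error= exclusion for each warning type you have not addressed yet, then delete the exclusions one at a time as each is cleaned up.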

The Warnings Worth Prioritizing

Not all warnings carry equal risk, and knowing which ones deserve immediate attention is useful when you’re triaging a backlog.

Use-after-free and memory safety warnings in C and C++ are in a category of their own. This is the class of bug that produced some of the most severe vulnerabilities in recent memory. The Chromium project has documented extensively that memory safety issues account for a large majority, around 70%, of their high-severity security bugs. When a compiler or sanitizer flags a potential use-after-free, that warning deserves the same urgency as a failing test.

Implicit fallthrough in switch statements is subtler but appears constantly. A case that falls through to the next case without a break is sometimes intentional and sometimes a missing break. The compiler can’t tell the difference, but it can tell you when the pattern appears. In C++17, [[fallthrough]] lets you annotate intentional fallthroughs, which simultaneously silences the warning and documents intent for the next reader.
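A short sketch of annotated fallthrough in C++17. The Role enum and the permission bits are hypothetical, chosen only because “higher roles inherit lower ones” is a case where falling through is the intended behavior.

```cpp
enum class Role { Viewer, Editor, Admin };

// Hypothetical permission bits.
constexpr unsigned kRead = 1u;
constexpr unsigned kWrite = 2u;
constexpr unsigned kDelete = 4u;

// Returns the permissions implied by a role; each role inherits the ones below it.
unsigned permissions_for(Role role) {
    unsigned perms = 0;
    switch (role) {
        case Role::Admin:
            perms |= kDelete;
            [[fallthrough]];  // intentional: admins also get editor rights
        case Role::Editor:
            perms |= kWrite;
            [[fallthrough]];  // intentional: editors also get viewer rights
        case Role::Viewer:
            perms |= kRead;
            break;
    }
    return perms;
}
```

Remove either annotation and a compiler run with -Wimplicit-fallthrough would report that case again, which is the behavior you want for the unintentional version of this pattern.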

Unused return values are a reliable indicator of ignored errors. Functions return status codes and results for a reason. When you call a function and discard its return value, you’re often silently ignoring a failure mode. In GCC and Clang, __attribute__((warn_unused_result)) marks functions whose return value should not be discarded, and the compiler warns when a caller drops it; C++17 standardizes the same idea as [[nodiscard]]. POSIX’s write() is a classic example: it returns the number of bytes written, which may be less than you requested. Ignoring that is a latent bug.

Shadow variable warnings flag a local variable that shares a name with one in an outer scope. This compiles fine and runs deterministically. The problem is that it’s easy to write code thinking you’re modifying one variable while actually modifying another. These warnings are almost always worth fixing because the fix is trivially cheap (rename the inner variable) and the benefit is clarity.
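A small sketch of how shadowing goes wrong, with hypothetical function names. GCC and Clang report the first version when -Wshadow is enabled; it is not part of -Wall.

```cpp
#include <vector>

// Buggy: the inner `best` is a new variable that shadows the outer one,
// so the outer `best` is never updated.
int largest_shadowed(const std::vector<int>& values) {
    int best = 0;
    for (int v : values) {
        if (v > best) {
            int best = v;  // -Wshadow: declaration shadows the outer `best`
            (void)best;    // keeps this example free of an unused-variable warning
        }
    }
    return best;  // still the initial 0
}

// Fixed: assign to the outer variable instead of declaring a new one.
int largest_fixed(const std::vector<int>& values) {
    int best = 0;
    for (int v : values) {
        if (v > best) {
            best = v;
        }
    }
    return best;
}
```

For the input {3, 9, 4}, largest_shadowed returns 0 and largest_fixed returns 9. The two bodies differ by a single `int`, which is easy to miss in review and easy for the compiler to catch.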

The Languages That Made This Decision For You

Several modern languages treat compiler strictness as a design principle rather than an option.

Rust turns a number of patterns that C and C++ compilers merely warn about into outright compile errors. The borrow checker doesn’t warn about use-after-free possibilities; it refuses to compile them. Unused variables produce warnings by default, and idiomatic Rust code acknowledges them explicitly with underscore prefixes. The language takes the position that the programmer’s time is better spent fixing these things than deciding whether to fix them.

Swift’s guard and mandatory handling of Optional values address the null dereference problem at the type system level. Go’s compiler refuses to compile with unused imports or unused local variables. These aren’t warnings; they’re errors.

The lesson isn’t that you should rewrite everything in Rust (though the safety arguments are real). It’s that the designers of these languages looked at the most common sources of bugs and said: we’re not going to let the programmer choose to ignore these. That design decision reflects a conclusion that the cost of enforced correctness is lower than the cost of optional correctness.

The Real Cost of Deferred Warnings

There’s a version of this argument that treats warnings as purely a code quality issue, a matter of craft and professionalism. That framing is fine but it undersells the business case.

Bugs that warnings predict are bugs that can reach production, which means debugging time, incident response, and potentially customer impact. A developer hour costs three times what your payroll says it does, which means the economics of fixing a warning at compile time versus debugging a production incident at 2 a.m. are not remotely comparable. The warning is essentially free to fix when the code is being written. It is not free to fix after it has manifested as a customer-reported bug.

There’s also the compounding effect on code review. A codebase with clean build output makes it easy to notice when a change introduces a new warning. A codebase with 400 existing warnings makes it nearly impossible.

The compiler is running anyway. It’s analyzing your code for free every time you build. Treating that analysis as background noise rather than actionable feedback is leaving a significant amount of value on the floor.

The warnings aren’t wrong. They’ve just been very patient.