The story goes that Linus Torvalds keeps a rubber duck on his desk. Whether or not that particular detail is true, the practice it represents is real, widespread, and seriously underappreciated by anyone who thinks debugging is fundamentally a technical problem.

Rubber duck debugging is simple: when you’re stuck on a bug, you explain your code line by line to an inanimate object, as if teaching a complete beginner. The duck asks no questions. It offers no suggestions. It just sits there. And somewhere in the explanation, the programmer solves the problem themselves.

This sounds like a folk remedy. It is not. It’s a case study in how expert knowledge breaks down under pressure, and what it takes to recover it.

The Setup: A Major Codebase, a Buried Bug, and Three Days of Nothing

In the early 2000s, the OpenSSH project, the open-source implementation of the Secure Shell protocol that now runs on essentially every Linux server on the planet, had a subtle memory management bug. It was the kind of bug that doesn’t announce itself: it surfaced under specific load conditions, on specific hardware, intermittently enough that automated tests didn’t catch it reliably.

The developers working on the bug were not junior engineers guessing at stack traces. These were experienced systems programmers with deep familiarity with the codebase. They had read the relevant code dozens of times. They had mental models of the memory allocation patterns. And they were stuck.

One of the contributors, in a note that’s since been quoted in various programming communities, described the breakthrough moment: he started explaining the problematic function to a colleague who had no context on the issue. Midway through explaining why a particular pointer was being passed a certain way, he stopped. The assumption he had been making for three days, the assumption so obvious it had never risen to the level of conscious thought, was wrong. The colleague hadn’t said a word.

This is not an isolated story. It’s a pattern.

What Actually Happens When You Talk to the Duck

The mechanism here is not mystical. It’s cognitive.

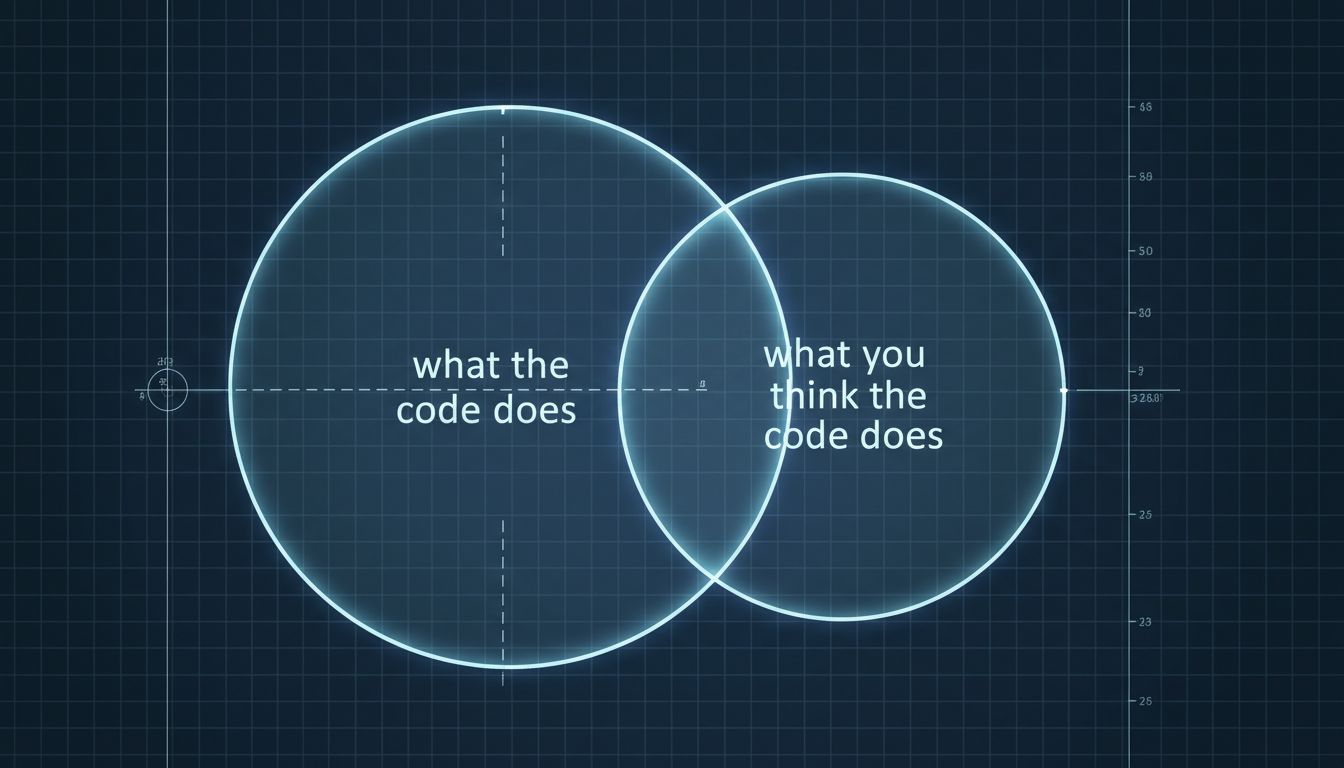

Expert programmers operate with what psychologists call chunked knowledge. They don’t read code character by character; they perceive patterns, structures, familiar idioms. This is efficient. It’s also a trap. When a bug lives in an assumption that’s been chunked away below the level of conscious attention, the expert’s fluency actively prevents them from seeing it. They read over the bug because their brain has learned not to stop there.

Forcing yourself to explain code to a non-expert, whether a colleague, a rubber duck, or a blank document you’re filling with comments, breaks that chunking. You can’t say “and here the pointer is managed normally” when you’re speaking out loud to something that cannot infer what “normally” means. You have to say what “normally” means. And sometimes when you say it, you hear that it isn’t true.

This is related to what researchers call the “protégé effect,” the well-documented finding that people learn and recall material more effectively when they expect to teach it to someone else. Preparing to teach forces a more complete encoding of the material. The same mechanism applies to debugging: preparing to explain forces a more complete examination of assumptions.

This is also, not coincidentally, why pair programming works. It is not that two people catch twice as many bugs; as we’ve written about elsewhere, the productivity gains in collaborative technical work are rarely about raw throughput. It is that the driver in a pair programming session is under the same cognitive constraint as someone explaining to a duck: you can’t skip over the part you don’t actually understand, because the other person will notice.

Why This Particular Technique Gets Dismissed

The reason rubber duck debugging gets treated as a joke rather than a practice is that it looks unserious. Software engineering culture has a bias toward tooling. When you’re stuck, the instinct is to reach for a better debugger, a profiler, a new logging strategy. These are real tools with real value. But they share a common limitation: they show you what the machine is doing, not what you are assuming.

As one study of debugging behavior found, the bugs that take the longest to fix are rarely the ones where the programmer lacked information. They’re the ones where the programmer had the wrong model and kept interpreting new information through that model. A debugger gives you more data points. It doesn’t reset your mental model. The duck does.

There’s also a professional vulnerability issue. Explaining your code to an inanimate object, out loud, in an open-plan office, makes you look like you’re struggling. Staring intently at a monitor for three hours looks like you’re working hard. The incentives point in the wrong direction.

What the Best Engineers Actually Do

The programmers who use this technique most reliably aren’t using a literal rubber duck. They’ve internalized the practice and adapted it to whatever form creates the minimum friction.

Some write “rubber duck comments,” explanatory notes in their codebase addressed to an imaginary reader who knows nothing. These often become the best documentation in the project. Some use their commit messages as the duck, forcing themselves to explain in plain language what a change actually does and why. Some use Stack Overflow, not to post the question but to draft it. It’s well known among experienced developers that you often solve the problem while writing up the question in sufficient detail to post publicly.
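To make the idea of a rubber duck comment concrete, here is a minimal sketch in Python. The function `last_page_index` and its pagination scenario are hypothetical, invented purely for illustration; the point is the style of the comments, which spell out each assumption for a reader who cannot fill in the gaps.

```python
def last_page_index(total_items: int, page_size: int) -> int:
    """Return the zero-based index of the last page (hypothetical helper)."""
    # Duck, a "page" is a group of at most page_size items, so a page size
    # of zero or less makes no sense and I refuse it up front.
    if page_size <= 0:
        raise ValueError("page_size must be positive")

    # I was about to say "the number of pages is total_items // page_size".
    # Saying it out loud: with 10 items and pages of 3, that gives 3 pages,
    # and the 10th item would have nowhere to live. So I have to round up.
    page_count = -(-total_items // page_size)  # ceiling division

    # Duck, pages are numbered from 0, so the last index is page_count - 1.
    # With zero items there are no pages at all; I return 0 anyway so that
    # callers always have a valid first page to render.
    return max(page_count - 1, 0)
```

Calling `last_page_index(10, 3)` exercises the rounding case described in the second comment. Whether comments this verbose belong in the final commit is a judgment call; some developers write them while debugging and trim them afterward.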

The pattern is consistent. The productive version is not “explain the code.” It’s “explain the code to someone who cannot make your assumptions for you.”

The Broader Lesson

Rubber duck debugging is a case study in a principle that applies well beyond software: the tools built for experts often preserve the blind spots that make experts wrong. The debugger, the profiler, the sophisticated IDE are optimized for someone who already has a correct mental model and needs to find where reality diverges from it. They’re not built for the harder problem, which is finding where your mental model has silently diverged from reality without your noticing.

The best engineers know that the most dangerous bugs are the ones they’re confident they understand. When that confidence collides with a system that won’t cooperate, the answer is almost never more information. It’s a forced return to first principles, which is exactly what a rubber duck, a colleague who doesn’t know the codebase, or a carefully written Stack Overflow question demands.

Torvalds or not, the duck earns its place on the desk.