A few years ago, I watched a VP of Engineering walk into an all-hands meeting beaming. His team had delivered a new internal tooling system two weeks early and $200,000 under the approved budget. The room applauded. Six months later, the tool was abandoned. The team that was supposed to use it had worked around it from day one, building spreadsheet hacks and Slack automations instead. The VP had optimized for the budget line. Nobody had optimized for the actual problem.

Here is the position I will defend: if your software project came in under budget, one of two things happened. Either you scoped it wrong at the start, or your team pulled punches during execution. Neither of those is a success. The celebration of under-budget delivery is one of the most persistent and damaging myths in technology management.

Budget Accuracy Is a Measure of Understanding, Not Discipline

When a project comes in significantly under budget, the instinct is to credit fiscal discipline. That is rarely what happened. In many cases, someone estimated a problem they didn’t fully understand, and the gap between the estimate and reality surfaced during execution as scope that never got built.

Software budgets are, at their core, a statement about how well you understand the problem. A precise estimate means you’ve thought deeply about what you’re building, what risks exist, and what it will actually take to solve the problem worth solving. Coming in under budget usually means the scope shrank, not that the team got more efficient. And if the scope shrank, the question you need to answer is: did it shrink because you discovered the original scope was wrong, or because you ran out of time and started cutting things that mattered?

Most of the time, it’s the second one.

Sandbagged Estimates Are the Industry’s Dirty Secret

The more common explanation for under-budget delivery is even less flattering: the estimate was padded. Engineering teams and vendors learn quickly that the incentive structure rewards under-spending. Miss your budget once, and you’ll be hauled into a retrospective. Come in under budget, and you’re a hero. So estimates creep upward. Teams build in buffer on top of buffer. And then when execution goes reasonably well, they look like financial wizards.

This is not efficiency. It is sandbagging with good PR. The organization paid for the padding in the original budget approval, spent less than that number, and called the delta a win. Measured against what the project should have cost, the approved budget was probably inflated from the moment the estimate was submitted.
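To make the arithmetic concrete, here is a minimal sketch with entirely hypothetical figures. The names `honest_estimate`, `padding`, and `budget_variance` are illustrative assumptions, not numbers or terms from any real project. It shows how the same spend can look like a win against a padded budget and an overrun against the estimate the team privately believed.

```python
def budget_variance(budget: int, actual: int) -> float:
    """Fraction of the budget left unspent (positive means under budget)."""
    return (budget - actual) / budget


# Hypothetical figures, chosen only for illustration.
honest_estimate = 1_000_000   # what the team privately expects the work to cost
padding = 300_000             # buffer layered on before the estimate is submitted
approved_budget = honest_estimate + padding
actual_spend = 1_050_000      # execution goes reasonably well

# Variance as the organization sees it: measured against the padded number.
reported = budget_variance(approved_budget, actual_spend)

# Variance against the unpadded estimate: the project actually ran over.
against_honest = budget_variance(honest_estimate, actual_spend)

print(f"Reported variance:         {reported:+.1%}")
print(f"Variance vs. honest guess: {against_honest:+.1%}")
```

Under these assumed numbers, the reported variance is positive while the variance against the unpadded estimate is negative. The "savings" exist only relative to the padding.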

The organizations that tolerate this dynamic eventually produce teams that have no idea how to estimate accurately, because there’s no incentive to try. Accuracy only matters if there are real consequences for being wrong in either direction.

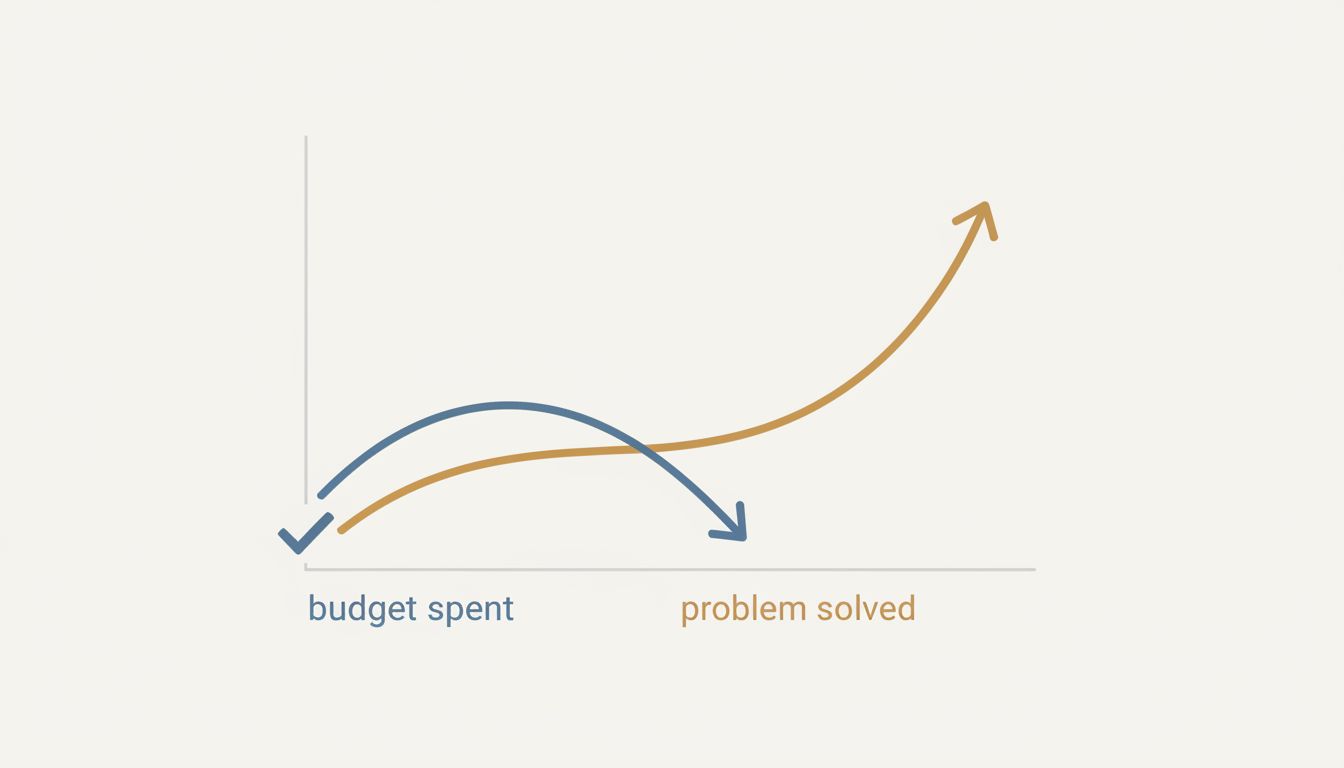

Under-Delivery and Under-Spending Move Together

There is a third scenario, and it is the most damaging: the project came in under budget because the team shipped less than the problem required, and nobody noticed until it was too late.

This is what happened to that VP’s internal tool. The team hit their milestones, stayed on schedule, and left features on the cutting room floor that seemed optional during planning but turned out to be the whole point. The reporting module that got deprioritized in week three was the thing the end users actually needed. The integration with the legacy system that got descoped in week six was why the tool existed. What shipped was technically functional and fiscally responsible, and it solved maybe 40 percent of the problem.

Because software is never really done, the real question isn’t whether you hit your budget. It’s whether the thing you shipped is still being used a year later. Under-budget delivery that produces shelfware is not a success by any honest accounting.

The Counterargument

The reasonable pushback here is that I’m conflating under-budget delivery with bad delivery. Surely, the argument goes, a team can genuinely find efficiencies, use better tools, or discover that a simpler solution works, and legitimately spend less than planned while still solving the problem.

Fair. That happens. But it is rarer than the mythology suggests, and when it does happen, the correct response is not to celebrate the budget variance. The correct response is to ask why the original estimate was so far off, update your estimation process, and make sure the simpler solution actually solves the full problem before you declare victory. That is a very different exercise from the typical under-budget retrospective, which involves a lot of backslapping and very little interrogation.

If your team genuinely found a 30 percent efficiency gain through better tooling or architectural insight, that insight has value far beyond one project. Capture it. Codify it. Use it to improve future estimates. The fact that most organizations don’t do this tells you something about whether they actually believe the efficiency story they’re telling themselves.

What Honest Project Accounting Actually Looks Like

The metric that matters is not budget variance. It is outcome delivery relative to the problem you set out to solve. Did the system get used? Did it reduce the friction it was supposed to reduce? Did the users it was built for actually adopt it, or did they route around it?

Those questions are harder to answer than a budget comparison, which is exactly why organizations default to the budget comparison. It is a precise measurement of the wrong thing.

The projects worth celebrating are the ones that shipped what the problem required, where the team understood the scope well enough that actual costs came within a reasonable range of estimated costs, and where the thing they built is still running and still used. Budget variance, in either direction, is just noise unless you understand why it happened.
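Those three criteria can be written down as a simple check. This is a sketch under stated assumptions, not a recommended scoring system: the `ProjectReview` fields, the `worth_celebrating` function, and the 15 percent tolerance for a "reasonable range" are all hypothetical, and each organization would have to choose its own threshold.

```python
from dataclasses import dataclass


@dataclass
class ProjectReview:
    estimated_cost: float
    actual_cost: float
    required_scope_shipped: bool   # did it ship what the problem required?
    in_use_after_a_year: bool      # is it still running and still used?


def worth_celebrating(review: ProjectReview, tolerance: float = 0.15) -> bool:
    """Apply the three criteria; the tolerance value is an assumed threshold."""
    # Variance in either direction counts the same: over or under.
    variance = abs(review.actual_cost - review.estimated_cost) / review.estimated_cost
    return (
        review.required_scope_shipped
        and review.in_use_after_a_year
        and variance <= tolerance
    )


# Hypothetical example: well under budget, but descoped and abandoned.
shelfware = ProjectReview(1_000_000, 800_000, False, False)

# Hypothetical example: slightly over budget, full scope, still in use.
adopted = ProjectReview(1_000_000, 1_080_000, True, True)

print(worth_celebrating(shelfware))
print(worth_celebrating(adopted))
```

Note that the check treats budget variance symmetrically and never rewards under-spending on its own. A project only passes if the outcome questions are answered first.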

The project that came in under budget and sits unused is not a fiscal success with an adoption problem. It is a failed project that spent less money failing. Stop applauding the number on the spreadsheet and start asking whether the problem got solved.