Tony Hoare Invented the Null Pointer in 1965 and Has Spent Decades Explaining Why He Wishes He Hadn’t

In 2009, Tony Hoare stood before an audience at QCon London and called his own invention a “billion-dollar mistake.” He wasn’t being theatrical. He was being conservative.

Hoare is one of the most accomplished computer scientists alive. He invented Quicksort. He developed Hoare logic, which underpins formal program verification. He received the Turing Award in 1980. And while working on ALGOL W in 1965, he introduced the null reference, a concept so simple and so catastrophically misused that it has arguably caused more software failures than any other single design decision in the history of the field.

This is worth understanding carefully, because the story isn’t really about null. It’s about what happens when a convenient shortcut gets baked into a type system, and nobody realizes the cost until it’s too late to change.

1. The Original Sin Was Not Laziness, It Was Convenience

Hoare didn’t invent null because he was cutting corners. He invented it because he was solving a real problem: what value should a reference hold when it doesn’t point to anything? He wanted a way to represent the absence of a value, and null was the simplest possible implementation. By his own account, he couldn’t resist the temptation to put it in, simply because it was so easy to implement.

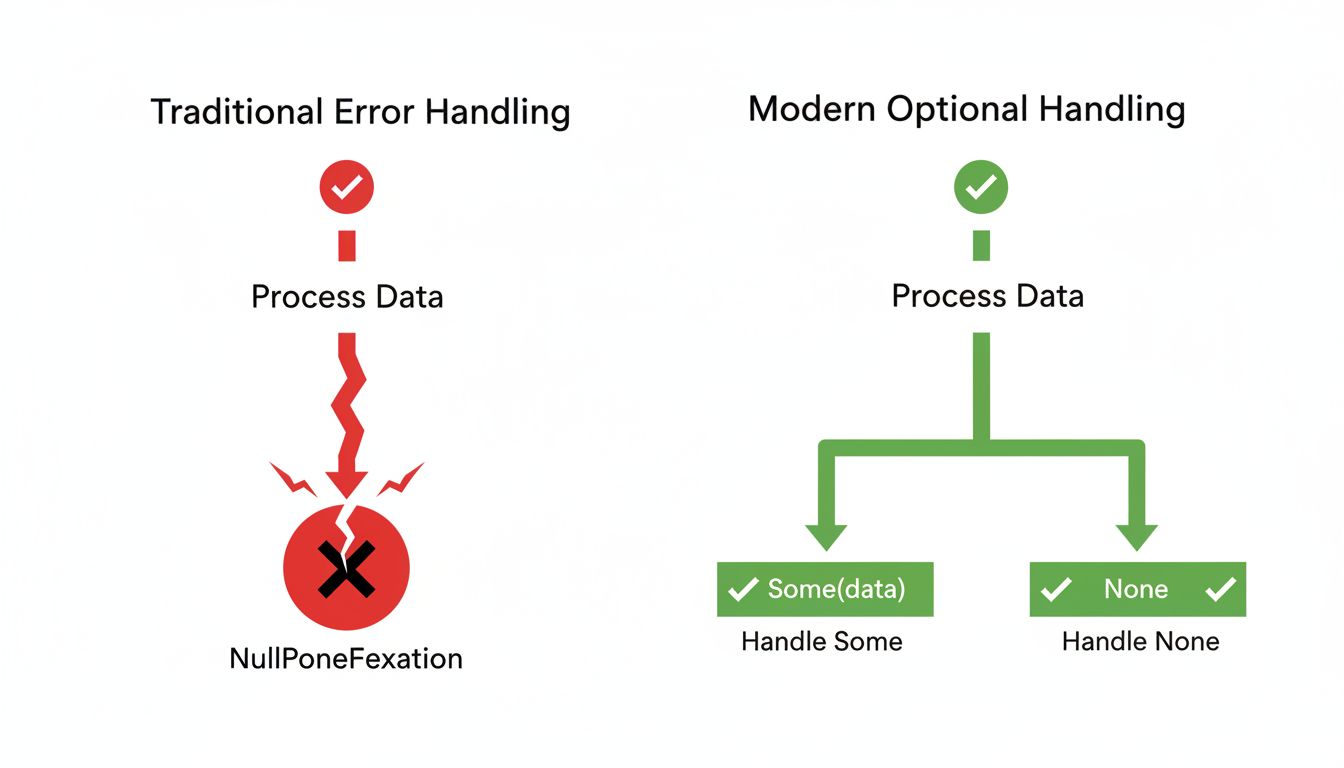

The mistake wasn’t in the concept. Absence of value is a legitimate thing to represent. The mistake was in making null a valid state for every reference type by default, without requiring programmers to explicitly handle it. Instead of forcing you to acknowledge that a reference might be empty, the type system just let null flow through silently until something tried to use it. Then your program crashed.

This is the core design error. Null conflates two very different things: a variable that holds a value and a variable that holds nothing look identical to the type checker, which offers you no help distinguishing them.
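A minimal Java sketch of that blind spot, assuming a hypothetical `lengthOf` helper invented for illustration. Both calls type-check identically, and the compiler has no opinion about which one will survive at run time.

```java
// Hypothetical illustration: the compiler sees the same type, String, in both calls.
final class NullBlindSpot {
    // The signature promises a String, but that type also admits null.
    static int lengthOf(String text) {
        return text.length(); // throws NullPointerException if text is null
    }

    // Returns true when calling lengthOf(text) fails with a NullPointerException.
    static boolean crashes(String text) {
        try {
            lengthOf(text);
            return false;
        } catch (NullPointerException e) {
            return true;
        }
    }

    public static void main(String[] args) {
        String present = "hello";
        String absent = null; // compiles without complaint

        System.out.println(crashes(present)); // false
        System.out.println(crashes(absent));  // true
    }
}
```

Nothing in the declaration of `absent` differs from the declaration of `present` except the value, which is exactly the information the type checker never looks at.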

2. The Billion-Dollar Estimate Is Almost Certainly Too Low

When Hoare said “billion-dollar mistake” in 2009, he was gesturing at cumulative losses from crashes, security vulnerabilities, and debugging time caused by null reference errors. The actual figure is impossible to calculate precisely, but the scale is not hard to believe.

Null dereference vulnerabilities appear regularly in CVE databases, where they are catalogued as CWE-476. NullPointerException is perennially among the most common exceptions in Java production environments. The Ariane 5 failure in 1996, which destroyed a rocket and payload worth roughly $370 million about 37 seconds after launch, was not a null bug: it was an unhandled exception raised when a 64-bit floating-point value overflowed during conversion to a 16-bit signed integer. But it belongs to the same family of failures, in which a value the type system accepted turns out at run time to be something the code never expected. These aren’t edge cases. They are the normal texture of software failure.

The reason the cost compounds is that null errors are often invisible until runtime. A null slips through compilation, passes some tests, and detonates in production, frequently in a context far removed from where it was introduced. Debugging becomes archaeology.
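The distance between cause and crash can be sketched in a few lines of Java. The `EMAILS` table, the `Invitation` class, and the user names are all hypothetical. The point is only that the null enters in one method and fails in another.

```java
import java.util.HashMap;
import java.util.Map;

final class DelayedNull {
    // Hypothetical lookup table; "carol" is deliberately missing.
    static final Map<String, String> EMAILS = new HashMap<>();
    static {
        EMAILS.put("alice", "alice@example.com");
        EMAILS.put("bob", "bob@example.com");
    }

    static final class Invitation {
        final String email; // may silently hold null

        Invitation(String email) {
            this.email = email;
        }

        // The failure surfaces here, not where the null was created.
        String domain() {
            return email.substring(email.indexOf('@') + 1);
        }
    }

    // Map.get returns null for a missing key, and nothing complains.
    static Invitation invite(String user) {
        return new Invitation(EMAILS.get(user));
    }

    public static void main(String[] args) {
        Invitation ok = invite("alice");
        Invitation broken = invite("carol"); // null enters here, silently

        System.out.println(ok.domain()); // example.com
        try {
            broken.domain();
        } catch (NullPointerException e) {
            // The stack trace points at domain(), not at invite().
            System.out.println("failed in domain(), long after invite() returned");
        }
    }
}
```

In a real system the two methods might be separated by a queue, a database row, or a week of uptime, which is what turns the debugging into archaeology.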

3. Better Type Systems Prove the Problem Was Always Solvable

The most clarifying fact about null is that we’ve known how to fix it for a long time. Haskell’s Maybe type, ML’s option type, and Rust’s Option<T> all make absence part of the type: a value that might be missing has a different type from one that cannot be, and the programmer has to unwrap it, handling the empty case, before using it. The compiler rejects the program if you try to use a potentially-absent value as though it were definitely there.
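The idea can be approximated even in Java, as a rough sketch. The `Maybe<T>` class below is hypothetical and written for this illustration; it is not a standard library type. Its only accessor is `fold`, which will not compile unless the caller supplies a handler for both the present and the absent case.

```java
import java.util.function.Function;
import java.util.function.Supplier;

// Hypothetical Maybe<T>: the only way to read the value is fold(),
// which requires a handler for both the present and the absent case.
abstract class Maybe<T> {
    private Maybe() {}

    abstract <R> R fold(Function<T, R> ifPresent, Supplier<R> ifAbsent);

    static <T> Maybe<T> some(T value) {
        return new Maybe<T>() {
            @Override
            <R> R fold(Function<T, R> ifPresent, Supplier<R> ifAbsent) {
                return ifPresent.apply(value);
            }
        };
    }

    static <T> Maybe<T> none() {
        return new Maybe<T>() {
            @Override
            <R> R fold(Function<T, R> ifPresent, Supplier<R> ifAbsent) {
                return ifAbsent.get();
            }
        };
    }
}

final class MaybeDemo {
    // Hypothetical lookup: only "alice" has a nickname.
    static Maybe<String> findNickname(String user) {
        if (user.equals("alice")) {
            return Maybe.some("Al");
        }
        return Maybe.none();
    }

    static String greeting(String user) {
        // Omitting either lambda is a compile error, not a runtime surprise.
        return findNickname(user).fold(
            nick -> "Hi, " + nick,
            () -> "Hi, stranger");
    }

    public static void main(String[] args) {
        System.out.println(greeting("alice")); // Hi, Al
        System.out.println(greeting("bob"));   // Hi, stranger
    }
}
```

This is weaker than what Haskell, ML, or Rust provide, because a Java variable of type `Maybe<String>` can itself still be null. Those languages close that hole at the language level rather than by convention.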

These aren’t theoretical niceties. Safe Rust, which shipped its 1.0 release in 2015, has no null references. If you want to represent absence, you use Option<T>. The compiler makes it impossible to forget. Swift, introduced by Apple in 2014, uses optionals for the same reason, and any iOS developer who has migrated a codebase from Objective-C to Swift can tell you how many latent null bugs the migration surfaced.

Kotlin, designed partly as a safer replacement for Java, distinguishes nullable types from non-nullable types at the syntax level. You can’t accidentally pass a nullable string where a non-null string is expected. These languages didn’t discover some exotic theoretical insight. They just applied the obvious fix that Hoare himself identified: if you want to represent absence, make it explicit in the type.

4. Java Doubling Down on Null Made It a Generational Problem

When Java launched in 1995, it inherited null and gave it enormous reach. Every reference type in Java is nullable by default. Every Java programmer has thrown a NullPointerException. Java runtimes have shipped on billions of devices, and every one of those runtimes carries the original design error.

Java has tried to compensate. Optional<T> was added in Java 8, but using it is optional (irony intended) and it is widely misused. Annotations like @NonNull and @Nullable from tools like FindBugs and later the Checker Framework help, but they’re not enforced by the core compiler. The JDK team has improved NullPointerException messages so they at least tell you which variable or expression was null, a feature that needed its own JEP (JEP 358), arrived in JDK 14, and was switched on by default only in JDK 15.
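A small sketch of both the intended use of `java.util.Optional` and the most common misuse. The `NICKNAMES` table and the method names are hypothetical; the `Optional` calls themselves (`ofNullable`, `map`, `orElse`, `get`) are standard library methods.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.NoSuchElementException;
import java.util.Optional;

final class OptionalSketch {
    // Hypothetical data: only "alice" has a nickname.
    static final Map<String, String> NICKNAMES = new HashMap<>();
    static {
        NICKNAMES.put("alice", "Al");
    }

    // The return type now advertises that the result may be absent.
    static Optional<String> nickname(String user) {
        return Optional.ofNullable(NICKNAMES.get(user));
    }

    // Intended use: transform if present, otherwise fall back to the user name.
    static String displayName(String user) {
        return nickname(user).map(String::toUpperCase).orElse(user);
    }

    // Common misuse: get() without a check swaps one runtime exception for another.
    static String unsafeNickname(String user) {
        return nickname(user).get(); // NoSuchElementException when absent
    }

    public static void main(String[] args) {
        System.out.println(displayName("alice")); // AL
        System.out.println(displayName("bob"));   // bob
        try {
            unsafeNickname("bob");
        } catch (NoSuchElementException e) {
            System.out.println("get() on an empty Optional still fails at run time");
        }
    }
}
```

The compiler accepts `unsafeNickname` without complaint, and it would equally accept a method that returns null where an `Optional<String>` is declared. Optional documents intent; it does not enforce it.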

This is what happens when a design mistake achieves massive adoption before anyone fully understands its costs. You end up spending decades building scaffolding around a problem that a better type system would have prevented entirely. A good share of what a $300K-a-year engineer is paid to do is service exactly this kind of accumulated debt.

5. Hoare’s Regret Is a Model for How Experts Should Talk About Mistakes

The most underappreciated part of the null pointer story is how Hoare handled it. He didn’t rationalize. He didn’t claim the ecosystem bore the responsibility. He stood up and said: I made a design decision, it was wrong, and here is the specific mechanism by which it went wrong.

This is unusual in software, where design decisions get defended long after their costs are obvious, often by people who weren’t involved in making them. Hoare’s willingness to name the mistake precisely is part of why the field has made progress on the problem. When the inventor tells you what went wrong, it’s easier to build languages that fix it.

The practical lesson for working engineers is not to avoid null (though using languages that eliminate it is genuinely good advice). The lesson is to take design decisions seriously before they achieve scale. Null was added to a research language because it was easy to implement. It then propagated into production languages used by millions of programmers. Convenience and reach are a dangerous combination when the underlying design is flawed. By the time the cost is visible, the fix is a decades-long project.

Hoare called it a billion-dollar mistake. That framing treats it as a historical curiosity. It’s still compiling.