The Simple Version

When two packets arrive at a router at the same time and need the same outgoing link, one waits in a queue while the other gets forwarded first. That link can carry only one packet at a time, so the router sends them sequentially, not simultaneously.

That’s the honest one-sentence answer. But the interesting part is everything underneath it: how the router decides which packet goes first, what happens when the queue fills up, and why engineers have spent decades arguing about the right way to manage this problem.

The Physical Reality

A router is, at its core, a device with multiple input interfaces and multiple output interfaces. Packets come in on one side and need to leave on another. The switching fabric in the middle moves packets from input to output, and this process is fast but not instantaneous.

The problem is that a router’s output interface can only transmit one packet at a time. If two packets both need to leave on the same outgoing link at the same moment, you have a conflict. There’s no way to merge them or squeeze them both through simultaneously. One has to wait.

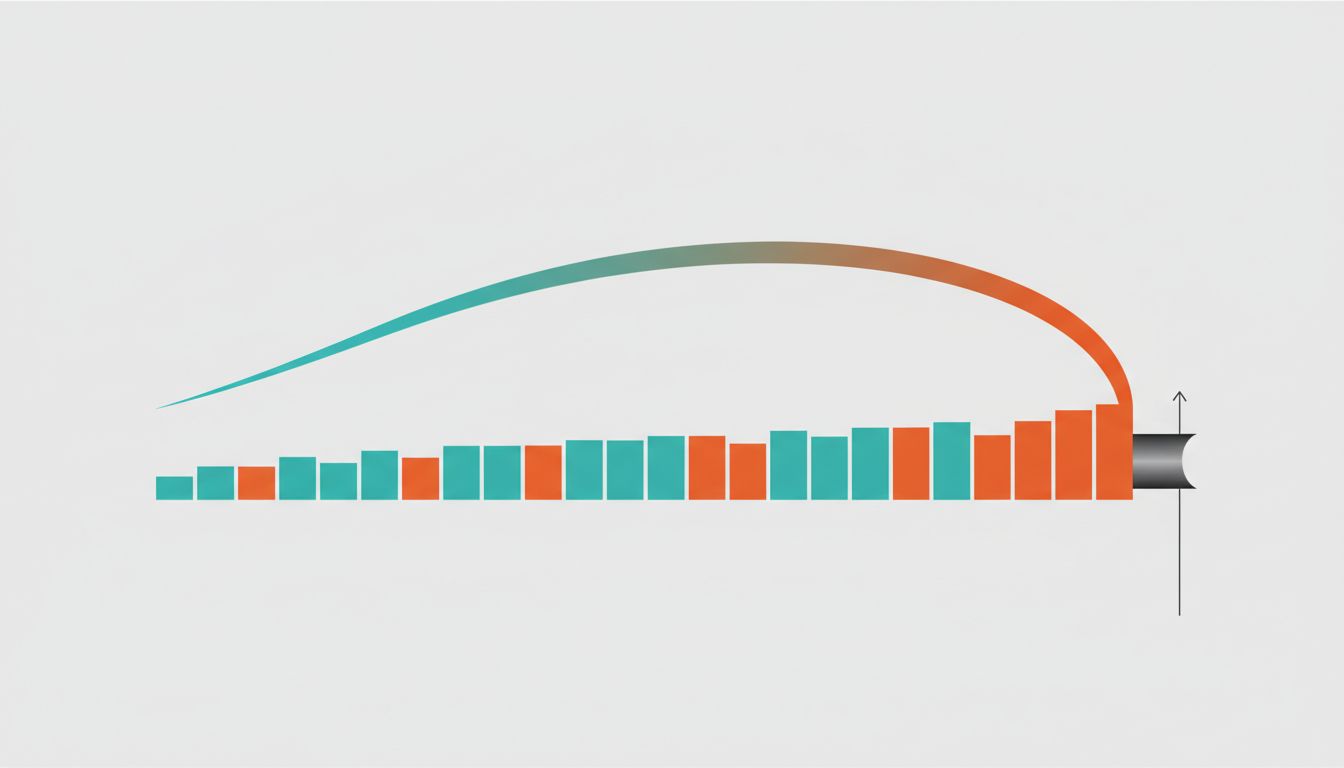

So routers maintain output queues (sometimes called buffers) on each outgoing interface. Packets stack up there while waiting their turn. Under light traffic, packets spend almost no time in queue. Under heavy traffic, those queues fill up, and that waiting time, known as queuing delay, is a major part of what we experience as latency.

This is why your video call degrades before it drops entirely. The packets aren’t being lost immediately; they’re spending longer and longer sitting in buffers, waiting for their turn, until the delay becomes perceptible.

How the Router Decides Who Goes First

The simplest approach is FIFO: first in, first out. Whoever arrived first gets forwarded first. It’s fair in a democratic sense and trivially easy to implement.

But FIFO has a well-documented weakness, often loosely described as head-of-line blocking. If a large packet (say, part of a big file transfer) is sitting at the front of the queue, every smaller packet behind it has to wait, including the small, time-sensitive audio and video packets that your call depends on. It’s like being stuck behind a semi-truck at a toll booth when all you need is a few seconds at the window.
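To put rough numbers on that wait, here is a minimal sketch of a FIFO output queue draining onto a single link. The 10 Mbit/s link rate and the packet sizes are assumptions chosen for illustration, and the function name is hypothetical.

```python
from collections import deque

LINK_RATE_BPS = 10_000_000  # assumed 10 Mbit/s outgoing link


def fifo_departure_times(packet_sizes_bytes, link_rate_bps=LINK_RATE_BPS):
    """Return the time (seconds) at which each packet finishes transmitting,
    assuming every packet is already queued at time 0 and served in order."""
    queue = deque(packet_sizes_bytes)
    clock = 0.0
    departures = []
    while queue:
        size = queue.popleft()
        clock += size * 8 / link_rate_bps  # time to put this packet on the wire
        departures.append(clock)
    return departures


# A 1500-byte bulk packet queued ahead of a 100-byte real-time packet.
times = fifo_departure_times([1500, 100])
for size, t in zip([1500, 100], times):
    print(f"{size:>5} bytes leaves at {t * 1000:.2f} ms")
```

Under these assumptions the small packet would need only 0.08 ms on its own, but it cannot start until the large packet has spent 1.2 ms on the wire.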

This led to the development of Quality of Service (QoS) mechanisms, which let routers prioritize certain types of traffic. A router using Differentiated Services (DiffServ) reads a field in the IP packet header called the DSCP (Differentiated Services Code Point) and uses it to place packets into different priority queues. Real-time traffic like VoIP or video conferencing gets marked for expedited forwarding. Bulk file transfers get marked as best-effort and wait when the network is busy.
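As a rough illustration, the sketch below pulls the DSCP out of the DS byte and sorts packets into two queues served in strict priority order. The class and queue names are hypothetical, and real routers support many more classes and configurable mappings.

```python
from collections import deque

DSCP_EF = 46      # Expedited Forwarding code point
DSCP_DEFAULT = 0  # default (best-effort) code point


def dscp_from_ds_byte(ds_byte):
    """The DSCP is the upper six bits of the DS byte; the low two bits carry ECN."""
    return (ds_byte >> 2) & 0x3F


class StrictPriorityPort:
    """Hypothetical output port with one expedited and one best-effort queue."""

    def __init__(self):
        self.queues = {"expedited": deque(), "best_effort": deque()}

    def enqueue(self, ds_byte, payload):
        # Anything not marked EF is treated as best effort in this sketch.
        name = "expedited" if dscp_from_ds_byte(ds_byte) == DSCP_EF else "best_effort"
        self.queues[name].append(payload)

    def dequeue(self):
        # Always drain the expedited queue before touching best effort.
        for name in ("expedited", "best_effort"):
            if self.queues[name]:
                return self.queues[name].popleft()
        return None


port = StrictPriorityPort()
port.enqueue(0x00, "bulk-1")
port.enqueue(0xB8, "voice-1")  # 0xB8 is the DS byte for EF (46 << 2)
port.enqueue(0x00, "bulk-2")
sent = [port.dequeue() for _ in range(3)]
```

Here the voice packet jumps ahead of the bulk packet that arrived before it, which is the point of the marking.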

Weighted Fair Queuing takes this further. Instead of a strict priority system (which can starve low-priority traffic entirely), it gives each traffic class a weighted share of the available bandwidth. Simpler relatives such as weighted round robin achieve something similar by serving the classes in rotation. High-priority traffic gets a larger share, but lower-priority traffic still makes forward progress.
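The sketch below shows the weighted-sharing idea using weighted round robin, a simpler relative of Weighted Fair Queuing that counts packets rather than bytes. It is not WFQ itself, and the class names and weights are made up for the example.

```python
from collections import deque


def weighted_round_robin(queues, weights):
    """Yield (class_name, packet) pairs, visiting classes in rotation and
    sending up to weights[name] packets from each class per round."""
    while any(queues.values()):
        for name, queue in queues.items():
            for _ in range(weights[name]):
                if not queue:
                    break  # this class has nothing left in this round
                yield name, queue.popleft()


queues = {
    "voice": deque(["v1", "v2", "v3", "v4"]),
    "bulk": deque(["b1", "b2", "b3"]),
}
weights = {"voice": 3, "bulk": 1}  # assumed 3:1 share

order = [packet for _, packet in weighted_round_robin(queues, weights)]
```

With a 3:1 weighting, voice gets three sends per round while bulk still gets one, so neither class is starved.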

What Happens When the Queue Fills Up

Buffers are finite. When a queue is completely full and a new packet arrives with nowhere to go, the router has to drop something. The simplest policy is to discard the newly arriving packet. This is called tail drop, and it’s the brute-force answer to buffer overflow.
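A minimal sketch of tail drop, assuming capacity is counted in packets (real devices often count bytes) and that the arriving packet is the one discarded. The class name is hypothetical.

```python
from collections import deque


class TailDropQueue:
    """Fixed-capacity FIFO that discards arrivals once it is full."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.packets = deque()
        self.dropped = 0

    def enqueue(self, packet):
        if len(self.packets) >= self.capacity:
            self.dropped += 1  # no room: the new arrival is lost
            return False
        self.packets.append(packet)
        return True


q = TailDropQueue(capacity=2)
results = [q.enqueue(p) for p in ("a", "b", "c")]
```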

Tail drop works but has a nasty side effect. When a router’s queue is full, it tends to be full because the network is congested, which means multiple TCP connections are all sending at high rates simultaneously. When the queue fills and starts dropping packets, all those connections notice the drops at roughly the same moment and all back off at the same time. Then they all start ramping back up together. This synchronized boom-bust cycle is called TCP global synchronization, and it wastes bandwidth by turning a smoothly loaded link into a herky-jerky one.

The fix developed in the 1990s was Random Early Detection (RED). Instead of waiting for the queue to completely fill, RED starts probabilistically dropping packets once the average queue length crosses a minimum threshold. The probability of dropping increases as the average queue gets longer. This staggers the signal to different TCP connections, so they don’t all back off simultaneously. The result is a more stable queue occupancy and better overall throughput.
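The core of RED’s drop decision can be sketched as a function of the average queue length. The thresholds, maximum probability, and averaging weight below are illustrative assumptions, and this simplified version leaves out the refinement in the original algorithm that spaces drops out more evenly.

```python
def update_average(avg_queue, current_queue, weight=0.002):
    """Exponentially weighted moving average of the queue length."""
    return (1 - weight) * avg_queue + weight * current_queue


def red_drop_probability(avg_queue, min_th=5, max_th=15, max_p=0.1):
    """Drop probability rises linearly from 0 to max_p between the thresholds."""
    if avg_queue < min_th:
        return 0.0  # queue is short: never drop
    if avg_queue >= max_th:
        return 1.0  # queue is persistently long: drop every arrival
    return max_p * (avg_queue - min_th) / (max_th - min_th)


for avg in (4, 10, 15):
    print(avg, red_drop_probability(avg))
```

Working from a smoothed average rather than the instantaneous length lets short bursts pass without triggering drops.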

Newer algorithms like CoDel (Controlled Delay) take a different approach entirely. Rather than measuring queue length in packets or bytes, CoDel tracks how long each packet spends in the queue and starts dropping when the minimum delay stays above a target (5 milliseconds by default) for a full interval (100 milliseconds by default). This directly targets the problem we actually care about: latency.
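The sketch below is a heavily simplified take on CoDel’s drop decision, not the full algorithm. It assumes the commonly cited defaults of a 5 ms target and a 100 ms interval, is called once per dequeued packet with that packet’s time in queue, and uses a hypothetical class name.

```python
import math

TARGET = 0.005    # 5 ms acceptable standing delay
INTERVAL = 0.100  # 100 ms: how long delay must persist before dropping


class CoDelDetector:
    """Simplified drop decision based on time spent in queue."""

    def __init__(self):
        self.first_above_time = None  # deadline set when delay first exceeds TARGET
        self.drop_count = 0
        self.next_drop_time = None

    def should_drop(self, now, sojourn_time):
        if sojourn_time < TARGET:
            # Delay is back under the target: forget all dropping state.
            self.first_above_time = None
            self.drop_count = 0
            self.next_drop_time = None
            return False
        if self.first_above_time is None:
            self.first_above_time = now + INTERVAL
            return False
        if now < self.first_above_time:
            return False  # delay has not stayed high for a full interval yet
        if self.next_drop_time is None or now >= self.next_drop_time:
            self.drop_count += 1
            # Drops come closer together the longer the delay persists.
            self.next_drop_time = now + INTERVAL / math.sqrt(self.drop_count)
            return True
        return False


detector = CoDelDetector()
trace = [(0.00, 0.010), (0.05, 0.010), (0.11, 0.010), (0.15, 0.010), (0.25, 0.010), (0.26, 0.001)]
decisions = [detector.should_drop(now, sojourn) for now, sojourn in trace]
```

A brief burst that clears within the interval is never penalized here; only delay that persists triggers drops, and a single packet under the target resets everything.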

Why Buffer Size Is a Real Engineering Debate

You might assume more buffer space is always better. If packets have somewhere to wait, they won’t get dropped. But this intuition is wrong in an important way.

Over-buffered networks suffer from a problem called bufferbloat, a term popularized by networking researcher Jim Gettys around 2011. The symptom is familiar: you start a large download and your video call becomes unusable, even though you have plenty of bandwidth. What’s happening is that the large download is filling up a massive buffer somewhere, and your call’s packets are stuck at the back of that queue, delayed by hundreds of milliseconds. The packets aren’t dropped; they’re just arriving too late to be useful.
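The arithmetic behind that delay is simple: a full buffer takes its size divided by the link rate to drain. The buffer size and uplink speed below are assumed values for illustration, not measurements of any particular device.

```python
def max_queuing_delay_s(buffer_bytes, link_rate_bps):
    """Time (seconds) for a packet at the back of a full buffer to reach the wire."""
    return buffer_bytes * 8 / link_rate_bps


# Assumed: a 250 kB buffer in front of a 5 Mbit/s uplink.
delay = max_queuing_delay_s(250_000, 5_000_000)
print(f"{delay * 1000:.0f} ms")
```

Under these assumptions a call packet joining the back of the full buffer waits about 400 ms before it is even transmitted, which is far too long for a conversation.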

Bufferbloat turned out to be pervasive in home routers, cable modems, and DSL equipment. Vendors had added large buffers to hide packet loss (which looks bad in benchmarks) without considering the latency consequences. The fix, broadly, is smarter active queue management like CoDel rather than simply throwing more buffer space at the problem.

The Bigger Picture

Every time you use the internet, your packets are navigating dozens of these queuing decisions across multiple routers. The remarkable thing is how well it works given the complexity. A packet traveling from New York to London passes through roughly ten to fifteen routers, each one making independent forwarding and queuing decisions in microseconds, with no global coordinator.

The tradeoffs involved (fairness vs. priority, throughput vs. latency, simplicity vs. sophistication) don’t have universal right answers. A router in a data center serving financial transactions has different priorities than a router at a residential ISP handling a mix of gaming, streaming, and video calls. The algorithms described here aren’t defaults baked into every router; they’re choices that network engineers make deliberately, with real consequences for the traffic that flows through them.

Understanding this makes the occasional sluggishness of your internet connection less mysterious. It’s usually not a broken link or a failed server. It’s a queue, somewhere, doing exactly what it was designed to do.