Imagine you’re a worker in Nairobi or Manila. You’ve just been asked to rate whether an AI-generated answer is helpful, harmless, and honest. You’ll do this 200 times today. You’ll earn somewhere between $1 and $3 per hour doing it, depending on the platform and the requestor. On the other end of the pipeline, a company will use your judgments to fine-tune a model it will later sell for hundreds of dollars per user per month.

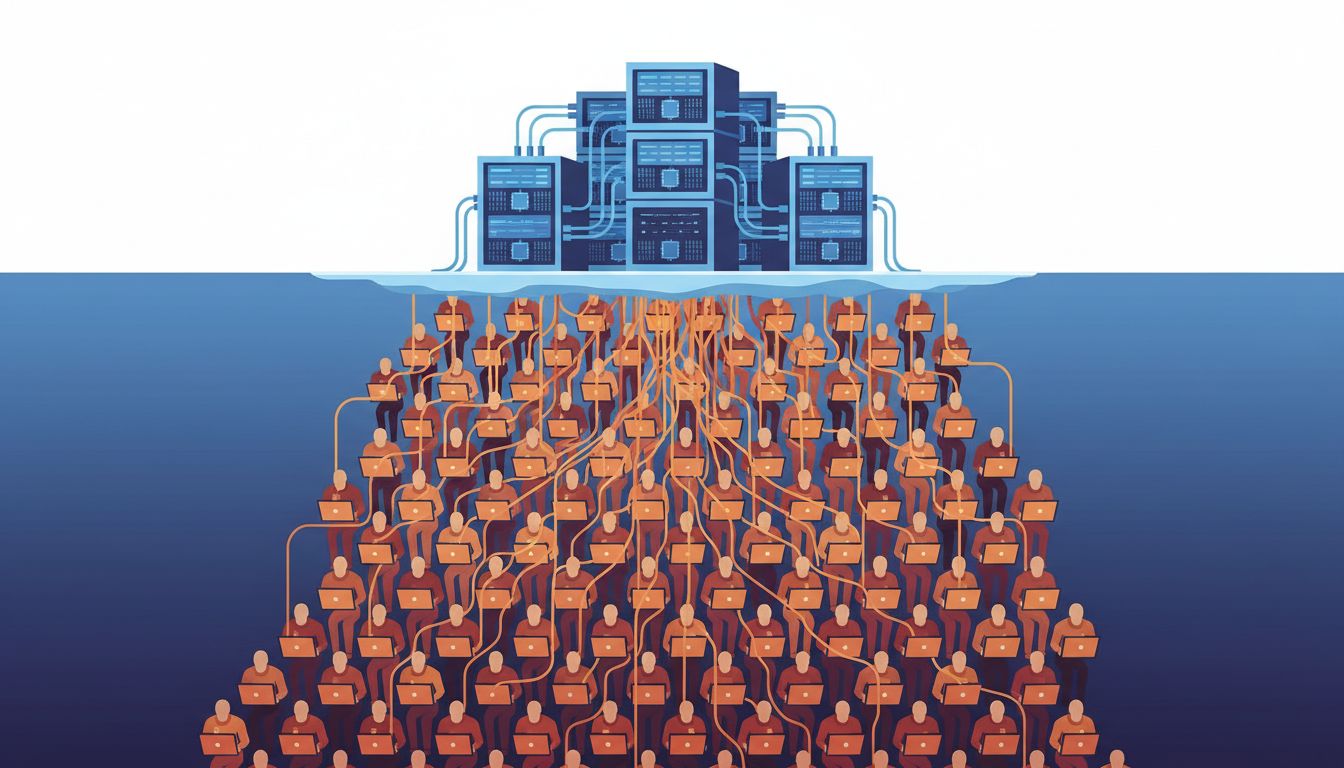

This is not a hypothetical. It is the current economics of large language model development, and it is hiding in plain sight. The story of what AI actually costs has almost nothing to do with GPU clusters and almost everything to do with the humans we’ve built into the infrastructure and refuse to count.

1. The Compute Narrative Is Convenient Misdirection

When AI companies talk about training costs, they lead with compute. Training GPT-4 reportedly cost over $100 million in compute alone. These numbers are real and staggering, and they do a useful job of making the whole enterprise seem like pure engineering, as if the models emerge from math and silicon rather than from millions of human decisions about what is true, relevant, and acceptable.

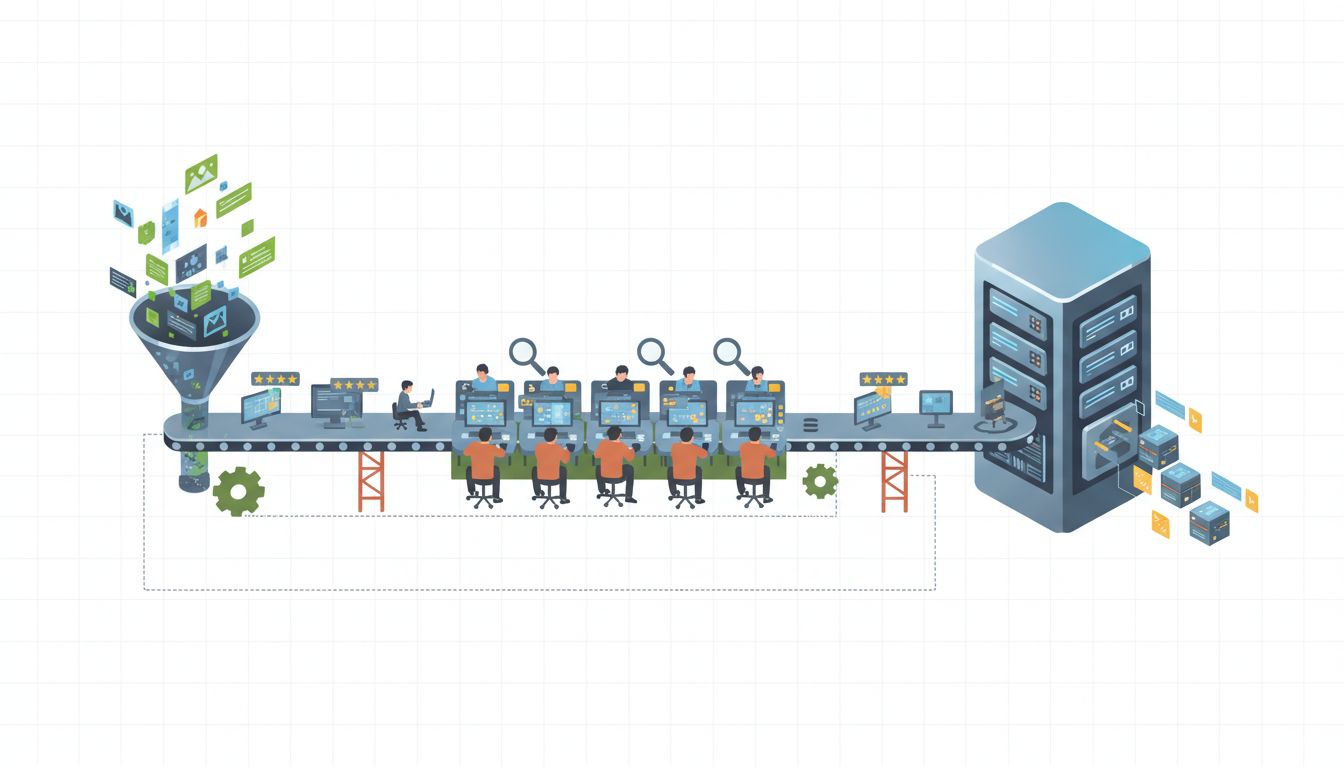

The human annotation labor that makes those models useful rarely gets a line item. Reinforcement Learning from Human Feedback (RLHF), the technique that turns a competent autocomplete engine into something that can hold a conversation, requires enormous volumes of human-generated preference data. Someone has to do that work. That someone is not a researcher in San Francisco.

2. The Platforms Are Deliberately Structured to Hide the Wage

Amazon Mechanical Turk, Scale AI, Remotasks, Appen, and a handful of similar platforms sit between the AI labs and the workers. This layering is not accidental. It allows labs to say they’re paying “market rates” to vendors while remaining insulated from questions about what workers actually take home per hour.

A 2023 study by researchers at the Oxford Internet Institute found that data workers across sub-Saharan Africa earned a median of around $2.50 per hour on major AI training platforms. Workers in Kenya and Uganda interviewed for the study reported being paid per task, with rates set high enough to look reasonable on paper but calibrated so that completing one task to approval standard took far longer than the implied rate assumed.

The wage obscurity is structural. It is a feature.

3. Content Moderation Work Carries Costs That Don’t Appear on Any Invoice

There is a specific subcategory of AI training labor that is worse than labeling images of dogs and bicycles. Content moderators and data annotators who work on toxicity classification, CSAM detection, and violent content tagging are exposed to material that causes documented psychological harm.

Time magazine’s reporting on Kenyan workers contracted through Sama to do content moderation work for OpenAI described workers earning less than $2 per hour while reviewing graphic violence and child sexual abuse material. Several workers interviewed described lasting psychological distress. The work is real, the harm is real, and the cost is externalized entirely onto workers who have no meaningful recourse.

When you see an AI product marketed on its safety and alignment properties, you are looking at the output of that labor. The safety didn’t come from the model. It came from people.

4. The “AI” in Many AI Products Is Partially Human, Right Now, Today

There’s a well-documented pattern in tech where companies sell automation that is actually people doing manual work behind an interface. This is not limited to early-stage startups buying time before they’ve built the real thing. It runs through enterprise AI products at scale.

Annotation is ongoing, not one-time. Models degrade, drift, and encounter new domains they weren’t trained on. Continuous human review is baked into most production AI systems. The workers doing that review are part of the product’s cost structure in the same way that AWS compute is part of the cost structure, except that AWS shows up on the P&L and the annotation workers often don’t.

This matters for anyone trying to understand what your LLM does when it doesn’t know the answer. Part of the answer is: a human catches it, rates it, and the correction enters the next training cycle.

5. Underpricing AI Products Relies on Underpriced Labor

There’s a broader argument in tech that cheap products signal low value. But the AI case is more specific and more troubling. AI products are cheap at point of sale partly because the labor costs embedded in their production were never correctly priced in.

If the annotators, moderators, and RLHF raters who built and maintain these models were paid $25 an hour instead of $2 to $3, the economics of the current generation of AI products would look very different. Some products would not exist. Others would cost substantially more. The venture math that made large-scale AI investment legible depended on labor markets in the Global South that could be tapped at rates that no labor law in the United States or Western Europe would permit.

Pricing your product too low tells buyers it doesn’t work is a real risk in competitive markets. But when the low price is subsidized by poverty wages on another continent, the problem isn’t just marketing. It’s structural.

6. Automation of Annotation Is Coming, and It Has Its Own Problems

Labs are aware that human annotation is a bottleneck and a PR liability. The current push toward synthetic data generation and AI-assisted annotation is partly a response to this. Have a model generate its own training data, have a stronger model evaluate a weaker model, reduce the human in the loop.

This is not a clean solution. Synthetic data can amplify existing model biases at scale in ways that human annotators, with their messy human judgment, sometimes catch and resist. More importantly, using AI to replace the humans who train AI is not cost elimination. It is cost redistribution back toward compute and away from labor. The compute bills will go up. The labor costs will go down. Whether the resulting models are actually better, or just cheaper, is an open empirical question that the people selling the products have a financial interest in answering optimistically.

7. If You’re Building on Top of These Models, You Own Part of This

This is the part of the conversation that founders and engineers tend to skip. If your product is built on a foundation model, the supply chain question is not just an ethical talking point. It is a business risk with a time horizon.

Regulatory attention to AI supply chains is increasing. The EU AI Act includes provisions touching on training data practices. Class action suits related to data sourcing are already in courts. The argument that you’re just using an API and the labor practices upstream are not your problem is exactly the argument that apparel brands made about contract factories in the 1990s. It did not hold up.

The $12-an-hour Mechanical Turk worker (and more often the $2-an-hour Remotasks worker) is not an abstraction. They are load-bearing members of the products you are shipping. Pretending otherwise is not a neutral position. It’s a choice.