The Code You Write Is Not the Code That Runs

In 1984, Richard Stallman began work on the GNU Project, and the compiler that came out of it, GCC (first released in 1987 as the GNU C Compiler, later renamed the GNU Compiler Collection), was partly a political act. Proprietary compilers at the time were black boxes. Stallman wanted programmers to see what was happening to their code. The irony is that once GCC matured and its optimization passes became sophisticated, the transformation between input and output grew so complex that most programmers, source code in hand, still have no idea what’s happening.

This is not a complaint. It’s the point. Understanding what a compiler actually does, using GCC’s history as a lens, reveals something important about every abstraction layer in software: the interface hides transformations that are far more radical than users assume.

The Setup: You Write C, You Get Something Else

Consider what a programmer thinks they’re doing when they write a loop in C. They’re describing sequential instructions. Increment a counter, check a condition, do some work, repeat. The mental model is procedural, ordered, literal.

The compiler sees something different. It sees a mathematical relationship, and it has decades of research behind it suggesting that the mathematical relationship can often be computed faster if you abandon the sequential, ordered, literal structure entirely.

GCC’s development through the 1990s and 2000s illustrates how aggressive this divergence became. The early versions already performed some optimization, but the translation from C to assembly stayed comparatively direct and largely preserved program structure. More optimization passes were then added incrementally as compiler research matured. Each pass takes the intermediate representation of your code and transforms it according to a specific rule.

By GCC 4.0, released in 2005 with the new tree-SSA optimization framework, a single C function could pass through dozens of distinct transformation passes before producing assembly.

What Happened: The Optimization Pass System

Here is what GCC actually does with your code, simplified to the stages that matter most.

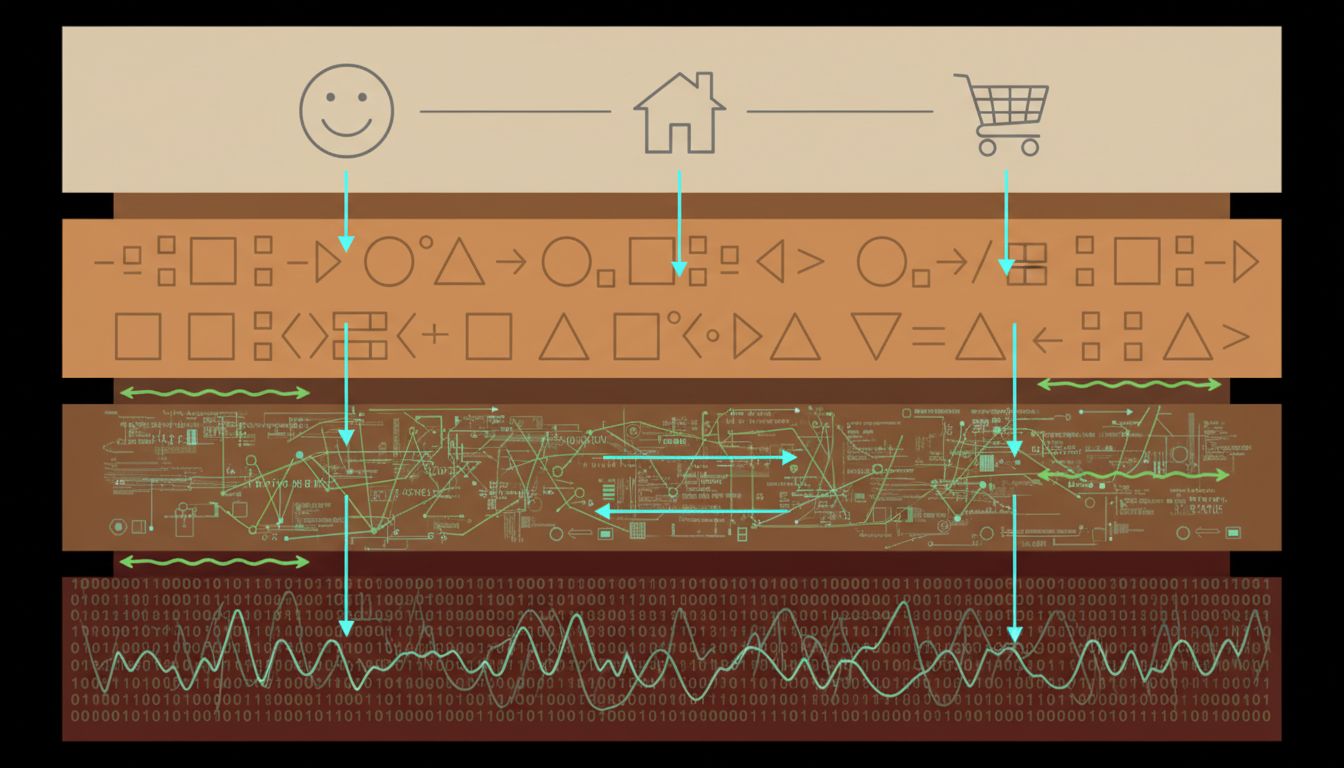

First, the compiler parses your source into an abstract syntax tree, a structured representation of what you wrote. This is the last stage where your code looks anything like what you typed. The tree is then lowered into an intermediate representation called GIMPLE, which strips away the surface syntax, breaks compound expressions into simple statements with explicit temporaries, and makes control flow explicit.
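To make the lowering concrete, here is a hand-written approximation of what that step does to a small loop. It is a sketch, not GCC’s actual output: the function names are hypothetical, and real GIMPLE also turns the array access into pointer arithmetic and uses compiler-generated temporaries. Compiling with `gcc -fdump-tree-gimple` writes the real GIMPLE for a file to a dump file you can compare against.

```c
/* Hypothetical example: the loop as a programmer writes it. */
int poly_sum(const int *a, int n, int k) {
    int s = 0;
    for (int i = 0; i < n; i++)
        s += a[i] * k + 1;
    return s;
}

/* Hand-lowered approximation of its GIMPLE shape: one operation per
   statement, explicit temporaries, and the loop turned into labels and
   gotos with the condition tested at the bottom. */
int poly_sum_lowered(const int *a, int n, int k) {
    int s = 0;
    int i = 0;
    int t1, t2, t3;
    goto check;
body:
    t1 = a[i];
    t2 = t1 * k;
    t3 = t2 + 1;
    s = s + t3;
    i = i + 1;
check:
    if (i < n)
        goto body;
    return s;
}
```

Both functions compute the same result; the second just spells out the order of operations the way the optimizer sees it.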

Then the passes begin. Dead code elimination removes instructions whose results are never used. Constant folding evaluates expressions at compile time rather than runtime, so if you write x = 2 * 3 * y, the compiler emits x = 6 * y without you asking. Inlining replaces function calls with the function body itself, eliminating call overhead and enabling further optimization of the combined code.
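A small pair of hypothetical functions gives each of these passes something to do. Compiling with `gcc -O2 -fdump-tree-optimized` typically shows `scaled` reduced to a multiplication by 6 with no trace of the unused value, and `sum_of_squares` containing no call to `square`; the exact output depends on the GCC version and target.

```c
/* Hypothetical examples; the names are illustrative only. */

/* Small static helper: a prime candidate for inlining. */
static int square(int v) {
    return v * v;
}

int scaled(int y) {
    /* Computed but never used, so dead code elimination removes it.
       Unsigned arithmetic avoids any overflow UB; (void) silences the
       unused-variable warning. */
    unsigned unused = (unsigned)y * 100u;
    (void)unused;

    /* Parsed as (2 * 3) * y; constant folding turns it into 6 * y. */
    return 2 * 3 * y;
}

int sum_of_squares(int a, int b) {
    /* After inlining, this becomes a * a + b * b, optimized as one
       expression with no function calls. */
    return square(a) + square(b);
}
```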

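The one that surprises most programmers is loop transformation. GCC can unroll loops, duplicating the loop body so that each trip through the loop does the work of several iterations and the counter update and branch run less often. It can vectorize loops, replacing scalar operations with SIMD instructions that process multiple data elements at once. A loop you wrote to process one 32-bit integer at a time may handle four per instruction with SSE2, or eight with AVX2, because the compiler recognized the pattern and issued vector instructions you never wrote. Whether this happens depends on flags: GCC vectorizes at -O3 (and, in recent releases, more conservatively at -O2), and on x86-64 it uses AVX2 only when told the target supports it, for example with -march=native.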
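To picture what unrolling and vectorization buy, compare a plain summation loop with a hand-unrolled version of it. This is a sketch of the idea, not GCC’s output: the function names are hypothetical, and the four accumulators stand in for the four 32-bit lanes of a 128-bit SSE2 register. The sketch uses `unsigned` so that wraparound is well defined; unsigned addition is associative, so regrouping the sum cannot change the result, and that is what licenses the compiler to do it. Floating-point addition is not associative, so GCC will not regroup a float sum this way unless allowed to, for example with `-ffast-math`.

```c
#include <stddef.h>

/* The loop as written: one element per iteration. */
unsigned sum_simple(const unsigned *a, size_t n) {
    unsigned total = 0;
    for (size_t i = 0; i < n; i++)
        total += a[i];
    return total;
}

/* Hand-unrolled by four: each trip through the body handles four
   elements, so the counter update and loop branch run a quarter as often.
   The four accumulators mimic the four lanes of a 128-bit vector register. */
unsigned sum_unrolled4(const unsigned *a, size_t n) {
    unsigned t0 = 0, t1 = 0, t2 = 0, t3 = 0;
    size_t i = 0;
    for (; i + 4 <= n; i += 4) {
        t0 += a[i];
        t1 += a[i + 1];
        t2 += a[i + 2];
        t3 += a[i + 3];
    }
    /* Remainder loop for the last n % 4 elements. */
    for (; i < n; i++)
        t0 += a[i];
    return t0 + t1 + t2 + t3;
}
```

Building the simple version with `gcc -O3 -fopt-info-vec` asks GCC to report which loops it vectorized, which is usually more reliable than unrolling by hand.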

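The most counterintuitive pass is alias analysis. C allows two pointers to refer to the same memory location, which constrains what the compiler can reorder: a store through one pointer might change the value read through the other. GCC’s alias analyzer tries to prove that two pointers cannot alias, and when it succeeds, it is free to reorder and parallelize operations that would otherwise have to stay sequential. It also leans on the standard’s strict-aliasing rule (enabled in GCC from -O2 as -fstrict-aliasing), which lets it assume that pointers to incompatible types, such as an int * and a float *, never point to the same object. If you’ve ever had a bug appear only in optimized builds, aliasing is a likely suspect. The optimizer rewrote your code in a way that was valid under those assumptions, but your code broke a rule it never knew it was bound by, for example by reading a float’s bits through an int pointer.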
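The strict-aliasing case fits in a few lines. In the hypothetical `store_then_load`, GCC at -O2 may assume the float store cannot modify `*i`, because the pointers have incompatible types, and return the constant 1 without reloading; calling it with both pointers aimed at the same object is undefined behavior. The hypothetical `float_bits` shows the well-defined way to reinterpret a value’s bytes: copy them with `memcpy`, which GCC typically compiles down to a single register move. The sketch assumes a 32-bit IEEE 754 `float`, as on mainstream platforms.

```c
#include <stdint.h>
#include <string.h>

/* Assumption: float is 32 bits, as on mainstream platforms. */
_Static_assert(sizeof(float) == sizeof(uint32_t), "expects 32-bit float");

/* Under strict aliasing, the compiler may assume a store through a
   float * cannot change an int object, so it may return 1 without
   re-reading *i. Passing two pointers to the same object is undefined. */
int store_then_load(int *i, float *f) {
    *i = 1;
    *f = 0.0f;
    return *i;
}

/* Well-defined type punning: copy the bytes instead of casting pointers. */
uint32_t float_bits(float x) {
    uint32_t u;
    memcpy(&u, &x, sizeof u);
    return u;
}
```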

This is the relationship between GCC and the C standard that makes experienced systems programmers careful: the compiler optimizes based on what the standard permits, not what you intended. Undefined behavior in C isn’t just an error; it’s permission for the compiler to assume that code path never executes, and to optimize aggressively on that assumption.
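Signed integer overflow is the textbook case. Because overflowing an `int` is undefined, GCC may treat `x + 1 > x` as always true and fold the hypothetical `always_greater` to `return 1`, even though on the hardware `INT_MAX + 1` would wrap to a negative number. Whether the fold happens depends on the GCC version and flags; `-fwrapv` tells GCC to treat signed overflow as wrapping instead. The hypothetical `can_increment` expresses the intended check without ever overflowing.

```c
#include <limits.h>

/* Looks like it should return 0 for INT_MAX, but INT_MAX + 1 is undefined
   behavior, so the optimizer may assume it never happens and fold the
   whole expression to 1. */
int always_greater(int x) {
    return x + 1 > x;
}

/* The same intent with no overflow: compare against the limit first. */
int can_increment(int x) {
    return x < INT_MAX;
}
```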

Why It Matters: The Distance Between Intent and Execution

The GCC case is instructive because it makes visible a gap that exists at every layer of the software stack. You write Python; CPython compiles it to bytecode and interprets that. You write SQL; the query planner rewrites your join order. You write a React component; the framework decides when and whether to call it.

In each case, the interface accepts your description of what you want and produces something different in pursuit of the same result. The compiler is simply the most mature and extensively studied version of this pattern.

What the GCC story teaches is that this translation is not neutral. Optimizing compilers make choices based on models of hardware and program behavior. Those models are usually right. Sometimes they’re wrong in ways that are very hard to debug. The compiler warning you keep ignoring is often right, because the compiler has detected a pattern that its model flags as dangerous, and its model has decades of evidence behind it.

The performance implications are also non-trivial. The difference between unoptimized and optimized GCC output on a numerical workload can be an order of magnitude. This isn’t the compiler making your algorithm better. It’s the compiler expressing your algorithm in instructions that match what the hardware actually executes efficiently, which is not what the C source code describes.

This is why the instinct to rewrite code in a lower-level language to improve performance is often misguided. The trap is that programmers attribute performance problems to the language when the real issue is what the compiler and runtime are doing with it. A well-optimized C program can outperform naive Rust, and optimized Rust can outperform naive C. The language is almost beside the point.

What We Can Learn

The practical lesson from GCC’s evolution is not that you need to understand every compiler pass. Most of the time, you don’t. The lesson is about when the abstraction layer matters and when it doesn’t.

It matters little when your code is doing work that is far from the hardware limit. Database queries, network calls, user interface rendering: there, the compiler’s optimization decisions are usually negligible compared to I/O latency and architecture choices.

It matters when you are close to the hardware limit: when you are writing numerical code, signal processing, cryptography, or game engines. In those contexts, understanding the compiler’s model, specifically which patterns it can and cannot vectorize, what aliasing assumptions it’s making, and what a branch misprediction costs, becomes necessary to write code that performs as intended.
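Aliasing and vectorization meet in a loop like the one below. Without further information, GCC must allow for `dst` overlapping `a` or `b`, so it typically either emits a runtime overlap check with a scalar fallback or declines to vectorize. The C99 `restrict` qualifier is the programmer’s promise that memory written through `dst` is not also accessed through the other pointers, which removes that obstacle; breaking the promise is undefined behavior. The function names are hypothetical, and compiling both with `-O3 -fopt-info-vec` is one way to see how a given GCC version treats them.

```c
#include <stddef.h>

/* dst may overlap a or b, so the compiler must preserve element-by-element
   semantics unless it can rule out overlap, often with a runtime check. */
void add_may_alias(int *dst, const int *a, const int *b, size_t n) {
    for (size_t i = 0; i < n; i++)
        dst[i] = a[i] + b[i];
}

/* restrict promises that memory written through dst is not accessed
   through a or b during this call, so the loop can typically be
   vectorized without an overlap check. */
void add_no_alias(int *restrict dst, const int *restrict a,
                  const int *restrict b, size_t n) {
    for (size_t i = 0; i < n; i++)
        dst[i] = a[i] + b[i];
}
```

Only the first version may legitimately be called in place, as `add_may_alias(a, a, b, n)`; the `restrict` version trades that flexibility for a guarantee the optimizer can use.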

And it always matters when debugging. A bug that disappears at -O0 and appears at -O2 is very rarely a compiler bug. It is almost certainly undefined behavior in your code that the optimizer exposed. The compiler is not wrong. Your mental model of what your code does is wrong.
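A common way this happens is an overflow check written in terms of the overflow itself. At -O0 the hypothetical `add_checked_naive` usually behaves as intended, because the hardware wraps, but at -O2 GCC may reason that `a + b < a` cannot be true for positive `b` and delete the check. `__builtin_add_overflow` is a GCC built-in (also supported by Clang) that performs the addition and reports overflow without undefined behavior; the wrapper names here are hypothetical. Building with `-fsanitize=undefined` makes the naive version report the overflow at run time instead of failing silently.

```c
#include <stdbool.h>

/* Naive: tests for overflow after it has already happened. Signed overflow
   is undefined, so the optimizer may remove this check entirely. */
bool add_checked_naive(int a, int b, int *out) {
    if (b > 0 && a + b < a)
        return false;
    *out = a + b;
    return true;
}

/* Well-defined: the built-in stores a + b in *out and returns true if the
   mathematical result did not fit, so we return false in that case. */
bool add_checked(int a, int b, int *out) {
    return !__builtin_add_overflow(a, b, out);
}
```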

Stallman’s goal in 1984 was to make compilation transparent. Four decades later, GCC is open source and extensively documented, and the optimization passes are public knowledge. The transparency is real. What was harder to foresee is that the transformation would become sophisticated enough that transparency and comprehensibility are two different things. You can read every pass. Understanding the interaction between them, on real code, on real hardware, is a career’s worth of work.

The code you write is a request. The compiler decides how to fulfill it.