The Simple Version

When two packets arrive at a router simultaneously, the router puts one of them in a queue and processes them one at a time. If the queue is full, it drops a packet entirely.

Why This Happens More Than You’d Think

The internet runs on packet switching. Every file, video frame, and message you send gets chopped into discrete chunks, each labeled with a destination address, and those chunks travel independently across a network of routers. At any busy router, hundreds of thousands of packets arrive every second across dozens of physical ports.

True simultaneity at the hardware level is rare but not impossible, and the more important problem is near-simultaneity: packets arriving faster than they can be forwarded. This is the normal state of affairs at any router that’s doing real work. The interesting question isn’t whether congestion happens. It’s how routers manage it without the whole thing collapsing.

The Queue: Where Packets Wait Their Turn

Every output port on a router has a buffer, essentially a queue where packets wait before being transmitted. When two packets arrive at the same time and need to leave through the same output port, one is transmitted immediately and the other waits in the buffer. This is called output queuing, and it’s the model most router designs build on, though high-end hardware often uses variants such as virtual output queues that hold packets on the ingress side.

The buffer is finite. Buffers are sized in bytes or packets, but they’re often described by how many milliseconds of traffic they can hold at the port’s line rate. Carrier-grade hardware can hold more than a consumer-grade router, but the principle holds: there’s a limit.

When that limit is hit, the router has to decide which packet to drop. The naive approach is tail drop, meaning you throw away whatever just arrived. It works but has a known failure mode, called TCP global synchronization: when a full queue drops packets from many TCP connections at once, they all back off at the same moment and then ramp up again together, so the link swings between underuse and overload.
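As a rough illustration of tail drop, here is a minimal sketch of a bounded FIFO. The class name `TailDropQueue`, the capacity of three packets, and the string packet labels are all assumptions made for the example; real routers size buffers in bytes and do this in hardware.

```python
from collections import deque


class TailDropQueue:
    """Bounded FIFO that discards new arrivals once it is full."""

    def __init__(self, capacity):
        self.capacity = capacity  # maximum number of queued packets (hypothetical unit)
        self.packets = deque()
        self.dropped = 0

    def enqueue(self, packet):
        # Tail drop: if the buffer is full, the arriving packet is the one discarded.
        if len(self.packets) >= self.capacity:
            self.dropped += 1
            return False
        self.packets.append(packet)
        return True

    def dequeue(self):
        # Packets leave in arrival order; None means the queue is empty.
        return self.packets.popleft() if self.packets else None


queue = TailDropQueue(capacity=3)
results = [queue.enqueue(f"pkt{i}") for i in range(5)]
print(results, queue.dropped)
```

With five arrivals and room for three, the last two are rejected, and every flow that happens to be sending at that moment sees a loss at once.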

The smarter alternative is Active Queue Management (AQM). Algorithms like RED (Random Early Detection) start randomly dropping packets before the queue fills up, with a drop probability that rises as the average queue length grows, which spreads the congestion signal across connections rather than slamming them all at once. The even smarter successor, CoDel (Controlled Delay), published by Kathleen Nichols and Van Jacobson in 2012, targets queuing delay directly rather than queue length, dropping packets only when the delay has stayed above a small target (5 ms by default) for longer than an interval (100 ms by default). CoDel and its flow-queuing variant fq_codel were merged into the Linux kernel in 2012, and fq_codel is now the default queuing discipline on many Linux distributions.
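The two ideas can be sketched in a few lines. This is a simplified illustration, not either algorithm in full: the RED function shows only the linear drop-probability ramp (the thresholds and `max_p` are assumed values), and `CoDelLikeDetector` is a hypothetical name for a class that captures only CoDel’s “delay has stayed above target for a whole interval” test, leaving out the control law that spaces out subsequent drops.

```python
def red_drop_probability(avg_queue, min_th=5.0, max_th=15.0, max_p=0.1):
    """Simplified RED: no drops below min_th, certain drop at max_th and above,
    and a linear ramp up to max_p in between. Thresholds are assumed values."""
    if avg_queue < min_th:
        return 0.0
    if avg_queue >= max_th:
        return 1.0
    return max_p * (avg_queue - min_th) / (max_th - min_th)


class CoDelLikeDetector:
    """Flags a drop only after queuing delay has stayed at or above `target`
    for at least `interval` seconds. Omits CoDel's drop-spacing control law."""

    def __init__(self, target=0.005, interval=0.100):
        self.target = target
        self.interval = interval
        self.first_above_time = None  # deadline after which dropping may start

    def should_drop(self, sojourn_time, now):
        if sojourn_time < self.target:
            # Delay is acceptable again, so forget any pending deadline.
            self.first_above_time = None
            return False
        if self.first_above_time is None:
            # First packet seen above target: start the clock, do not drop yet.
            self.first_above_time = now + self.interval
            return False
        return now >= self.first_above_time


detector = CoDelLikeDetector()
decisions = [
    detector.should_drop(0.002, now=0.00),  # below target
    detector.should_drop(0.010, now=0.01),  # above target, clock starts
    detector.should_drop(0.010, now=0.05),  # still inside the interval
    detector.should_drop(0.010, now=0.12),  # above target for a full interval
]
print(decisions)
```

The design difference shows up in the inputs: RED looks at how many packets are queued, while the CoDel-style check looks at how long a packet actually waited.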

Not All Packets Are Equal

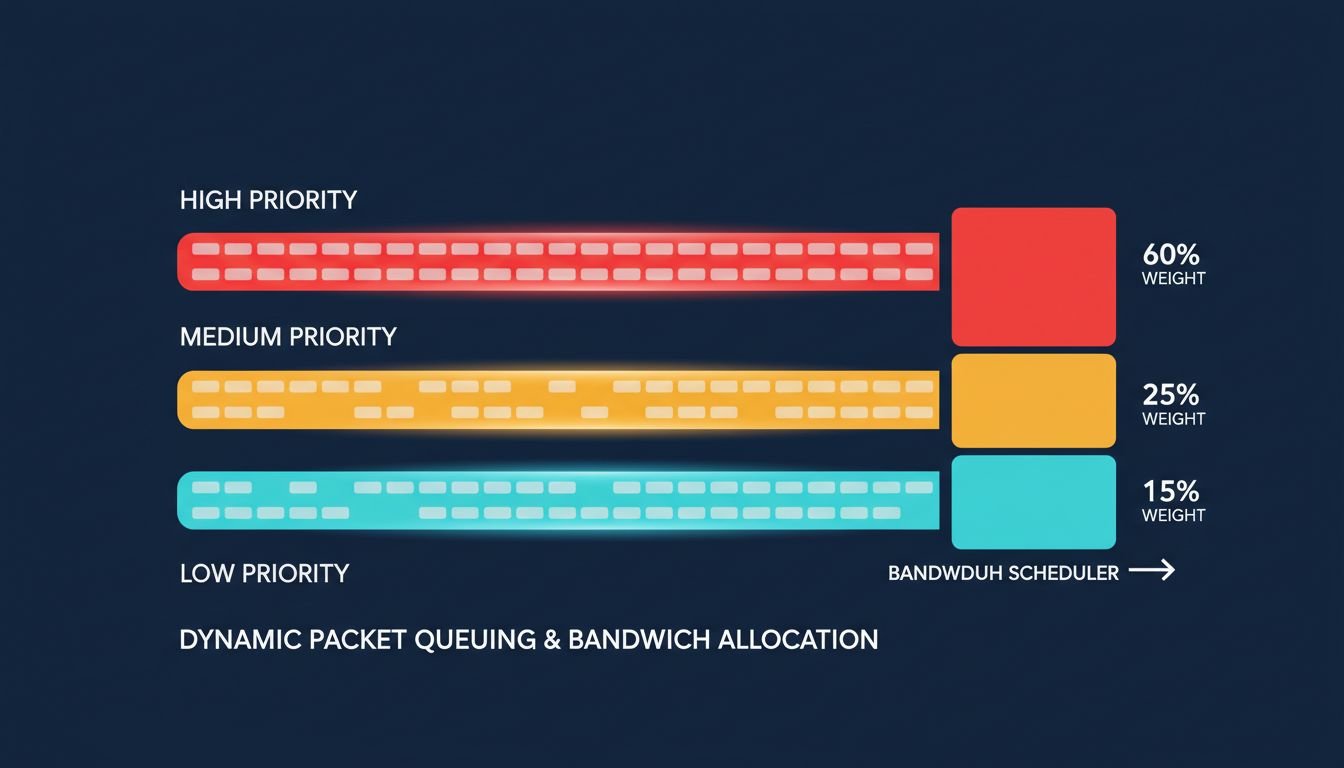

Modern routers don’t maintain a single queue. They maintain multiple queues with different priorities, a system called Quality of Service (QoS).

The way this works in practice: packets get classified when they arrive, based on their IP headers, their DSCP (Differentiated Services Code Point) markings, or the type of traffic. A VoIP packet gets placed in a high-priority queue. A bulk file transfer goes into a best-effort queue. A background software update might land in a low-priority queue that only gets bandwidth when nothing else needs it.
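A minimal sketch of DSCP-based classification follows. The DSCP field is the upper six bits of the IP header’s TOS (Traffic Class) byte; the code points used here are the standard Expedited Forwarding value (46) and CS1 (8), which has historically been used for low-priority data. The queue names and the mapping itself are assumptions for the example, since each network defines its own policy.

```python
DSCP_EF = 46   # Expedited Forwarding, commonly used for voice
DSCP_CS1 = 8   # Class Selector 1, historically used for low-priority traffic


def dscp_from_tos(tos_byte):
    """Extract the 6-bit DSCP value from the 8-bit TOS / Traffic Class byte."""
    return (tos_byte >> 2) & 0x3F


def classify(tos_byte):
    """Map a packet's TOS byte to a (hypothetical) queue name."""
    dscp = dscp_from_tos(tos_byte)
    if dscp == DSCP_EF:
        return "high"
    if dscp == DSCP_CS1:
        return "low"
    return "best_effort"


print(classify(0xB8), classify(0x20), classify(0x00))
```

Here `0xB8` is the TOS byte a VoIP phone marking EF would send, `0x20` carries CS1, and `0x00` is unmarked traffic.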

The router scheduler then decides which queue to serve and when. A common approach is Weighted Fair Queuing (WFQ), which allocates bandwidth proportionally across queues while preventing any single queue from monopolizing the output port. Strict Priority Queuing is more aggressive: it empties high-priority queues completely before touching lower-priority ones, which keeps latency low for real-time traffic but risks starving lower queues during heavy load.
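The two scheduling styles can be compared with a small sketch. `strict_priority_dequeue` always serves the highest-priority non-empty queue. `FairScheduler` is a simplified finish-tag scheduler in the spirit of WFQ (closer to self-clocked fair queuing than to textbook WFQ): each packet gets a tag of start time plus size divided by weight, and the smallest tag is sent first. The class name, the weights, and the packet sizes are assumptions for illustration.

```python
import heapq
from collections import deque


def strict_priority_dequeue(queues):
    """queues: list of deques, index 0 = highest priority."""
    for q in queues:
        if q:
            return q.popleft()
    return None


class FairScheduler:
    """Simplified weighted fair scheduler based on per-packet finish tags."""

    def __init__(self, weights):
        self.weights = weights                          # queue name -> weight
        self.last_finish = {name: 0.0 for name in weights}
        self.virtual_time = 0.0                         # tag of the last packet sent
        self.heap = []
        self.seq = 0                                    # tie-breaker: arrival order

    def enqueue(self, queue_name, size, packet):
        start = max(self.virtual_time, self.last_finish[queue_name])
        finish = start + size / self.weights[queue_name]
        self.last_finish[queue_name] = finish
        heapq.heappush(self.heap, (finish, self.seq, packet))
        self.seq += 1

    def dequeue(self):
        if not self.heap:
            return None
        finish, _, packet = heapq.heappop(self.heap)
        self.virtual_time = finish
        return packet


sched = FairScheduler({"voice": 3, "bulk": 1})
for i in range(1, 7):
    sched.enqueue("voice", 300, f"v{i}")
for i in range(1, 3):
    sched.enqueue("bulk", 300, f"b{i}")
order = [sched.dequeue() for _ in range(8)]
print(order)
```

With a 3:1 weighting and equal packet sizes, the bulk queue gets one packet out for every three voice packets, so it is slowed but never starved. Under strict priority, the bulk queue would wait until the voice queue was empty.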

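This is why a video call can stay smooth while a large download runs in the background, at least on a network where QoS is configured. They’re in different queues, and the scheduler makes a fresh decision for every packet it sends, which means thousands to millions of times per second depending on link speed.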

The Speed of All This

The timescales involved here are worth appreciating. Modern carrier-grade routers like Cisco’s ASR 9000 series or Juniper’s PTX line can forward packets at rates measured in terabits per second. The packet processing pipeline, including ingress, lookup in the routing table, queue placement, scheduling, and egress, happens in hardware on custom silicon, not in software on a general-purpose CPU.

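Routing table lookups rely on specialized structures: trie-based data structures such as radix trees, or TCAM (ternary content-addressable memory) hardware. Both find the longest prefix that matches a destination IP address among hundreds of thousands of routing entries, and a TCAM can do it in a single lookup cycle because it compares the address against every entry in parallel. Either way, the lookup takes nanoseconds.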
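To make longest-prefix matching concrete, here is a minimal software sketch of a binary trie for IPv4. It walks the destination address one bit at a time and remembers the most specific route seen so far. The class name `PrefixTrie`, the prefixes, and the next-hop labels are assumptions for the example; hardware implementations use compressed, multi-bit structures instead.

```python
import ipaddress


class PrefixTrie:
    """Uncompressed binary trie for IPv4 longest-prefix matching."""

    def __init__(self):
        # Each node is a dict with optional keys "0", "1" (children) and "hop".
        self.root = {}

    def insert(self, prefix, next_hop):
        network = ipaddress.ip_network(prefix)
        bits = format(int(network.network_address), "032b")[:network.prefixlen]
        node = self.root
        for bit in bits:
            node = node.setdefault(bit, {})
        node["hop"] = next_hop

    def lookup(self, address):
        bits = format(int(ipaddress.ip_address(address)), "032b")
        node = self.root
        best = node.get("hop")  # default route, if one was inserted as /0
        for bit in bits:
            node = node.get(bit)
            if node is None:
                break
            # A deeper match is a longer prefix, so it replaces the earlier one.
            best = node.get("hop", best)
        return best


table = PrefixTrie()
table.insert("0.0.0.0/0", "default")
table.insert("10.0.0.0/8", "A")
table.insert("10.1.0.0/16", "B")
print(table.lookup("10.1.2.3"), table.lookup("10.2.0.1"), table.lookup("8.8.8.8"))
```

The address `10.1.2.3` matches all three entries, and the lookup returns the `/16` route because it is the longest match.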

All of this is invisible to you. When you load a webpage, packets are being queued, deprioritized, dropped, and retransmitted in ways that TCP’s congestion control handles automatically. TCP interprets a dropped packet as a signal to slow down, reduces its transmission rate, then gradually increases it again. In its classic form this is the Additive Increase Multiplicative Decrease (AIMD) algorithm, and it’s the mechanism that keeps the internet from collapsing under its own traffic. The congestion signal starts at a router’s buffer, reaches the sender as duplicate or missing acknowledgments, and results in the sender transmitting more slowly. When the loss is detected from duplicate acknowledgments, the whole feedback loop completes in roughly one round-trip time; when the sender has to wait for a retransmission timeout, it takes longer.
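The sawtooth that AIMD produces is easy to see in a toy simulation. This sketch tracks a congestion window in units of packets, adds one per round trip when nothing is lost, and halves it on a loss. The function name, the starting window, and the round in which loss occurs are assumptions; real TCP stacks add slow start, fast recovery, and other refinements not modeled here.

```python
def aimd(rounds, loss_rounds, cwnd=1.0, increase=1.0, decrease=0.5):
    """Return the congestion window after each round trip.

    loss_rounds is the set of round indices in which a loss is detected.
    """
    history = []
    for rtt in range(rounds):
        if rtt in loss_rounds:
            cwnd = max(1.0, cwnd * decrease)  # multiplicative decrease
        else:
            cwnd += increase                  # additive increase
        history.append(cwnd)
    return history


trace = aimd(rounds=8, loss_rounds={4})
print(trace)
```

The window climbs steadily, is cut in half at the loss in round 4, and then resumes climbing from the lower value.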

When the System Breaks Down

Bufferbloat is what happens when router buffers are too large. Rather than dropping packets early to signal congestion, oversized buffers just absorb the excess and create massive queuing delays. Your download throughput looks fine. Your ping time to the same server spikes to hundreds of milliseconds. This makes real-time applications like gaming and video calls miserable even when raw bandwidth seems adequate.
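A back-of-the-envelope calculation shows why buffer size translates directly into latency: a full buffer takes its size divided by the link rate to drain. The buffer sizes and the 10 Mbit/s uplink below are hypothetical numbers chosen for illustration, not measurements from any particular router.

```python
def queuing_delay_seconds(buffer_bytes, link_bits_per_second):
    """Time a packet waits behind a full buffer draining at the link rate."""
    return buffer_bytes * 8 / link_bits_per_second


uplink = 10_000_000  # assumed 10 Mbit/s uplink

large = queuing_delay_seconds(1_000_000, uplink)  # assumed 1 MB buffer
small = queuing_delay_seconds(64_000, uplink)     # assumed 64 KB buffer
print(f"{large * 1000:.0f} ms vs {small * 1000:.1f} ms")
```

Under these assumptions the large buffer adds about 800 ms of delay when full and the small one about 51 ms, while throughput on the saturated link is the same in both cases.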

The problem became widely recognized in the early 2010s, and CoDel was developed specifically to address it. Getting the fix deployed broadly has been slow, because it requires either firmware updates on existing hardware or replacement cycles. Many consumer routers shipped with bufferbloat problems for years.

The deeper point is that the elegance of the system sits alongside its fragility. Packet queueing, priority scheduling, and TCP congestion control are all cooperative mechanisms. They work because every participant in the network follows the same conventions. When devices behave badly, like streaming apps that mark all their traffic as high priority, or TCP implementations that don’t back off properly, they degrade the system for everyone. The internet’s resilience isn’t a property of any single router. It’s an emergent behavior of millions of devices all following the same rules, imperfectly, under pressure.