The assumption is so basic it rarely gets spoken aloud: that the time on one machine matches the time on another. Your bank’s servers assume it when recording transactions. Every distributed database assumes it when deciding which write happened first. The entire architecture of modern computing rests on it.

It is frequently wrong.

This is the story of how Google discovered exactly how wrong, what it cost them to fix it, and why the fix they invented is still, more than a decade later, the most honest thing anyone has ever done with a clock.

The Setup

By the mid-2000s, Google was running into a wall. Their internal databases had scaled impressively, but the company needed something that didn’t yet exist: a relational database that could span multiple continents, offer ACID transactions, and remain consistent without the engineers at each regional datacenter needing to call each other to agree on what time it was.

The problem isn’t exotic. When two servers on opposite sides of the planet need to agree that Transaction A happened before Transaction B, they need a shared sense of time. The naive solution is Network Time Protocol, or NTP, which synchronizes clocks across machines using network round-trip measurements. It works well enough for most purposes. It does not work well enough when the ordering of financial transactions is on the line. Clocks synchronized over NTP can still drift apart by hundreds of milliseconds, and in a high-throughput distributed system, hundreds of milliseconds is an eternity.
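To make the round-trip idea concrete, here is a minimal sketch of the standard offset and delay arithmetic behind an NTP-style exchange. The function name and the example numbers are illustrative assumptions, and a real NTP client does far more filtering than this.

```python
def ntp_offset_and_delay(t0, t1, t2, t3):
    """Estimate clock offset and round-trip delay from one request/response.

    t0: client send time      (client clock)
    t1: server receive time   (server clock)
    t2: server send time      (server clock)
    t3: client receive time   (client clock)
    """
    offset = ((t1 - t0) + (t2 - t3)) / 2
    delay = (t3 - t0) - (t2 - t1)
    return offset, delay


# Hypothetical exchange, all times in milliseconds.
# Assumed ground truth: the server clock is 50 ms ahead of the client,
# the request takes 30 ms to arrive and the reply takes 10 ms.
TRUE_OFFSET = 50
t0 = 1000                      # client sends
t1 = t0 + 30 + TRUE_OFFSET     # server receives, read on the server clock
t2 = t1 + 1                    # server replies 1 ms later
t3 = t0 + 30 + 1 + 10          # client receives, read on the client clock

offset, delay = ntp_offset_and_delay(t0, t1, t2, t3)
error = offset - TRUE_OFFSET   # caused by the asymmetric network path
```

The estimate is only exact when the two network legs take equal time. With an asymmetric path like the one assumed here, the error can be as large as half the round-trip delay, which is one reason the protocol cannot promise tight bounds over an unpredictable network.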

If Machine A thinks it is 12:00:00.000 and Machine B thinks it is 11:59:59.850, and both are recording a transaction at “now,” you have an ordering problem. The database doesn’t know which event actually came first. Pick the wrong answer and you’ve corrupted the ordering of your data. Pick no answer and you’ve stalled the system waiting for certainty that may never come.
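A toy simulation shows how little it takes. The `Machine` class and the numbers below are hypothetical, chosen to mirror the 150-millisecond gap in the example above.

```python
from dataclasses import dataclass


@dataclass
class Machine:
    name: str
    skew_ms: int  # how far this machine's clock sits from true time

    def local_now(self, true_time_ms: int) -> int:
        """What this machine's clock reads at a given true time."""
        return true_time_ms + self.skew_ms


a = Machine("A", skew_ms=0)
b = Machine("B", skew_ms=-150)  # B's clock runs 150 ms behind

# True order of events: A writes at t=1000, B writes 100 ms later at t=1100.
events = [
    ("write from A", a.local_now(1000)),  # stamped 1000
    ("write from B", b.local_now(1100)),  # stamped 950
]

# A database that trusts the timestamps sorts the later write first.
by_timestamp = [name for name, _ in sorted(events, key=lambda e: e[1])]
```

Nothing crashes and nothing logs an error. The later write simply ends up recorded as the earlier one.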

This is the fundamental tension in distributed systems that Leslie Lamport formalized in his landmark 1978 paper on logical clocks: physical time is unreliable, so you need another way to establish causality. Lamport’s solution was elegant and largely theoretical. Google needed something that ran in production at planetary scale.
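For readers who have not seen a logical clock, this is a minimal sketch of the idea: a counter that ignores wall-clock time entirely and only guarantees that a message's receipt is numbered after its send. The class and method names are my own.

```python
class LamportClock:
    """A logical clock: a counter that tracks causality, not wall time."""

    def __init__(self):
        self.counter = 0

    def tick(self):
        """Local event or message send: advance the counter."""
        self.counter += 1
        return self.counter

    def receive(self, sender_counter):
        """Message receipt: move past both our own and the sender's counter."""
        self.counter = max(self.counter, sender_counter) + 1
        return self.counter


a, b = LamportClock(), LamportClock()

a.tick()                     # a does some local work   -> 1
sent = a.tick()              # a sends a message        -> 2
b.tick()                     # b does unrelated work    -> 1
received = b.receive(sent)   # b receives the message   -> 3
```

The counters say nothing about what time it was, which is exactly the limitation a globally distributed database with external clients runs into.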

What They Built

Google’s answer was Spanner, described publicly in a 2012 paper by Corbett et al. The architectural centerpiece was something they called TrueTime.

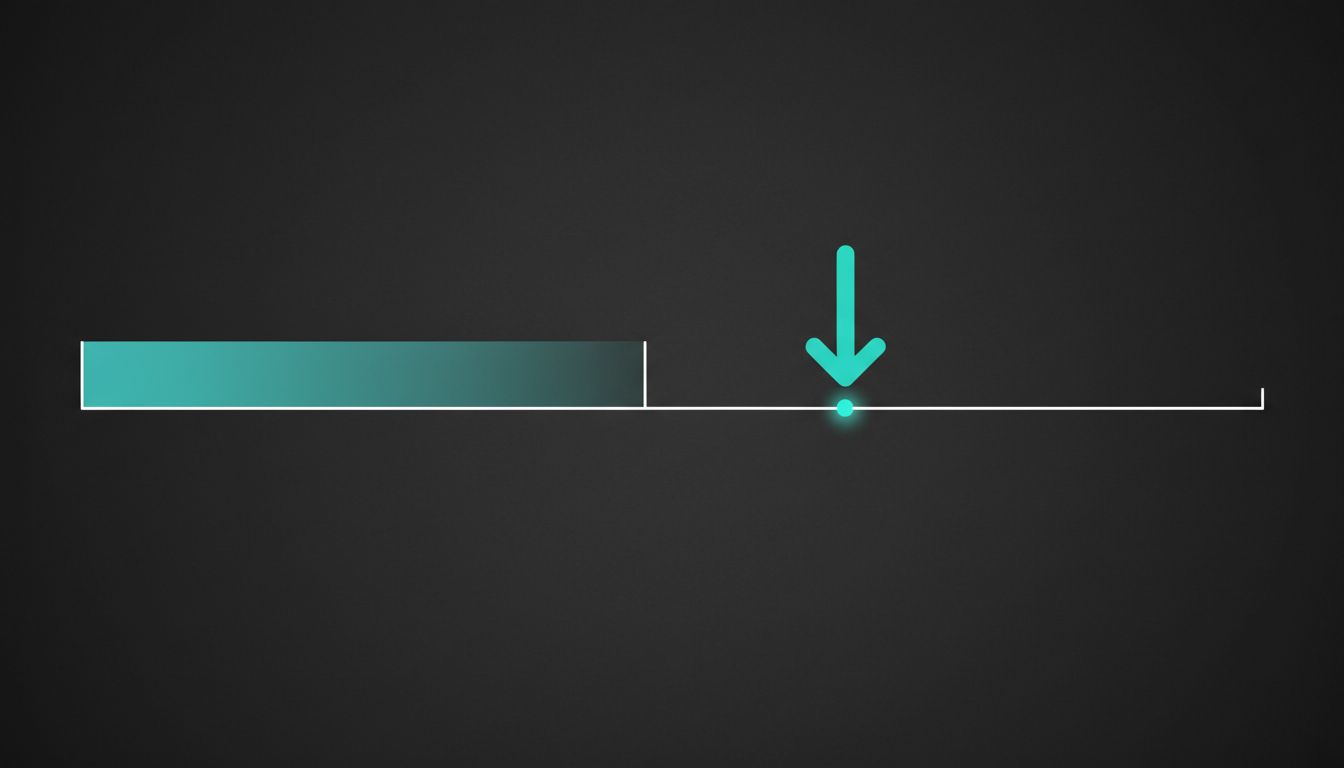

Most timekeeping systems give you a timestamp and pretend it’s precise. TrueTime gives you an interval. When you ask TrueTime what time it is, it returns two values: the earliest it could possibly be, and the latest it could possibly be. The interval represents the system’s honest uncertainty about the current moment. Google achieved sub-ten-millisecond uncertainty by equipping its datacenters with GPS receivers and atomic clocks, using them as authoritative references, and propagating time signals through a carefully designed hierarchy of servers.
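The shape of that API is easy to sketch. The method names `now`, `after`, and `before` mirror the ones described in the Spanner paper, but everything else here is an assumption for illustration: the class names, the fixed `epsilon_s` bound (the real bound varies over time), and the use of the local system clock as the reference.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class TTInterval:
    earliest: float  # the true time is assumed to be no earlier than this
    latest: float    # ...and no later than this


class TrueTimeSketch:
    """Toy stand-in for an interval clock. Not Google's implementation."""

    def __init__(self, epsilon_s, clock=time.time):
        self.epsilon_s = epsilon_s  # assumed fixed uncertainty bound
        self._clock = clock         # injectable so it can be faked in tests

    def now(self):
        t = self._clock()
        return TTInterval(t - self.epsilon_s, t + self.epsilon_s)

    def after(self, t):
        """True only if t has definitely passed."""
        return self.now().earliest > t

    def before(self, t):
        """True only if t has definitely not arrived yet."""
        return self.now().latest < t


# Freeze the reference clock at 100.0 s with a 4 ms bound.
tt = TrueTimeSketch(epsilon_s=0.004, clock=lambda: 100.0)
interval = tt.now()
```

Note that `after(100.0)` and `before(100.0)` are both false at this instant. The clock refuses to say which side of "now" that timestamp falls on, and that refusal is the feature.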

The genius of the design is not the hardware, impressive as it is. The genius is what Spanner does with the uncertainty. Before making a transaction visible, Spanner waits out the uncertainty window. If the TrueTime interval says “it is somewhere between T and T+7ms,” Spanner stamps the transaction with the latest edge of that interval and then waits roughly 7 milliseconds, until even the earliest possible “now” is past that timestamp. By the time the commit completes, its timestamp is unambiguously in the past relative to any subsequent transaction. Google calls this “commit-wait.” It adds latency deliberately, in exchange for correctness that rests on a measured error bound rather than on an assumption that clocks agree.
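Here is a rough sketch of the commit-wait rule, run against a simulated clock so that it finishes instantly. `FakeClock`, `commit_wait`, and the millisecond values are all hypothetical, and the real system overlaps this wait with replication work rather than simply polling.

```python
class FakeClock:
    """Simulated interval clock, in whole milliseconds."""

    def __init__(self, start_ms, epsilon_ms):
        self.t = start_ms
        self.epsilon_ms = epsilon_ms

    def now_interval(self):
        return (self.t - self.epsilon_ms, self.t + self.epsilon_ms)

    def sleep(self, ms):
        self.t += ms  # simulated time passes; nothing actually blocks


def commit_wait(now_interval, sleep, poll_ms=1):
    """Pick a commit timestamp, then wait until it is definitely in the past."""
    _, latest = now_interval()
    commit_ts = latest                       # no earlier than the true time
    while now_interval()[0] <= commit_ts:    # earliest has not passed it yet
        sleep(poll_ms)
    return commit_ts


clock = FakeClock(start_ms=1000, epsilon_ms=4)
commit_ts = commit_wait(clock.now_interval, clock.sleep)
waited_ms = clock.t - 1000
```

With a bound of 4 ms on either side, the interval is 8 ms wide, and the wait comes out at one polling tick more than that. The cost of the guarantee is roughly the width of the uncertainty, which is why shrinking the bound mattered so much.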

The implications are significant. Spanner can provide what Google calls “external consistency,” meaning that if Transaction A commits before Transaction B begins, then A’s timestamp will always be less than B’s. This is a property that most distributed databases cannot offer at all, and the ones that approximate it do so by assuming clocks are more accurate than they are.
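A small simulation makes the property visible. It assumes two nodes whose clocks are skewed in opposite directions but stay within a shared error bound, and compares what happens with and without the wait. The function names, skews, and the 4 ms bound are invented for the example.

```python
EPSILON_MS = 4  # assumed bound: every node's clock error stays within it


def interval(true_ms, skew_ms):
    """Interval a node with this skew would report at this true time."""
    local = true_ms + skew_ms
    return (local - EPSILON_MS, local + EPSILON_MS)


def commit(true_ms, skew_ms, wait):
    """Return (commit timestamp, true time when the commit completes)."""
    ts = interval(true_ms, skew_ms)[1]            # latest possible "now"
    if wait:
        while interval(true_ms, skew_ms)[0] <= ts:
            true_ms += 1                          # one simulated ms passes
    return ts, true_ms


SKEW_A, SKEW_B = 3, -4  # hypothetical skews, both within the bound

# Without commit-wait: B begins 1 ms of true time after A finishes.
ts_a, done_a = commit(1000, SKEW_A, wait=False)
ts_b, _ = commit(done_a + 1, SKEW_B, wait=False)
unsafe_order_ok = ts_a < ts_b

# With commit-wait: same scenario, same skews.
ts_a_w, done_a_w = commit(1000, SKEW_A, wait=True)
ts_b_w, _ = commit(done_a_w + 1, SKEW_B, wait=True)
safe_order_ok = ts_a_w < ts_b_w
```

In the first run, B's transaction starts after A's has finished and still receives the smaller timestamp. In the second, the wait absorbs the skew and the timestamps come out in the order the events actually happened.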

Why It Matters

Spanner launched internally at Google around 2011 and became available externally as Cloud Spanner in 2017. It underpins F1, the database that replaced MySQL as the backend for Google’s advertising systems, and much of the infrastructure that processes a significant portion of the world’s online commerce.

The broader significance is what the Spanner paper admitted out loud: that clock skew is not a theoretical edge case to be handled in the footnotes of your architecture document. It is a first-class problem that, if ignored, produces silent data corruption. Silent corruption is the worst kind because systems built on corrupted foundations can run for months before the inconsistencies become visible, by which point the blast radius is enormous.

Many distributed systems handle this by leaning on consensus algorithms like Paxos or Raft, which sidestep clock agreement by having nodes vote on the ordering of events. This works, but ordering everything through votes adds round trips and complexity as the system spreads across regions. Spanner still uses Paxos to replicate data within each group of replicas. Its bet was that if you invest heavily enough in making time trustworthy, you can also use timestamps as a coordination primitive directly, and the result is simpler and faster at scale.

The lesson landed hard enough that it influenced a generation of database design. CockroachDB, which bills itself as an open-source Spanner alternative, implements its own version of hybrid logical clocks to manage uncertainty. Amazon’s DynamoDB, Aurora, and other services have had public postmortems involving clock-related consistency bugs. The industry eventually stopped treating time as a given.
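For a sense of what a hybrid logical clock does, here is a compact sketch of the general algorithm as it is usually described: each timestamp pairs the highest physical time seen so far with a counter that breaks ties. This is not CockroachDB's code, and the class, method names, and clock values are assumptions for illustration.

```python
class HybridLogicalClock:
    """Timestamps are (l, c): l tracks physical time, c breaks ties."""

    def __init__(self, physical_now):
        self.physical_now = physical_now  # callable returning an int time
        self.l = 0
        self.c = 0

    def send_or_local(self):
        prev_l = self.l
        self.l = max(prev_l, self.physical_now())
        self.c = self.c + 1 if self.l == prev_l else 0
        return (self.l, self.c)

    def receive(self, msg_l, msg_c):
        prev_l = self.l
        self.l = max(prev_l, msg_l, self.physical_now())
        if self.l == prev_l and self.l == msg_l:
            self.c = max(self.c, msg_c) + 1
        elif self.l == prev_l:
            self.c += 1
        elif self.l == msg_l:
            self.c = msg_c + 1
        else:
            self.c = 0
        return (self.l, self.c)


# Node A's wall clock reads 1000; node B's is 150 ms behind.
node_a = HybridLogicalClock(lambda: 1000)
node_b = HybridLogicalClock(lambda: 850)

sent = node_a.send_or_local()     # (1000, 0)
received = node_b.receive(*sent)  # (1000, 1), despite B's slow clock
later = node_b.send_or_local()    # (1000, 2)
```

Even though B's wall clock is behind, everything B does after hearing from A is stamped later than A's message. Unlike TrueTime, this needs no special hardware, but it only orders events that are connected by messages.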

What We Can Learn

The first lesson is that invisible infrastructure has a way of becoming visible at the worst possible moment. Clocks are infrastructure so basic that they don’t appear in most architecture diagrams. When they fail, the failure mode is subtle: your data doesn’t explode, it just quietly becomes wrong.

The second lesson is about the value of encoding uncertainty explicitly rather than hiding it. TrueTime doesn’t pretend to know the exact time. It tells you how wrong it might be and forces the system to account for that wrongness. This is the opposite of most engineering instincts, which push toward false precision because ambiguity is uncomfortable. Google built the ambiguity into the API and got correctness in return. This is the same instinct behind good probabilistic systems generally: if your model has uncertainty, surface it rather than collapsing it to a point estimate.
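In code, surfacing the uncertainty often means letting a comparison return "I don't know." A minimal sketch, with a hypothetical `happened_before` helper that compares two (earliest, latest) intervals:

```python
from typing import Optional, Tuple

Interval = Tuple[float, float]  # (earliest, latest)


def happened_before(a: Interval, b: Interval) -> Optional[bool]:
    """True if a definitely precedes b, False if b definitely precedes a,
    and None when the intervals overlap and the order is unknowable."""
    if a[1] < b[0]:
        return True
    if b[1] < a[0]:
        return False
    return None


clearly_first = happened_before((0, 7), (10, 17))
clearly_second = happened_before((10, 17), (0, 7))
ambiguous = happened_before((0, 7), (5, 12))
```

A point-estimate clock would have returned a confident answer for the overlapping case too, and it would have been right only by luck. The three-valued answer forces the caller to decide what to do about the ambiguity, whether that means waiting, retrying, or asking another node.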

The third lesson is about the cost of correctness. Building TrueTime required GPS receivers and atomic clocks in every major datacenter. It required Spanner’s engineers to reason carefully about commit-wait latency and its effects on throughput. It required a detailed public paper explaining the design so the industry could scrutinize it. Correctness, at scale, is expensive. The companies that try to get it cheaply by pretending clocks are synchronized are, in effect, taking out a debt that accrues silently until it doesn’t.

Spanner’s authors were building a database. What they actually built was an argument that the only honest answer to “what time is it?” is a range, not a number, and that any system which claims otherwise is making a bet it may eventually lose.