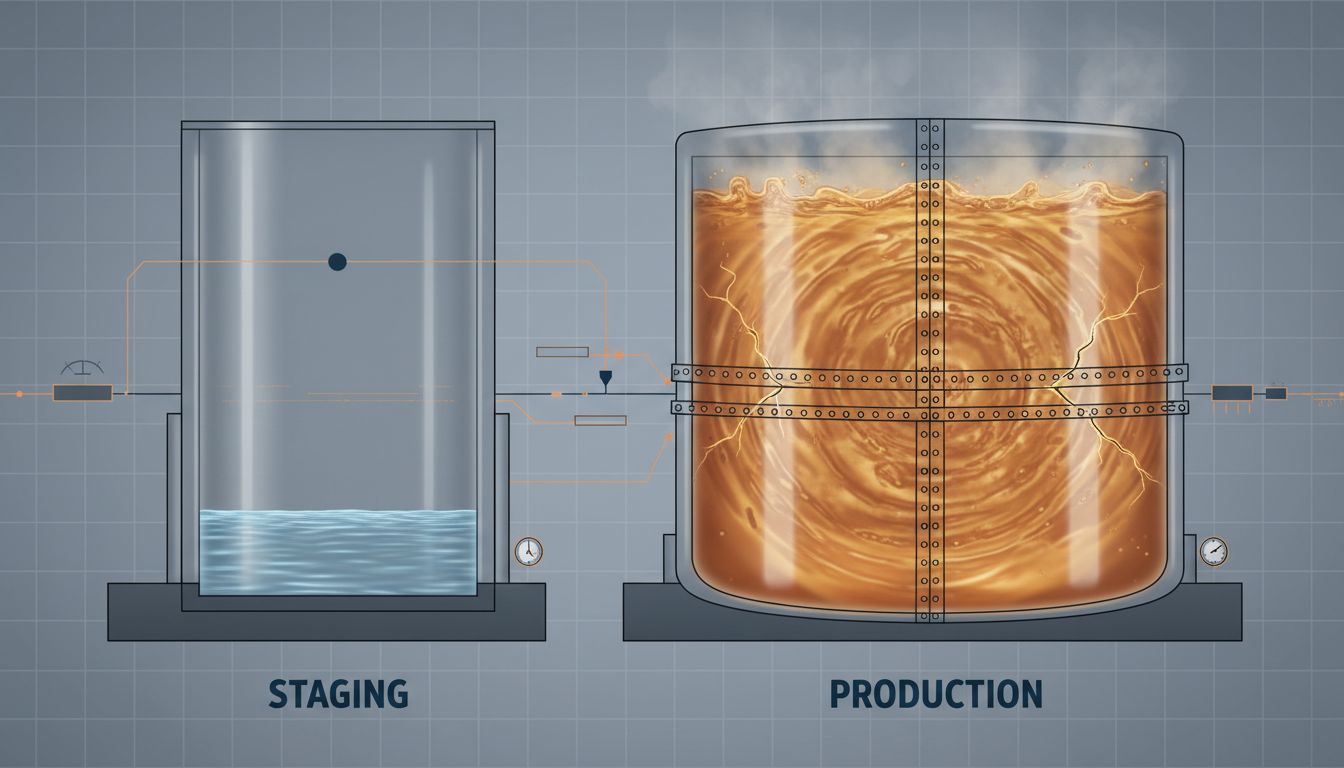

The software industry has convinced itself that staging environments are a safety net. Run your code there first. If it works, ship it. The logic seems airtight until you watch a change that passed every staging check bring down production at 2 a.m. on a Saturday.

This is not a testing failure. It is a modeling failure. Staging environments are not smaller versions of production. They are approximations, and the gaps in that approximation are exactly where outages live.

The Approximation Problem

Every staging environment starts with good intentions and drifts toward fiction. The database has a fraction of the rows. The message queue is empty. The third-party API is pointed at a sandbox that behaves nothing like the real endpoint. The cache is cold. The CDN is misconfigured or absent. The SSL certificate is self-signed. The traffic is synthetic and arrives in a steady trickle rather than in the spiky, bursty patterns that real users produce.

None of these differences feel significant in isolation. Together, they mean that staging is not testing your code against production conditions. It is testing your code against a story you have told yourself about production conditions.

The classic example is database query performance. A query that returns in 12 milliseconds on a staging database with 50,000 rows can take 4 seconds on a production database with 50 million rows, particularly if an index is missing or a query planner makes a different choice at scale. The staging environment passes this test because it was never capable of failing it.
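A query plan can expose this failure even on a small table, because it shows how the database intends to find rows rather than how long the lookup happened to take. The sketch below uses Python's built-in `sqlite3` module so it runs anywhere. The `orders` table, its columns, and the index name `idx_orders_customer` are hypothetical, and production databases such as PostgreSQL have their own `EXPLAIN` formats.

```python
import sqlite3


def query_plan(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> list[str]:
    """Return the human-readable steps of SQLite's EXPLAIN QUERY PLAN output."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    # Each row has four columns; the last one is the readable description of the step.
    return [row[-1] for row in rows]


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
# A deliberately tiny, staging-sized table: any query against it will be fast.
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 500, float(i)) for i in range(1_000)],
)

sql = "SELECT total FROM orders WHERE customer_id = ?"

# Without an index, the plan is a full-table SCAN, whose cost grows with row count.
plan_without_index = query_plan(conn, sql, (42,))

conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")

# With the index, the plan becomes a SEARCH that uses idx_orders_customer.
plan_with_index = query_plan(conn, sql, (42,))

print(plan_without_index)
print(plan_with_index)
```

Checking the plan is not a complete substitute for realistic data volumes: some planners, PostgreSQL among them, may choose a sequential scan on a small table even when a suitable index exists, so a plan captured in staging can still differ from the one chosen in production.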

Configuration Drift Is the Silent Killer

Beyond scale, the more insidious problem is configuration drift. Production environments accumulate changes over time: environment variables added during an incident, feature flags toggled manually, third-party credentials rotated and updated in one place but not another. Staging often does not follow, usually because nobody clearly owns keeping the two environments synchronized.

The result is that the two environments quietly diverge until they are running materially different software, even when they are nominally running the same version. A bug that appears only when a specific environment variable is missing in production will never surface in a staging environment where that variable happens to be set. Neither will a behavior that depends on a production-only secret, a production-only network route, or a production-only queue depth.
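One inexpensive guard against this kind of divergence is to diff the two environments' settings directly. The sketch below assumes you can dump each environment's configuration into a plain key/value mapping. How you obtain those dumps, and every variable name in the example, are hypothetical. Secret keys are checked only for presence, because their values are expected to differ.

```python
from collections.abc import Mapping


def diff_config(
    staging: Mapping[str, str],
    production: Mapping[str, str],
    secret_keys: frozenset[str] = frozenset(),
) -> dict[str, list[str]]:
    """Compare two environments' settings and report where they diverge.

    Keys listed in secret_keys are only checked for presence, because their
    values (credentials, tokens) are expected to differ between environments.
    """
    shared = staging.keys() & production.keys()
    return {
        "only_in_staging": sorted(staging.keys() - production.keys()),
        "only_in_production": sorted(production.keys() - staging.keys()),
        "different_values": sorted(
            key for key in shared
            if key not in secret_keys and staging[key] != production[key]
        ),
    }


staging_env = {
    "DB_POOL_SIZE": "10",
    "PAYMENTS_API_URL": "https://sandbox.example.com",
    "FEATURE_NEW_CHECKOUT": "on",
    "PAYMENTS_API_KEY": "staging-key",
}
production_env = {
    "DB_POOL_SIZE": "50",
    "PAYMENTS_API_URL": "https://api.example.com",
    "PAYMENTS_API_KEY": "production-key",
    "RATE_LIMIT_OVERRIDE": "2000",  # added by hand during an incident
}

report = diff_config(staging_env, production_env, frozenset({"PAYMENTS_API_KEY"}))
for category, keys in report.items():
    print(category, keys)
```

Some differences, such as the pool size here, may be intentional, so a check like this is probably most useful with an explicit list of expected differences. Anything outside that list then becomes a prompt to ask whether the change was ever meant to stay production-only.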

This is not a new observation. Infrastructure-as-code tools like Terraform and configuration management systems like Ansible were built partly to address this problem by making environment definitions explicit and version-controlled. Yet even teams that use these tools carefully find that staging drifts, because production is always one incident response away from a manual change that never makes it back into the codebase.

Traffic Patterns Cannot Be Faked

Some failures only appear under specific concurrency conditions. A race condition in a payment handler might never trigger when one developer is clicking through a staging environment, yet emerge consistently when ten thousand users hit the same endpoint within the same second. A database connection pool that is sized correctly for staging load becomes a bottleneck under production traffic. A memory leak that takes 72 hours of sustained load to become visible will never show up in a staging deploy that lasts 20 minutes before the engineer moves on.
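The payment race is usually a check-then-act bug: the handler reads the balance, decides the withdrawal is allowed, and debits afterwards. The sketch below is a minimal, hypothetical illustration; `Account` and both withdrawal functions are invented for this example. A `threading.Barrier` stands in for unlucky timing and forces every request past the balance check before any debit runs, so the bug reproduces deterministically instead of once in a thousand requests.

```python
import threading


class Account:
    """Hypothetical account with a balance guarded by a lock."""

    def __init__(self, balance: int) -> None:
        self.balance = balance
        self.lock = threading.Lock()


def withdraw_racy(account: Account, amount: int, approved: list[int],
                  barrier: threading.Barrier) -> None:
    # The debit is protected, but the balance check happens outside the lock,
    # so the check-then-act sequence as a whole is not atomic.
    if account.balance >= amount:
        # Test device: hold every request here until all of them have passed
        # the check. Real traffic produces this interleaving only occasionally.
        barrier.wait()
        with account.lock:
            account.balance -= amount
            approved.append(amount)


def withdraw_atomic(account: Account, amount: int, approved: list[int]) -> None:
    # Check and debit happen under the same lock, so they form one atomic step.
    with account.lock:
        if account.balance >= amount:
            account.balance -= amount
            approved.append(amount)


def simulate(concurrent_requests: int, atomic: bool) -> tuple[int, int]:
    """Send concurrent withdrawals of 100 against a balance of 100.

    Returns (number of approved withdrawals, final balance).
    """
    account = Account(balance=100)
    approved: list[int] = []
    barrier = threading.Barrier(concurrent_requests)
    threads = [
        threading.Thread(target=withdraw_atomic, args=(account, 100, approved))
        if atomic
        else threading.Thread(target=withdraw_racy, args=(account, 100, approved, barrier))
        for _ in range(concurrent_requests)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return len(approved), account.balance


print(simulate(1, atomic=False))  # (1, 0): a single user never sees the bug
print(simulate(2, atomic=False))  # (2, -100): two approvals, negative balance
print(simulate(2, atomic=True))   # (1, 0)
```

In a real service, the equivalent fix usually happens in the data store, for example with a transaction or a conditional update, rather than with an in-process lock. The underlying idea is the same: the check and the write have to be one indivisible operation.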

Load testing helps here, but it is another approximation. Synthetic traffic does not reproduce the full distribution of real requests. Real users send malformed inputs, giant payloads, and request sequences that no test author would think to script. The only place to observe real production traffic patterns is production.
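Even the choice of arrival model changes what a load test exercises. The sketch below compares a constant-rate test with a Poisson arrival process that has the same average rate, and counts the busiest one-second window in each. The rate, duration, and seed are arbitrary assumptions. Poisson arrivals are themselves a simplification, and real traffic is often burstier still, which is exactly the paragraph's point.

```python
import random
from collections import Counter


def steady_arrivals(rate_per_s: int, duration_s: int) -> list[float]:
    """Evenly spaced request timestamps, like a constant-rate load test."""
    return [i / rate_per_s for i in range(rate_per_s * duration_s)]


def poisson_arrivals(rate_per_s: float, duration_s: int,
                     rng: random.Random) -> list[float]:
    """Request timestamps with exponential gaps between them (a Poisson process)."""
    t, times = 0.0, []
    while True:
        t += rng.expovariate(rate_per_s)
        if t >= duration_s:
            return times
        times.append(t)


def peak_per_second(times: list[float]) -> int:
    """Largest number of requests landing in any single one-second bucket."""
    return max(Counter(int(t) for t in times).values())


rng = random.Random(7)
steady = steady_arrivals(100, 60)
bursty = poisson_arrivals(100, 60, rng)

print(peak_per_second(steady))  # 100: the test never exceeds its average
print(peak_per_second(bursty))  # exceeds the 100/s average
print(len(bursty) / 60)         # yet the average rate stays close to 100/s
```

Both tests would report roughly the same requests per second, yet only one of them ever pushes a connection pool or thread pool past its average load.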

This is partly why canary deployments and feature flags have become standard practice at companies that operate at meaningful scale. Rather than relying on staging to be a complete proxy for production, these approaches treat production itself as the test environment, but limit the blast radius by controlling which users are exposed to new code. Feature-flag platforms such as LaunchDarkly, along with error-monitoring tools such as Sentry that surface failures in live releases, exist in large part because trusting staging alone failed often enough to justify the investment.
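A common way to control which users are exposed is to hash each user into a stable bucket and enable the new code for a percentage of buckets. The sketch below illustrates that general technique. It is not LaunchDarkly's or any other vendor's actual algorithm, and the flag key and user IDs are hypothetical. Salting the hash with the flag key keeps different flags from always selecting the same users.

```python
import hashlib

BUCKETS = 10_000  # 0.01% granularity


def rollout_bucket(flag_key: str, user_id: str) -> int:
    """Map a user to a stable bucket in [0, BUCKETS) for one flag."""
    # Salting with the flag key keeps cohorts independent across flags.
    digest = hashlib.sha256(f"{flag_key}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % BUCKETS


def is_exposed(flag_key: str, user_id: str, percent: float) -> bool:
    """True if the user falls in the first `percent` of buckets for this flag."""
    # Equivalent to bucket < percent% of BUCKETS, written without division.
    return rollout_bucket(flag_key, user_id) * 100 < percent * BUCKETS


users = [f"user-{i}" for i in range(10_000)]
at_5 = {u for u in users if is_exposed("new-checkout", u, 5)}
at_25 = {u for u in users if is_exposed("new-checkout", u, 25)}

print(len(at_5), len(at_25))  # roughly 500 and 2,500
print(at_5 <= at_25)          # True: widening the rollout never drops users
```

Because the bucket depends only on the flag key and user ID, a user's experience stays consistent across requests, and raising the percentage only adds users to the exposed group.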

The Organizational Dimension

There is also a less technical reason staging fails: it is often nobody’s job to keep it accurate.

Production has an obvious owner. When it breaks, everyone feels it immediately, and accountability is clear. Staging exists in a middle space. Engineers use it but do not maintain it as a primary responsibility. Operations teams focus on production reliability. The result is that staging environments are often under-resourced, under-monitored, and over-trusted.

Teams sometimes run staging on infrastructure that is qualitatively different from production, not just smaller. A production system running on dedicated instances in a specific availability zone may be tested in staging on shared, burstable compute. The performance characteristics are not just different in degree. They are different in kind.

The honest answer to the question of who is responsible for staging accuracy is usually nobody, which explains why it gradually stops being accurate.

What Actually Works

The solution is not to abandon staging. It is to be precise about what staging can and cannot validate.

Staging is good at catching obvious integration failures, broken API contracts, missing configuration keys (when environments are managed carefully), and basic functional correctness. It is not good at catching performance regressions, concurrency bugs, scale-dependent failures, or anything that depends on production data volumes or traffic patterns.

Teams that have moved beyond staging theater tend to use it for the former and progressive delivery strategies for the latter. They deploy to a small percentage of production traffic, monitor error rates and latency against a baseline, and expand the rollout only when the signal is clean. They treat production observability (structured logging, distributed tracing, and real-time alerting) as a first-class engineering investment rather than an afterthought.
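The promotion step can be reduced to a small decision function. The sketch below compares a canary's error rate and p99 latency against the baseline over the same window and then advances, holds, or resets the rollout. The thresholds, the minimum request count, and the rollout schedule are all hypothetical. Production canary-analysis tools generally apply statistical tests rather than these fixed ratios, and collecting the metrics is assumed to happen elsewhere.

```python
from dataclasses import dataclass

# Hypothetical rollout schedule: percent of production traffic on the new version.
ROLLOUT_STEPS = [1, 5, 25, 50, 100]


@dataclass
class WindowMetrics:
    """Aggregated metrics for one version over the same observation window."""
    requests: int
    errors: int
    p99_latency_ms: float

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def evaluate_canary(
    baseline: WindowMetrics,
    canary: WindowMetrics,
    max_error_ratio: float = 1.5,     # tolerate up to 1.5x the baseline error rate
    max_latency_ratio: float = 1.2,   # and up to 1.2x the baseline p99 latency
    error_rate_floor: float = 0.001,  # keeps a near-zero baseline from making one error fatal
    min_requests: int = 500,          # below this, the signal is too noisy to act on
) -> str:
    """Return 'hold', 'rollback', or 'promote' for the current rollout step."""
    if canary.requests < min_requests:
        return "hold"
    error_limit = max(baseline.error_rate * max_error_ratio, error_rate_floor)
    if canary.error_rate > error_limit:
        return "rollback"
    if canary.p99_latency_ms > baseline.p99_latency_ms * max_latency_ratio:
        return "rollback"
    return "promote"


def next_percent(current: int, decision: str) -> int:
    """Advance, keep, or reset the traffic percentage based on the decision."""
    if decision == "rollback":
        return 0
    if decision == "hold":
        return current
    return next((p for p in ROLLOUT_STEPS if p > current), current)


baseline = WindowMetrics(requests=100_000, errors=200, p99_latency_ms=250.0)
healthy = WindowMetrics(requests=2_000, errors=5, p99_latency_ms=260.0)
erroring = WindowMetrics(requests=2_000, errors=20, p99_latency_ms=255.0)

print(evaluate_canary(baseline, healthy))   # promote
print(next_percent(1, "promote"))           # 5
print(evaluate_canary(baseline, erroring))  # rollback
print(next_percent(5, "rollback"))          # 0
```

The `hold` branch matters as much as the thresholds: at 1% of traffic, a canary may simply not have served enough requests for either a clean or a dirty signal to mean anything.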

The staging environment is not a gate. It is a filter, and a coarse one. Treating it as a guarantee is how you end up explaining a Saturday outage to users who had no idea a test environment existed.