The Problem Isn’t Laziness, It’s Ambiguity

Every team thinks they know what “done” means. Ask three engineers on the same team to define it and you’ll get three different answers, often without anyone realizing they disagree. One person means “the code is written.” Another means “it passed review and got merged.” A third means “it’s deployed and users can access it.” These aren’t trivial variations. They represent entirely different assumptions about who owns the gap between those states.

That gap is where a lot of work quietly disappears. A feature is “done” for two weeks before anyone notices it was never deployed to production. A bug fix passes review but sits unmerged because the person who wrote it assumed someone else would handle it. A data pipeline runs successfully in staging and never gets promoted because “done” for the analyst meant “it ran once without errors,” not “it runs reliably on a schedule in the environment where it matters.”

This is not a morale problem. It’s a coordination problem, and it’s solvable with precision rather than motivation.

What a Real Definition of Done Actually Contains

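The Scrum Guide (which many teams nominally follow and actually don’t) defines the Definition of Done as a formal description of the state an increment is in when it meets the quality measures required for the product. In practice, teams write that description as a list of criteria every increment must meet before it can be considered complete. The useful insight isn’t in the Agile framing. It’s in the word “list.”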

“Done” needs to be a checklist, not a feeling. And that checklist needs to be specific enough that two people who’ve never talked can apply it independently and get the same result.

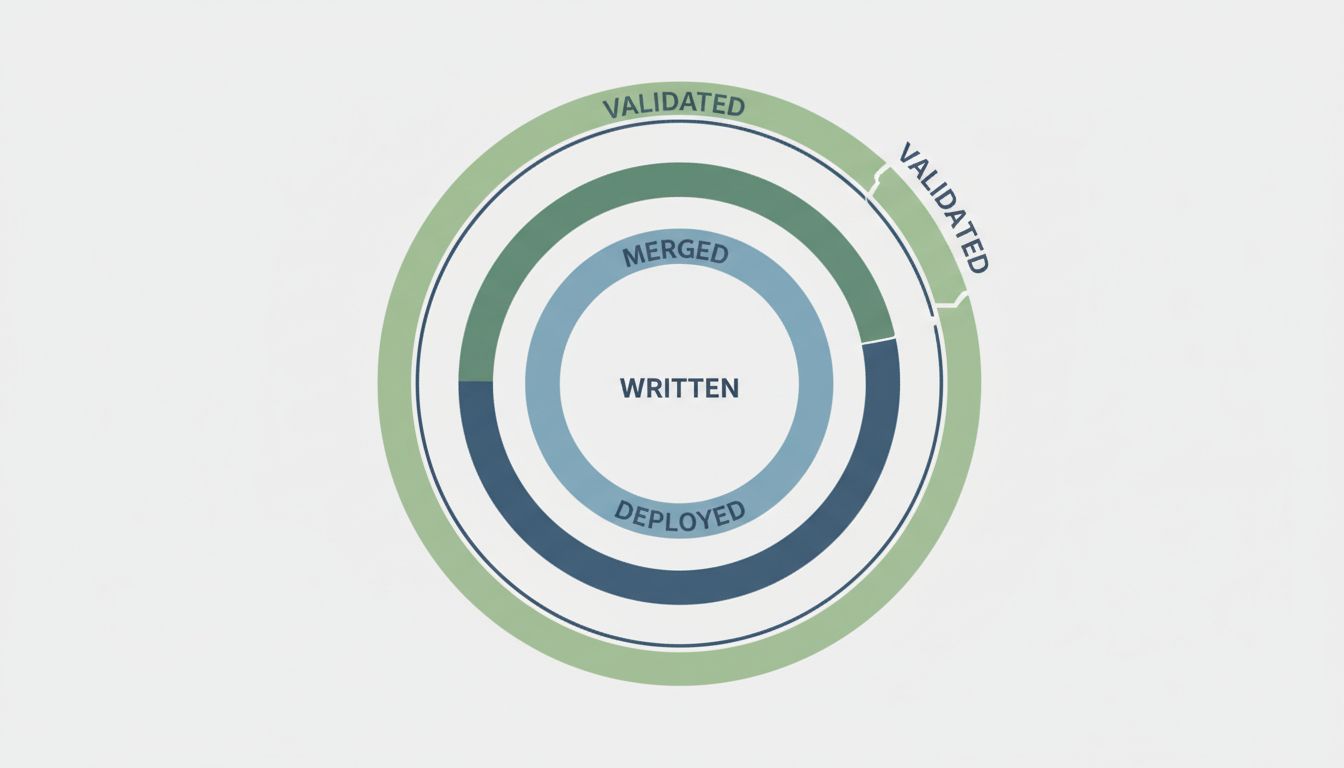

High-output teams tend to structure their definitions around four distinct layers:

Technical completion. The code does what it was specified to do. Tests cover the meaningful paths, not just the happy path. Static analysis and linting pass. If you have type checking, it passes. This layer is about the artifact itself being correct.

Integration. The artifact works within the system it belongs to. It’s been reviewed by at least one person who can speak to correctness and not just style. It’s merged into the appropriate branch. Dependencies it introduces are documented or flagged. This layer is about the artifact belonging to the codebase.

Deployment. The change is running in the target environment. For most teams, that means production or a staging environment that mirrors production closely enough to matter. Observability hooks (logging, metrics, alerting) are in place. A rollback path exists and has been thought through. This layer is about the artifact being real.

Validation. Some signal confirms the change does what was intended at a system level. This might be a metrics check, a user acceptance test, a product review, or an automated smoke test against the deployed environment. This layer is about the artifact being useful.

Most teams have implicit expectations around the first two layers. Very few have explicit, shared expectations around the third and fourth.
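To make the four layers concrete, here is a minimal sketch of how a team might record them against a single piece of work. The names (`Layer`, `WorkItem`, the individual criteria) are illustrative assumptions, not part of any particular tool. The point is that “done” becomes a question about which layer the work has actually reached.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Optional


class Layer(IntEnum):
    """The four layers, ordered from the artifact outward to the user."""
    TECHNICAL = 1
    INTEGRATION = 2
    DEPLOYMENT = 3
    VALIDATION = 4


@dataclass
class WorkItem:
    title: str
    # Maps each layer to its criteria and whether each one is confirmed.
    checks: dict[Layer, dict[str, bool]] = field(default_factory=dict)

    def layer_complete(self, layer: Layer) -> bool:
        items = self.checks.get(layer, {})
        # A layer with no recorded criteria counts as incomplete, not as passed.
        return bool(items) and all(items.values())

    def highest_layer_reached(self) -> Optional[Layer]:
        """Walk the layers in order and stop at the first incomplete one."""
        reached = None
        for layer in Layer:
            if not self.layer_complete(layer):
                break
            reached = layer
        return reached

    def is_done(self) -> bool:
        return self.highest_layer_reached() == Layer.VALIDATION


# Hypothetical work item: merged and reviewed, but not yet in production.
item = WorkItem(
    title="Export invoices as CSV",
    checks={
        Layer.TECHNICAL: {"tests pass": True, "lint and type checks pass": True},
        Layer.INTEGRATION: {"reviewed for correctness": True, "merged": True},
        Layer.DEPLOYMENT: {"running in production": False, "rollback path exists": True},
        Layer.VALIDATION: {"smoke test passed": False},
    },
)

print(item.highest_layer_reached().name)
print(item.is_done())
```

In this example the item has cleared the first two layers and stalled at the third, which is exactly the state most teams would casually call “done.”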

Why Teams Skip the Hard Layers

Deployment and validation are the layers that require coordination across roles. A developer can independently write code, run tests, and get review. Deployment usually involves at least some awareness of infrastructure, release schedules, or feature flags. Validation often involves product managers, QA, or actual users.

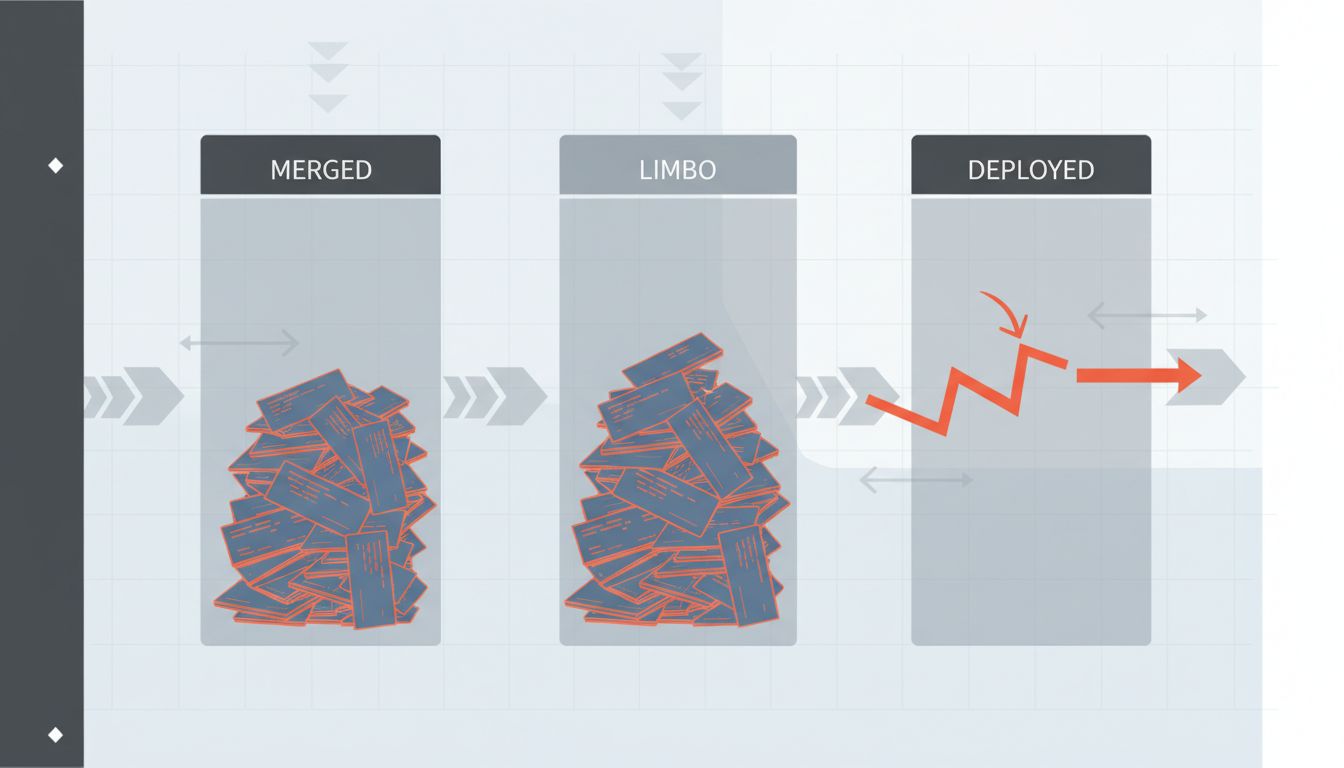

When teams are moving fast, this coordination is the first thing to collapse. The path of least resistance is to call something done when your part is done, which typically means the technical and integration layers. The remaining work becomes an implicit handoff, which is the worst kind.

Implicit handoffs create what some teams call “invisible work in progress.” A feature is technically merged but not deployed. From a velocity standpoint it looks finished. From a user standpoint it doesn’t exist yet. The tracking system says one thing. Reality says another. Over time, this disconnect compounds. You think you shipped eight things last sprint. Users received four of them.
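The gap between the tracker and reality can be measured. The sketch below assumes you can get two facts per ticket: the status the tracker shows and whether the change is actually in production. The ticket keys and field names are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ticket:
    key: str
    tracker_status: str  # what the tracking system says
    in_production: bool  # what the deployment record says


def invisible_wip(tickets: list[Ticket]) -> list[Ticket]:
    """Tickets the tracker counts as finished that users cannot reach yet."""
    return [t for t in tickets if t.tracker_status == "Done" and not t.in_production]


# Hypothetical sprint data.
sprint = [
    Ticket("APP-101", "Done", True),
    Ticket("APP-102", "Done", False),
    Ticket("APP-103", "Done", False),
    Ticket("APP-104", "In Review", False),
]

claimed = sum(t.tracker_status == "Done" for t in sprint)
hidden = invisible_wip(sprint)
print(f"claimed done: {claimed}, reached users: {claimed - len(hidden)}")
print("invisible WIP:", [t.key for t in hidden])
```

Running a report like this at the end of a sprint puts a number on the disconnect instead of leaving it to be discovered later.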

This is distinct from deliberate feature flagging, where you intentionally ship code without exposing it to users. That is a conscious product decision with a specific follow-on task. An implicit handoff is when nobody is quite sure whose job it is to flip the switch, so nobody does.

How High-Output Teams Actually Enforce It

The teams that manage this well share one habit: they treat the Definition of Done as infrastructure, not documentation. It lives in the same places the work lives.

In practice, this means a few concrete things.

Pull request templates include checklist items that correspond directly to the team’s Definition of Done. Not aspirational items like “ensure code is readable” (subjective, unverifiable) but binary items like “staging deployment confirmed” or “alerts configured for new service path.” The template doesn’t enforce completion, but it makes skipping visible.
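As a rough sketch of “making skipping visible,” the snippet below scans a pull request description for Markdown task-list items and reports the ones left unticked. It assumes the template uses the common `- [ ]` / `- [x]` checkbox syntax. The function name and the sample PR body are hypothetical.

```python
import re

# Matches Markdown task-list items such as "- [ ] text" or "- [x] text".
TASK_ITEM = re.compile(r"^\s*[-*]\s+\[([ xX])\]\s+(.*\S)\s*$", re.MULTILINE)


def unchecked_items(pr_body: str) -> list[str]:
    """Return the text of every checklist item left unticked in a PR description."""
    return [text for mark, text in TASK_ITEM.findall(pr_body) if mark == " "]


# Hypothetical PR description built from a Definition of Done template.
pr_body = """\
## Definition of Done
- [x] CI passes (tests, lint, type checks)
- [x] Reviewed for correctness by at least one person
- [ ] Staging deployment confirmed
- [ ] Alerts configured for new service path
"""

missing = unchecked_items(pr_body)
for item in missing:
    print("not confirmed:", item)
```

A check like this could run in CI and post a comment rather than block the merge, which keeps it in the spirit of visibility over enforcement.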

Ticket states in project tracking software map to real pipeline states, not sentiment states. A ticket doesn’t move to “Done” until deployment is confirmed, which often means integrating the ticketing system with deployment tooling so the state transition is automatic rather than manual. Tools like Linear, Jira, and GitHub Projects can all support this with some configuration effort. The teams that do this have fewer “wait, was that deployed?” conversations in standups.
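One way to think about “pipeline states, not sentiment states” is that the ticket state is computed from facts rather than set by hand. The sketch below is tool-agnostic and does not use any real tracker’s API. The state names and the `PipelineFacts` fields are assumptions you would replace with whatever your own pipeline can report.

```python
from dataclasses import dataclass
from enum import Enum


class TicketState(Enum):
    IN_PROGRESS = "In Progress"
    IN_REVIEW = "In Review"
    MERGED = "Merged"
    DONE = "Done"


@dataclass(frozen=True)
class PipelineFacts:
    """Facts reported by tooling, not by the person doing the work."""
    pr_opened: bool = False
    pr_merged: bool = False
    deployment_confirmed: bool = False


def ticket_state(facts: PipelineFacts) -> TicketState:
    """Derive the ticket state from pipeline facts, most advanced state first."""
    if facts.pr_merged and facts.deployment_confirmed:
        return TicketState.DONE
    if facts.pr_merged:
        return TicketState.MERGED
    if facts.pr_opened:
        return TicketState.IN_REVIEW
    return TicketState.IN_PROGRESS


# Merged but not deployed: the ticket cannot show "Done" yet.
merged_only = PipelineFacts(pr_opened=True, pr_merged=True)
print(ticket_state(merged_only).value)

deployed = PipelineFacts(pr_opened=True, pr_merged=True, deployment_confirmed=True)
print(ticket_state(deployed).value)
```

A team that also wants the validation layer reflected in the tracker could add one more fact and one more state in the same way.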

Retrospectives treat Definition of Done violations as bugs, not behavioral failures. When something slipped through, the question is “what’s missing from our checklist” rather than “why didn’t you finish your part.” This matters a lot for culture, because it removes the defensive incentive to claim work is done prematurely to avoid looking slow.

The Scope Problem Nobody Talks About

One thing that makes this harder is that “done” isn’t a single definition. It needs to exist at multiple granularities: the individual task, the user story, the feature, the release. These require different checklists and different owners.

A common failure mode is applying task-level thinking to feature-level work. The individual tasks all have green checkmarks, but no single person has looked at the full feature as a user would experience it. The integration gaps between tasks are nobody’s explicit responsibility.

This is why some teams separate “done” from “releasable.” A task can be done when it passes its own criteria. A feature is releasable only when it meets an additional set of criteria that apply to the whole thing as a unit: end-to-end testing, documentation, performance baselines, whatever the team has decided matters at that level.
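The split between “done” and “releasable” can be sketched as two separate checks: one per task, one for the feature as a unit. The class names and the example feature-level criteria below are illustrative assumptions. Your team decides what belongs at each level.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    name: str
    done: bool  # the task passed its own, task-level criteria


@dataclass
class Feature:
    name: str
    tasks: list[Task]
    # Feature-level criteria that no single task owns.
    feature_checks: dict[str, bool] = field(default_factory=dict)

    def all_tasks_done(self) -> bool:
        return bool(self.tasks) and all(t.done for t in self.tasks)

    def blockers(self) -> list[str]:
        """List everything still standing between this feature and release."""
        open_tasks = [f"task not done: {t.name}" for t in self.tasks if not t.done]
        open_checks = [
            f"feature check failing: {name}"
            for name, ok in self.feature_checks.items()
            if not ok
        ]
        return open_tasks + open_checks

    def releasable(self) -> bool:
        # Requires at least one feature-level check, so "no checks defined"
        # never passes by accident.
        return self.all_tasks_done() and bool(self.feature_checks) and not self.blockers()


# Hypothetical feature: every task is green, but nobody ran it end to end.
feature = Feature(
    name="CSV export",
    tasks=[Task("API endpoint", True), Task("Download button", True)],
    feature_checks={"end-to-end test passes": False, "docs updated": True},
)

print(feature.all_tasks_done())
print(feature.releasable())
print(feature.blockers())
```

Here all the tasks are done and the feature is still not releasable, which is the situation the task-level checkmarks would otherwise hide.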

The teams that make this distinction explicitly tend to have far fewer “it worked in testing” postmortems.

The Uncomfortable Conversation About Definition of Done and Team Incentives

Here’s the thing that’s harder to fix than process: if your team is measured on story points completed or tickets closed, you’ve built a system that rewards claiming work is done. The incentive to define “done” loosely is real. A looser definition makes velocity go up, and nobody asks hard questions until something breaks in production.

Your productivity tools are often measuring the wrong thing when they treat closed tickets as a proxy for shipped value. The number that matters is working, deployed software in front of users. Everything upstream of that is work in progress, whatever the tracker says.

Fixing this requires agreement at a level above the individual team, usually engineering leadership and product together. The Definition of Done is ultimately a contract about what the team is accountable for. If leadership celebrates velocity without asking whether the shipped items are actually in production and working, the team will optimize for the number rather than the reality.

This isn’t cynicism about engineers. It’s how incentive structures work. The fix is changing the measurement, not lecturing people about ownership.

What This Means in Practice

If you want a working Definition of Done rather than a nominal one, the starting point is a conversation with your actual team, not a document from a methodology.

Ask everyone to write down, independently, what they think “done” means for a typical piece of work. Read the answers aloud. The disagreements will surface immediately and they’ll be illuminating. Use those disagreements as the raw material for building the actual checklist.

Make the checklist binary. If a criterion can’t be verified with a yes or no, rewrite it until it can. “Code is well-tested” is not a criterion. “Unit tests cover all branches of the new logic and CI passes” is a criterion.
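One test of whether a criterion is binary is whether you could write it as a function that returns true or false. The sketch below does that for the example criterion above. The `Evidence` fields are assumptions about what a CI run might report, not the output of any specific tool.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Evidence:
    """Facts a CI run could report. Field names are illustrative."""
    new_code_branch_coverage: float  # 0.0 to 1.0
    ci_passed: bool


# Each criterion is a yes/no question about the evidence.
CRITERIA: dict[str, Callable[[Evidence], bool]] = {
    "Unit tests cover all branches of the new logic":
        lambda e: e.new_code_branch_coverage >= 1.0,
    "CI passes": lambda e: e.ci_passed,
}


def evaluate(evidence: Evidence) -> dict[str, bool]:
    """Answer every criterion with a plain yes or no."""
    return {name: check(evidence) for name, check in CRITERIA.items()}


result = evaluate(Evidence(new_code_branch_coverage=0.8, ci_passed=True))
for name, ok in result.items():
    print("yes" if ok else "no ", name)
```

“Code is well-tested” has no obvious function body, which is a good sign it needs rewriting before it goes on the checklist.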

Attach the checklist to the places work actually flows through: PR templates, ticket transitions, deployment runbooks. Don’t rely on people remembering to consult a separate document.

Audit it quarterly. The right Definition of Done for a team of three moving fast is different from the right one for a team of fifteen with regulatory requirements. The definition should evolve with the team, which means it needs deliberate review rather than slow drift.

And accept that a more rigorous definition will, at first, feel like it slows things down. It doesn’t. It makes the actual speed visible. Work that looked done and wasn’t was always going to require more time. You’re just moving that time to where it’s less expensive to spend it.