Indexes are the first thing engineers reach for when a query is slow. Add an index, watch the query plan improve, close the ticket. The mental model is clean: indexes speed things up. The reality is messier, and if you’re running a write-heavy application or a table with a complicated mix of query patterns, some of your indexes are actively costing you performance.

This isn’t a knock on indexing. It’s a case for understanding what indexes actually do to your database, not just what they do for your SELECT statements.

1. Every Index Is a Write Tax

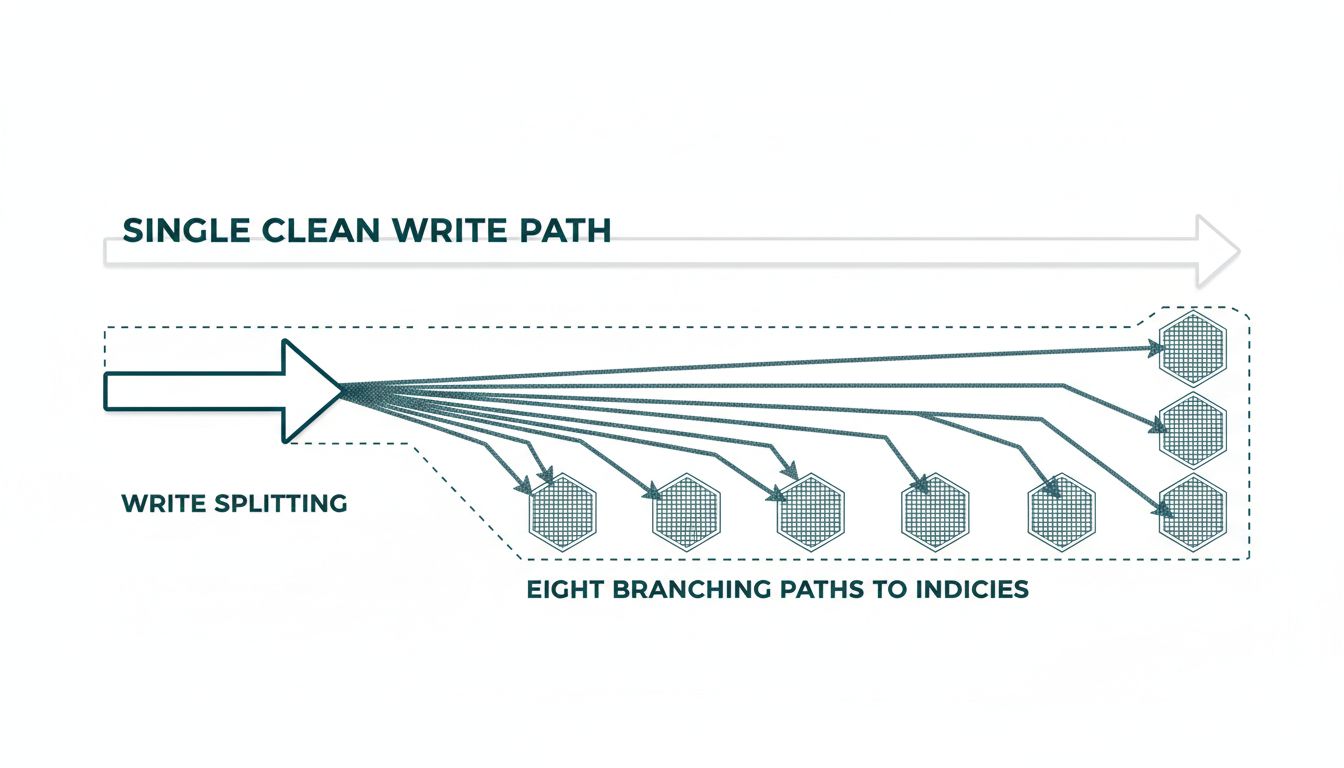

When you insert a row, your database doesn’t just write to the table. It writes to every index on that table. A table with eight indexes requires eight additional write operations per insert. On a table receiving thousands of inserts per second, this is not overhead you can ignore.

The PostgreSQL documentation is explicit about this tradeoff: indexes speed up data retrieval but slow down data modification. MySQL’s InnoDB engine maintains a change buffer specifically to defer secondary index updates because the synchronous cost would be prohibitive. These are not edge cases the database vendors are protecting against. They’re the normal operating condition of heavily indexed tables.

The practical implication: if you’re debugging slow inserts or updates, check your index count before you check your hardware. Many engineers who’ve been through this particular fire remember the day they dropped an index and watched their write throughput improve noticeably.

2. Low-Cardinality Indexes Often Hurt More Than They Help

Cardinality is the number of distinct values in a column. A boolean column has cardinality two. A status column with values like ‘active’, ‘inactive’, and ‘pending’ might have cardinality three. Indexing these columns is almost always a mistake.

Here’s why. When the query planner evaluates whether to use an index, it estimates how many rows the index will eliminate. If your users table has a million rows and half of them are ‘active’, an index on the status column pointing to ‘active’ doesn’t help you find a small haystack. It returns 500,000 rows, and the database has to fetch all of them from the heap anyway. At that point, a sequential scan would have been faster, because sequential I/O is substantially cheaper than the random I/O pattern an index forces.

Query planners in mature databases like PostgreSQL will often choose to ignore a low-cardinality index and do the sequential scan anyway. When that happens, your index isn’t just useless. It’s costing you write overhead for zero read benefit.

3. Composite Index Column Order Is Not Arbitrary

A composite index on (last_name, first_name) is not the same as an index on (first_name, last_name). The first index can serve queries filtering on last_name alone, or on last_name and first_name together. It cannot efficiently serve queries filtering on first_name alone. This is the leftmost prefix rule, and violating it is one of the most common sources of unexpectedly slow queries on tables that appear well-indexed.

The problem compounds when engineers add single-column indexes to compensate for composite indexes that aren’t serving their queries. You end up with overlapping indexes that each impose write costs, cover partially redundant cases, and leave the query planner with more choices to evaluate, which itself has a cost. Index bloat of this kind tends to accumulate gradually, usually because removing an index feels risky while adding one feels safe. The asymmetry is worth pushing back on, and it connects to a broader principle: the most valuable engineering work is often deletion, not addition.

4. Unused Indexes Are Surprisingly Common and Easy to Find

PostgreSQL tracks index usage statistics in the pg_stat_user_indexes view. The idx_scan column tells you how many times each index has been used by the query planner. In many production databases, a meaningful fraction of indexes have idx_scan counts at or near zero. These are indexes that were added speculatively, or to support a query pattern that changed, or because someone was debugging a slow query and forgot to clean up their experiment.

MySQL’s performance_schema provides equivalent data. The point is that the information is available and almost never reviewed systematically. Running a periodic audit of index usage is straightforward, takes minutes, and regularly uncovers indexes that can be dropped without any negative impact on read performance, while immediately improving write throughput.

5. Indexes on Frequently Updated Columns Fragment Over Time

B-tree indexes, which are the default in most relational databases, maintain their sorted structure through page splits. When you update an indexed column, the database removes the old entry and inserts a new one, potentially triggering a page split to maintain sort order. Over time, on columns that change frequently, this produces index bloat: pages that are only partially filled, wasting storage and forcing more I/O per scan.

The fix is periodic index rebuilding (REINDEX in PostgreSQL, OPTIMIZE TABLE in MySQL), but many teams don’t have this in their maintenance routines. The symptom is an index that looks correct in schema review but performs worse than expected in practice, and degrades further as the table grows. If you’re indexing a column like updated_at or last_login that changes on nearly every write, you should expect this problem and plan for it.

6. Partial Indexes Cover Less Ground but Cost Much Less

If your application queries a table primarily by a filtered subset, a partial index covering only that subset is smaller, faster to scan, and cheaper to maintain than a full index. A classic example: if your orders table has millions of historical records but your application mostly queries for orders with status = ‘open’, and open orders are maybe five percent of the table, a partial index with a WHERE status = ‘open’ clause is roughly twenty times smaller than a full index on status.

Partial indexes are supported in PostgreSQL and several other databases. They’re underused, probably because they require a more precise understanding of your query patterns than a blanket full-column index does. That precision is the point. An index built around how your application actually queries the data is almost always more effective than one built around how the schema happens to be structured.

The engineers who manage databases well share a habit: they treat every index as a liability until proven otherwise. The question isn’t whether an index could theoretically help a query. It’s whether it provably does, at a write cost the application can afford, without redundancy that adds maintenance weight. Most databases will tell you exactly which indexes are earning their keep. The information is there. You just have to look at it.