The Network Was Built to Lose Packets

The internet does not guarantee delivery. This is not a bug or an oversight from the 1970s that no one got around to fixing. It is a deliberate architectural choice, and understanding why reveals something genuinely surprising about how reliable communication gets built on top of a fundamentally unreliable substrate.

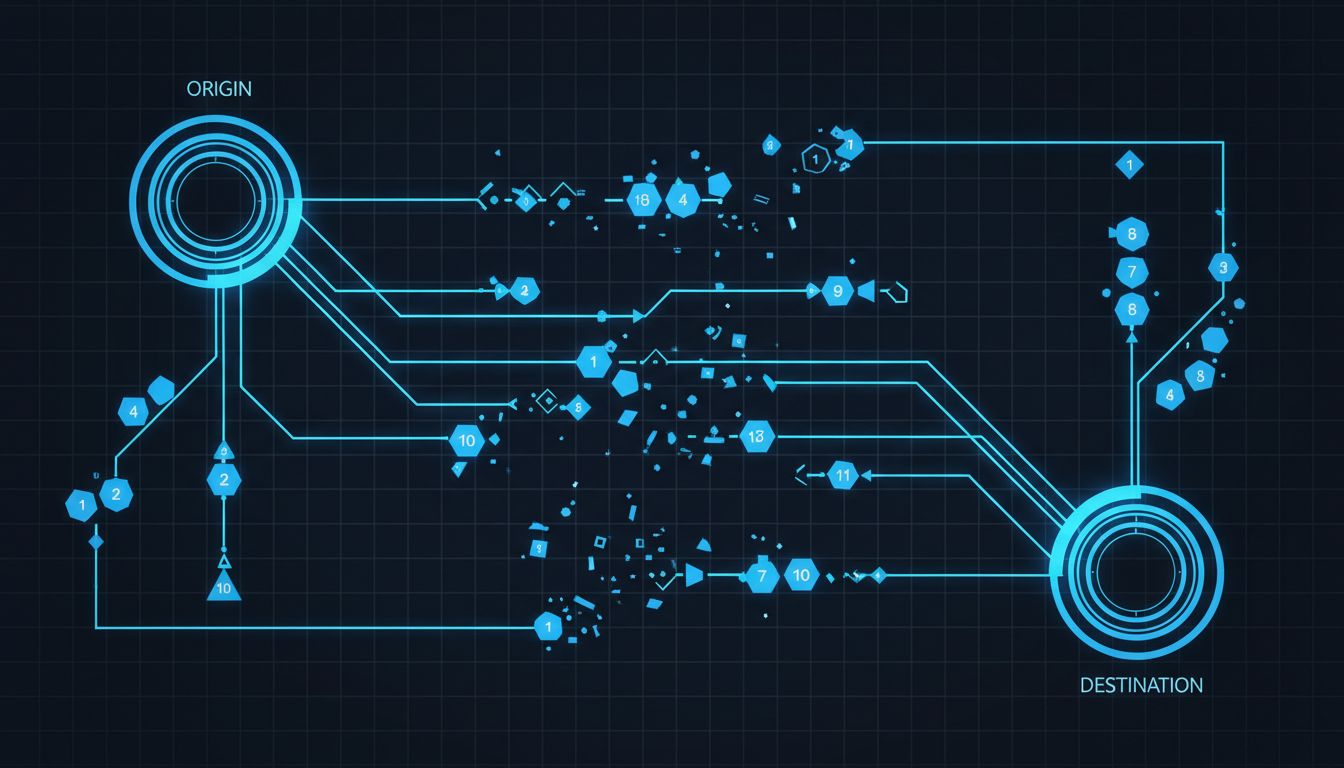

When you send a message, a video frame, or a database query across the internet, that data does not travel as a single coherent stream from point A to point B. It gets chopped into discrete packets, each one numbered and sent independently. Those packets may take completely different physical routes, passing through routers in different cities, crossing different undersea cables, arriving out of order or not at all. The system tolerates this. The genius is in how.

What IP Actually Does (and Doesn’t Do)

The Internet Protocol handles addressing and routing. It stamps each packet with a source address, a destination address, and a time-to-live counter that decrements at each router hop, so that packets caught in a routing loop eventually expire rather than circulating forever. IP does not verify whether packets arrive. It does not reorder them. It does not retransmit lost ones. It is, by design, a best-effort delivery mechanism.
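The time-to-live rule is simple enough to model directly. The sketch below is illustrative only: `Packet`, `forward`, and `hops_survived` are hypothetical names, and the model ignores everything about real routing except the counter.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Packet:
    src: str
    dst: str
    ttl: int
    payload: bytes


def forward(packet: Packet) -> Optional[Packet]:
    """Model one router hop: decrement the TTL, drop the packet at zero."""
    remaining = packet.ttl - 1
    if remaining <= 0:
        return None  # expired; a real router would typically report this via ICMP
    return Packet(packet.src, packet.dst, remaining, packet.payload)


def hops_survived(packet: Packet, max_hops: int = 1000) -> int:
    """Count how many routers forward a packet stuck in an endless loop."""
    hops = 0
    current: Optional[Packet] = packet
    while hops < max_hops:
        current = forward(current)
        if current is None:
            break
        hops += 1
    return hops


looping = Packet("192.0.2.1", "198.51.100.7", ttl=64, payload=b"hello")
print(hops_survived(looping))  # 63: the 64th router discards it
```

Nothing in this model notifies the destination or retries the send. The packet simply disappears, which is the whole of IP’s contract.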

This is where most explanations of TCP/IP stop, leaving the impression that reliability just happens somehow. The actual mechanism lives one layer up, in the Transmission Control Protocol, and it is worth understanding precisely because it solves a hard problem in an economical way.

Every TCP connection begins with a three-way handshake: the sender transmits a SYN packet, the receiver responds with SYN-ACK, and the sender confirms with ACK. Only then does data flow. This exchange establishes sequence numbers, which both sides use to track exactly which bytes have been sent and received. Every byte in a TCP stream has an assigned position in this sequence. When the receiver sends an acknowledgment, it is not confirming a packet arrived. It is confirming that all bytes up to a specific sequence number have been received in order.

This distinction matters. If packet 4 in a sequence arrives before packet 3, the receiver buffers packet 4 and waits. Its acknowledgment still names the last byte received in order, because a cumulative acknowledgment that covered packet 4 would tell the sender the gap had been filled. The sender retransmits packet 3 when its retransmission timer expires, or sooner if a run of duplicate acknowledgments signals the gap. Order is reconstructed before the data ever reaches the application.
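A toy receiver makes the cumulative acknowledgment rule concrete. This is a minimal sketch, not how any real TCP stack is written: the `Receiver` class is hypothetical, segments are assumed never to overlap partially, and selective acknowledgment (an optional TCP extension) is left out.

```python
class Receiver:
    """Toy TCP receiver: buffers out-of-order segments, acknowledges cumulatively."""

    def __init__(self, initial_seq: int = 0) -> None:
        self.next_expected = initial_seq  # next in-order byte still missing
        self.out_of_order = {}            # starting sequence number -> held-back data
        self.delivered = bytearray()      # bytes already handed to the application

    def receive(self, seq: int, data: bytes) -> int:
        """Accept one segment and return the cumulative ACK number."""
        if seq > self.next_expected:
            # A gap precedes this segment: hold it, acknowledge nothing new.
            self.out_of_order[seq] = data
        elif seq == self.next_expected:
            self.delivered += data
            self.next_expected += len(data)
            # The gap may now be filled; drain any segments that have become contiguous.
            while self.next_expected in self.out_of_order:
                chunk = self.out_of_order.pop(self.next_expected)
                self.delivered += chunk
                self.next_expected += len(chunk)
        # seq < next_expected: duplicate of data already delivered; ignore it.
        return self.next_expected


r = Receiver()
print(r.receive(0, b"AAAA"))  # 4
print(r.receive(8, b"CCCC"))  # 4 again: a duplicate ACK, the gap at byte 4 remains
print(r.receive(4, b"BBBB"))  # 12: the gap is filled and the buffered segment drains
print(bytes(r.delivered))     # b'AAAABBBBCCCC'
```

The repeated `4` is the signal the sender acts on: the receiver is alive and still receiving, but it is missing the bytes that start at position 4.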

The Checksum Problem and How It Gets Solved

Sequencing handles order and retransmission. But what about corruption? A packet can arrive on time and in order and still contain flipped bits, the result of electrical interference, a faulty router, or a cosmic ray striking a RAM cell (which happens more often than most people expect, particularly at scale).

TCP carries a checksum field in every segment header. The sender computes this value over the header and data (plus a pseudo-header of IP addressing fields), and the receiver recomputes it upon arrival. A mismatch means the segment is silently discarded, triggering retransmission just as a lost packet would. The algorithm TCP uses (a 16-bit one’s complement sum) is not cryptographic at all, and it is weak even as an error-detecting code when compared with something like CRC-32. Researchers have documented cases where corrupted data slips through undetected. For applications requiring stronger guarantees, additional checksums at the application layer are the standard answer.
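The arithmetic is short enough to write out, and doing so shows one of its blind spots. The sketch below follows the style of computation described in RFC 1071 but covers only the bytes it is given. It leaves out the pseudo-header, and `internet_checksum` is a name chosen for illustration.

```python
def internet_checksum(data: bytes) -> int:
    """16-bit one's complement of the one's complement sum of 16-bit words."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length input with a zero byte
    total = 0
    for i in range(0, len(data), 2):
        total += (data[i] << 8) | data[i + 1]  # big-endian 16-bit word
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)  # fold carries back into the low bits
    return ~total & 0xFFFF


original = b"\x12\x34\x56\x78"
bit_flip = b"\x12\x35\x56\x78"   # one flipped bit
reordered = b"\x56\x78\x12\x34"  # the same two words, swapped

print(hex(internet_checksum(original)))   # 0x9753
print(internet_checksum(bit_flip) == internet_checksum(original))   # False: detected
print(internet_checksum(reordered) == internet_checksum(original))  # True: missed
```

Because addition is commutative, any reordering of 16-bit words produces the same sum. That is one concrete way corrupted data can pass the check, and it is why application-level hashes or CRCs are layered on top when it matters.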

The wider point is that TCP’s reliability model is layered. No single mechanism covers every failure mode. Sequence numbers handle order and loss. Checksums handle most corruption. Window-based flow control prevents a fast sender from overwhelming a slow receiver. Congestion control algorithms (Reno, CUBIC, BBR) prevent a fast sender from overwhelming the network itself. These mechanisms interact continuously during a connection, adjusting in real time based on observed behavior.

Why the Internet Doesn’t Collapse Under Its Own Load

The congestion control piece deserves more attention than it typically gets. Without it, a network under load would behave like a highway where every driver accelerates when they see traffic, causing crashes. TCP’s congestion control does the opposite: when a sender detects packet loss (a signal that some router along the path has run out of buffer space and started dropping), it reduces its transmission rate. This collective behavior, replicated across billions of simultaneous connections, is what keeps the internet from collapsing into congestion.
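The classic form of this back-off is additive increase, multiplicative decrease (AIMD), the rule behind Reno-style congestion avoidance. The sketch below is a deliberately reduced model: the `aimd` function and its parameters are hypothetical, the window is counted in whole segments per round trip, and slow start, timeouts, and fast recovery are all omitted.

```python
def aimd(loss_rounds, rounds, cwnd=1.0, increase=1.0, decrease=0.5):
    """Return the congestion window (in segments) at the start of each round trip."""
    history = []
    for rnd in range(rounds):
        history.append(cwnd)
        if rnd in loss_rounds:
            cwnd = max(1.0, cwnd * decrease)  # multiplicative decrease on loss
        else:
            cwnd += increase                  # additive increase per loss-free round
    return history


print(aimd(loss_rounds={5}, rounds=8))
# [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 3.0, 4.0]
```

The sawtooth in the output is the point. Each sender probes upward slowly and retreats sharply, so competing flows that share a bottleneck tend toward a fair split rather than an arms race.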

BBR (Bottleneck Bandwidth and Round-trip propagation time), developed at Google and deployed widely after 2016, changed the model. Rather than inferring congestion from packet loss, BBR actively probes the network to estimate the bottleneck bandwidth and the minimum round-trip time, then paces its sending to match that bandwidth while keeping the data in flight close to the product of the two. Google reported significant throughput improvements on long-distance connections after deploying BBR, particularly on paths with high latency and some packet loss, where older algorithms were overly conservative.
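The two quantities in BBR’s name can be estimated from acknowledgments alone. The sketch below shows only that estimation step and is not BBR itself: `PathModel` is a hypothetical class, it uses one shared sample window where the real algorithm uses separate windows for bandwidth and round-trip time, and it has none of BBR’s probing phases or pacing logic.

```python
from collections import deque


class PathModel:
    """Toy estimator for bottleneck bandwidth, minimum RTT, and their product."""

    def __init__(self, window: int = 10) -> None:
        self.rates = deque(maxlen=window)  # recent delivery-rate samples, bytes/second
        self.rtts = deque(maxlen=window)   # recent round-trip samples, seconds

    def on_ack(self, delivered_bytes: int, interval_s: float, rtt_s: float) -> None:
        """Record one sample: bytes acknowledged over an interval, plus the measured RTT."""
        self.rates.append(delivered_bytes / interval_s)
        self.rtts.append(rtt_s)

    @property
    def bottleneck_bw(self) -> float:
        return max(self.rates)  # the fastest recent delivery rate approximates the bottleneck

    @property
    def min_rtt(self) -> float:
        return min(self.rtts)   # the shortest recent RTT approximates the queue-free path delay

    @property
    def bdp_bytes(self) -> float:
        return self.bottleneck_bw * self.min_rtt  # bandwidth-delay product


path = PathModel()
path.on_ack(delivered_bytes=400_000, interval_s=0.5, rtt_s=0.080)
path.on_ack(delivered_bytes=500_000, interval_s=0.5, rtt_s=0.050)
path.on_ack(delivered_bytes=450_000, interval_s=0.5, rtt_s=0.065)
print(path.bottleneck_bw, path.min_rtt, path.bdp_bytes)
```

With these made-up samples the estimate is about 1 MB/s and 50 ms, so roughly 50 kB in flight fills the path without building a queue. A lost packet does not change either estimate, which is why this approach holds up on lossy long-distance links.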

None of this is visible to the applications sitting above TCP. A database connection, a video stream, or an HTTP request simply receives a reliable, ordered byte stream. The complexity is entirely encapsulated.

The Cost of Reliability, and When to Avoid It

TCP’s guarantees are not free. The in-order delivery guarantee means that if one packet in a stream is lost, every byte behind it is held back from the application until the retransmission arrives, a problem known as head-of-line blocking. In applications where old data is worthless (live video, online gaming, real-time sensor feeds) this is the wrong trade. A retransmitted video frame from 200 milliseconds ago is less useful than accepting the gap and moving on.

This is why UDP exists and why it has seen a resurgence. QUIC, the protocol underlying HTTP/3, runs over UDP and implements its own selective reliability: streams within a single connection are independent, so a lost packet on one stream does not block others. Google began developing QUIC around 2012, and it is now responsible for a substantial portion of internet traffic. It carries the core insight of TCP (sequencing, flow control, congestion management) while shedding the parts that make TCP poorly suited to modern web applications.
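The difference between one shared sequence space and independent streams can be shown with a small counting model. It is only an illustration: the function names are hypothetical, packets are modeled as `(stream, offset)` pairs, and retransmission, real QUIC framing, and timing are all ignored.

```python
from collections import defaultdict


def in_order_prefix(received) -> int:
    """Length of the contiguous run 0, 1, 2, ... present in `received`."""
    n = 0
    while n in received:
        n += 1
    return n


def delivered_one_stream(sent, lost) -> int:
    """TCP-like: every packet shares one sequence space, numbered by send order."""
    received = {i for i in range(len(sent)) if i not in lost}
    return in_order_prefix(received)


def delivered_per_stream(sent, lost) -> dict:
    """QUIC-like: each stream keeps its own sequence space."""
    received = defaultdict(set)
    for i, (stream, offset) in enumerate(sent):
        if i not in lost:
            received[stream].add(offset)
    return {stream: in_order_prefix(received[stream]) for stream in {s for s, _ in sent}}


# Two streams interleaved on one connection; the very first packet is lost.
sent = [("a", 0), ("b", 0), ("a", 1), ("b", 1), ("a", 2), ("b", 2)]
lost = {0}

print(delivered_one_stream(sent, lost))  # 0: five packets arrived, none can be delivered
print(delivered_per_stream(sent, lost))  # stream "b" delivers all 3; only "a" waits
```

In the shared-sequence case a single loss strands everything behind it. With per-stream ordering, the loss costs only the stream it belongs to.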

The underlying logic here connects to something broader in system design: reliability guarantees should match what the application actually needs, not default to the maximum available. Overbuilding for reliability has real costs in latency and throughput. Compression is a similar discipline, where the instinct to apply more of it often carries a hidden cost.

What makes TCP/IP worth studying is not that it is the final answer to reliable networking. It is that it demonstrates a consistent principle: rather than trying to make the underlying network infallible, the protocol accepts failure as the default condition and builds correctness on top of it. That approach has proven more durable than any attempt to engineer away the failures directly.