The Instinct That Makes This Worse

When a bug surfaces in production, the first instinct is to fix it fast and move on. The bug gets patched, a regression test gets written for that specific failure, and the team declares victory. This ritual feels responsible. It is, in a narrow sense. But it systematically misses the point.

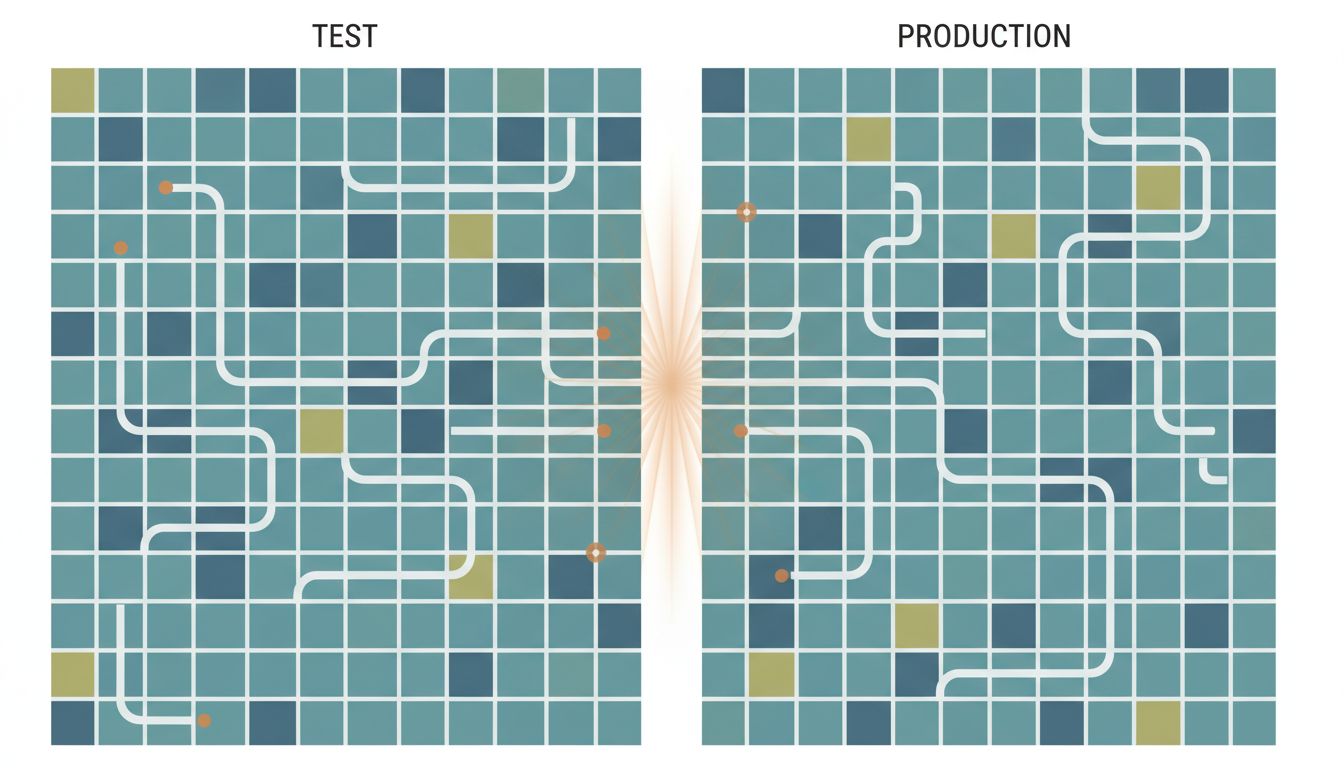

A bug that only appears in production is not primarily a bug. It is a measurement failure. Your test suite claimed the system was working. Production disagreed. The gap between those two verdicts is the real thing worth examining, and most teams never examine it.

This distinction matters because the fix-and-regress cycle produces test suites that are very good at not failing in the same way twice, while remaining perfectly capable of failing in new ways. The suite grows. Confidence grows with it. The production incident rate stays stubbornly flat.

What Tests Actually Measure (and What They Don’t)

Tests measure whether your code behaves a certain way under conditions you anticipated when you wrote the test. That is all they measure. The coverage percentage, the green build, the CI pipeline completing in under four minutes: none of these tells you whether your tests are asking the right questions.

The most common failure mode is not inadequate coverage of known paths. It is the complete absence of tests for conditions that only exist in production. Consider a few categories:

Concurrency. Unit tests typically run one operation at a time against an in-memory object. Production systems run thousands of operations simultaneously against shared state. Race conditions, double-submits, and lock contention are nearly invisible in test environments that run serially.

Data shape. Test fixtures are written by developers who understand the system. Real user data is written by people who don’t. Nobody wrote a test for the user who puts an emoji in a name field, submits a form twice by clicking the button fast, or pastes 40,000 characters into a text box, because nobody anticipated that user.

Environmental drift. Third-party services behave differently at scale, under rate limits, or with real authentication tokens. A mock that always returns a 200 in under 50 milliseconds tells you nothing about how your code behaves when the payment processor times out.

State accumulation. Fresh test databases don’t have four years of inconsistent data in them. Production databases do. A query that runs fine against clean test data can behave unexpectedly against the actual distribution of values your users have created.
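The environmental-drift category above can be made concrete with a small sketch. It uses Python’s standard `unittest.mock`; the `charge_order` function, the `PaymentTimeout` exception, and the shape of the client response are all hypothetical names invented for illustration, not any real payment processor’s API. The point is only that a mock can be configured to fail the way a real dependency might, rather than always succeeding instantly.

```python
from unittest import mock


class PaymentTimeout(Exception):
    """Hypothetical error raised when the processor does not answer in time."""


def charge_order(client, order_id, amount_cents):
    """Hypothetical charge logic: map processor behavior to an order state."""
    try:
        response = client.charge(order_id=order_id, amount_cents=amount_cents)
    except PaymentTimeout:
        # Outcome unknown: do not mark the order paid or failed yet.
        return "pending"
    return "paid" if response["status"] == 200 else "failed"


# The mock most suites have: instant success, every time.
happy_client = mock.Mock()
happy_client.charge.return_value = {"status": 200}

# Mocks that behave like a dependency having a bad day.
slow_client = mock.Mock()
slow_client.charge.side_effect = PaymentTimeout("processor did not respond")

rate_limited_client = mock.Mock()
rate_limited_client.charge.return_value = {"status": 429}

results = {
    "happy": charge_order(happy_client, "order-1", 500),
    "timeout": charge_order(slow_client, "order-1", 500),
    "rate_limited": charge_order(rate_limited_client, "order-1", 500),
}
```

These failure-mode mocks are still assumptions about how the real service fails. They narrow the gap; they do not replace a test against the real integration.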

The Problem With Mocking Your Way to a Green Build

Mock-heavy test suites are the clearest expression of this problem. Mocks let you isolate the unit under test, which is valuable for fast feedback on logic. But when mocks constitute the majority of a test suite’s external interactions, the suite is no longer testing integration. It is testing that your code calls the right functions with the right arguments, given assumptions about how those functions behave that you invented yourself.

This produces a specific failure pattern. Your payment processing code has 94% coverage. Every edge case in your charge logic is tested. The bug that hits production is that your webhook handler and your synchronous charge response both try to fulfill the same order when they arrive within 200 milliseconds of each other. Nothing in your test suite could catch this because nothing in your test suite exercises two concurrent processes against a shared database row. Your mocks return instantly and sequentially.
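A minimal sketch of that double-fulfillment race, under heavy simplifying assumptions: the order is a plain dictionary standing in for a database row, two threads stand in for the webhook handler and the synchronous charge response, and a `threading.Barrier` is a test hook that forces both handlers past the status check before either one writes. All function names are hypothetical. In a real system the fix would more likely be a row-level lock or an atomic conditional update in the database; the in-process lock here only illustrates the idea.

```python
import threading


def make_unsafe_handler():
    """Check-then-act with no protection between the check and the write."""
    barrier = threading.Barrier(2)

    def handler(order):
        if order["status"] == "paid":
            # Test hook: wait until both handlers have passed the check.
            barrier.wait(timeout=5)
            order["shipments"].append(threading.current_thread().name)
            order["status"] = "fulfilled"

    return handler


def make_locked_handler():
    """The same logic, with the check and the write made atomic."""
    lock = threading.Lock()

    def handler(order):
        with lock:
            if order["status"] == "paid":
                order["shipments"].append(threading.current_thread().name)
                order["status"] = "fulfilled"

    return handler


def run_two_handlers(handler):
    """Run the handler from two threads against one shared order."""
    order = {"status": "paid", "shipments": []}
    threads = [
        threading.Thread(target=handler, args=(order,), name=name)
        for name in ("webhook", "sync-response")
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return len(order["shipments"])


unsafe_count = run_two_handlers(make_unsafe_handler())  # order shipped twice
locked_count = run_two_handlers(make_locked_handler())  # order shipped once
```

A serial test that calls the unsafe handler twice in a row passes, because the second call sees the updated status. Only the forced interleaving exposes the bug.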

The coverage number was real. It just wasn’t measuring anything useful about the actual failure mode.

This is not an argument against mocking. It is an argument for being precise about what mocks let you skip, not just what they let you do faster. Every mock is a bet that the real thing will behave the way you assumed. The more mocks, the more unverified bets.

Why Staging Environments Fail at This Job

The conventional response to the “production-only bug” problem is a better staging environment. Mirror production as closely as possible. Use realistic data. Run the same infrastructure. This advice is correct and insufficient.

Staging environments fail for a structural reason: they are maintained by people who know how the system is supposed to work. Production is used by people who don’t. Staging traffic is scripted or generated; it reflects the engineering team’s mental model of what users do. Production traffic reflects what users actually do, which routinely includes sequences and inputs no developer would have scripted.

Staging also has a data problem. Even when teams anonymize and copy production data into staging, they do it infrequently, and the copy ages. A bug that appears only because a specific combination of user account age, subscription tier, and locale setting produces an unexpected null requires the exact production data distribution to reproduce reliably. Most staging environments don’t have it.

The deeper issue is that staging environments are designed to give developers confidence, and developers are motivated to trust them. This creates a systematic bias toward believing the environment is more production-like than it actually is.

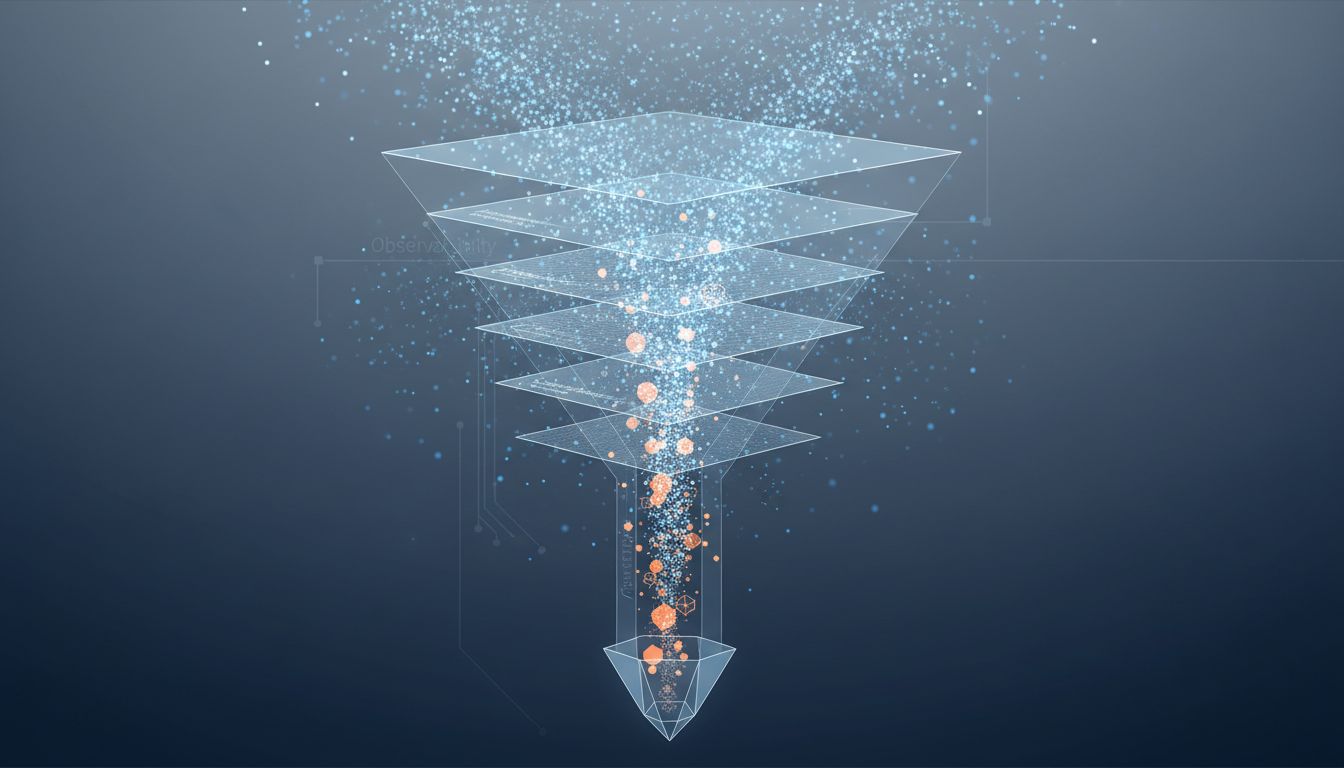

What Observability Changes About This Equation

The most useful shift in how mature engineering teams handle production-only bugs is not in testing strategy, at least not initially. It is in instrumentation. A bug you can observe, trace, and reproduce from production telemetry is a solvable problem. A bug you can only reproduce through guesswork is not.

Observability tools (structured logging, distributed tracing, error tracking with full context) do something specific: they make the production environment legible. When a bug surfaces, you have a transaction ID, a stack trace, the full request context, and ideally a recording of exactly what state the system was in when the failure occurred. That information replaces the question “why might this happen?” with the statement “here is exactly what happened.”
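As a rough illustration of structured logging, the sketch below uses only Python’s standard `logging` and `json` modules to emit an error event as a single JSON object. The logger name, the `context` key passed through `extra`, and every field in the example event (`transaction_id`, `order_id`, `locale`, `subscription_tier`) are assumptions chosen for illustration; real systems typically rely on a dedicated logging or tracing library.

```python
import io
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record):
        event = {"level": record.levelname, "message": record.getMessage()}
        # Merge the structured context attached via `extra`, if any.
        event.update(getattr(record, "context", {}))
        return json.dumps(event, sort_keys=True)


def build_logger(stream):
    """Create a logger that writes JSON events to the given stream."""
    logger = logging.getLogger("orders")
    logger.setLevel(logging.INFO)
    logger.handlers.clear()
    logger.propagate = False
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    return logger


stream = io.StringIO()
logger = build_logger(stream)
logger.error(
    "fulfillment failed",
    extra={
        "context": {
            "transaction_id": "txn-123",
            "order_id": "order-42",
            "locale": "tr-TR",
            "subscription_tier": None,
        }
    },
)

# The captured event is machine-readable, so it can seed a regression test.
event = json.loads(stream.getvalue())
```

Because the event carries the inputs and state that were present at failure time, a regression test can be built from `event` directly instead of from a guess about what the user did.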

This matters for testing because it closes the loop. The bug from production is now fully specified. You can write a test against the exact inputs and state that caused the failure, not a hypothetical approximation of it. That test will be more precise and more durable than one written from speculation.

Teams that invest seriously in observability, with structured traces across every service boundary and rich context on every error event, tend to resolve production incidents faster, because less time goes to reconstructing what happened. The instrumentation doesn’t prevent bugs. It makes every bug a better source of information about what the test suite missed.

The Honest Taxonomy of Your Test Suite

Most test suites contain several distinct types of tests that are rarely separated in how teams think about them. Separating them is clarifying.

There are tests that verify logic: given these inputs, does this function return the right output? These are fast, reliable, and good at what they do. They are also the category least likely to catch a production-only bug, because production-only bugs are almost never logic errors in isolated functions.

There are tests that verify integration: does this component interact correctly with that component under realistic conditions? These are slower, require more setup, and fail more often for incidental reasons. Teams frequently deprioritize them because they make CI slow and flaky. This is a mistake with a specific cost.

There are tests that verify behavior under load or concurrency: does the system hold up when ten things happen simultaneously? These require infrastructure investment and are rarely written at all. They are the category most directly predictive of production-only bugs.

And there is property-based testing, a close relative of fuzzing: instead of specifying exact inputs, you state properties that must hold for every valid input and let the testing framework generate thousands of cases you wouldn’t have written yourself. Tools like Hypothesis in Python and fast-check in JavaScript implement this. Property-based testing is effective precisely because it explores the input space the developer didn’t anticipate, which is exactly the space production users occupy.
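The idea can be sketched with nothing but the standard library. The example below is a hand-rolled stand-in for property-based testing, not the Hypothesis API: it generates random strings with Python’s `random` module and checks two properties of a hypothetical `normalize_display_name` function. The function, the alphabet, the length choices, and the 64-character limit are all assumptions made for illustration.

```python
import random

MAX_LEN = 64
# Characters and lengths a developer-written fixture rarely contains.
ALPHABET = ["a", "Z", "é", "😀", "0", "-", " ", "\t", "\n"]
LENGTHS = [0, 1, 63, 64, 65, 200, 40_000]


def normalize_display_name(raw: str) -> str:
    """Collapse whitespace, truncate, then trim again after truncating."""
    collapsed = " ".join(raw.split())
    return collapsed[:MAX_LEN].rstrip()


def normalize_buggy(raw: str) -> str:
    """Same idea, but truncation can leave a trailing space behind."""
    return " ".join(raw.split())[:MAX_LEN]


def find_counterexample(normalize, cases=300, seed=0):
    """Return the first generated input that breaks a property, else None."""
    rng = random.Random(seed)
    for _ in range(cases):
        raw = "".join(rng.choices(ALPHABET, k=rng.choice(LENGTHS)))
        once = normalize(raw)
        if len(once) > MAX_LEN:  # property 1: output length is bounded
            return raw
        if normalize(once) != once:  # property 2: normalizing twice changes nothing
            return raw
    return None


good_result = find_counterexample(normalize_display_name)
buggy_result = find_counterexample(normalize_buggy)
```

The buggy variant passes any fixture shorter than the limit, which is why hand-written cases tend to miss it. Libraries such as Hypothesis add smarter input generation and shrink a failing input down to a minimal reproducing case, which this sketch does not attempt.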

A test suite that is 95% logic tests and 5% integration tests, with zero load or property-based tests, will produce the pattern you recognize: high coverage, recurring production surprises.

What This Means

The production-only bug is diagnostic. It tells you that your test suite models your assumptions about the system rather than the system’s actual behavior. The right response is not a faster hot fix. It is an audit of what categories of tests you have and what categories you’re missing.

Specifically: if your suite is dominated by unit tests with heavy mocking, you are probably undercovered on integration behavior. If you have no tests that run concurrent operations, you are flying blind on race conditions. If your test data is all developer-authored fixtures, you have no coverage of the input distributions that real users generate.
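One possible way to start such an audit is to count tests by category and flag the gaps named above. The sketch below assumes you already have per-category counts (for example from test markers or directory names); the category names and the 10% integration threshold are arbitrary assumptions for illustration, not recommended values.

```python
def audit_suite(counts, min_integration_share=0.10):
    """Return one warning per gap found in a {category: test_count} mapping."""
    total = sum(counts.values()) or 1  # avoid dividing by zero on an empty suite
    warnings = []
    # Assumed threshold: treat a small integration share as a warning sign.
    if counts.get("integration", 0) / total < min_integration_share:
        warnings.append("integration behavior is probably undercovered")
    if counts.get("concurrency", 0) == 0:
        warnings.append("no concurrent tests: blind to race conditions")
    if counts.get("property", 0) == 0:
        warnings.append("no generated inputs: fixtures reflect developer assumptions")
    return warnings


# The 95% logic / 5% integration suite described earlier.
example_suite = {"logic": 950, "integration": 50, "concurrency": 0, "property": 0}
example_warnings = audit_suite(example_suite)
```

The counts say nothing about test quality. They only make the missing categories visible, and visibility is the first step of the audit.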

The engineer who fixes the bug rarely knows why it existed in the first place, because the fix is usually faster than the diagnosis. Slowing down to trace the failure back to its origin in the test strategy is where the compounding value is. Every production-only bug is an opportunity to make the next ten less likely. Most teams instead spend that opportunity making that one specific bug impossible to repeat.

The green build is a measurement. What it’s measuring depends entirely on the questions you decided to ask.