The Machine That Earns Its Keep by Sitting Still

If you walked into most data centers and watched CPU utilization graphs, you’d find a category of servers that hover near zero, day after day. No user requests, no batch jobs, no cron tasks firing. They exist purely to wait. To a CFO scanning a hardware budget, these machines look like waste. They are, in reality, the most important thing in the rack.

This is the counterintuitive core of high-availability architecture: the value of a server is not proportional to how hard it works. Standby nodes, failover replicas, and hot spares produce no output during normal operations, yet their presence determines whether your system survives the inevitable failure of the servers that do. Understanding why requires thinking carefully about the difference between work and insurance, and why organizations routinely underfund the latter.

What a Standby Server Actually Does

The terminology here matters. Engineers distinguish between cold standbys (servers that are powered off or not yet provisioned, and take time to bring up), warm standbys (running but not serving traffic, and needing some reconfiguration before they can), and hot standbys (fully synchronized and ready to take over in seconds without human intervention). The cost gradient between these options is steep, but so is the recovery-time difference.

A hot standby database replica, for example, is continuously receiving write-ahead logs from the primary. It is processing real data, maintaining indexes, and staying synchronized in near-real time. What it isn’t doing is serving queries to users. From a resource-utilization perspective, it looks like a drain. From an availability perspective, it is the only reason your system can survive a primary disk failure without losing transactions or going dark for hours.

The PostgreSQL streaming replication model illustrates this well. A replica configured with hot_standby = on can serve read queries, which at least gives it visible utility. But the core purpose, the reason it exists, is failover. The read traffic is a secondary benefit. Companies sometimes forget this ordering and route so much read load to the replica that it falls behind the primary and replication lag grows, which defeats the entire point.
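One way to keep that ordering honest is to gate read traffic on replication lag. The sketch below is a minimal illustration, not a production router: the ReplicaStatus structure, the function names, and the five-second threshold are all assumptions made for the example. On a PostgreSQL standby, the lag figure could come from a query such as now() - pg_last_xact_replay_timestamp(), with the caveat that this overstates lag when the primary is idle.

```python
from dataclasses import dataclass


@dataclass
class ReplicaStatus:
    """Snapshot of a standby's health (hypothetical structure for this sketch)."""
    name: str
    lag_seconds: float  # how far the replica trails the primary


def may_serve_reads(replica: ReplicaStatus, max_lag_seconds: float = 5.0) -> bool:
    """Allow read traffic only while the replica is close to the primary.

    The 5-second default is an illustrative assumption, not a recommendation.
    """
    return replica.lag_seconds <= max_lag_seconds


def pick_read_target(replica: ReplicaStatus, max_lag_seconds: float = 5.0) -> str:
    """Route reads to the replica when it is caught up, otherwise to the primary."""
    if may_serve_reads(replica, max_lag_seconds):
        return replica.name
    return "primary"


if __name__ == "__main__":
    print(pick_read_target(ReplicaStatus("replica-1", lag_seconds=1.2)))
    print(pick_read_target(ReplicaStatus("replica-1", lag_seconds=42.0)))
```

The design choice is that failover readiness wins: when the replica falls behind, it sheds read load first, so replication can catch up.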

The Accounting Problem That Gets People Killed

Organizations consistently underinvest in standby infrastructure for a simple reason: the cost is visible and the benefit is not. You can see the monthly AWS bill for a standby RDS instance. You cannot see the outage that didn’t happen because it was there.

This is classic insurance accounting, and it creates the same distortions. A company that has never had a serious outage tends to view its standby nodes as overhead. A company that has lost a day of transactions to a primary failure treats those same nodes as sacred. The difference in perspective is experience, and experience in this domain is expensive to acquire.

The financial math is not complicated. If your business generates meaningful revenue per hour and your mean time to recovery from a primary failure without a hot standby is, say, four hours (accounting for diagnosis, provisioning, restoration from backup, and data validation), then your expected loss per failure event is substantial. The standby server costs a fraction of that per year. The return on investment is obvious in retrospect and invisible in foresight, which is exactly the circumstance under which organizations cut infrastructure budgets.
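To make the arithmetic concrete, here is a rough sketch of the comparison. Only the four-hour recovery time comes from the scenario above; the revenue figure, failure rate, failover time, and standby cost are hypothetical placeholders you would replace with your own numbers.

```python
def expected_annual_outage_loss(revenue_per_hour: float,
                                failures_per_year: float,
                                mttr_hours: float) -> float:
    """Expected revenue lost to primary failures in one year."""
    return revenue_per_hour * failures_per_year * mttr_hours


def standby_net_benefit(revenue_per_hour: float,
                        failures_per_year: float,
                        mttr_without_hours: float,
                        mttr_with_hours: float,
                        standby_cost_per_year: float) -> float:
    """Loss avoided by having a standby, minus what the standby costs."""
    loss_without = expected_annual_outage_loss(
        revenue_per_hour, failures_per_year, mttr_without_hours)
    loss_with = expected_annual_outage_loss(
        revenue_per_hour, failures_per_year, mttr_with_hours)
    return (loss_without - loss_with) - standby_cost_per_year


if __name__ == "__main__":
    # All inputs except the 4-hour MTTR are illustrative assumptions.
    benefit = standby_net_benefit(
        revenue_per_hour=10_000,      # hypothetical
        failures_per_year=0.5,        # hypothetical: one failure every two years
        mttr_without_hours=4.0,       # from the scenario in the text
        mttr_with_hours=0.25,         # hypothetical: 15-minute failover
        standby_cost_per_year=6_000,  # hypothetical
    )
    print(f"Net annual benefit of the standby: {benefit:,.0f}")
```

The model ignores reputational damage, contractual penalties, and lost data, all of which would push the result further in the standby’s favor.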

This is structurally similar to why your most important work never makes the task list. Preventive infrastructure doesn’t generate tickets, doesn’t ship features, and doesn’t appear in sprint retrospectives. It simply prevents catastrophes that are never attributed to it.

The Geography Problem: Why Distance Is a Feature

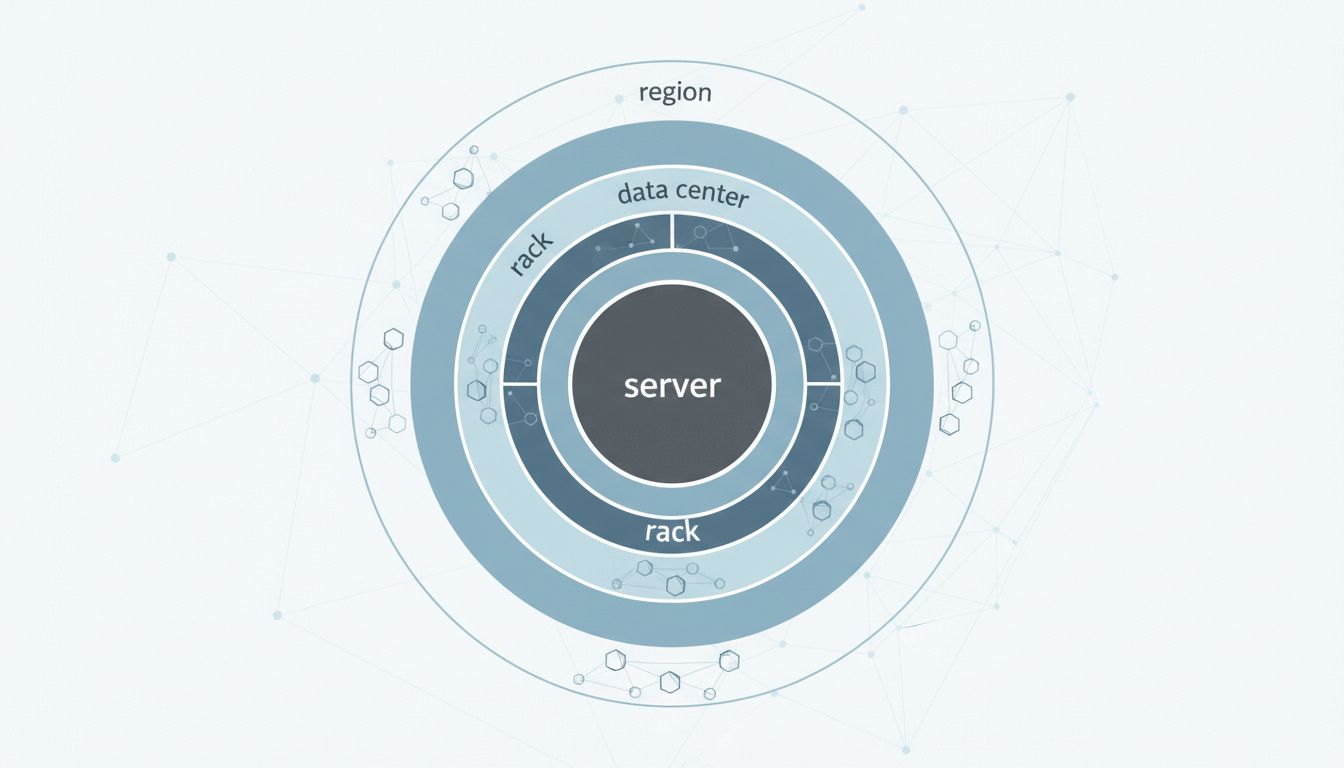

A single standby server in the same rack as the primary solves some failure modes and ignores others. Disk failures, yes. Power supply failures, yes. A rack-level power circuit failure, a cooling failure affecting that row, a maintenance window gone wrong, a network switch failure, no. Genuine high availability requires thinking in terms of failure domains, not individual machines.
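Thinking in failure domains can be made mechanical. The following sketch assumes a simple three-level hierarchy (region, zone, rack) and hypothetical placement labels; real environments have more levels, such as power circuits and network switches, but the check has the same shape.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Placement:
    """Where a server lives (hypothetical, simplified hierarchy)."""
    region: str
    zone: str
    rack: str


def shared_failure_domains(primary: Placement, standby: Placement) -> List[str]:
    """List the failure domains that would take out both servers at once."""
    shared = []
    if primary.region == standby.region:
        shared.append("region")
        # Zones and racks are only comparable inside the same parent domain.
        if primary.zone == standby.zone:
            shared.append("zone")
            if primary.rack == standby.rack:
                shared.append("rack")
    return shared


if __name__ == "__main__":
    primary = Placement("region-a", "zone-1", "rack-07")
    same_rack = Placement("region-a", "zone-1", "rack-07")
    other_zone = Placement("region-a", "zone-2", "rack-03")
    print(shared_failure_domains(primary, same_rack))
    print(shared_failure_domains(primary, other_zone))
```

The shorter the returned list, the fewer single events can remove the primary and its standby together.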

This is why AWS, Google Cloud, and Azure all organize their infrastructure around availability zones: physically separate data centers with independent power, cooling, and networking, close enough together to maintain synchronous replication without prohibitive latency. The canonical recommendation for production workloads is to span at least two availability zones, which means you’re paying for infrastructure in multiple locations, much of which is idle during normal operations.

The extreme version of this is a hot standby in a separate geographic region. Cross-region replication introduces latency, which means you’re typically looking at asynchronous replication, with a recovery point objective measured in seconds or minutes rather than zero. For most businesses, that much data loss in a regional disaster is acceptable. For financial systems, healthcare records, or anything with regulatory implications, it often isn’t, which is why truly mission-critical systems sometimes maintain synchronous replication across geographic distances at significant cost and complexity.

Netflix’s famous Chaos Engineering practice, which involves deliberately injecting failures into production systems, emerged precisely from recognizing that the only way to know your standby infrastructure actually works is to test it under realistic conditions. Their Chaos Monkey tool (and later the Simian Army) would randomly terminate production instances to verify that their systems failed over correctly. The insight was uncomfortable: standbys that are never activated have a tendency to drift, misconfigure, or fail silently in ways that only become apparent when you actually need them.

The Subtler Cases: Queues, Caches, and Consensus Systems

Database replicas are the obvious example. The principle extends further.

Message queues are frequently deployed in clusters where multiple brokers share the load, but the cluster is sized to survive the loss of one or more nodes without dropping messages. A Kafka topic with a replication factor of three keeps a copy of every partition on three different brokers. By default, one of those brokers is the partition’s leader and handles producer and consumer requests; the other two are followers that replicate and wait. They look idle. They are your durability guarantee.
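The trade-off can be written down in a few lines. This is a simplified model, not Kafka’s actual logic: it assumes every replica is in sync before the failure and that producers use acks=all, in which case writes keep succeeding while the number of in-sync replicas stays at or above the topic’s min.insync.replicas setting. The function names are made up for the example.

```python
def copies_that_can_be_lost(replication_factor: int) -> int:
    """Broker losses a partition survives with at least one copy left."""
    if replication_factor < 1:
        raise ValueError("replication_factor must be at least 1")
    return replication_factor - 1


def broker_failures_tolerated_for_writes(replication_factor: int,
                                         min_insync_replicas: int) -> int:
    """Broker losses after which acks=all writes are still accepted.

    Simplified: assumes all replicas were in sync before the failures.
    """
    if not 1 <= min_insync_replicas <= replication_factor:
        raise ValueError("min_insync_replicas must be between 1 and replication_factor")
    return replication_factor - min_insync_replicas


if __name__ == "__main__":
    rf, min_isr = 3, 2
    print("copies that can be lost:", copies_that_can_be_lost(rf))
    print("failures tolerated for writes:",
          broker_failures_tolerated_for_writes(rf, min_isr))
```

With a replication factor of three and min.insync.replicas of two, the data survives two broker losses but stops accepting acks=all writes after the second, which is usually the behavior you want from a durability guarantee.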

Distributed consensus systems like etcd (used extensively in Kubernetes for cluster state management) are normally run with an odd number of nodes, because quorum requires a strict majority of the cluster. A three-node etcd cluster can survive one node failure. A five-node cluster can survive two. In both cases, you’re paying for nodes whose primary function is to exist in case others disappear. A two-node cluster, which might seem like a cost-efficient compromise, provides no fault tolerance at all: a majority of two is two, so losing either node breaks quorum. The third node doesn’t earn its keep by doing more work; it earns its keep by making the whole system survivable.
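The quorum arithmetic behind those numbers is short enough to check by hand. The sketch below uses the standard majority rule (more than half the members); the function names are illustrative.

```python
def quorum_size(cluster_size: int) -> int:
    """Smallest number of nodes that forms a strict majority."""
    if cluster_size < 1:
        raise ValueError("cluster_size must be at least 1")
    return cluster_size // 2 + 1


def failures_tolerated(cluster_size: int) -> int:
    """Nodes that can fail while a majority remains."""
    return cluster_size - quorum_size(cluster_size)


if __name__ == "__main__":
    for n in range(1, 6):
        print(f"{n} nodes: quorum {quorum_size(n)}, "
              f"tolerates {failures_tolerated(n)} failure(s)")
```

Running it shows why even-sized clusters are a poor buy: four nodes tolerate one failure, exactly as three do, while costing one more server.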

Content delivery networks use a similar logic for origin shield configurations: a caching layer that sits between the CDN edge and your origin server. Under normal load, the edge nodes answer most requests and the shield sees only what they miss. Under a cache miss storm (or an attack that forces misses at the edge), the shield collapses those misses into far fewer origin fetches, absorbing traffic that would otherwise hit your origin directly. It’s doing less visible work than the edge nodes but preventing a failure mode the edge nodes can’t handle alone.

What You’re Actually Buying When You Buy Uptime

Service level agreements are usually expressed as percentages. 99.9% uptime sounds impressive until you calculate that it permits nearly nine hours of downtime per year. 99.99% (“four nines”) allows a little under an hour. 99.999% (“five nines”) allows just over five minutes. Each additional nine requires not just better software but more standby infrastructure, more redundant paths, more components sitting idle so that failures in active components don’t propagate.
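The downtime budgets are easy to reproduce. This small sketch assumes a 365-day year and treats the SLA as a simple fraction of total time; real SLAs often measure per month and exclude scheduled maintenance, so treat the output as an approximation.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # assumes a non-leap year


def allowed_downtime_minutes(availability_percent: float) -> float:
    """Yearly downtime permitted by an availability target, in minutes."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability_percent must be between 0 and 100")
    return MINUTES_PER_YEAR * (1 - availability_percent / 100)


if __name__ == "__main__":
    for target in (99.9, 99.99, 99.999):
        minutes = allowed_downtime_minutes(target)
        print(f"{target}% -> {minutes:.1f} minutes/year ({minutes / 60:.2f} hours)")
```

Each additional nine cuts the budget by a factor of ten, which is why the infrastructure needed to meet it grows so quickly.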

When you buy a service with a meaningful SLA from a cloud provider, you are largely buying their standby infrastructure. You are paying for servers that will never serve your requests under normal conditions. The SLA is a bet that those servers will earn their cost in the rare moments they’re needed.

For companies building their own systems, the same economics apply. The decision to run a two-availability-zone architecture versus one is a decision about how much standby capacity you’re willing to fund. The decision to maintain a cross-region replica is a decision about acceptable data loss in extreme scenarios. These are business decisions disguised as technical ones, and they deserve explicit analysis rather than being decided by whoever sets up the initial cloud account.

What This Means

Idle infrastructure is not wasted infrastructure. The correct metric for standby servers is not utilization but availability, and the cost of not having them is measured in outage duration and data loss rather than monthly bills.

Several practical conclusions follow from this:

Test your standbys. A failover that has never been exercised in production is not a failover. Schedule deliberate failover drills before you need them urgently.

Budget for failure domains, not just nodes. A second server in the same rack is not the same as a second server in a separate availability zone. The additional cost buys a qualitatively different category of protection.

Separate the accounting. Standby infrastructure cost should be understood as reliability spending, not operational waste. It belongs in the same mental category as insurance premiums, not bloated headcount.

Know your recovery objectives before you design. The acceptable recovery time and acceptable data loss for your application determine what kind of standby architecture you need. Starting with the architecture and then discovering that it doesn’t meet your objectives is expensive to fix.

The server doing nothing is doing exactly what you need it to do. The question is whether you know that before or after it saves you.