Prompt engineering started as a punchline. ‘You’re just typing sentences into a chatbox’ was the dismissal, delivered with the particular confidence of people who hadn’t actually tried to do it well. A year or two later, companies are writing six-figure job postings for the role and internal AI teams are quietly reorganizing around the people who are best at it.

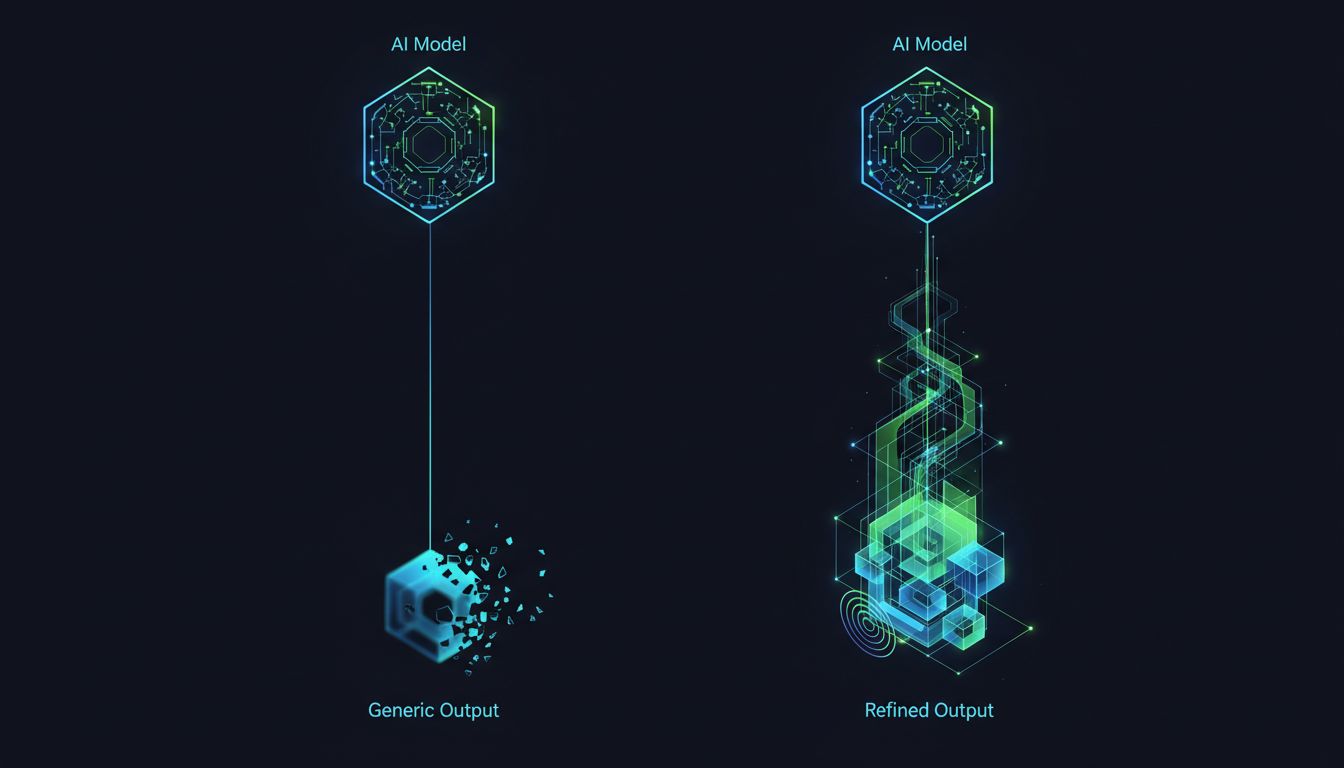

This isn’t hype catching up to reality. It’s reality catching up to something most developers discovered the hard way: the model you have access to and the results you actually get are two very different things, and the gap between them is almost entirely determined by how you prompt.

The Interface Layer Everyone Underestimated

For most of software history, the interface between human intent and machine output was code. You wrote precise instructions; the machine followed them precisely. The appeal of large language models is that you can now express intent in plain language. The catch is that ‘plain language’ is doing enormous work here.

Natural language is ambiguous in ways that syntax isn’t. When you write a function, the computer does exactly what the function says. When you write a prompt, the model interprets what you probably meant, weighted by everything it learned during training. That interpretation process is where enormous amounts of value get created or destroyed.

A prompt engineer’s job is to close that gap. They figure out how to express intent in a way that reliably produces the output you need, across varied inputs, at scale. That sounds simple until you’re debugging why your customer service bot apologizes for things it didn’t do, or why your code generation tool produces working code 80% of the time but subtly broken code the other 20%. Finding and fixing those patterns is not a trivial skill.

Why This Skill Is Rarer Than It Looks

Good prompting requires a specific and unusual combination of abilities. You need to think carefully about how language works and where ambiguity hides. You need enough domain knowledge to recognize when an output is subtly wrong rather than obviously wrong. You need the patience to run systematic experiments rather than just trying random variations until something sticks. And you need to understand, at least roughly, how the model you’re working with behaves, since different models have genuinely different strengths, failure modes, and sensitivities.

Most people have one or two of these. Fewer have all four. The ones who do tend to produce outputs that look almost magical to their colleagues, which is partly why the role gets dismissed as ‘not real engineering.’ It’s real. It’s just operating at a layer most engineers haven’t spent time on yet.

It’s also worth noting that the skill compounds in a way that raw model capability doesn’t. A better model is a one-time improvement. A better prompt engineer improves every workflow they touch, continuously, and builds institutional knowledge that stays in the organization even as models change.

What They Actually Do All Day

If you’re building an AI-powered product or integrating AI into internal workflows, here’s what a skilled prompt engineer is concretely doing for you.

First, they’re designing and iterating on system prompts. The system prompt is the foundation of any model-powered feature, and small changes to it cascade through every interaction. Getting it right involves understanding what constraints to set, what persona or tone to establish, what edge cases to anticipate, and how to structure context so the model uses it well. (On that last point, the relationship between context structure and output quality is more complex than most people assume, as the research on context window behavior suggests.)

Second, they’re building evaluation frameworks. One of the hardest problems in AI product development is knowing whether a change you made actually improved things. A prompt engineer builds test suites, defines quality criteria, and creates processes for measuring output quality systematically rather than anecdotally. This is unglamorous work that most teams skip, and the absence of it is why so many AI features quietly degrade after launch.

Third, they’re translating between stakeholders. Business teams know what outcome they want. ML engineers know what the model can do. Prompt engineers understand both well enough to bridge them, which turns out to be surprisingly rare and valuable.
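To make the evaluation work described above a little more concrete, here is a minimal sketch in Python of what comparing two system prompts against a small test suite could look like. Everything in it is an assumption made for illustration: `fake_model` is a hypothetical stand-in for a real model call (so the example runs offline), and the `Case` structure, the two prompts, and the checks are invented rather than taken from any particular team’s setup.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Case:
    """One test case: an input plus a check that encodes a quality criterion."""
    name: str
    user_input: str
    check: Callable[[str], bool]  # True when the output meets the criterion


def fake_model(system_prompt: str, user_input: str) -> str:
    """Hypothetical stand-in for a real model call (assumption, not a real API)."""
    if "Do not apologize" in system_prompt:
        return f"Here is the status of your order: {user_input}"
    return f"Sorry for the trouble! Here is the status of your order: {user_input}"


def pass_rate(system_prompt: str, cases: List[Case], model=fake_model) -> float:
    """Fraction of cases whose check passes for the given system prompt."""
    results = [case.check(model(system_prompt, case.user_input)) for case in cases]
    return sum(results) / len(results)


# Illustrative criteria: no unwarranted apology, and the order is mentioned.
CASES = [
    Case("no unwarranted apology", "order 1234", lambda out: "sorry" not in out.lower()),
    Case("mentions the order", "order 1234", lambda out: "1234" in out),
]

BASELINE = "You are a support assistant."
CANDIDATE = BASELINE + " Do not apologize unless the company made an error."

if __name__ == "__main__":
    print("baseline:", pass_rate(BASELINE, CASES))
    print("candidate:", pass_rate(CANDIDATE, CASES))
```

The point of a harness like this is less the code than the habit: every prompt change gets scored against the same cases, so “did this actually improve things?” has a number attached instead of an impression. In practice the model call would be real, and the suite would be far larger and drawn from actual user inputs.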

The Case for Prioritizing This Role Now

There’s a reasonable objection here: models keep improving, and many prompting tricks that work today may become unnecessary as models get better at inferring intent. If GPT-6 or whatever comes next can just understand what you mean, does the role collapse?

Probably not, for two reasons. First, the ceiling keeps rising. Better base models don’t eliminate the performance gap between mediocre and excellent prompting; they raise the floor for everyone while leaving the gap largely intact. The absolute quality of good prompting improves alongside the models. Second, the complexity of what teams are trying to do with AI keeps increasing. Early use cases were simple enough that basic prompting was fine. Current use cases (multi-step agents, complex RAG pipelines, specialized domain applications) require serious craft at the prompt layer regardless of how capable the underlying model is.

The teams that are ahead right now in AI product quality almost universally have someone (or a small group of people) who takes prompting seriously as a discipline. That’s not a coincidence.

If you’re building an AI team or trying to improve an existing one, the practical move is to identify who in your organization already has this skill set and is doing it informally. They’re probably in a role that doesn’t officially include it. Give them room to focus on it, a proper mandate, and a title that reflects the actual value. You’ll get compounding returns in ways that adding raw engineering headcount often doesn’t deliver.

The Quiet Reorganization

What’s happening across AI teams right now isn’t dramatic. There’s no announcement that prompt engineering has become a serious discipline. Instead, there’s a quiet sorting process: teams that invested in this skill are producing noticeably better AI products, and teams that treated it as an afterthought are stuck in a loop of inconsistent outputs and disappointed users.

The title will probably evolve. Some companies are calling these people AI engineers, conversational designers, or model integration leads. The label matters less than recognizing what the role actually requires and paying for it accordingly. The person who can make your AI reliably do what you need it to do, across all the edge cases and failure modes and ambiguous inputs your users will throw at it, is genuinely hard to find and worth keeping.