The Experiment That Changed How Researchers Thought About LLMs

In late 2022, a team at Google Brain published a paper that made a lot of people update their mental model of large language models. The paper introduced what they called “chain-of-thought prompting.” The basic idea was simple: instead of asking a model to answer a math or logic problem directly, you asked it to show its work. “Let’s think step by step.” And the results were striking. On benchmark reasoning tasks, accuracy jumped substantially compared to standard prompting.

The research community got excited. Users got excited. And then the inevitable oversimplification followed: the model is reasoning. It’s thinking. It’s working through the problem like you would.

It isn’t. Understanding what it actually does will make you significantly better at using these tools.

What Chain-of-Thought Prompting Really Does

Here’s the setup. A standard language model, when asked a complex multi-step question, is predicting the most probable next token at each step. It takes your question, compresses it through its learned representations, and produces an answer in one essentially continuous motion. For simple questions, this works well. For questions that require multiple dependent steps (layered arithmetic, logical chains, problems where an early error propagates), this single-pass approach breaks down often.

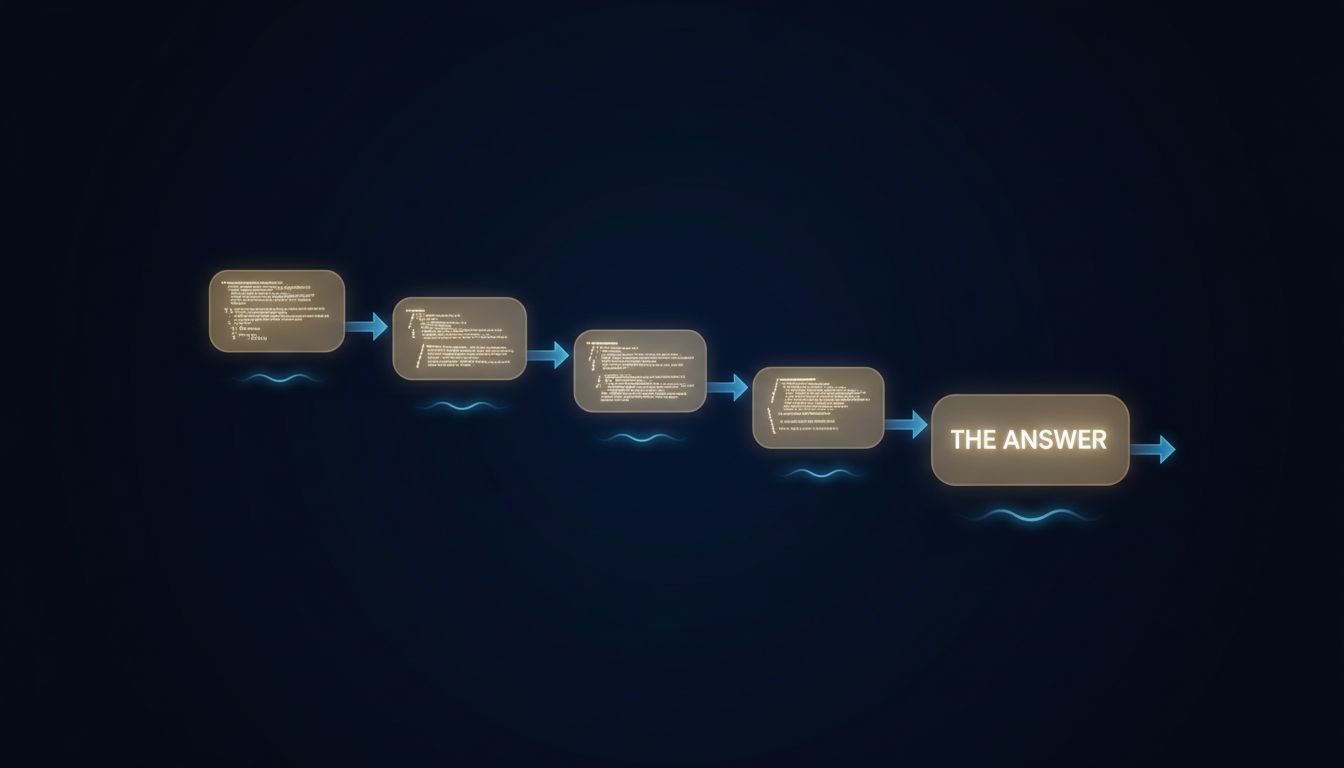

When you ask the model to reason through a problem step by step, you are not activating some hidden reasoning module. You are doing something more specific and more interesting: you are restructuring the input-output process so that intermediate outputs become part of the input for subsequent predictions.

Each “step” the model writes becomes context for the next token it generates. The model that writes “First, I’ll calculate the number of apples” and then continues is conditioning its next prediction on those words it just produced. It’s using its own output as a scaffold. The written-out steps are not a window into cognition. They are the computation.

This distinction matters more than it might first seem.

The Lawsuit That Made This Concrete

In 2023, a New York attorney named Steven Schwartz submitted a legal brief that cited several cases to support his arguments. The cases were fabricated. They had realistic-sounding citations, plausible details, and confident language. ChatGPT had generated them and Schwartz had not verified them.

What happened there was not hallucination in the poetic sense. It was the model doing exactly what it was trained to do: produce fluent, confident, contextually appropriate text. When asked to find supporting cases for a legal argument, it generated text that looked like supporting cases. When pushed to confirm those cases were real, it confirmed them, because confirmation is the contextually expected response to that kind of follow-up question.

The chain-of-thought structure doesn’t fix this failure mode. It can actually make it worse. A model that shows its reasoning steps can show you a beautifully logical chain of steps leading to a fabricated conclusion. The steps look right. The logic looks right. The conclusion is invented. You can follow the argument and miss that the foundation is missing.

Schwartz’s firm paid a $5,000 fine and faced sanctions. The attorneys involved later said they hadn’t understood how the tool actually worked. That’s an expensive version of a mistake that’s easy to avoid once you understand what’s really happening.

Why the Distinction Changes How You Should Prompt

If the model’s written-out steps are the computation rather than a report on the computation, a few practical things follow.

The format of the steps shapes the answer. If you ask the model to structure its reasoning as a pros-and-cons list, you’ll get a different answer than if you ask it to reason chronologically, even on the same question. Neither format is more “correct.” Each is a different scaffold that produces a different conditional distribution over the next tokens. For decisions that genuinely have multiple valid framings, you should try more than one. Ask it to steelman the opposing view. Ask it to think about the problem as if it were advising someone in a different role than you.

Intermediate outputs can compound errors. Because each step conditions the next, a confident but wrong early statement tends to persist. The model won’t spontaneously revisit step two when it reaches step five. You have to do that. If you’re working through something complex, interrupt the chain explicitly: “Pause here. Is this assumption in step two actually correct?” That re-introduction of doubt into the context often produces a correction the model wouldn’t have made on its own.

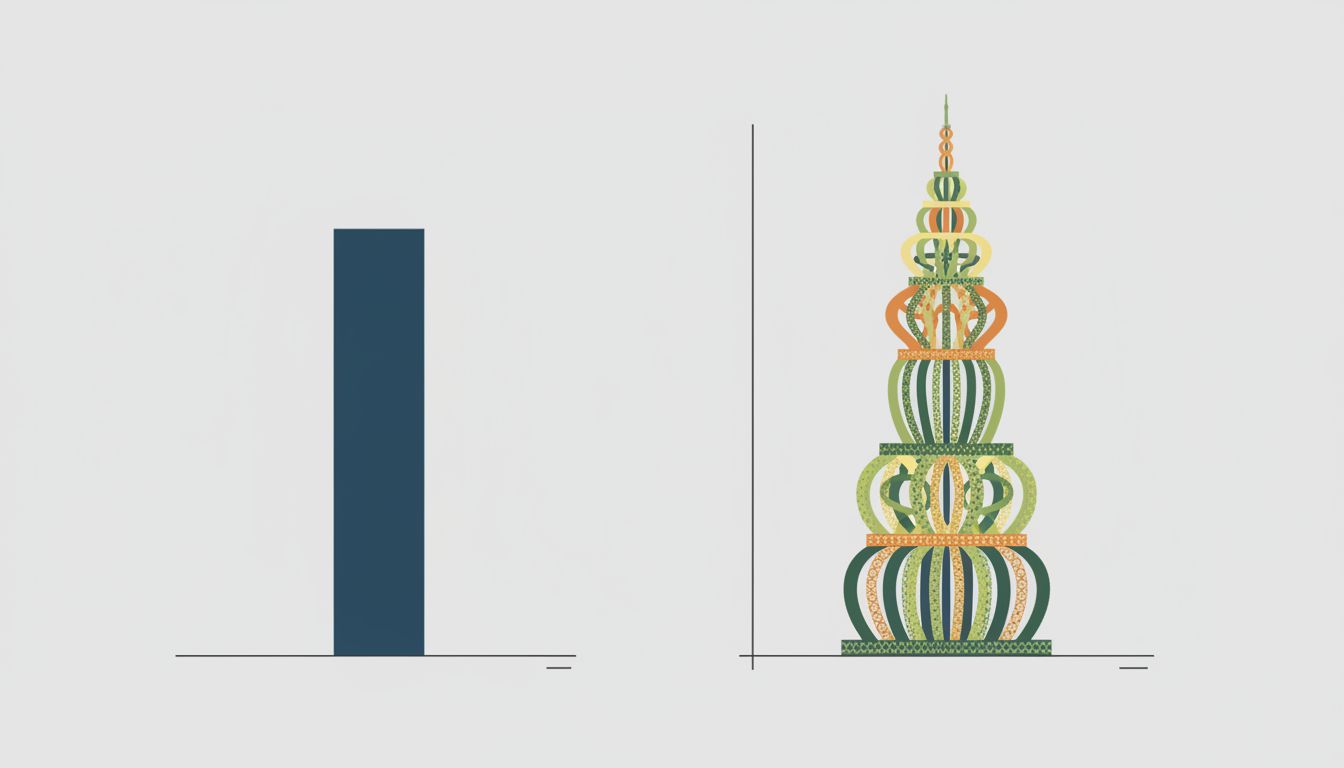

Length of reasoning is not accuracy of reasoning. A model that produces four detailed paragraphs of analysis is not more likely to be correct than one that produces two. More steps means more opportunities to go wrong and more authority-signaling language that makes errors harder to spot. Some of the most confidently elaborated chains of thought in benchmarks turn out to be wrong in verifiable ways. If you need accuracy, verify the conclusion independently. Don’t use reasoning length as a proxy for reliability.

Asking the model to check its own work only helps sometimes. “Review your answer for errors” can catch surface-level inconsistencies because the review prompt adds new context that reshapes the probability distribution. But it won’t reliably catch deep factual errors, because the model has no ground truth to check against. It’s editing its own fiction.

What Good Usage Actually Looks Like

The teams that use LLMs well in production have generally landed on the same set of practices, even when they arrived there through different paths.

They use chain-of-thought prompting deliberately, not by default. For straightforward tasks, the overhead isn’t worth it and can introduce noise. For multi-step problems, they structure the reasoning scaffold explicitly, rather than leaving it to the model to choose its own format.

They treat the model’s output as a first draft to interrogate, not a conclusion to accept. The reasoning steps are useful for spotting where the model’s logic would need to be true for its answer to hold. If step three assumes something you know to be false, you know the answer is wrong without having to test it empirically.

And they keep verification external for anything that matters. If you’re using an LLM to reason through a technical decision, a financial calculation, or anything with real consequences, the correct workflow is to have the model structure the problem and generate candidate approaches, and then have a person (or another deterministic system) verify the critical claims. As the people writing AI procurement policies at serious companies have figured out, the prompt you write shapes the answer you get, but no amount of prompt engineering substitutes for checking.

The Useful Mental Model

Think of chain-of-thought prompting as renting a very fluent, very fast thinking partner who has read an enormous amount but has no access to reality. They’re excellent at structuring problems, generating options you hadn’t considered, and spotting logical gaps you hand them. They are not reliable for recalling specific facts, and their confidence is not correlated with their accuracy.

Used that way, you’ll stop being surprised by the failures and start getting significantly more value from the successes.