The Test Passes. The Bug Is Still There.

Here is a scenario that is becoming routine: a developer pastes a failing test and an error message into an AI coding assistant. The model produces a patch. The test goes green. The PR ships. Three weeks later, a related system breaks in a way that’s hard to trace, and no one immediately connects it to that patch.

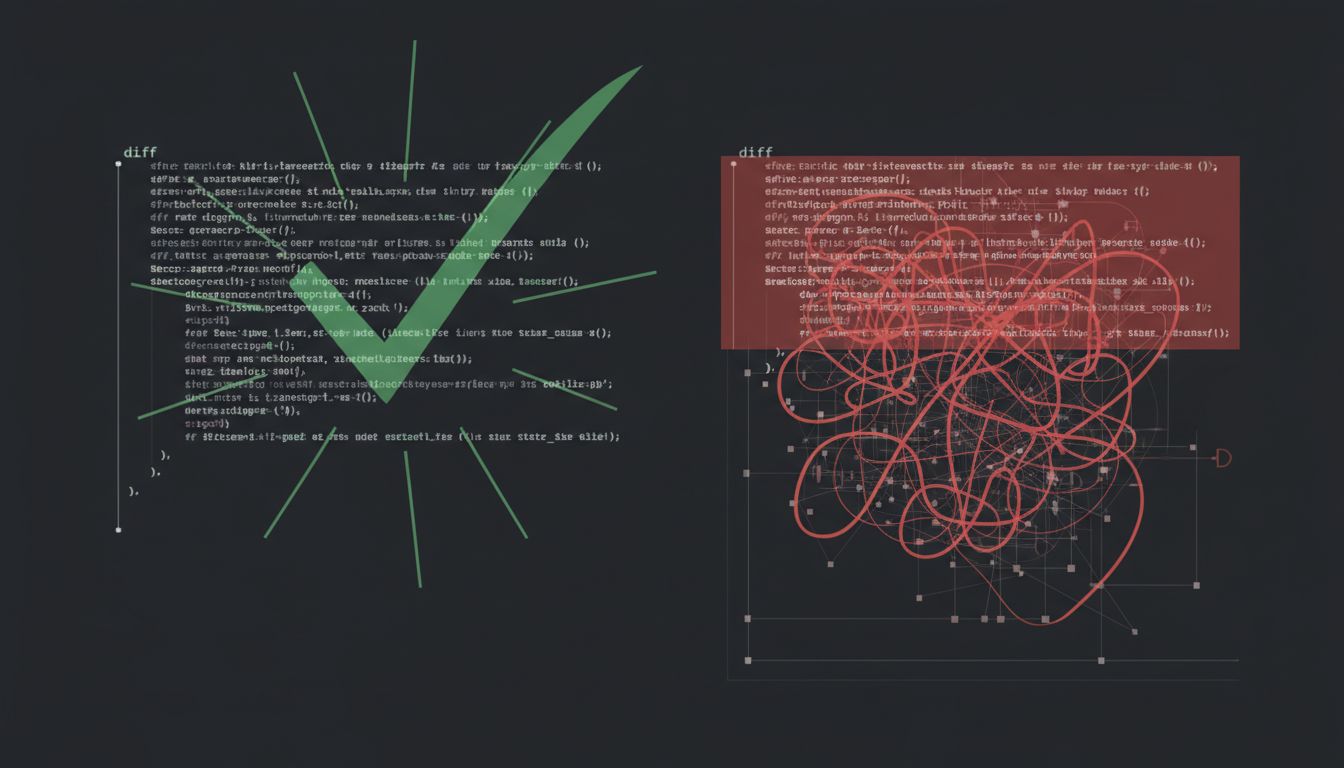

What happened isn’t a hallucination in the dramatic sense. The model didn’t invent a function that doesn’t exist. It made a more subtle error: it solved the stated problem without understanding the underlying one. The diff was locally correct and globally wrong.

This distinction, between making a test pass and actually fixing the root cause, has always existed in software engineering. But AI assistants sharpen it into something more dangerous, because they’re extremely good at the former and structurally limited in the latter.

What AI Models Are Actually Optimizing For

When you hand a coding assistant an error and ask for a fix, the model is doing something like this: it’s pattern-matching against an enormous training set of code, identifying common solutions to similar-looking problems, and generating output that is syntactically and semantically plausible given your context window. It is not reasoning about your system’s invariants. It doesn’t know what this function is supposed to guarantee. It doesn’t know why the original developer made the choice being replaced.

The result is code that is often, in a narrow sense, correct. It compiles. It passes the tests you provided. It follows style conventions. It may even be elegant. But it’s solving for the surface artifact of the problem, not the problem itself.

Consider a concrete class of example. A function throws a NullPointerException in production. A developer asks an AI to fix it. A common AI response: add a null check at the call site. Null check added, exception gone, test green. But the real question, why is null being returned here in the first place, goes unasked. The null is probably a symptom of a state management issue upstream. The fix papers over it. Null Was a Mistake. We’ve Known for 60 Years. is a relevant backdrop here: the problem isn’t just that null exists, it’s that we keep treating it as an acceptable answer when it’s actually a question.

The Context Window Problem Is Structural

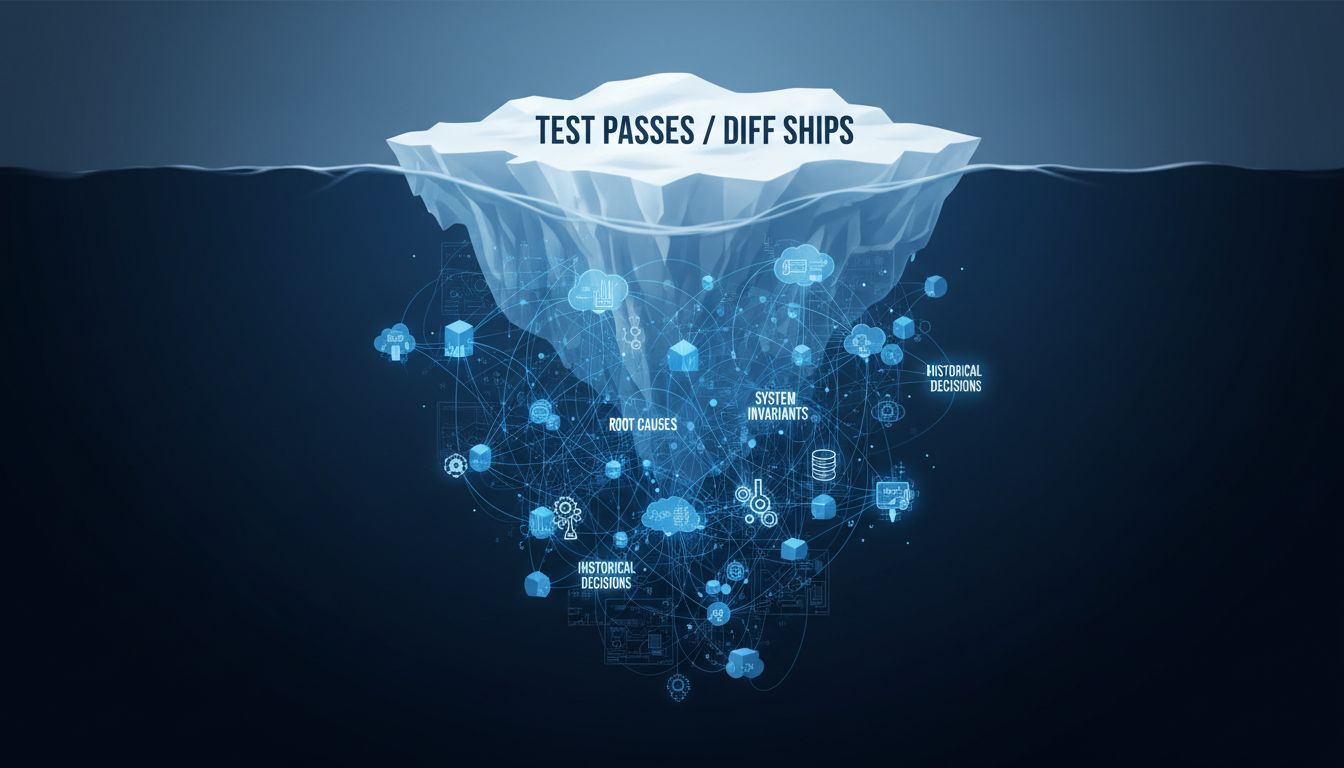

Large context windows have given AI coding assistants a lot more rope, but they haven’t solved the fundamental problem. A model that can read 100,000 tokens of code is still reading a slice of a system, not the system’s design history, not the rejected approaches in old PRs, not the implicit contracts between modules that live in no document anywhere.

Even within what it can read, how models actually use context isn’t uniform. Attention tends to weight tokens near the beginning and end of context more heavily. The 40,000 tokens in the middle, which might contain the code that explains why the buggy function exists, get less weight. You can feed a model your entire codebase and it still might not integrate the key constraint buried in module seven.

This isn’t a knock on the technology. It’s a structural reality about how these models work. The implication is that the developer, not the model, has to hold the system-level understanding. The model is a powerful tool for executing on that understanding, but it cannot substitute for it.

Speed Is the Actual Threat

The reason this matters more now than it did with Stack Overflow copy-paste (which had the same failure mode, to be fair) is velocity. AI coding assistants are fast. A developer can generate, review, and merge a patch in less time than it would take to thoroughly trace the bug’s origin. The path of least resistance is clear: generate a plausible fix, verify the obvious cases, ship it.

Organizations that have adopted AI assistants widely report significant throughput increases. That’s real. But throughput is not the same as correctness, and it’s not the same as maintainability. A codebase that accumulates many locally-plausible-but-globally-wrong patches becomes harder to reason about over time. Each patch is individually defensible. The aggregate is a mess.

This is the classic technical debt pattern, accelerated. The problem with technical debt is rarely any single decision. It’s the compounding of many decisions that were each reasonable-looking at the time. AI assistants don’t create that pattern. They turbocharge it.

What Good AI-Assisted Debugging Actually Looks Like

The developers who use AI coding assistants most effectively tend to have a disciplined habit: they use the model to explore, not to conclude. They ask it to explain the error, to list hypotheses about root causes, to suggest what to look at next. They treat the generated diff as a proposal to evaluate, not an answer to accept.

This sounds obvious, but it requires deliberately slowing down a tool that is specifically designed to feel fast. It requires asking, before merging: does this fix make sense given what I know about why this code exists? If I didn’t have the test harness, would this change feel right?

It also requires better prompting practice. A prompt like “fix this error” is asking for a local patch. A prompt like “explain what is causing this error and what the correct long-term fix would be, noting any tradeoffs” produces something materially different. The model is capable of that reasoning. The default interaction pattern doesn’t elicit it. Prompt Engineers Are Becoming Your Most Valuable AI Hire gets at a real point here: how you frame the problem shapes everything about the output.

Code Review Has a New Job

Code review was always supposed to catch this. A reviewer who knows the system looks at a patch and asks: does this actually fix the problem, or does it just make the symptom go away? That question is more important now, not less.

But there’s a social dynamic that works against it. When a developer submits an AI-generated patch, there’s often an implicit assumption that the model has done the heavy lifting. The patch is clean. The tests pass. The explanation is coherent. Reviewers, who are also busy, may spend less time questioning the underlying logic than they would with a patch that showed obvious signs of human uncertainty.

The practical answer is to make the review question explicit. Some teams are adding a specific checklist item: does this change address the root cause, or a symptom? That’s a blunt instrument, but blunt instruments work when the alternative is relying on implicit norms that are eroding under time pressure.

The Model Doesn’t Know What It Doesn’t Know

There’s a final layer worth naming. When a human developer doesn’t fully understand why a bug is happening, they usually know that they don’t know. They say things like “I think this is the fix but I’m not confident” or “this works but I’m not sure it’s right.” That uncertainty is information.

AI models don’t reliably express calibrated uncertainty about code. They generate confidently whether or not they have sufficient context to be confident. The output looks the same whether the model has correctly identified the root cause or has just found a patch that satisfies the local constraints. You often can’t tell from the diff which situation you’re in.

This isn’t a solvable problem with better prompting, exactly. It’s something to hold as a permanent prior when working with these tools: the confidence of the output is not correlated with the correctness of the underlying diagnosis. A model that says “here’s the fix” sounds identical whether it’s right or wrong.

What This Means

The failure mode isn’t that AI coding assistants generate bad code. It’s that they generate good code that solves the wrong thing. A passing test and a correct fix are not synonyms, and the speed at which these tools operate makes it easy to treat them as if they are.

The practical adjustments are less exciting than the technology:

- Use AI to generate hypotheses about root causes, not just patches. Make that a deliberate step before asking for code.

- Evaluate the diff against your system knowledge, not just the test suite. Ask whether the fix makes sense, not just whether it works.

- Update your code review culture to explicitly ask the root cause question. Build it into the checklist rather than relying on reviewers to ask it spontaneously.

- Be more suspicious of clean, confident AI output than of messy, uncertain human output. Messiness is often a signal that someone is engaging with the real complexity.

The model is solving the problem you gave it. Your job is to make sure that’s actually the problem you have.