What a CPU Actually Does When You Run a For Loop

Most programmers think of a for loop as a direct instruction: do this thing N times, then stop. The reality is that your loop is a suggestion. The CPU, the compiler, and the memory subsystem spend considerable effort rewriting, reordering, and sometimes outright replacing what you wrote. Understanding that process explains why a ten-line loop can be the slowest or fastest code in your program, and why the difference often has nothing to do with the loop itself.

The Compiler’s First Rewrite

Before your loop touches a CPU, the compiler has already transformed it. Take a simple summation loop in C: iterating over an array of integers and accumulating a total. What you wrote is syntactically a sequential process. What the compiler emits, at any modern optimization level, may look almost nothing like that.

The compiler’s first move is often loop unrolling. Instead of one iteration per cycle, it rewrites the loop body to execute two, four, or eight iterations per pass, reducing the overhead of the branch check and counter increment. GCC and Clang both do this automatically at -O2 and above. The result is more instructions in the binary, but fewer total cycles, because the branch prediction hardware has less work to do and the CPU can begin executing subsequent iterations before the current one finishes.

More aggressively, the compiler checks whether your loop can be auto-vectorized, meaning it can be replaced with SIMD (Single Instruction, Multiple Data) instructions that process multiple array elements in parallel. A loop summing 32-bit integers on a modern x86 chip can be rewritten to use AVX2 instructions that process eight integers per instruction. That’s not a small optimization. It’s a potential 8x throughput improvement from a transformation the programmer never requested.

The constraint that blocks auto-vectorization is aliasing: if the compiler can’t prove that your input and output pointers don’t overlap in memory, it has to assume they might, and it can’t safely reorder reads and writes. This is why the restrict keyword exists in C, and why it can matter more than almost any manual optimization.

Fetch, Decode, Execute — and Why It’s Never That Simple

Assume the compiled code hits the CPU. The classic description of processor operation is a three-stage pipeline: fetch an instruction, decode what it means, execute it. Modern processors bear no resemblance to this model.

A current Intel or AMD desktop chip has a pipeline 14 to 20 stages deep. Instructions are fetched in 16-byte chunks, decoded in parallel (Intel’s Golden Cove can decode up to six instructions per cycle), and then broken down further into micro-operations, or uops, which are the actual primitive actions the execution units perform. A single x86 instruction like ADDPD (add packed double precision) might become multiple uops. A memory load that misses the cache might stall for hundreds of cycles while the whole pipeline waits.

The CPU manages this complexity through out-of-order execution. Rather than processing uops in program order, the chip maintains a structure called the reorder buffer (ROB) that holds in-flight uops and dispatches them to execution units as their inputs become available. On a modern high-performance core, the ROB can hold 350 or more uops simultaneously. The processor is constantly juggling work, running instructions that appear later in the program ahead of earlier instructions that are still waiting on data.

For a for loop, this matters enormously. If loop iteration N depends on the result of iteration N-1 (a dependency chain), the out-of-order engine can’t help you much. If the iterations are independent, it will run several of them concurrently without being asked.

Branch Prediction and the Cost of Being Wrong

Every loop has a branch: the condition check that decides whether to keep iterating or exit. For a loop over a million elements, this branch is almost always taken (continue iterating) until suddenly it isn’t. CPUs exploit this pattern aggressively.

The branch predictor maintains a history of recent branch outcomes and uses that history to guess what will happen next. For a simple counting loop, prediction accuracy is typically above 99%. The CPU uses that prediction to speculatively execute instructions beyond the branch before knowing whether the branch was actually taken. By the time the branch resolves, several subsequent iterations may already be in-flight.

When the predictor is wrong, the cost is a pipeline flush: all the speculative work gets discarded, and the processor has to restart from the correct path. On a deep out-of-order pipeline, a single misprediction can cost 15 to 20 cycles. For a loop with a predictable pattern, this happens once, at the end. For a loop with data-dependent early exits, it can happen repeatedly, which is why binary search over a random array performs worse than expected on modern hardware despite having O(log n) comparisons.

This is also the mechanism behind Spectre, the vulnerability disclosed in 2018. Speculative execution is not a bug in the usual sense — it’s a deliberate design choice that makes processors vastly faster. Spectre showed that the speculatively accessed data leaves traces in the cache that can be measured by a side channel, turning a performance feature into a security hole. Intel and AMD still haven’t fully resolved the architectural tension.

The Memory Hierarchy Is the Real Bottleneck

Loop execution time is almost never determined by arithmetic. It’s determined by memory access patterns.

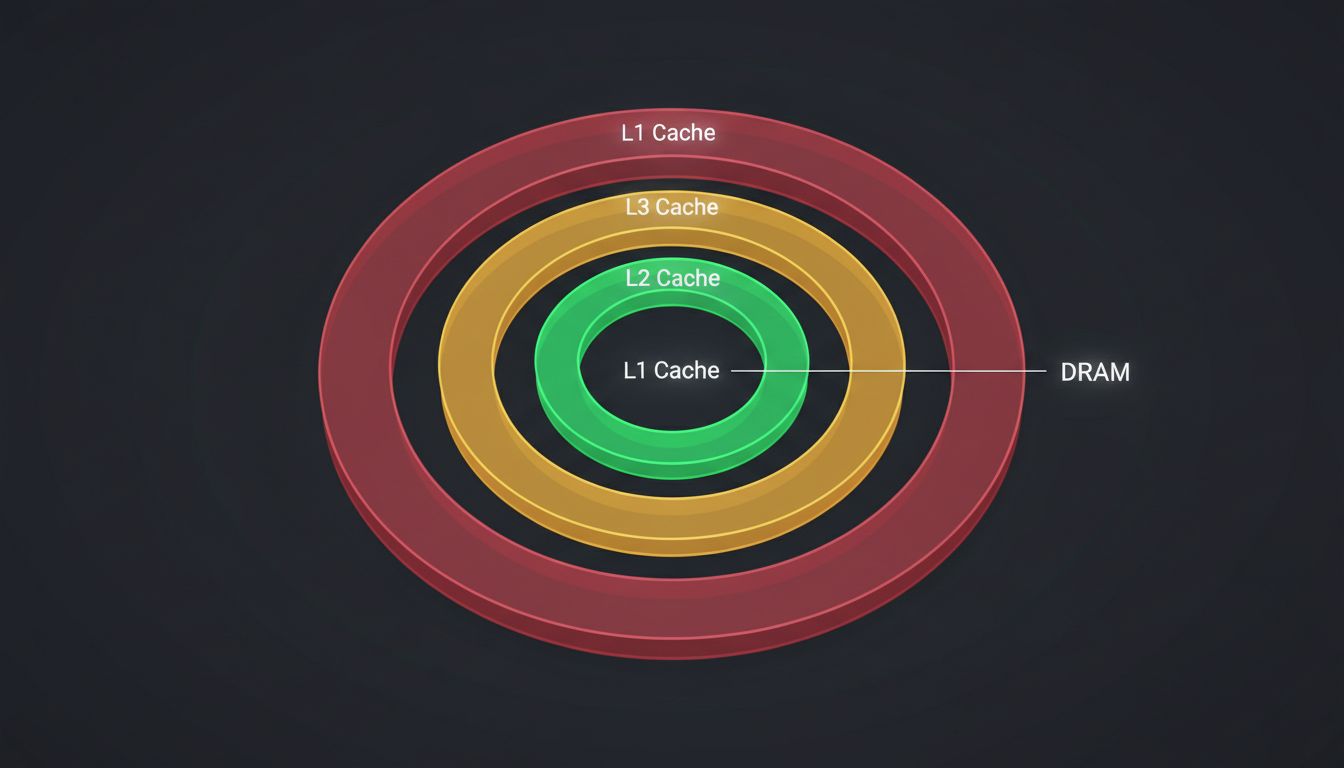

Modern processors can execute arithmetic operations in one or two cycles. A cache miss to main memory takes 200 to 300 cycles on typical hardware. The gap is large enough that a loop doing nothing but sequential array reads can be bandwidth-limited by memory long before it runs out of arithmetic capacity.

The memory hierarchy exists to close this gap. L1 cache (typically 32 to 64 KB per core) delivers data in 4 to 5 cycles. L2 (256 KB to 1 MB) takes around 12 cycles. L3 (shared across cores, 8 to 64 MB on modern server chips) takes 30 to 50 cycles. Main memory is the cliff.

For a for loop iterating over a large array sequentially, the CPU’s hardware prefetcher recognizes the access pattern and begins loading cache lines before they’re needed. Sequential access is the pattern prefetchers are designed for. Random access, as in a loop over a linked list or an unsorted hash table, defeats prefetching entirely and produces a cache miss for nearly every iteration.

This is why the standard advice to prefer arrays over linked lists isn’t about algorithmic complexity — it’s about hardware. An O(n) scan over a packed array can outperform an O(n) scan over a linked list of the same data by a factor of 10 or more, purely because of cache behavior. As with many distributed systems problems, the bottleneck is usually in the plumbing, not the logic.

How the CPU Predicts What You’ll Need Next

The prefetcher’s job is to hide memory latency by loading data before the processor requests it. It watches the stream of memory addresses your loop generates and tries to extrapolate.

A simple stride-1 access pattern (every iteration reads the next element in an array) is trivially prefetchable. Stride-N patterns, where you skip every other element or sample every fourth, are also detectable by modern prefetchers, which track multiple independent streams. The Intel prefetcher can handle both spatial prefetching (loading nearby cache lines) and temporal prefetching (loading data predicted to be needed soon).

Where prefetching breaks down is with pointer chasing. When you dereference a pointer to get the address of the next memory access, the prefetcher can’t know what address to load until the current load completes. This creates a fundamental latency chain that no prefetcher can overcome. It’s why tree traversals and linked-list iterations are genuinely cache-hostile, not just theoretically suboptimal.

Software prefetch hints (__builtin_prefetch in GCC) let programmers tell the CPU to begin loading a future address early. For loops with known access patterns that the hardware can’t infer, these hints can recover substantial performance. They require knowing N iterations ahead what address you’ll need, which is feasible for some data structures and impossible for others.

SIMD and the Hidden Parallelism

Returning to the auto-vectorization point from the compiler section: the execution units that process SIMD instructions are physically separate from the scalar integer and floating-point units. When your loop is auto-vectorized, it’s using hardware that would otherwise sit idle during scalar computation.

AVX2, widely available since Intel Haswell (2013) and AMD Ryzen (2017), operates on 256-bit registers. For 32-bit floats, that’s eight values processed simultaneously. AVX-512, available on Intel server chips and recent client chips, doubles that to 512 bits and 16 values per instruction. The practical consequence is that a loop summing a million floats doesn’t need a million addition operations. It needs around 125,000 SIMD additions, and if those can be pipelined, the throughput approaches the memory bandwidth limit rather than the arithmetic limit.

The catch is that SIMD requires aligned, contiguous data. Loops that access struct fields instead of plain arrays, or that mix data types, often fail to vectorize. This is one concrete reason why data-oriented design, structuring data as arrays of values rather than arrays of objects, produces faster code. The transformation isn’t philosophical. It’s about matching your memory layout to what the hardware’s execution units actually consume.

What This Means

A for loop that looks like one thing to you looks like a multi-stage optimization problem to the toolchain and hardware beneath it. The compiler decides what code to generate based on provable properties of your data. The branch predictor decides whether to speculatively execute future iterations. The out-of-order engine decides what operations can run in parallel. The prefetcher decides what memory to load before you ask for it. SIMD units sit waiting for work that most loops don’t offer them in the right form.

The practical upshot is that the micro-level choices that seem least important, how you lay out your data in memory, whether your loop iterations are independent, whether you give the compiler enough information to prove aliasing doesn’t occur, are the ones that determine whether your code runs at a few percent of hardware peak or close to it. The loop syntax is almost irrelevant. The memory access pattern is almost everything.