The Spreadsheet That Nearly Sank Us

A founder I know spent four months building a reporting dashboard. Beautiful thing. Filters, exports, drill-downs. The kind of feature that makes a good screenshot for a deck. He showed it to his first ten enterprise prospects. Seven of them asked why the core data ingestion was so slow. Three asked if he could integrate with a tool he’d never heard of. Not one mentioned the dashboard.

He’d built the thing that felt concrete and safe. The unglamorous work of making the pipeline fast and reliable felt too risky to touch because he wasn’t sure he could solve it. So he built around it.

This is the most common mistake I see in early-stage product decisions, and it’s not about laziness or bad taste. It’s about a very human instinct to avoid the work that might expose a fatal flaw.

The Illusion of the Feature Priority Matrix

At some point someone will tell you to use a prioritization framework. RICE. MoSCoW. Weighted scoring. You’ll spend a Friday afternoon in a Notion doc assigning numbers to features and calculating what to build next. The output will feel rigorous.

It’s mostly theater.

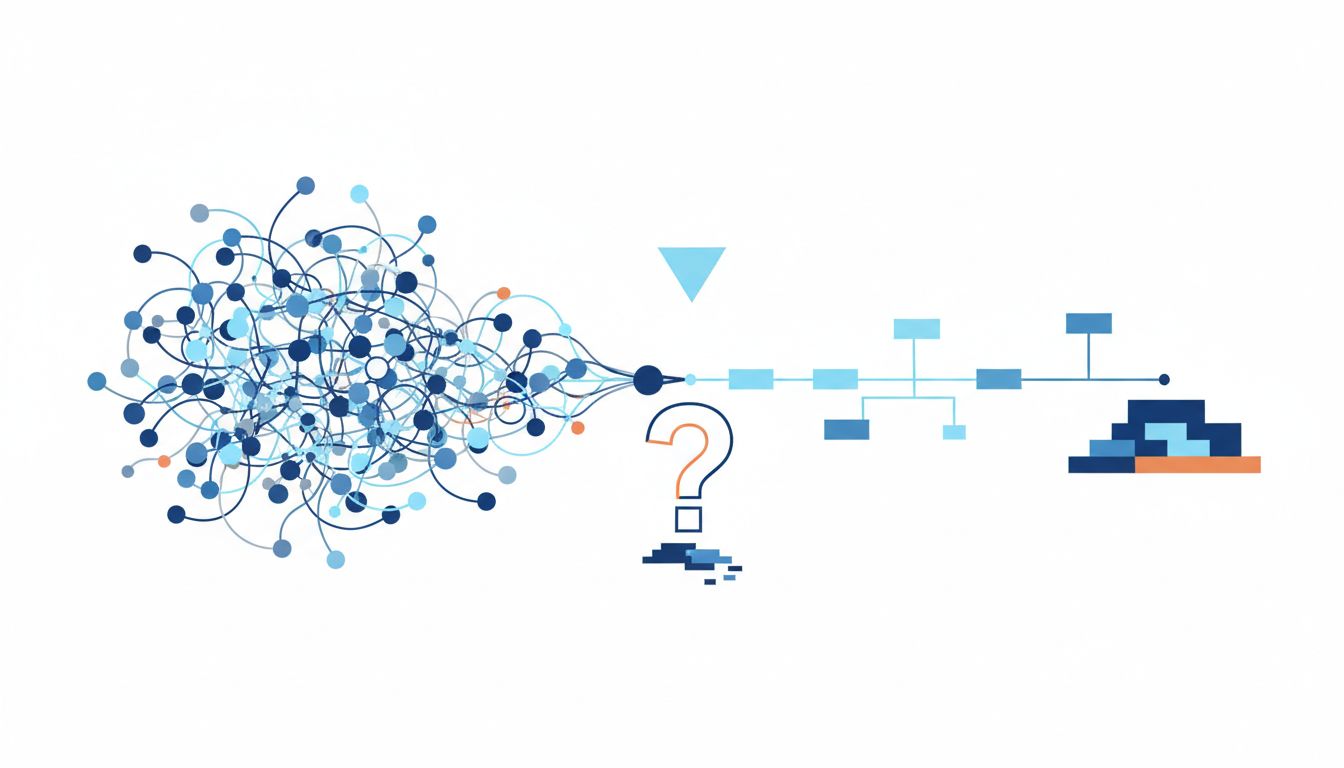

These frameworks are good at helping you rank features once you’ve already established that your core product works. They’re terrible at telling you what to build first because they treat all uncertainty as equal. A feature with a score of 80 that you know how to build and a feature with a score of 80 that might not be technically feasible look identical in a spreadsheet. In reality, they’re completely different bets.
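To make that concrete, here is a minimal sketch using the usual RICE formula (reach times impact times confidence, divided by effort). The feature names, the numbers, and the `feasibility_proven` flag are all made up for illustration; the flag is not part of RICE, which is the point.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    reach: float              # users affected per quarter (illustrative)
    impact: float             # relative impact per user
    confidence: float         # 0..1, confidence in the estimates above
    effort: float             # person-months
    feasibility_proven: bool  # hypothetical extra column; RICE never sees it


def rice(f: Feature) -> float:
    """RICE score: reach * impact * confidence / effort."""
    return f.reach * f.impact * f.confidence / f.effort


def unproven(features: list[Feature]) -> list[str]:
    """Names of features whose feasibility is still an open question."""
    return [f.name for f in features if not f.feasibility_proven]


dashboard = Feature("reporting dashboard", 200, 1.0, 0.8, 2, feasibility_proven=True)
pipeline = Feature("fast ingestion pipeline", 400, 2.0, 0.8, 8, feasibility_proven=False)

# Both score 80.0, so the spreadsheet ranks them as equals.
print(rice(dashboard), rice(pipeline))

# The column the score ignores is the one that separates the two bets.
print(unproven([dashboard, pipeline]))
```

The score is doing what it was designed to do. It just has no term for "we may not be able to build this at all," so that information has to live somewhere outside the ranking.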

The real question in early product development isn’t “what do users want most?” It’s “what do I still not know that could kill this company if I’m wrong?”

Risk as the Actual Organizing Principle

There’s a concept borrowed from project management called “risk-first development,” and while the name sounds bureaucratic, the instinct is exactly right. The thing you should build first is the thing whose failure would most severely compromise everything else.

Phrased differently: what assumption, if wrong, makes the rest of your roadmap irrelevant?

For some companies that’s a technical assumption. Can you actually process this data at the volume you need? Can the latency be low enough for real-time use? Can you build the integration the customer’s IT department will actually approve? For others it’s a behavioral assumption. Will users change a habit they’ve had for years? Will they trust a recommendation from a system they didn’t set up?

These are the things you build first. Not because they’re the most visible or the most impressive, but because until you know the answer, nothing else you build is standing on solid ground.

The Unhappy Path Is the Real Product

Here’s a test I’ve started applying to early product decisions: what happens when it breaks?

Most founders build for the happy path. The user signs up, completes onboarding, uses the feature as intended, gets value, comes back. That path is easy to build for because it’s the one you’ve imagined a thousand times. But the unhappy path is where you learn whether your product actually works in the world.

What happens when the data the user imports is malformed? What happens when two users try to edit the same thing at the same time? What happens when the external API you depend on goes down? What happens when the user doesn’t understand what they’re supposed to do?

I’m not arguing you should build every edge case before you ship. That’s a different failure mode. What I’m arguing is that if you haven’t thought through the unhappy paths for your most critical flow, you don’t actually know what you’re building yet. The product you ship is not the product that runs on your laptop with clean test data. When staging passes and production still catches fire, that problem starts in this exact planning gap.

Before you add a feature, map what happens when it fails. That exercise will tell you a lot about whether you should build it at all.
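One lightweight way to run that exercise is to write the failure modes down next to the planned response and see which ones are still blank. The sketch below is only an illustration: the failure modes come from the questions above, while the responses and the `unmapped` helper are hypothetical.

```python
from typing import Optional

# Failure modes for one critical flow, mapped to the planned response.
# None means nobody has decided what should happen yet.
unhappy_paths: dict[str, Optional[str]] = {
    "imported data is malformed": "reject the file and show which rows failed",
    "two users edit the same thing at once": None,
    "external API is down": "queue the request and retry later",
    "user does not understand the next step": None,
}


def unmapped(paths: dict[str, Optional[str]]) -> list[str]:
    """Return the failure modes that have no planned response yet."""
    return [failure for failure, response in paths.items() if response is None]


for failure in unmapped(unhappy_paths):
    print("No plan yet for:", failure)
```

If the list of blanks is long for your most critical flow, that is a signal about the flow, not about the tool.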

The Loudest Requests Are Usually the Wrong Ones

Here’s where the pressure comes from: customers. Investors. Your own co-founder who talked to a prospect at a conference.

You will always have a list of features that people are asking for. The trap is that the people asking loudest are usually your most engaged (and often most unusual) users, not your median customer. They have specific workflows, strong opinions, and time to give you feedback. They’re valuable, but they’re not representative.

Building for them first is a common enough failure that it deserves its own category. The loudest customer steering you off a cliff is a real phenomenon, and it’s particularly lethal in the early stage when you’re still figuring out who you’re actually building for.

The right filter isn’t “who asked for this” but “what is true about my customer’s problem that would still be true even if no one had thought to ask for it.” That distinction matters. Customers are good at telling you what hurts. They’re often unreliable at prescribing the fix.

Cutting the Scope Without Losing the Point

Once you’ve decided what to build, there’s still the question of how much of it to build. And this is where most teams overcomplicate things.

The instinct is to build the complete version of the feature before shipping it. Full configurability. All the edge cases handled. The settings panel. The help text. It feels unprofessional to ship something smaller.

The problem is that the full version encodes a lot of assumptions that might be wrong. You’ve assumed people want to configure it. You’ve assumed they need the help text (if they do, that might be a sign the feature is unclear, not underdocumented). You’ve spent time on things that might get ripped out entirely once you see how people actually use it.

The question isn’t “what is the complete version of this feature” but “what is the smallest version that would actually prove the core assumption wrong or right?” That’s meaningfully different from a minimum viable product in the worst sense of the phrase, where MVP becomes an excuse for shipping something broken. The goal is precision, not laziness. You’re trying to learn a specific thing, and you want to build exactly enough to learn it.

When Everything Genuinely Is Critical

Sometimes founders are right that multiple things are urgent. You’re in a competitive market, you have a customer who won’t convert without a specific feature, and you have an investor meeting in six weeks. Everything feels load-bearing.

In those cases I’d push back on the premise that you need to resolve this through prioritization at all. Sometimes the answer is sequencing, not ranking. What’s the right order of operations given your specific constraints?

If the feature the investor cares about and the feature the customer needs overlap at all, build there. If they don’t, you have a different problem: you might be trying to be two products at once. That’s a premature scaling problem more than a prioritization problem.

And if you genuinely can’t sequence things because they’re all equally urgent and equally foundational, you probably have a resourcing problem. You’ve committed to more than a small team can validate simultaneously. That’s a hard conversation to have, but it’s more honest than building four things at half speed and learning nothing from any of them.

What This Means

Prioritization frameworks are a useful forcing function, but they answer the wrong question in the early stages. What you need to build first is not the most requested feature or the easiest win. It’s the thing whose failure would undermine everything else.

Build to reduce uncertainty, not to accumulate features. Map the unhappy path before you write the first line of code. Be skeptical of the loudest customer requests and more skeptical of frameworks that treat all features as commensurable. Scope down until you’re building exactly what you need to learn what you need to learn.

The founders who get this right don’t have better taste or more experience. They’re just more honest about what they don’t know yet.