A startup I know spent three months migrating their primary infrastructure to a lower-cost cloud region. The compute savings were real, around 28% on their monthly bill. Then their on-call engineers started getting paged at 3am because latency to their European users had quietly crept from 60ms to 340ms. Customer support tickets about slowness doubled. Two senior engineers spent most of Q3 debugging region-specific networking quirks. By the time anyone ran the actual numbers, the migration had cost more than a year of the savings it was supposed to generate.

This story is not unusual. It is, in fact, the modal outcome for companies that chase regional pricing without accounting for the full cost surface.

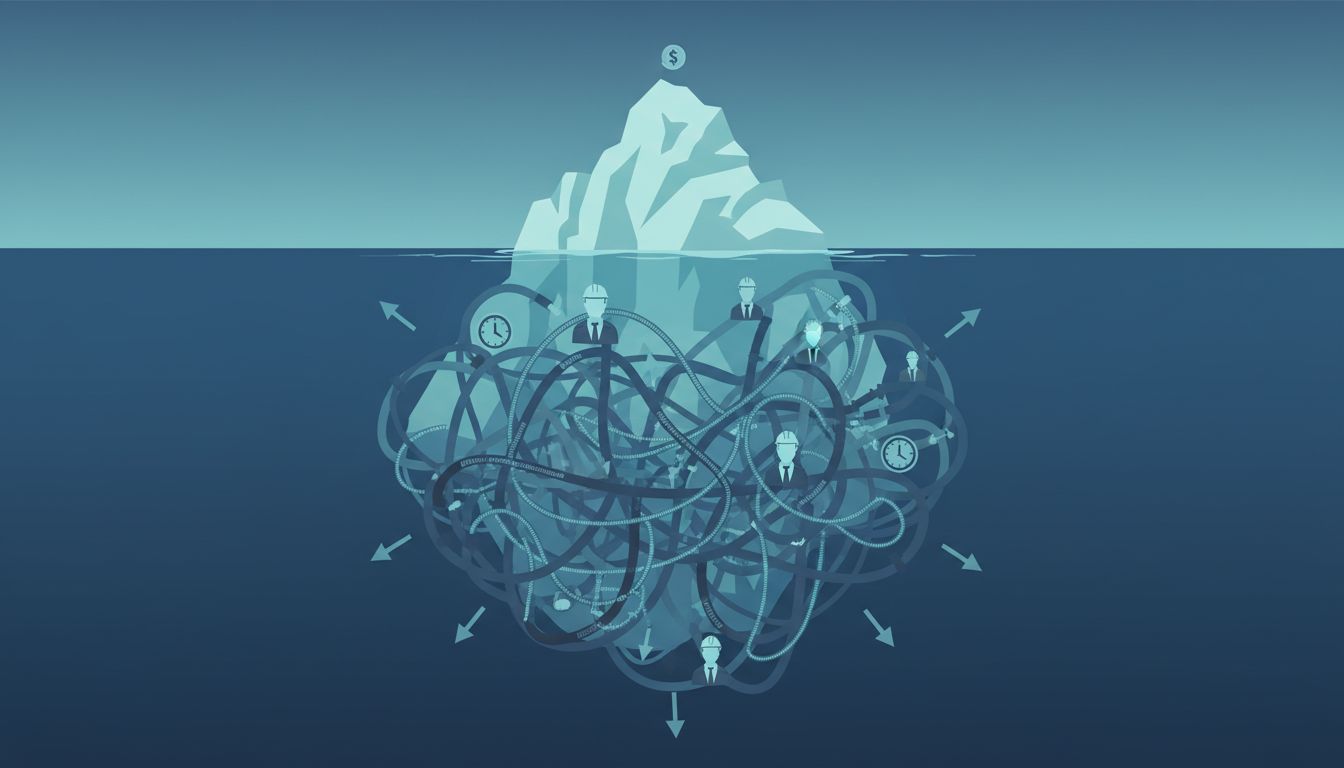

The Price Tag You See Is Not the Price You Pay

Cloud providers publish compute and storage rates per region, and those rates vary meaningfully. On-demand prices for a comparable instance type can differ by double-digit percentages from one region to the next, on AWS and GCP alike. On paper, the savings are real.

What the compute price comparison doesn’t show you is egress. Data transfer between regions, between a region and your CDN, and between a region and your users can easily dwarf the compute savings. AWS charges for data leaving a region to the internet no matter which region you’re in, so that line item doesn’t go away when you move. The hidden multiplier is the cross-region traffic you start generating when your new primary region no longer sits close to your other services, your vendors, or your users. Many companies don’t model this until they’ve already committed to the move.
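To see whether egress can eat the discount, you can work out the extra monthly transfer volume at which the added egress bill cancels the compute savings. This is a minimal sketch that assumes one flat per-GB rate and hypothetical figures; real transfer pricing is tiered and differs by region and destination, so substitute numbers from your own bill.

```python
# Break-even sketch: how much extra egress wipes out a compute discount?
# All figures are hypothetical placeholders, not published prices.


def break_even_egress_gb(compute_saving_usd: float,
                         egress_rate_usd_per_gb: float) -> float:
    """Extra GB/month at which added transfer cost equals the saving."""
    return compute_saving_usd / egress_rate_usd_per_gb


def net_monthly_saving(compute_saving_usd: float,
                       extra_egress_gb: float,
                       egress_rate_usd_per_gb: float) -> float:
    """Compute saving minus the new transfer cost the move creates."""
    return compute_saving_usd - extra_egress_gb * egress_rate_usd_per_gb


if __name__ == "__main__":
    saving = 12_000.0  # hypothetical: monthly compute reduction in USD
    rate = 0.02        # hypothetical: flat USD per GB of new transfer
    print("break-even GB/month:", break_even_egress_gb(saving, rate))
    print("net at 800 TB/month:", net_monthly_saving(saving, 800_000, rate))
```

If your projected new cross-region and internet transfer sits anywhere near the break-even volume, the compute discount is mostly illusory.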

Then there’s the managed services gap. The cheaper regions are almost always behind on service availability. If your architecture depends on a managed Kafka offering, a specific RDS version, or a newer GPU instance type, there’s a good chance it isn’t available in the region you’re moving to. So you either rebuild parts of your stack from scratch (expensive in engineering time) or you accept cross-region dependencies that introduce latency and failure modes your original architecture never had.
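One way to catch the gap early is to diff the managed services your architecture depends on against what the target region offers. In this sketch the service names and both region catalogs are hypothetical and maintained by hand; they are not read from any provider API. Whatever is left over is something you will either rebuild or reach across regions for.

```python
# Hypothetical dependency list and region catalogs, maintained by hand.
REQUIRED = {"managed-kafka", "postgres-16", "gpu-latest"}

REGION_CATALOG = {
    "current-region": {"managed-kafka", "postgres-16", "gpu-latest", "redis"},
    "cheaper-region": {"postgres-16", "redis"},
}


def service_gaps(required: set[str], region: str) -> set[str]:
    """Return the services the architecture needs that the region lacks."""
    available = REGION_CATALOG.get(region, set())
    return required - available


if __name__ == "__main__":
    for region in REGION_CATALOG:
        gaps = service_gaps(REQUIRED, region)
        print(region, sorted(gaps) if gaps else "no gaps")
```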

Latency Has a Dollar Value and Most Teams Don’t Calculate It

There’s solid research from Google, Akamai, and others showing that for e-commerce and SaaS products, increases in page load time correlate directly with conversion rate drops and user churn. The numbers vary by industry and product type, but the direction is consistent and the effect is not small.

When you move your primary compute region further from your users, you’re making a performance tradeoff. If your users are concentrated in the American Northeast and you move from us-east-1 to ap-southeast-1 to save on compute, you’ve added round-trip latency that no amount of CDN caching fixes for dynamic, authenticated requests. Those requests have to travel to your servers and back. Physics is not negotiable.
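The physics sets a floor you can compute. This sketch takes the great-circle (haversine) distance between approximate city coordinates and assumes light in fiber covers about 200,000 km/s, a common rule of thumb. The coordinates are illustrative: us-east-1 is in Northern Virginia and ap-southeast-1 is in Singapore. Real routes are longer than the great circle and add routing and queuing delay, so measured round trips will be higher than these floors.

```python
import math

EARTH_RADIUS_KM = 6371.0
FIBER_KM_PER_MS = 200.0  # assumption: ~200,000 km/s, roughly 2/3 of c

# Approximate (latitude, longitude) coordinates, chosen for illustration.
NEW_YORK = (40.71, -74.01)
N_VIRGINIA = (39.04, -77.49)   # where us-east-1 is located
SINGAPORE = (1.35, 103.82)     # where ap-southeast-1 is located


def great_circle_km(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Haversine distance between two (lat, lon) points given in degrees."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def min_rtt_ms(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Lower bound on round-trip time: there and back at fiber speed."""
    return 2 * great_circle_km(a, b) / FIBER_KM_PER_MS


if __name__ == "__main__":
    print(f"NY -> N. Virginia floor: {min_rtt_ms(NEW_YORK, N_VIRGINIA):.1f} ms")
    print(f"NY -> Singapore floor:   {min_rtt_ms(NEW_YORK, SINGAPORE):.1f} ms")
```

With these inputs the New York to Singapore floor comes out at roughly 150 ms per round trip before any real-world overhead, against a few milliseconds to Northern Virginia. Every uncached dynamic request pays that difference.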

The honest calculation requires you to put a dollar value on a 100ms latency increase. Most engineering teams never do this, partly because the data is hard to gather and partly because it forces an uncomfortable answer. If you’re a B2B SaaS company where users are session-heavy and latency-sensitive, the real cost of that latency delta could be measured in churn points. It is the same numeracy problem as uptime: 99.9% sounds impressive until you work out that it permits about 8.8 hours of downtime a year. The headline number looks fine until you work through what it actually means.
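Both halves of that arithmetic fit in a few lines. The uptime figure is exact; the latency side is a deliberately crude sketch that treats added churn points as lost revenue, and the revenue and churn numbers in the example are hypothetical. The churn increase itself has to come from your own conversion and retention data.

```python
HOURS_PER_YEAR = 365 * 24  # ignores leap years


def allowed_downtime_hours(availability: float) -> float:
    """Yearly downtime an availability target (e.g. 0.999) still permits."""
    return (1 - availability) * HOURS_PER_YEAR


def annual_latency_cost(annual_revenue_usd: float,
                        churn_increase_pts: float) -> float:
    """Crude estimate: revenue lost if added latency raises annual churn.

    churn_increase_pts is in percentage points and must come from your
    own data; this function only does the multiplication.
    """
    return annual_revenue_usd * churn_increase_pts / 100


if __name__ == "__main__":
    print(f"99.9% allows {allowed_downtime_hours(0.999):.2f} h/year down")
    # Hypothetical: $5M annual revenue, slower responses add 1.5 churn points.
    print(f"latency cost: ${annual_latency_cost(5_000_000, 1.5):,.0f}/year")
```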

The Engineering Tax Nobody Budgets For

Region-specific bugs are real and they are weird. Network behavior, DNS resolution quirks, BGP routing anomalies, hardware generation differences, clock synchronization tolerances: these vary between AWS regions in ways that are rarely documented and are usually discovered through painful experience. Senior engineers who have worked extensively in a primary region have built up intuitions about how that region behaves. Move to a new region and those intuitions fail.

The debugging overhead is not trivial. When something breaks in a region your team knows well, you have pattern-matching that accelerates diagnosis. In an unfamiliar region, every anomaly requires first-principles investigation. This is engineering time that costs real money, and it’s almost never included in the pre-migration savings estimate.

Vendor support compounds this. Many third-party services — monitoring tools, security vendors, database proxies — have their own region preferences and latency profiles. When your primary infrastructure is in a region that’s geographically or logistically distant from your vendors’ infrastructure, you’re adding integration complexity that your engineering team has to manage permanently, not just during migration.

When the Math Actually Works

None of this means cheaper regions are always the wrong choice. There are scenarios where they make clear sense.

If you’re running batch processing workloads, your latency tolerance is high. Jobs that run overnight, data pipelines that process logs, machine learning training runs — these don’t care if they’re 200ms further from your users. For pure compute-heavy background work, cheaper regions are often genuinely cheaper with no meaningful downside.

If you’re building a product specifically for users in a geography where cheaper regions are also closer, the calculation flips. A company building primarily for Indian users has good reason to run in ap-south-1 rather than us-east-1, and the pricing advantage is a bonus on top of the latency win.

Multi-region architectures that are designed from the start with regional separation in mind can also capture pricing benefits intelligently, routing different workload types to regions that are optimally priced for them. But this requires architectural intentionality upfront, not a post-hoc migration.
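The placement rule behind this can be sketched as picking, for each workload, the cheapest region whose latency to users fits that workload's tolerance. The region names, prices, and latencies below are hypothetical, and the sketch deliberately ignores egress and data locality: a batch job that reads data stored in another region still pays transfer costs, so a real model would add those terms.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Region:
    name: str
    hourly_price: float     # hypothetical normalized compute price
    rtt_to_users_ms: float  # measured from where your users actually are


@dataclass
class Workload:
    name: str
    max_rtt_ms: float       # latency tolerance; very large for batch jobs


def place(workload: Workload, regions: list[Region]) -> Optional[Region]:
    """Cheapest region whose latency to users fits the workload's budget."""
    eligible = [r for r in regions if r.rtt_to_users_ms <= workload.max_rtt_ms]
    return min(eligible, key=lambda r: r.hourly_price, default=None)


REGIONS = [
    Region("near-users", hourly_price=1.00, rtt_to_users_ms=20),
    Region("far-cheap", hourly_price=0.80, rtt_to_users_ms=220),
]

if __name__ == "__main__":
    for w in (Workload("checkout-api", max_rtt_ms=50),
              Workload("nightly-etl", max_rtt_ms=10_000)):
        region = place(w, REGIONS)
        print(w.name, "->", region.name if region else "no eligible region")
```

The latency-sensitive API stays near users, while the overnight job lands in the cheaper, more distant region, which is the split the paragraphs above describe.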

The failure mode is the one-to-one migration where a company takes an architecture designed for one region and moves it wholesale to a cheaper one, expecting the savings to materialize cleanly. They rarely do.

The Actual Calculation You Need to Run

Before any region migration, build a cost model that includes compute savings, egress delta, latency impact converted to a dollar value using your own conversion and churn data, engineering time for migration and ongoing regional maintenance, service availability gaps that require workarounds, and vendor integration costs. Most teams calculate the first item and stop.
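A minimal version of that model, assuming you can express each item as a monthly dollar figure plus a one-off migration cost, might look like this. The field names and the example numbers are hypothetical; the point is the shape of the calculation, not the values.

```python
from dataclasses import dataclass


@dataclass
class MigrationEstimate:
    """Monthly USD figures plus one one-off cost, all supplied by you.

    Positive cost fields reduce the benefit of the move.
    """
    compute_savings: float
    egress_delta: float             # extra transfer spend after the move
    latency_cost: float             # revenue lost to slower responses
    ongoing_eng_cost: float         # regional debugging and maintenance
    service_gap_cost: float         # workarounds for missing managed services
    vendor_integration_cost: float
    one_off_migration_cost: float   # engineering time to move, paid once

    def monthly_net(self) -> float:
        """Compute savings minus every recurring cost the move adds."""
        return (self.compute_savings - self.egress_delta - self.latency_cost
                - self.ongoing_eng_cost - self.service_gap_cost
                - self.vendor_integration_cost)

    def payback_months(self) -> float:
        """Months to recover the one-off cost; infinite if never."""
        net = self.monthly_net()
        return self.one_off_migration_cost / net if net > 0 else float("inf")


# Hypothetical inputs for illustration only.
estimate = MigrationEstimate(
    compute_savings=20_000, egress_delta=6_000, latency_cost=5_000,
    ongoing_eng_cost=4_000, service_gap_cost=2_000,
    vendor_integration_cost=1_000, one_off_migration_cost=120_000,
)

if __name__ == "__main__":
    naive = estimate.one_off_migration_cost / estimate.compute_savings
    print(f"compute-only payback: {naive:.0f} months")
    print(f"full-model payback:   {estimate.payback_months():.0f} months")
```

With these made-up inputs, compute savings alone suggest a six-month payback, while the full model stretches it to five years. If the monthly net goes negative, the migration never pays for itself.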

Cloud cost optimization is a real discipline and the savings can be real. But the savings come from right-sizing instances, using reserved capacity intelligently, eliminating waste in your architecture, and understanding where your actual spending goes. Region arbitrage is the version that looks obvious in a slide and bites you six months later.

The startup I mentioned at the beginning eventually migrated back to their original region. The rollback cost another quarter of engineering time. Their cloud bill is higher than it would have been if they’d never moved. The lesson they came away with was the obvious one, just learned the expensive way: the number on the pricing page is not the number you pay.