A founder I know spent six months celebrating a Net Promoter Score in the low 60s. Solid. Respectable. Investors liked it in the deck. Then she looked at her 12-month cohort retention and realized she was losing 40% of customers by month eight. The promoters were real. They just weren’t staying.

NPS and churn measure different universes. One captures sentiment at a moment. The other captures the accumulated weight of every interaction, every support ticket, every time the product did or didn’t do what the customer needed it to do. When they diverge, believe churn. Here’s why, and what churn is actually telling you.

1. High NPS With Rising Churn Means You Have a Timing Problem

NPS surveys tend to go out right after a good moment. A successful onboarding. A resolved ticket. A feature launch email. Customers feel good, they score you a 9, and that score sits in your dashboard for months while reality quietly shifts underneath it.

Churn doesn’t care about the moment. It measures whether, when the renewal arrives or the credit card gets charged again, the customer decided the product was worth it. That decision is made in the accumulated middle of their experience, not the highlighted peaks you chose to survey around.

If your NPS is high but churn is accelerating, your timing is off. You’re measuring the wrong moments and drawing comfort from them. Find out what’s happening between those good moments. That’s where the product is actually failing.

2. The Customers Who Churn Quietly Are Your Most Honest Signal

Promoters fill out NPS surveys. Churned customers mostly don’t. The people who leave without a word, who just stop logging in and let the subscription lapse, are giving you the most honest data point available: they decided your product wasn’t worth the friction of even canceling properly.

Passive churn (where customers just don’t renew or stop engaging without formally canceling) is a graveyard of real product feedback. These customers weren’t angry enough to complain. They were indifferent enough to leave. Indifference is harder to fix than anger, and NPS barely registers it, because indifferent customers rarely bother to answer NPS surveys.

The move is to build exit interviews into your offboarding, aggressively, and to separate passive churners from active ones. The passive churners are telling you the product never became essential. That’s a product-market fit problem, not a customer success problem.
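Splitting churners starts with a rule for who counts as which. Here is a minimal Python sketch. It assumes hypothetical per-customer fields (`subscription_active`, `canceled_explicitly`, `days_since_last_login`) and an assumed 30-day inactivity cutoff. None of these names or thresholds come from the article, so map them to whatever your billing and product analytics actually record.

```python
from collections import Counter
from dataclasses import dataclass

# Assumption: 30 days without a login counts as "stopped engaging".
INACTIVITY_DAYS = 30


@dataclass
class Customer:
    # Hypothetical fields; map them to your own billing/analytics data.
    customer_id: str
    subscription_active: bool
    canceled_explicitly: bool
    days_since_last_login: int


def classify(c: Customer) -> str:
    """Label a customer as 'active_churn', 'passive_churn', or 'retained'."""
    if c.canceled_explicitly:
        # Went through a cancel flow: the easiest group to reach for an exit interview.
        return "active_churn"
    if not c.subscription_active or c.days_since_last_login >= INACTIVITY_DAYS:
        # Let the subscription lapse, or went silent without canceling.
        return "passive_churn"
    return "retained"


customers = [
    Customer("a", subscription_active=False, canceled_explicitly=True, days_since_last_login=3),
    Customer("b", subscription_active=False, canceled_explicitly=False, days_since_last_login=60),
    Customer("c", subscription_active=True, canceled_explicitly=False, days_since_last_login=45),
    Customer("d", subscription_active=True, canceled_explicitly=False, days_since_last_login=2),
]
counts = Counter(classify(c) for c in customers)
print(counts["active_churn"], counts["passive_churn"], counts["retained"])  # 1 2 1
```

Customer `c` is still paying but has gone quiet. Counting that as passive churn is a judgment call, and some teams would track it as an at-risk account instead.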

3. Churn Segments Reveal Whether You Have the Wrong Customers

Aggregate churn is almost useless. Segmented churn is where the real story lives. When you break churn down by acquisition channel, company size, industry, or use case, you often find that one segment is churning at three times the rate of another. That’s not a retention problem. That’s a targeting problem.
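The segmentation itself takes only a few lines of code. This Python sketch assumes you can export one `(segment, churned)` pair per customer over a fixed window. The channel names and counts below are made-up toy numbers, not data from any company.

```python
from collections import defaultdict


def churn_by_segment(customers):
    """Return {segment: churn rate} from (segment, churned) pairs."""
    totals = defaultdict(int)
    churned = defaultdict(int)
    for segment, did_churn in customers:
        totals[segment] += 1
        churned[segment] += int(did_churn)
    return {segment: churned[segment] / totals[segment] for segment in totals}


# Toy data: 20 referral customers (2 churned), 20 paid-social customers (6 churned).
customers = [("referral", i < 2) for i in range(20)] + [("paid_social", i < 6) for i in range(20)]

rates = churn_by_segment(customers)
aggregate = sum(did_churn for _, did_churn in customers) / len(customers)

print(f"aggregate: {aggregate:.0%}")  # aggregate: 20%
print(f"referral: {rates['referral']:.0%}")  # referral: 10%
print(f"paid_social: {rates['paid_social']:.0%}")  # paid_social: 30%
print(round(rates["paid_social"] / rates["referral"], 1))  # 3.0
```

The blended 20% looks like one number to fix. The split shows one channel churning at three times the rate of the other, and that is a targeting question.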

Many B2B SaaS companies discover, after doing this analysis, that the customers acquired through broad top-of-funnel campaigns churn at dramatically higher rates than those who came through referrals or specific content channels. The broad campaigns brought in people who were never a real fit. They signed up because the pitch was compelling, not because the product solved an urgent problem for them. The wrong customer nearly killed Stripe, Slack, and YouTube, and it has killed a lot of companies that never got famous enough for anyone to write the postmortem.

NPS will not surface this. A customer who was a bad fit might still score you an 8 early on. They just leave later.

4. Time-to-Churn Tells You Where Your Onboarding Is Lying

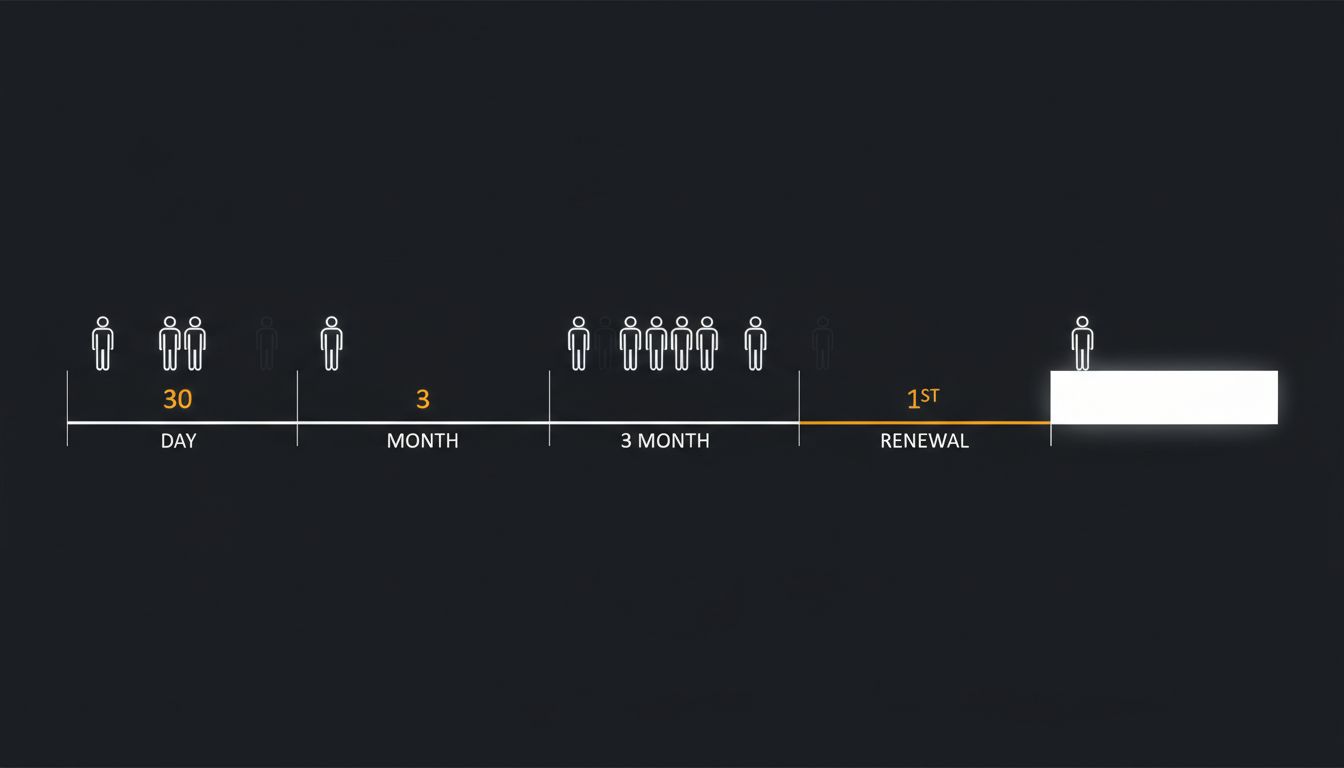

If you plot churn against time since acquisition, you’ll usually see spikes. Common ones: around day 30, around month three, and around the first renewal. Each spike has a different cause and requires a different fix.

The day-30 spike is almost always an onboarding failure. Customers signed up, didn’t reach the moment where the product clicked for them, and drifted away. This is the “activation” problem, and it’s one of the most solvable problems in early-stage SaaS if you’re willing to look at it honestly. The product promised something in the sales process that the onboarding experience couldn’t deliver fast enough.

The month-three spike often reflects feature exhaustion. Customers used the parts they needed, ran into the things it couldn’t do, and concluded the product wasn’t deep enough. The first-renewal spike is almost pure value perception. They’re asking: did this product generate enough value to justify this invoice? If you can’t point to a clear answer, they’ll answer for you.
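To find those spikes, compute churn for each tenure month against the customers who were still around to churn, not against the whole starting base. One way to do this is a discrete-time hazard (life-table style) calculation. That technique is swapped in here; the article only says to plot churn against time since acquisition. This Python sketch assumes each customer is exported as a hypothetical `(tenure_months, churned)` pair. Here `tenure_months` is the month the customer left in or, for customers still active, the number of months observed so far. Month 1 stands in for the day-30 spike. The toy data is shaped to produce the three spikes described above and is not real data.

```python
from collections import Counter


def monthly_hazard(records, max_month):
    """Churn rate in each tenure month among customers still present at its start."""
    churn_events = Counter(t for t, churned in records if churned)
    hazard = {}
    for month in range(1, max_month + 1):
        # Everyone whose tenure reaches this month was "at risk" of churning in it.
        at_risk = sum(1 for t, _ in records if t >= month)
        if at_risk:
            hazard[month] = churn_events[month] / at_risk
    return hazard


# Toy cohort of 100 customers observed for 12 months.
records = (
    [(1, True)] * 15                                   # early (day-30) drop-off
    + [(2, True)] * 3
    + [(3, True)] * 10                                 # month-three drop-off
    + [(m, True) for m in range(4, 12) for _ in range(2)]
    + [(12, True)] * 12                                # first annual renewal
    + [(12, False)] * 44                               # still active at month 12
)

hazard = monthly_hazard(records, max_month=12)
spikes = sorted(sorted(hazard, key=hazard.get, reverse=True)[:3])
print(spikes)  # [1, 3, 12]
```

Dividing by the at-risk count matters here. In raw counts, 12 departures at month 12 look smaller than 15 at month 1. But only 56 customers made it to month 12, so the renewal spike is the sharpest of the three.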

5. NPS Optimization Is Often a Local Maximum

Once a team starts optimizing for NPS, they get very good at generating positive survey moments. They time the surveys better. They build automated touchpoints designed to boost scores. The number goes up. Retention sometimes doesn’t move at all.

This is a real trap. Improving NPS and improving retention are correlated at a population level but easily decoupled at the execution level. A customer success team that’s rewarded on NPS will, rationally, focus on the things that move NPS. That means proactive outreach before the survey, quick resolution of visible problems, and friendly interactions that feel good in the moment. None of that is bad. But it’s not the same as making the core product more indispensable.

The companies that get this right treat NPS as a lagging indicator of product quality, not a target to manage. They use it the way you’d use a temperature reading: informative, but not something you try to change by holding a heat lamp next to the thermometer.

6. Churn Forecasting Forces Honesty That NPS Never Does

Building a real churn forecast requires you to commit to assumptions about why customers leave and when. You have to name the failure modes. You have to estimate their frequency. You have to assign dollar values to them. That process is uncomfortable in a way that running an NPS survey is not, which is exactly why it’s more useful.
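Even a spreadsheet-grade version of that exercise makes the stakes concrete. This minimal Python sketch assumes you have made three estimates for each failure mode: how many accounts it applies to, a monthly churn probability for those accounts, and their average MRR. The failure-mode names and numbers are hypothetical placeholders. The sketch also naively assumes the modes don't overlap.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    name: str
    accounts_exposed: int      # accounts this failure mode applies to
    monthly_churn_prob: float  # your estimate, per exposed account per month
    mrr_per_account: float     # average monthly recurring revenue of those accounts


def expected_mrr_lost(modes):
    """Expected MRR lost per month for each failure mode (assumes no overlap)."""
    return {m.name: m.accounts_exposed * m.monthly_churn_prob * m.mrr_per_account for m in modes}


# Hypothetical inputs for illustration only.
modes = [
    FailureMode("never_activated", 200, 0.10, 50.0),
    FailureMode("missing_feature", 120, 0.04, 80.0),
    FailureMode("unclear_renewal_value", 80, 0.05, 150.0),
]

losses = expected_mrr_lost(modes)
# Rank failure modes by dollars at risk, largest first.
for name, loss in sorted(losses.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: ${loss:,.0f}/month")
print(f"total: ${sum(losses.values()):,.0f}/month")
```

With these placeholder inputs, the activation gap accounts for about $1,000 of the roughly $1,984 expected monthly loss. The value of writing it down is that every one of those inputs becomes an explicit claim someone can challenge.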

Teams that build churn models, even rough ones, tend to develop sharper intuitions about retention than teams that rely on NPS and gut feel. The model forces questions: Which cohort is most at risk? What leading indicators precede a churn event? Which customer behaviors predict renewal? You can’t answer those questions with a quarterly survey score.

Getting pricing wrong kills startups, and so does ignoring the compounding math of churn. A 5% monthly churn rate sounds manageable until you realize you have to replace the equivalent of your entire customer base every 20 months just to stay flat, and that nearly half of any starting cohort is gone within a year. NPS won’t make that math visible. A churn forecast will.
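The arithmetic behind the 5% example takes a few lines to check. This Python sketch assumes a constant monthly churn rate, with no expansion revenue or reactivations. The example implies those simplifications but doesn't state them.

```python
def churn_math(monthly_churn: float, months: int = 12) -> tuple[float, float]:
    """Return (share of a starting cohort left after `months`, months to replace 100% of the base)."""
    surviving = (1 - monthly_churn) ** months  # churn compounds on the shrinking cohort
    replacement_months = 1 / monthly_churn     # gross adds needed to equal the whole base
    return surviving, replacement_months


surviving, replacement = churn_math(0.05)
print(f"lost within a year: {1 - surviving:.0%}")                 # lost within a year: 46%
print(f"months to replace the base: {replacement:.0f}")           # months to replace the base: 20
print(f"cohort left at month 20: {churn_math(0.05, 20)[0]:.0%}")  # cohort left at month 20: 36%
```

The last line adds a nuance to "replacing your entire customer base". After 20 months, about a third of the original cohort is still there. But over those 20 months you have had to add new customers equal to 100% of the base just to stand still.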

Stop using NPS as a proxy for customer health. Use it for what it’s actually good at: a rough directional signal, surveyed sparingly, weighted against behavioral data. Churn is the behavioral data. It doesn’t ask customers how they feel. It watches what they do. Trust the behavior.