A founder I know spent six months interviewing her best customers. She flew to their offices, bought them lunch, ran structured sessions with a research consultant. The result was a detailed portrait of people who loved her product deeply. Then she looked at her churn data and realized she was losing 40 percent of new signups before they ever reached the features those loyal customers were raving about. She had built a thorough understanding of survivorship bias.

Your best customers are fluent in your product. They’ve forgotten the confusion they felt at the start. They’ve built workarounds for the things that don’t work. They’ve stopped noticing the friction because they navigate around it automatically. The people who churned in month one haven’t done any of that. They hit a wall and left. And that wall is probably where most of your growth problem lives.

1. Early churners experience your product as a stranger would

Long-term customers carry years of accumulated context. They know which menu items are mislabeled, which workflows require a workaround, which features to ignore. That knowledge is invisible to them because it’s been internalized. When you interview them, they describe a product that mostly works because, for them, it mostly does.

Someone who signs up, gets stuck, and cancels within three weeks has none of that. They arrived with a specific job they needed done, ran into something that blocked them, and made a rational decision to stop paying. Their experience is the closest thing you have to an unfiltered encounter with your own onboarding and core value proposition. That’s not a failure event. That’s a data source.

2. Their exit timing tells you where the actual friction lives

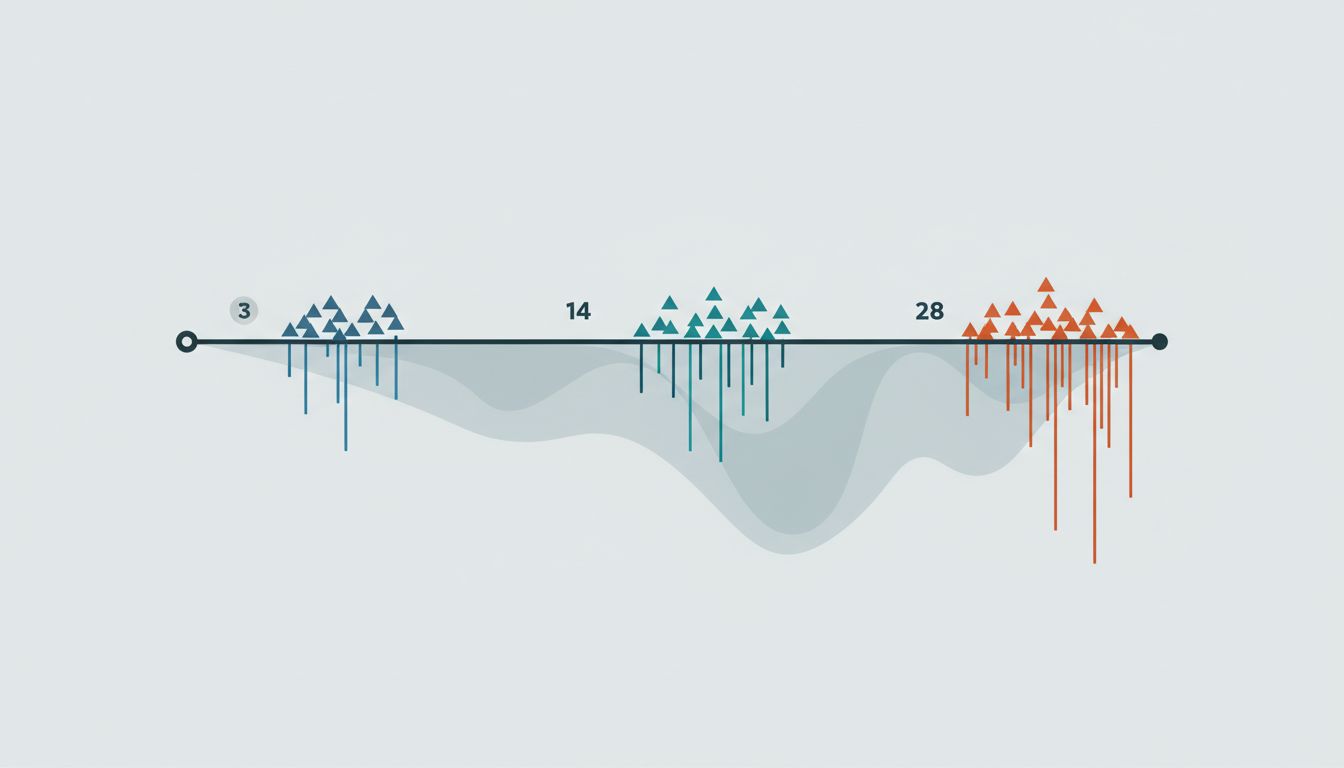

The specific week or day someone churns maps to a specific moment in the customer journey. If you’re losing people on day three, something in initial setup is failing. If you’re losing them at the end of week two, they probably tried to do something real with your product and couldn’t. If the spike is at day 28, right before the first invoice, they evaluated after a full trial and decided the value wasn’t there.

This is more actionable than most of what NPS surveys return. An aggregate satisfaction score doesn’t tell you where people fell off the cliff. Churn timing does: it tells the truth your NPS score hides. When you break churn down by the exact point in the customer lifecycle where it occurs, you stop guessing about which part of your product is broken and start knowing.
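To make that breakdown concrete, here is a minimal sketch in Python that counts cancellations by the number of days between signup and cancel. The record layout (a signup date paired with a cancel date, or `None` for active customers), the function name `churn_by_tenure_day`, and the sample dates are all hypothetical; in practice the data would come from your billing or subscription system.

```python
from collections import Counter
from datetime import date


def churn_by_tenure_day(customers):
    """Count cancellations by days elapsed between signup and cancel.

    `customers` is an iterable of (signup_date, cancel_date) pairs,
    where cancel_date is None for customers who are still active.
    """
    counts = Counter()
    for signup, cancelled in customers:
        if cancelled is None:
            continue  # still active, so not a churn event
        counts[(cancelled - signup).days] += 1
    return counts


# Hypothetical records, for illustration only.
customers = [
    (date(2024, 1, 1), date(2024, 1, 4)),   # left during setup
    (date(2024, 1, 2), date(2024, 1, 5)),   # left during setup
    (date(2024, 1, 3), date(2024, 1, 31)),  # left just before first invoice
    (date(2024, 1, 5), None),               # still active
]

counts = churn_by_tenure_day(customers)

# Print a crude text histogram, one row per tenure day with churn.
for day, n in sorted(counts.items()):
    print(f"day {day:>2}: {'#' * n} ({n})")
```

Spikes in the output point at a specific stage of the journey. With real volumes you would likely group days into weeks and compare signup cohorts, but the raw per-day count is usually enough to show where the wall is.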

3. They left before they had a reason to be polite

Long-tenured customers have a relationship with you. They’ve been on calls with your support team. They’ve emailed your CEO about a bug and gotten a personal response. They feel some degree of loyalty and, more importantly, they feel social pressure to be generous in their assessments. When you ask them what could be better, they tell you small things.

Someone who churned in month one owes you nothing. They signed up for a product, it didn’t work for them, they left. That emotional neutrality makes them unusually honest. In exit surveys and follow-up calls with early churners, you tend to hear the direct version: the thing that didn’t work, stated plainly, without the softening layer that long-term customers apply automatically. The feedback stings more, which is usually a sign it’s more accurate.

4. Retention optimization based on survivors is systematically misleading

When you study what your best customers have in common and then try to replicate those conditions, you’re doing product development on a filtered sample. The customers you’re learning from are the ones who made it through a gauntlet that most people didn’t. You’re optimizing for the characteristics of people who survived, not for removing the barriers that eliminated everyone else.

This shows up in feature prioritization constantly. A team interviews power users, learns they love a specific advanced capability, and doubles down on it. Meanwhile, most new signups never reach that feature because they churn two weeks earlier at a completely different step. Survivorship bias doesn’t just distort your perception of the product. It distorts where you spend engineering time. This dynamic is part of why startups find product-market fit mostly by accident: the signal from the people who stayed drowns out the signal from the much larger group who didn’t.

5. The real cost isn’t the subscription revenue

Founding teams tend to think about early churn as lost MRR. That framing undervalues the problem. Someone who cancels in month one also never refers anyone. They never expand into a higher tier. They don’t become a case study or show up as a success story on your website. And in many categories, they write the review on G2 or leave the App Store rating that the next thousand prospects will read before signing up.

The compounding cost of early churn is acquisition efficiency. If you’re losing 35 to 40 percent of new customers before they reach their first meaningful outcome with your product, the CAC on every customer you retain is effectively carrying the cost of all the ones you didn’t. Fixing month-one retention doesn’t just spread the same acquisition spend across more retained customers. It makes every other growth metric easier to hit.
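The arithmetic behind that claim can be sketched in a few lines of Python. This is a simplification under stated assumptions: acquisition spend is the same for every signup, and an early churner contributes nothing toward recovering it. The function name `effective_cac` and the $300 figure are hypothetical.

```python
def effective_cac(cac_per_signup, early_churn_rate):
    """Acquisition cost per retained customer.

    Assumes every signup costs the same to acquire and that early
    churners return none of that spend, so the total cost is spread
    across only the fraction of signups that stay.
    """
    if not 0 <= early_churn_rate < 1:
        raise ValueError("early_churn_rate must be in [0, 1)")
    return cac_per_signup / (1 - early_churn_rate)


# Hypothetical nominal CAC of $300 per signup.
nominal = 300.0
for rate in (0.40, 0.35, 0.20):
    cost = effective_cac(nominal, rate)
    print(f"early churn {rate:.0%}: ${cost:,.2f} per retained customer")
```

At 40 percent early churn, each retained customer carries about 1.67 times the nominal acquisition cost, since 1 / (1 - 0.40) is roughly 1.67. Halving early churn to 20 percent brings that multiplier down to 1.25 without touching the marketing budget.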

6. They can still be reached, and some of them will tell you exactly what happened

The window for getting honest exit data is short but real. Customers who churned within the last 30 to 60 days remember their experience clearly, haven’t developed ill will, and often respond to a direct, no-pressure email asking what went wrong. The response rate is lower than you’d get from active customers, but the quality of what you learn is often higher.

The emails that work are short and explicit about what you’re not asking for: you’re not trying to win them back, you’re not going to send them a discount code, you just want to understand what didn’t work. That framing disarms the social transaction. You get the direct answer more often than you’d expect. A few of those conversations are worth more than a hundred NPS responses from people who stayed.