In late July 2012, Knight Capital Group began deploying new trading software to its production servers. Seven of the eight servers received the update correctly. The eighth did not. When markets opened on August 1, that eighth server began executing a dormant piece of code, an old routine called “Power Peg” that had been used years earlier for a different purpose. Over the next 45 minutes, the server bought and sold millions of shares at unfavorable prices, accumulating positions Knight had no intention of holding. The loss totaled $440 million. The firm was effectively done.

The bug itself was not subtle, and it was fixable. The engineering post-mortem identified it clearly: a deployment process that lacked verification, a flag that activated legacy code, a rollback procedure that made things worse before anyone understood what was happening. Knight’s engineers understood the mechanism of the failure within hours.

What they almost certainly did not understand, and what the post-mortems rarely surface, is why those conditions existed in the first place.

Software bugs cluster into two categories that look identical from the outside. The first kind is a genuine mistake: a logic error, an off-by-one, a null pointer dereference that slipped past review. These bugs exist because programming is difficult and humans are fallible. Fixing them is straightforward: find the error, correct it, ship the fix.

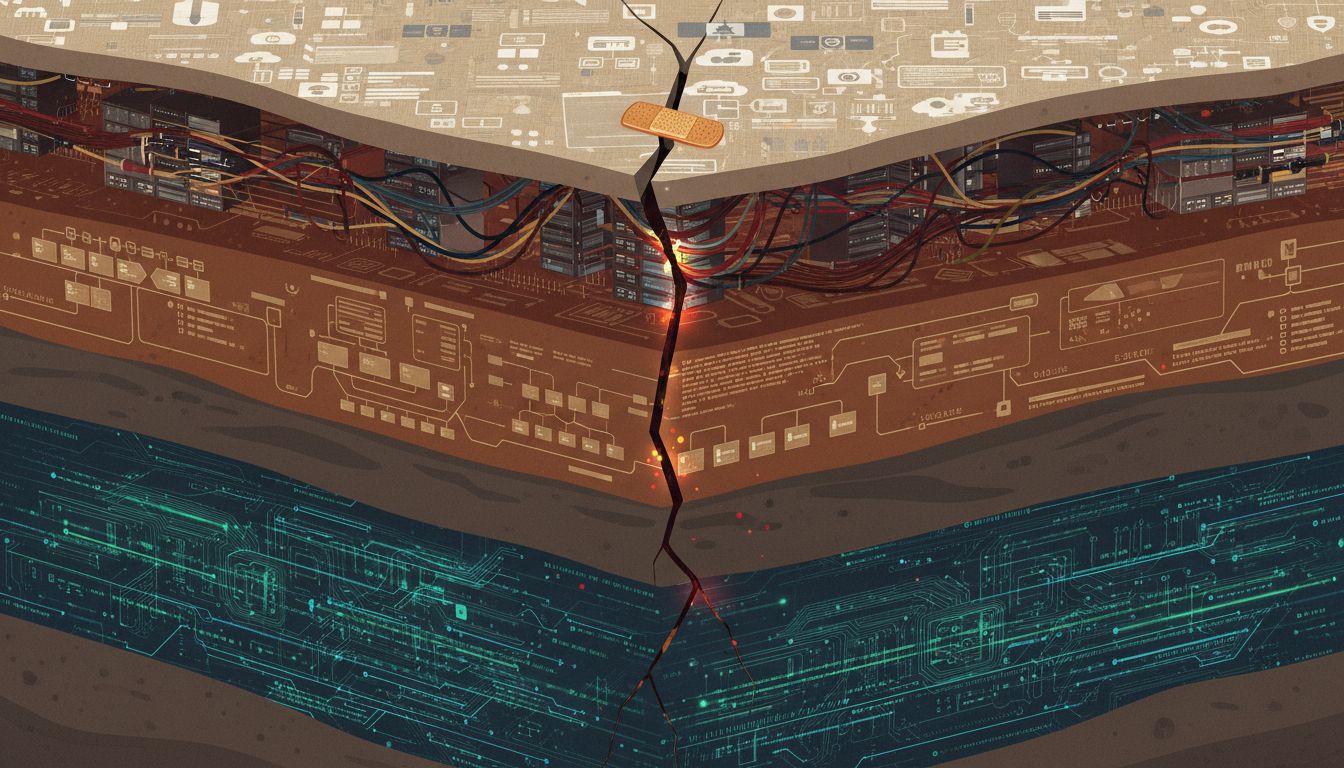

The second kind is a symptom. The code is wrong, but it is wrong because of something upstream: a deadline that compressed review time, an organizational boundary that prevented one team from knowing what another team had deployed, an incentive structure that rewarded shipping velocity over verification. The server Knight Capital failed to update was this kind of bug. The error was a flag. The cause was a deployment culture that had accumulated debt quietly for years.

The distinction matters enormously because these two categories require completely different responses. Fix the first kind and you’re done. Fix the second kind without addressing the upstream cause, and you’ve bought yourself time until the next symptom appears.

The reason engineers so often address the first without the second comes down to what the job rewards. Debugging is legible work. You can point to the commit, show the test that now passes, close the ticket. Organizational archaeology is not legible in the same way. Asking “why did we not have a deployment verification step?” leads to conversations about process and ownership and history that are uncomfortable, slow, and difficult to assign to a sprint. The incentives push toward the patch.
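The verification step in question does not have to be elaborate. As a minimal sketch, assuming each server can report a SHA-256 digest of the build it is running, a post-deploy check might compare every reported digest against the expected one and refuse to proceed if any server is stale. The server names, build strings, and the `find_stale_servers` helper below are hypothetical illustrations, not a reconstruction of Knight's tooling.

```python
import hashlib
from typing import Dict, List


def artifact_digest(payload: bytes) -> str:
    """Return the SHA-256 hex digest of a build artifact."""
    return hashlib.sha256(payload).hexdigest()


def find_stale_servers(expected_digest: str, deployed: Dict[str, str]) -> List[str]:
    """List the servers whose reported digest differs from the expected build."""
    return sorted(
        name for name, digest in deployed.items() if digest != expected_digest
    )


# Hypothetical fleet: three servers took the new build, one was missed.
new_build = artifact_digest(b"order-router build 2")
old_build = artifact_digest(b"order-router build 1")
fleet = {
    "srv-a": new_build,
    "srv-b": new_build,
    "srv-c": new_build,
    "srv-d": old_build,
}

stale = find_stale_servers(new_build, fleet)
if stale:
    print("Deployment incomplete, do not enable the new flag:", stale)
```

The check is cheap to write. Whether it exists, and whether anyone owns it, is the organizational question.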

There’s a compounding factor: the engineer fixing the bug is almost never the person who wrote it, and often isn’t the person who created the conditions that allowed it. Knight Capital had been growing its algorithmic trading infrastructure for years. The Power Peg routine had been dormant since 2003. The deployment process that failed in 2012 had probably worked adequately for a long time under different conditions, different scale, different teams. The engineer who ran that deployment in the summer of 2012 was not the architect of the system they were operating.

This is the normal state of software organizations, not the exception. Code accumulates. The people who wrote it leave or move to other teams. The reasoning behind decisions (why this flag exists, why that service was split off, why this particular timing assumption was made) lives in documents that don’t get updated, in Slack threads that scroll into the past, or nowhere at all. The institutional memory that would let you distinguish “this is how it works” from “this is a landmine someone left” degrades faster than the codebase does.

Knight Capital’s situation had one additional layer that made it representative of a broader problem in high-stakes systems: the bug was only catastrophic in combination with external conditions. The Power Peg code alone wouldn’t have caused harm. The deployment error alone wouldn’t have caused harm. The absence of a real-time position limit alert wouldn’t have caused harm. The harm came from the intersection of all three, plus the specific market conditions on that particular morning. Complex system failures almost always look like this. Charles Perrow documented it in his 1984 book “Normal Accidents,” arguing that in sufficiently complex, tightly coupled systems, accidents aren’t aberrations. They’re an emergent property of the system’s structure. The question isn’t whether they’ll happen. It’s which combination of factors will trigger them first.
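Of the three conditions above, the missing position limit is the easiest to picture in code. What follows is a rough sketch of a gross-exposure guard that halts order flow once a cap would be breached. The `PositionGuard` class, its threshold, and the symbols are all assumptions made for illustration; a real trading system's risk controls are far more involved.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class PositionGuard:
    """Hypothetical guard that halts trading when gross exposure exceeds a cap."""

    max_gross_exposure: float
    positions: Dict[str, float] = field(default_factory=dict)
    halted: bool = False

    def gross_exposure(self) -> float:
        """Sum of absolute notional across all symbols."""
        return sum(abs(v) for v in self.positions.values())

    def submit(self, symbol: str, signed_notional: float) -> bool:
        """Accept an order only if it keeps exposure within the cap.

        Once the cap would be breached the guard trips and rejects
        everything until a human resets it.
        """
        if self.halted:
            return False
        projected = dict(self.positions)
        projected[symbol] = projected.get(symbol, 0.0) + signed_notional
        if sum(abs(v) for v in projected.values()) > self.max_gross_exposure:
            self.halted = True
            return False
        self.positions = projected
        return True


guard = PositionGuard(max_gross_exposure=1_000_000)
accepted = [
    guard.submit("AAA", 600_000),   # within the cap
    guard.submit("BBB", -300_000),  # short position, still within the cap
    guard.submit("CCC", 200_000),   # would breach the cap, trips the guard
    guard.submit("AAA", -100_000),  # rejected because the guard is halted
]
print(accepted, guard.halted)
```

A guard like this does not remove either of the other two conditions. It only shrinks the window in which their intersection can do damage.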

The engineering response to this, when organizations are being honest with themselves, is to build toward what’s now called chaos engineering: the deliberate injection of failures to find the intersections before they find you. Netflix popularized this with Chaos Monkey, which randomly terminated production servers starting around 2010. The logic was direct. If the system will eventually fail in unpredictable ways, you’re better off manufacturing small failures on your own schedule than waiting for large ones on the market’s schedule. It’s a methodology that implicitly accepts Perrow’s argument and works backward from it.
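To make the idea concrete, here is a toy sketch of failure injection, not Chaos Monkey itself: a wrapper that makes a dependency fail at a configurable rate, so the caller's fallback path gets exercised on purpose. The names `with_chaos`, `fetch_quote`, and `quote_with_fallback` are hypothetical, and the random generator is seeded so the run is repeatable.

```python
import random
from typing import Callable


class InjectedFailure(Exception):
    """Raised by the chaos wrapper in place of a real outage."""


def with_chaos(
    func: Callable[[str], float], failure_rate: float, rng: random.Random
) -> Callable[[str], float]:
    """Wrap func so that it fails with probability failure_rate."""

    def wrapper(symbol: str) -> float:
        if rng.random() < failure_rate:
            raise InjectedFailure(f"injected failure for {symbol}")
        return func(symbol)

    return wrapper


def fetch_quote(symbol: str) -> float:
    """Stand-in for a real dependency; always returns a fixed price."""
    return 100.0


def quote_with_fallback(
    fetch: Callable[[str], float], symbol: str, last_known: float
) -> float:
    """Use the live quote when available, else fall back to the last known one."""
    try:
        return fetch(symbol)
    except InjectedFailure:
        return last_known


rng = random.Random(0)
flaky_fetch = with_chaos(fetch_quote, failure_rate=0.3, rng=rng)
results = [quote_with_fallback(flaky_fetch, "AAA", 99.5) for _ in range(1000)]
fallbacks = results.count(99.5)
print(f"{fallbacks} of {len(results)} calls took the fallback path")
```

If the fallback path had been broken, the injected failures would surface that in a controlled run instead of during a real outage.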

But chaos engineering addresses resilience, not comprehension. It tells you what breaks. It doesn’t tell you why the fragility was there to begin with.

The more durable answer is organizational, and it’s harder to ship. It requires treating context as a first-class artifact alongside code: keeping records of not just what decisions were made but why, and making the reasoning behind architectural choices as searchable as the architecture itself. It requires post-mortems that are genuinely blameless, not in the sense of protecting people from accountability, but in the sense of staying curious long enough to trace a bug back to its origin rather than stopping at the first fixable error. The naming of things, the documentation of intent, the compression of reasoning into forms that survive personnel turnover are all part of this, and none of them show up in a sprint velocity metric.

Knight Capital was acquired by Getco the following year. The engineers who patched the deployment process moved on to other companies, where they carry the lesson of the mechanism. The organizational conditions that allowed Power Peg to sit dormant for nine years, undocumented and dangerous, may or may not have traveled with them.

The bug gets fixed. The question is whether anyone learns why it was there.