The Simple Version

Some bugs are invisible at small scale and catastrophic at large scale, not because the code changes, but because the assumptions baked into the code stop being true once enough people use it simultaneously.

Why Your Test Suite Won’t Catch These

When you write tests, you’re modeling a world. Ten users, maybe a hundred in a load test, all behaving more or less rationally, in sequence or in small bursts. That model is fine for catching logic errors and broken integrations. It is completely useless for a specific class of failure that only emerges from genuine concurrency at scale.

The technical term for this class is a race condition: a situation where the correctness of your program depends on the timing of events that you don’t control. At low traffic, the timing usually works out. Operations complete before the next one starts. Resources get freed before anyone else needs them. The code behaves as if it’s running in a tidy, orderly sequence, because mostly it is.

Add a million users, and timing stops being your friend. Two requests that would never have overlapped in testing suddenly overlap constantly. And the failure only happens in that precise window of overlap, which might be 20 milliseconds wide.

You cannot reproduce this in your staging environment, because your staging environment has 12 users on a good day.

The Classic Failure: The Double-Spend

Here’s a concrete example that breaks more systems than people admit. You’re building a ticketing platform. A user clicks “buy” on the last available ticket. Your code does this:

1. Check whether tickets are available

2. If yes, deduct one from inventory

3. Complete the purchase

At low traffic, this works perfectly. At high traffic, two users click “buy” at exactly the same moment. Both requests hit step 1 at the same time. Both see one ticket available. Both proceed to step 2. You’ve just sold the same ticket twice.
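The overlap is easier to see when the two requests are replayed by hand instead of being left to a real scheduler. This Python sketch uses a hypothetical in-memory `Inventory` class in place of a real database and forces the bad ordering every time: both requests finish step 1 before either starts step 2.

```python
from dataclasses import dataclass, field


@dataclass
class Inventory:
    """Hypothetical in-memory stand-in for a ticket inventory table."""
    tickets: int = 1
    sold_to: list = field(default_factory=list)

    def check(self) -> bool:
        # Step 1: read the current stock level.
        return self.tickets > 0

    def deduct(self, user: str) -> None:
        # Step 2: write the new stock level and record the sale.
        self.tickets -= 1
        self.sold_to.append(user)


def replay_overlap() -> Inventory:
    inv = Inventory(tickets=1)
    # Both requests run step 1 before either runs step 2,
    # which is exactly the window that real concurrency opens up.
    alice_sees_stock = inv.check()
    bob_sees_stock = inv.check()
    if alice_sees_stock:
        inv.deduct("alice")
    if bob_sees_stock:
        inv.deduct("bob")
    return inv


inv = replay_overlap()
print(inv.tickets, inv.sold_to)  # -1 ['alice', 'bob']
```

Each step is individually correct; the bug lives entirely in the ordering between them.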

This isn’t a hypothetical. Variants of this failure have hit retail flash sales, concert ticketing systems, and airline seat reservations. The code wasn’t wrong in any obvious sense. It did exactly what it was told. The problem was that it treated a sequence of operations as atomic (meaning: indivisible, happening all at once) when it wasn’t.

The fix, if you’re curious, involves database-level locking or atomic operations: instead of “check then update,” you issue a single command that says “decrement inventory by one where inventory > 0, and tell me how many rows changed.” If zero rows changed, someone beat you to it. The check and the update happen as one inseparable step.

When Caches Become Liars

Caching is one of the most effective performance tools in software engineering. It’s also one of the most reliable sources of scale-specific bugs.

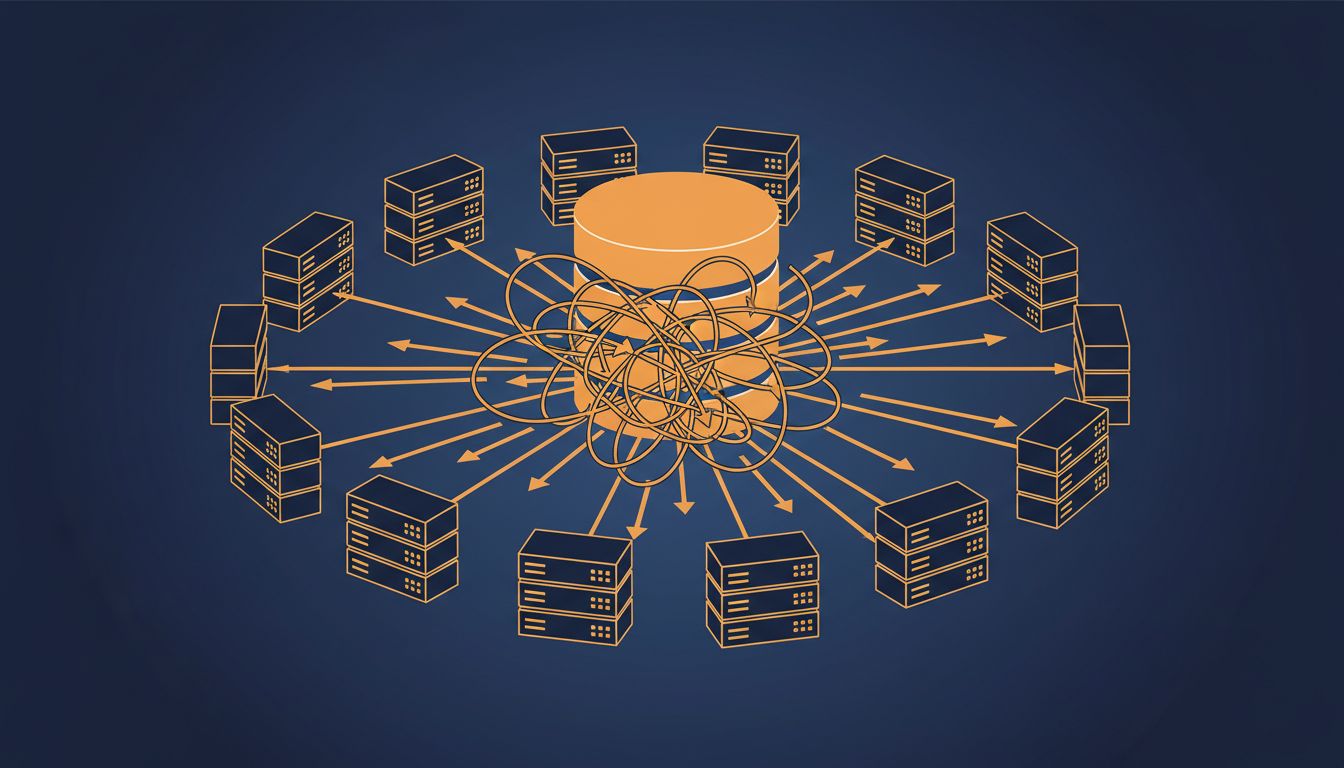

The idea is simple: the results of expensive operations (database queries, API calls, complex aggregations) get stored in a fast, temporary store like Redis or Memcached. Subsequent requests read from the cache instead of recomputing. This works beautifully until you hit what’s called the thundering herd problem, also known as a cache stampede.

Imagine your cache holds the result of a heavy database query, and that cache entry expires. If you have ten users, maybe one or two requests arrive before the entry is regenerated, so the database sees one or two extra queries. If you have a million users, thousands of requests arrive in that gap, each sees a cache miss, and each runs the expensive query the cache was supposed to prevent. Your database, which was coasting along nicely, suddenly gets hit with thousands of identical heavy queries at once. It falls over. Your site goes down.

The cache was supposed to protect the database. At scale, the cache expiration itself became an attack vector.

Solutions exist (regeneration locks, probabilistic early expiration, request coalescing), but they all require you to have anticipated the problem. Most teams don’t, because it never showed up in testing.
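A regeneration lock is the simplest of these to sketch, and it is a basic form of request coalescing. The Python below is a minimal, single-process illustration assuming a thread-per-request server; `CoalescingCache` and `expensive_query` are hypothetical names. On a miss, the first caller for a key recomputes while concurrent callers wait on a per-key lock and then reuse the fresh value. Across many server instances you would need a shared lock instead, which this sketch does not attempt.

```python
import threading
import time
from typing import Callable, Dict, Hashable, Tuple


class CoalescingCache:
    """Hypothetical cache-aside helper: on a miss, one caller per key recomputes
    while concurrent callers for that key wait and reuse the result.
    Single-process only; other server instances still regenerate on their own."""

    def __init__(self, ttl_seconds: float) -> None:
        self._ttl = ttl_seconds
        self._data: Dict[Hashable, Tuple[float, object]] = {}  # key -> (expires_at, value)
        self._locks: Dict[Hashable, threading.Lock] = {}
        self._locks_guard = threading.Lock()  # protects creation of per-key locks

    def _lock_for(self, key: Hashable) -> threading.Lock:
        with self._locks_guard:
            return self._locks.setdefault(key, threading.Lock())

    def _lookup(self, key: Hashable) -> Tuple[bool, object]:
        entry = self._data.get(key)
        if entry is not None and entry[0] > time.monotonic():
            return True, entry[1]
        return False, None

    def get(self, key: Hashable, loader: Callable[[], object]) -> object:
        hit, value = self._lookup(key)
        if hit:
            return value
        with self._lock_for(key):
            # Re-check: another caller may have refilled the entry while we waited.
            hit, value = self._lookup(key)
            if hit:
                return value
            value = loader()  # only the first caller to find a miss gets here
            self._data[key] = (time.monotonic() + self._ttl, value)
            return value


calls = 0
calls_guard = threading.Lock()


def expensive_query() -> str:
    """Hypothetical stand-in for the heavy database query."""
    global calls
    with calls_guard:
        calls += 1
    time.sleep(0.1)  # simulate a slow query so the callers overlap
    return "report"


cache = CoalescingCache(ttl_seconds=60)
threads = [
    threading.Thread(target=cache.get, args=("report", expensive_query))
    for _ in range(50)
]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(calls)  # 1
```

Fifty overlapping requests produce one query instead of fifty. The cost is latency for the waiting callers, which is usually far cheaper than a database outage.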

State That Forgets It’s Shared

Another category involves global or shared state that developers treat as if it’s local. A counter that tracks active sessions. A flag that controls feature rollout. A configuration value that gets read once at startup and then cached in memory.

At small scale, these feel fine. At scale, you might have fifty server instances all maintaining their own copy of what they think is shared truth. A user toggles a setting on server instance 7. Their next request goes to instance 23, which has no idea. The setting appears not to have saved. This isn’t data loss in the database sense; it’s a consistency failure across distributed state.
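A toy model makes the instance 7 / instance 23 story concrete. In this Python sketch, `Instance` objects stand in for server processes and `SharedStore` is a hypothetical stand-in for a database or cache that every instance reaches over the network; both names are assumptions for illustration. Writing to per-process memory loses the setting from the other instance’s point of view, while writing and reading through the shared store does not.

```python
from typing import Dict, Optional


class SharedStore:
    """Hypothetical stand-in for a shared database or cache all instances can reach."""

    def __init__(self) -> None:
        self._settings: Dict[str, bool] = {}

    def put(self, key: str, value: bool) -> None:
        self._settings[key] = value

    def get(self, key: str) -> Optional[bool]:
        return self._settings.get(key)


class Instance:
    """Hypothetical server process with its own private memory."""

    def __init__(self, name: str, store: SharedStore) -> None:
        self.name = name
        self.store = store
        self.local: Dict[str, bool] = {}  # per-process memory, invisible to other instances

    # Buggy pattern: treats process memory as if it were shared.
    def toggle_local(self, key: str, value: bool) -> None:
        self.local[key] = value

    def read_local(self, key: str) -> bool:
        return self.local.get(key, False)

    # Safer pattern: one shared source of truth for every instance.
    def toggle_shared(self, key: str, value: bool) -> None:
        self.store.put(key, value)

    def read_shared(self, key: str) -> bool:
        return bool(self.store.get(key))


store = SharedStore()
instance_7, instance_23 = Instance("7", store), Instance("23", store)

instance_7.toggle_local("dark_mode", True)
print(instance_23.read_local("dark_mode"))   # False: the setting "didn't save"

instance_7.toggle_shared("dark_mode", True)
print(instance_23.read_shared("dark_mode"))  # True
```

In a real deployment every `read_shared` call would be a network round trip, which is exactly the kind of coordination cost that agreement requires.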

The deeper issue is that distributed systems don’t naturally agree on facts. Getting them to agree requires explicit coordination, and coordination has costs. As your system grows from one server to many, any assumption that two processes share memory becomes a lie. Clocks fail in a related way: even the current time becomes something distributed systems can’t agree on without careful design.

The Failure Mode Nobody Budgets For

There’s a meta-problem underneath all of these: scale-specific bugs are expensive to find, expensive to fix, and nearly impossible to justify spending time on before they happen. They require production traffic to surface, which means they only become visible after launch, after growth, often during your biggest traffic spike (a product launch, a viral post, a sale).

The standard engineering response is to invest in observability before you need it. Distributed tracing, structured logging, anomaly detection on your metrics. Not because you know what will break, but because when something breaks at 3am under a traffic spike, you want to be able to see which code path is melting and why.
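As one concrete piece of that, here is a minimal structured-logging sketch using Python’s standard `logging` module: each record is emitted as a single JSON line, so a log pipeline can filter on fields instead of grepping prose. The logger name `checkout` and the context fields `request_id`, `route`, and `duration_ms` are hypothetical choices, not a standard schema.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    # Hypothetical context fields this sketch expects callers to pass via `extra=`.
    CONTEXT_FIELDS = ("request_id", "route", "duration_ms")

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "ts": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "msg": record.getMessage(),
        }
        # `extra={...}` on a logging call sets these as attributes on the record.
        for key in self.CONTEXT_FIELDS:
            if hasattr(record, key):
                payload[key] = getattr(record, key)
        return json.dumps(payload)


def make_logger(stream=sys.stdout) -> logging.Logger:
    logger = logging.getLogger("checkout")
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.handlers = [handler]  # replace any earlier handlers
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


log = make_logger()
log.info(
    "purchase failed: sold out",
    extra={"request_id": "r-123", "route": "/buy", "duration_ms": 41},
)
```

The point is less the format than the fields: at 3am, “show me every `/buy` request slower than 500 ms” is a query, not an archaeology project.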

The teams that handle this well aren’t smarter. They’ve just built software that fails loudly and informatively instead of silently and mysteriously. That’s a design choice you make deliberately, usually before you need it, or painfully after.

Scale doesn’t change what your code does. It changes whether your code’s assumptions about the world are still true. Most of the time, at scale, they aren’t.