The engineers who built Betamax were right. Their format had better picture quality than VHS and a more robust cassette mechanism. Sony had every reason to believe the better product would win. It didn’t. By the mid-1980s, VHS held roughly 60% of the market and Betamax was finished as a consumer format. The lesson most people take from this story is about marketing. The real lesson is about what “better” actually means to buyers.

Quality Is Defined at the Point of Purchase, Not the Point of Engineering

The Betamax versus VHS outcome wasn’t a fluke or a failure of consumer taste. It was a preview of how technology markets actually work. JVC, which developed VHS, made a deliberate choice to license its format widely and prioritize recording length over picture fidelity. Rental stores needed tapes that could hold a full movie. Betamax initially couldn’t. VHS could, and JVC made sure every major manufacturer could build a VHS deck.

This pattern repeats with uncomfortable regularity. Google’s search engine was technically superior to AltaVista and Yahoo when it launched in the late 1990s, but that’s the exception, not the template. More often, the product that wins is the one that solved the right subset of problems for the right distribution partners at the right moment. The product with the longer feature list or the cleaner architecture frequently loses.

The reason is simple: most buyers aren’t evaluating products in isolation. They’re evaluating them against switching costs, social proof, and whatever is already installed or already working. A product that is 20% technically better but requires retraining a team, converting existing files, or renegotiating vendor contracts isn’t 20% better from the buyer’s perspective. It may not be better at all. Switching costs are invisible until you try to leave, and by the time buyers calculate them honestly, the second-best product often looks like the safer choice.

Distribution Is a Feature

Microsoft didn’t win the office productivity wars in the 1990s because Word was superior to WordPerfect or Excel was better than Lotus 1-2-3. WordPerfect was dominant and beloved by legal professionals and power users. Lotus had genuine technical depth. Microsoft won in large part because Windows gave it a distribution channel that competitors couldn’t replicate. Nearly every new PC shipped with Windows, and Microsoft had strong incentives to make its own applications run best on its own operating system.

This is the part that product-centric companies consistently underweight. Distribution isn’t a go-to-market afterthought. It’s a core competitive variable, often more durable than technical advantages. A patent expires. A feature can be copied. A distribution relationship, an OEM deal, a platform dependency, a deeply embedded workflow: these compound over time rather than erode.

The same logic applies in consumer software. Facebook wasn’t the first social network. Friendster launched in 2002 and had millions of users before Facebook existed. MySpace had more users than Facebook as late as 2008. What Facebook had was a growth strategy that spread through college networks systematically, then a mobile strategy that arrived at the right moment, and then a data advantage that made its advertising product increasingly difficult to match. Not better features. Better distribution mechanics, better timing, and a feedback loop that reinforced itself.

The Innovator’s Curse: Being Early and Right

There’s a specific variant of this dynamic worth examining separately. Companies that are technically correct but arrive too early often lose to companies that arrive at the right moment with a good-enough product.

General Magic, founded in 1990, built technology that was a credible prototype of what smartphones would eventually become. The company had an all-star founding team, real technical innovation, and a clear vision. It also had no viable distribution, no mature wireless infrastructure to build on, and no mass market capable of understanding the product. It collapsed. A decade later, Palm, then BlackBerry, then Apple arrived into an environment where the infrastructure existed and the consumer psychology had shifted.

Being right too early is economically equivalent to being wrong. The market doesn’t reward accurate predictions. It rewards solutions that fit existing infrastructure, existing buyer behavior, and existing price expectations well enough to spread. A product optimized for a world that doesn’t yet exist will lose to a product optimized for the world as it is, even if the first product is objectively superior in a technical sense.

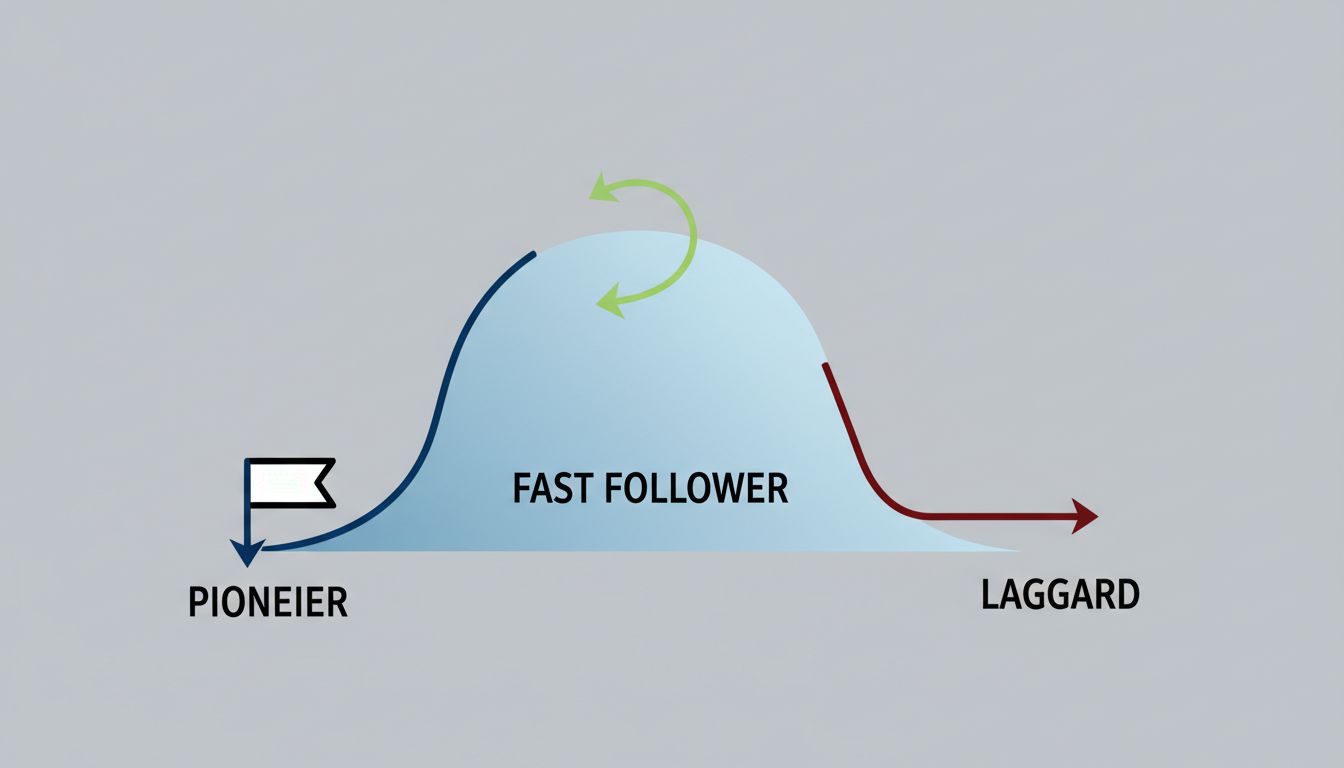

Why Number Two Has Structural Advantages

There’s an underappreciated financial argument for arriving second with a good-enough product. The pioneer has to educate the market, fund the infrastructure, absorb the failed iterations, and convince early adopters who are a small fraction of eventual buyers. The fast follower inherits much of that investment at little cost.

Amazon didn’t invent online retail. eBay didn’t invent person-to-person commerce. Google didn’t invent the search engine. In each case, a pioneer did the expensive work of proving the concept, and a fast follower arrived with a cleaner execution, better timing, and the advantage of learning from the pioneer’s mistakes. The fast follower also competes in a market where buyers already understand the category, which dramatically lowers the cost of conversion.

This doesn’t mean technical quality is irrelevant. It means technical quality is necessary but not sufficient, and that the threshold for “good enough” is lower than engineers typically assume. A product that clears the threshold of competence and then wins on distribution, timing, or network effects will beat a product that clears the threshold of excellence but lacks those advantages.

What This Means for Product Strategy

The practical implication isn’t that companies should stop trying to build good products. The implication is that product teams need to be honest about what problem they’re actually solving and for whom.

A product built for expert users who will appreciate its technical depth is a different product than one built to spread through an organization via the path of least resistance. Both can succeed. But a product designed with expert users in mind, then sold to procurement committees and IT departments that care primarily about compatibility and support, will consistently underperform its technical merits.

The companies that navigate this well tend to do one thing clearly: they define “better” from the buyer’s position, not the builder’s. That means accounting for switching friction, for the learning curve, for whether the product fits into existing workflows or demands that workflows change. The product that asks the least of its buyers while delivering enough value to justify the switch will usually beat the product that demands the most in exchange for the greatest reward.

Betamax was better. It lost anyway. The lesson isn’t that quality doesn’t matter. The lesson is that quality is only one variable in a system where distribution, timing, and buyer psychology carry equal or greater weight. Product teams that internalize this tend to build things that actually ship and spread, rather than things that win arguments in design reviews and lose in the market.