The AI community has a branding problem, and it’s not the one you think. The problem isn’t hype, exactly. It’s selective memory. When researchers describe large language models as systems that “understand context” or “reason about code,” they’re right in a limited sense, but they’re also describing something that compiler engineers have built, refined, and shipped in production for fifty years.

This matters practically. If you’re a developer evaluating AI code tools, an engineering manager deciding where to invest, or just someone trying to cut through the noise, understanding what’s genuinely new versus what’s been rebranded helps you make better decisions.

What a Compiler Actually Does to Your Code

Most developers have a fuzzy mental model of compilation: you write code, the compiler turns it into machine code, done. The reality is that between your source file and the executable sits a sophisticated analysis and transformation pipeline that would look at home in a modern AI system description.

Take LLVM, the compiler infrastructure that powers Clang, Rust’s compiler backend, and Swift. When LLVM processes your code, the front end first lowers it into an intermediate representation, LLVM IR. LLVM then runs that IR through a series of optimization passes. Constant folding evaluates expressions at compile time rather than runtime, so if you write x = 2 * 3 * y, the compiler rewrites it as x = 6 * y before your program ever runs. Common subexpression elimination recognizes when you’ve computed the same value twice and keeps only one copy. Inlining replaces a function call with the function body itself when the compiler estimates that will be faster.
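To make constant folding concrete, here is a minimal sketch in Python. It is not how LLVM implements the pass (LLVM works on its own IR, not on source text); it uses Python's standard `ast` module on Python source purely as an illustration, and the names `ConstantFolder` and `fold` are made up for this example. It assumes Python 3.9 or later for `ast.unparse`.

```python
import ast
import operator

# Only a few integer operators are handled; a real pass covers far more cases.
_OPS = {ast.Add: operator.add, ast.Sub: operator.sub, ast.Mult: operator.mul}


class ConstantFolder(ast.NodeTransformer):
    """Replace `<int> op <int>` with its value, working bottom-up."""

    def visit_BinOp(self, node):
        self.generic_visit(node)  # fold the operands first
        op = _OPS.get(type(node.op))
        left, right = node.left, node.right
        if (
            op is not None
            and isinstance(left, ast.Constant)
            and isinstance(right, ast.Constant)
            and type(left.value) is int
            and type(right.value) is int
        ):
            folded = ast.Constant(value=op(left.value, right.value))
            return ast.copy_location(folded, node)
        return node


def fold(source):
    """Parse source text, fold integer constants, and return new source text."""
    tree = ConstantFolder().visit(ast.parse(source))
    ast.fix_missing_locations(tree)
    return ast.unparse(tree)


print(fold("x = 2 * 3 * y"))  # x = 6 * y
```

Note that this sketch leaves `y * 2 * 3` alone, because the expression parses as `(y * 2) * 3` and neither multiplication has two constant operands. Production compilers reassociate such expressions where the language rules allow it.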

None of this requires the program to run. The compiler reasons about what your code will do by analyzing its structure. That’s static analysis, and it’s the same fundamental idea behind tools that now get marketed as AI-powered code review.

Dead Code Elimination and the Training Data Problem

One of the most instructive compiler techniques is dead code elimination. The compiler builds a control flow graph of your program, works out which parts can be reached from the entry point, and identifies code that can never execute or whose results are never used. It removes that code before generating the binary. Your binary gets smaller and faster without you doing anything.

This sounds trivial until you think about what it requires: the compiler must understand the meaning of your program well enough to know what parts are necessary. It builds a model of your code’s behavior and uses that model to make decisions.
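The unreachable-code half of this can be sketched as a graph traversal. The example below is a simplified illustration, not any particular compiler's implementation: the control flow graph is a plain dictionary from block name to successor names, and the block names are hypothetical. Removing computations whose results are never used needs additional data-flow analysis that is not shown here.

```python
from collections import deque


def reachable_blocks(cfg, entry):
    """Breadth-first search over the control flow graph from the entry block."""
    seen = {entry}
    queue = deque([entry])
    while queue:
        block = queue.popleft()
        for successor in cfg.get(block, ()):
            if successor not in seen:
                seen.add(successor)
                queue.append(successor)
    return seen


def eliminate_unreachable(cfg, entry):
    """Return a copy of the graph containing only blocks reachable from entry."""
    live = reachable_blocks(cfg, entry)
    return {
        block: [s for s in successors if s in live]
        for block, successors in cfg.items()
        if block in live
    }


# Hypothetical function: "orphan" has no incoming edge, so it can never run.
cfg = {
    "entry": ["check"],
    "check": ["then", "exit"],
    "then": ["exit"],
    "orphan": ["exit"],
    "exit": [],
}
print(sorted(eliminate_unreachable(cfg, "entry")))  # ['check', 'entry', 'exit', 'then']
```

The traversal is deterministic: given the same graph, it always removes the same blocks, which is the property the comparison with statistical tools turns on.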

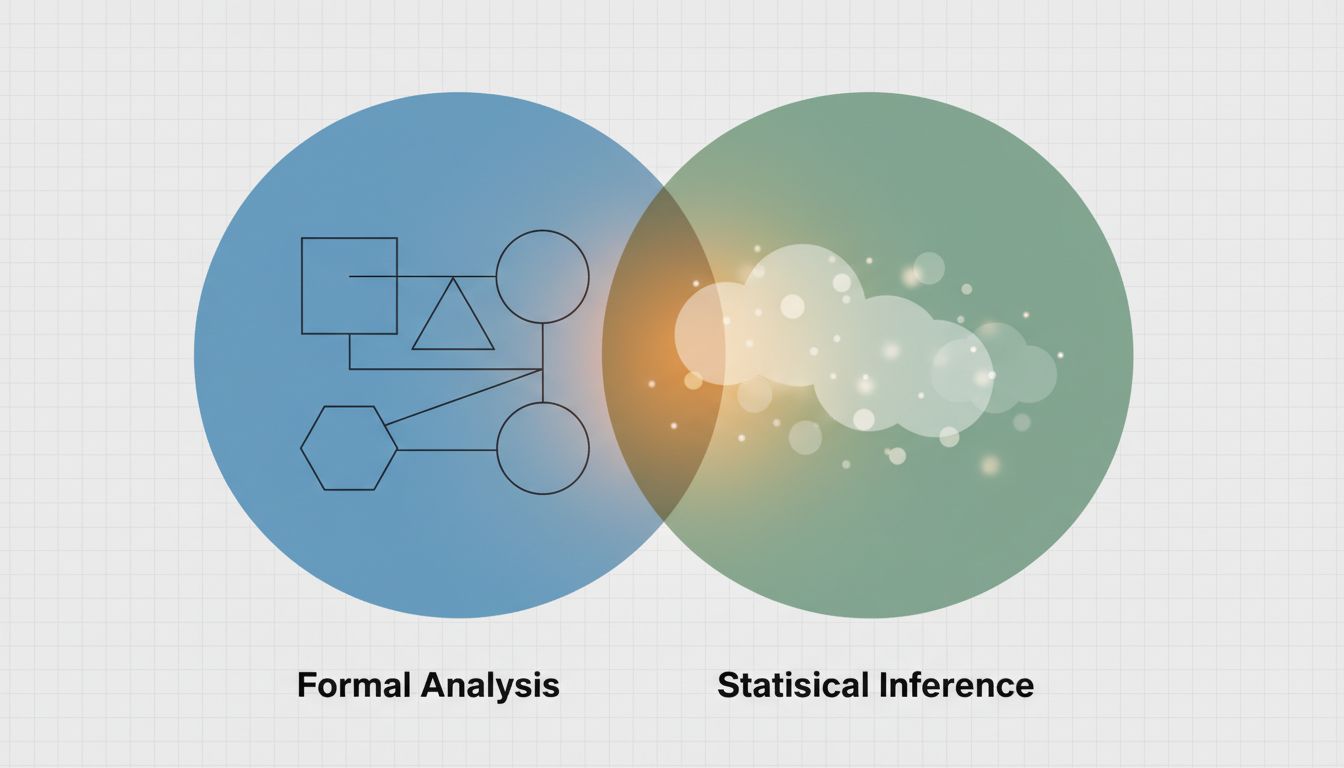

LLM-based code tools do something structurally similar when they identify unused variables or flag unreachable branches. The difference is method (statistical pattern matching over training data versus deterministic graph traversal) and certainty (a compiler’s analysis is deterministic and, compiler bugs aside, sound with respect to the language’s defined semantics; an LLM’s is probabilistic). But the goal is identical, and the compiler version has been shipping in production since the 1960s.

When you see a marketing claim that an AI tool “understands your codebase,” ask yourself: understands it how? A compiler understands your code completely within its semantic model. An LLM understands it approximately within a statistical model. Both are genuinely useful. Neither is magic.

Loop Transformations and the Optimization Mindset

If dead code elimination shows you compilers reasoning about logic, loop transformations show you compilers reasoning about performance at a level most developers never think about.

Loop unrolling takes a loop that runs four times and rewrites it as four sequential operations. This eliminates the overhead of conditional branch checking on each iteration. Loop fusion merges two adjacent loops into one to improve cache locality. Loop vectorization rewrites scalar operations to use SIMD instructions, so a single CPU instruction processes four or eight values simultaneously. GCC and LLVM do all of this automatically, based on analysis of your code and the target hardware’s characteristics.
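The shape of two of these rewrites can be shown at source level. The sketch below is written in Python only to illustrate the before and after forms; compilers apply these transformations to their intermediate representation, and in Python itself the rewritten versions are not necessarily faster. All function names are invented for the example.

```python
def sum_rolled(values):
    """Original loop: one addition and one loop check per element."""
    total = 0
    for v in values:
        total += v
    return total


def sum_unrolled_by_4(values):
    """Unrolled form: four additions per loop check, plus a remainder loop."""
    total = 0
    n = len(values)
    main_end = n - n % 4  # largest multiple of 4 that fits
    for i in range(0, main_end, 4):
        total += values[i] + values[i + 1] + values[i + 2] + values[i + 3]
    for i in range(main_end, n):  # leftover 0 to 3 elements
        total += values[i]
    return total


def scale_then_offset_separate(values, k, c):
    """Two adjacent loops: the data is traversed twice."""
    scaled = [v * k for v in values]
    return [s + c for s in scaled]


def scale_then_offset_fused(values, k, c):
    """Fused form: one traversal, no intermediate list."""
    return [v * k + c for v in values]


data = list(range(10))
print(sum_rolled(data), sum_unrolled_by_4(data))  # 45 45
```

The important property is that each rewritten function returns the same result as the original for every input. A compiler only applies the transformation when its analysis shows that equivalence holds.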

This is hardware-aware optimization based on modeling the execution environment. When AI tools like GitHub Copilot suggest that you restructure a loop for better performance, the insight is similar. The execution is different: the compiler uses formal analysis and hardware specifications, while Copilot uses patterns learned from code where humans made similar optimizations. But again, the categories overlap more than the marketing suggests.

The practical implication for you: compiler flags are an underused tool. Compiling your code with -O2 versus -O3 in GCC or Clang can produce measurably different performance without changing a line of your source. Understanding what those flags actually do (they control which optimization passes run) makes you a better judge of what AI tools can realistically offer on top of that foundation.

Type Inference and What “Understanding” Really Means

Haskell’s type inference builds on the Hindley-Milner type system, which traces back to Hindley’s work in 1969 and Milner’s inference algorithm in 1978. It can determine the types of most expressions in a program without explicit annotations. You write a function, and the compiler figures out that it works on any type that supports equality comparison, or specifically on integers, or returns a list of whatever type its input is. It infers the most general type that’s still correct.
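The engine underneath this style of inference is unification: give every unknown a type variable, collect the constraints the code implies, and solve them. The sketch below is a deliberately reduced illustration in Python, not Haskell's actual algorithm. It covers only integer literals, variables, lambdas, and application, and it omits let-generalization and type classes. The tuple encodings and the names `unify`, `infer`, and `type_of` are assumptions made for this example.

```python
from itertools import count

# Types: ("int",), ("var", n), or ("fn", argument_type, result_type).
INT = ("int",)


def resolve(t, subst):
    """Apply the substitution to a type until no bound variables remain."""
    while t[0] == "var" and t in subst:
        t = subst[t]
    if t[0] == "fn":
        return ("fn", resolve(t[1], subst), resolve(t[2], subst))
    return t


def occurs(var, t):
    """True if type variable `var` appears inside the resolved type `t`."""
    return t == var or (t[0] == "fn" and (occurs(var, t[1]) or occurs(var, t[2])))


def unify(a, b, subst):
    """Record in `subst` whatever bindings make types a and b equal."""
    a, b = resolve(a, subst), resolve(b, subst)
    if a == b:
        return
    if a[0] == "var":
        if occurs(a, b):
            raise TypeError("infinite type")
        subst[a] = b
    elif b[0] == "var":
        unify(b, a, subst)
    elif a[0] == "fn" and b[0] == "fn":
        unify(a[1], b[1], subst)
        unify(a[2], b[2], subst)
    else:
        raise TypeError(f"cannot unify {a} with {b}")


def infer(expr, env, subst, fresh):
    """Expressions: ("lit", n), ("name", x), ("lam", x, body), ("app", f, arg)."""
    kind = expr[0]
    if kind == "lit":
        return INT
    if kind == "name":
        return env[expr[1]]
    if kind == "lam":
        param = ("var", next(fresh))  # unknown parameter type
        body = infer(expr[2], {**env, expr[1]: param}, subst, fresh)
        return ("fn", param, body)
    if kind == "app":
        fn_type = infer(expr[1], env, subst, fresh)
        arg_type = infer(expr[2], env, subst, fresh)
        result = ("var", next(fresh))  # unknown result type
        unify(fn_type, ("fn", arg_type, result), subst)
        return result
    raise ValueError(f"unknown expression kind: {kind}")


def type_of(expr):
    """Infer the type of a closed expression with no annotations."""
    subst = {}
    return resolve(infer(expr, {}, subst, count()), subst)


identity = ("lam", "x", ("name", "x"))
print(type_of(identity))                      # ('fn', ('var', 0), ('var', 0))
print(type_of(("app", identity, ("lit", 5))))  # ('int',)
```

The identity function comes out as a function from some type to that same type, with the variable left unconstrained, which is the "most general type" idea in miniature. Applying a literal as if it were a function, as in `("app", ("lit", 5), ("lit", 3))`, raises `TypeError` before anything runs.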

This is the compiler reasoning about what your code means, not just what it says. TypeScript’s type inference does the same thing in a less theoretically pure but more practical way. When TypeScript sees you assign const x = 5, it knows x is a number without being told. When it sees you pass x to a function that expects a string, it tells you that’s a problem before you run anything.

AI-powered code completion tools that “understand” types are mostly building on this same foundation. Copilot, for example, benefits from TypeScript’s type information being available in the context it receives. The AI layer adds the ability to work across incomplete or ambiguous contexts. But type inference, one of the most impressive things a tool can do with code, has been solved and shipped for decades.

This should recalibrate your expectations usefully. The bar for AI code tools isn’t “can it understand types” (solved). It’s “can it do useful things in contexts where formal analysis breaks down,” which is a narrower and more honest framing of where these tools add real value.

Where Compilers Stop and Where AI Picks Up

Being clear about overlap doesn’t mean dismissing what’s new. Compilers work within precise, formally defined semantics. They reason about code that is syntactically valid and semantically well-formed in a specific language. They cannot read intent. They don’t handle incomplete specifications. They work entirely within the box you give them.

This is exactly where LLM-based tools have traction. Ask a compiler to generate a function that “fetches user data and handles the edge cases” and it has nothing to work with. Ask Copilot that, and you get a plausible first draft. The trade-off is that the compiler’s output is designed to be exactly equivalent to your source within its semantic model, while Copilot’s is statistically likely to be useful. Those are genuinely different things and both matter.

The honest description of AI code tools is that they extend assistance into the ambiguous, intent-heavy, early-stage parts of development where formal methods cannot operate. They’re not replacing compiler optimization. They’re covering a different part of the map.

For developers deciding which AI tools to adopt, this framework is actionable. If a tool claims to optimize your loops or detect dead code, your compiler already does that better. If a tool helps you generate boilerplate from natural language descriptions, or explains unfamiliar code, or suggests approaches to under-specified problems, that’s where the actual value proposition lives. The built-in ephemerality of prompt engineering is worth thinking about in this context too: the most durable value from AI tools tends to come from where formal methods genuinely can’t go.

The Verification Problem Neither Has Solved

Here’s the area where both compilers and AI tools struggle, and where the next decade of interesting work will happen: verification under uncertainty.

Compiler optimizations are designed to be semantically equivalent to the original code (within the language specification), and a few verified compilers such as CompCert carry a machine-checked proof of that. What no compiler can prove is that your program does what you intended, because your intent isn’t part of the specification. AI tools can make reasonable guesses about your intent, but cannot prove their output is correct. Formal verification efforts like seL4 (a formally verified microkernel) can prove programs correct against a specification, but they require enormous manual effort to specify what “correct” means.

The interesting frontier is not “AI versus compilers” but whether AI can help bring the verification workload down to the point where formal methods become practical for more of the code that matters. There’s genuine research happening on using LLMs to generate proof hints for formal verification systems. That’s new, genuinely new, in a way that “AI detects dead code” is not.

What This Means

If you take nothing else from this: the techniques being attributed to AI in software tools are mostly decades-old ideas from compiler theory, static analysis, and formal methods, applied through a new mechanism (statistical learning over large corpora rather than formal analysis). That’s real and useful, but it’s incremental, not revolutionary.

For your day-to-day decisions, this means a few things. First, understand your compiler’s optimization flags and use them. You’re leaving performance on the table if you’re not. Second, when evaluating AI code tools, ask where they add value that your existing tools (compiler, linter, type checker) don’t already provide. Third, be skeptical of tools that claim to do something that formal analysis can already do deterministically. A probabilistic version of a solved problem is usually a worse version.

The genuinely new territory for AI in software is in the ambiguous, intent-heavy, early-stage work that formal methods were never designed to handle. That’s worth paying attention to. The rebranding of compiler theory as AI innovation is not.