Most software either works or it doesn’t. You write a function to add two numbers, deploy it, and if it returns the wrong answer, you have a bug with a clear location. Machine learning models don’t behave this way. They degrade silently, fail confidently, and cost you nothing obvious until they cost you everything.

Deploying a model to production is one of those processes that looks straightforward on a diagram and turns out to be genuinely hard in practice. The gap between “we have a model with 94% accuracy on our test set” and “this model is reliably useful in the real world” is where most ML projects quietly die.

The Gap Between Benchmark and Reality

Accuracy on a held-out test set is a useful signal during development. It becomes a liability when teams treat it as a deployment checklist. The test set was drawn from the same distribution as the training data, which means it tells you how the model performs on data that looks like data it has already seen. Production data doesn’t care about that.

Consider a fraud detection model trained on transactions from January through September. It hits 96% accuracy on the October holdout set. Then it gets deployed in November and starts missing fraud patterns that emerged during the holiday shopping surge, patterns that simply weren’t represented in the training data. The model isn’t broken. It’s doing exactly what it was trained to do. The problem is that the world it was trained on no longer matches the world it’s being asked to evaluate.

This is called distribution shift, and it’s not an edge case. It’s the default condition for any model operating over time. User behavior changes. Product features change. Upstream data pipelines change in ways no one documents. The model, frozen at training time, can’t adapt.

Latency Is a Product Decision Disguised as a Technical One

A model that’s accurate but slow is often just a slow product. The acceptable latency budget for a real-time recommendation on a product page is measured in tens of milliseconds. A transformer model that takes 800ms to return an answer might be technically deployed but practically unusable.

Teams underestimate how much of model serving is really infrastructure engineering. You’re not just running inference. You’re managing GPU allocation, batching requests to maximize throughput, caching outputs where possible, and deciding how to degrade gracefully when the model is unavailable. A model hosted on a single GPU instance with no fallback isn’t production infrastructure. It’s a demo with uptime risk.

The batching question alone is worth spending time on. Serving models one request at a time is inefficient. Batching ten requests together and running inference once improves throughput significantly, but it introduces latency for the first request in the batch, which is now waiting for nine others to arrive. Getting this tradeoff right requires understanding your actual traffic patterns, not the ones you assumed during design.

What Monitoring Looks Like When the Model Is the Bug

Standard application monitoring tracks things like error rates, response times, and uptime. These metrics are necessary for ML deployments but not sufficient. A model can return HTTP 200 on every request while producing outputs that are progressively more wrong. Your dashboards look green while your product quietly degrades.

Production ML monitoring needs to track the inputs, not just the outputs. If the distribution of incoming requests shifts, you want to know before users start complaining. This means logging feature distributions, tracking statistical properties of inputs over time, and building alerting around meaningful drift thresholds. It’s more work than dropping in a metrics library, and it’s the kind of work that rarely makes anyone’s task list until something breaks badly.

Output monitoring matters too, but it’s harder. You often don’t have ground truth labels in real time. A recommendation model can’t know immediately whether it made a good recommendation. You’re left monitoring proxies: click rates, conversion rates, downstream user behavior. These signals are noisy, delayed, and easy to misread. A model that recommends more expensive items might improve revenue short-term while damaging user trust long-term. The metric looks good; the product is getting worse.

Shadow Deployments and Canary Releases Actually Work

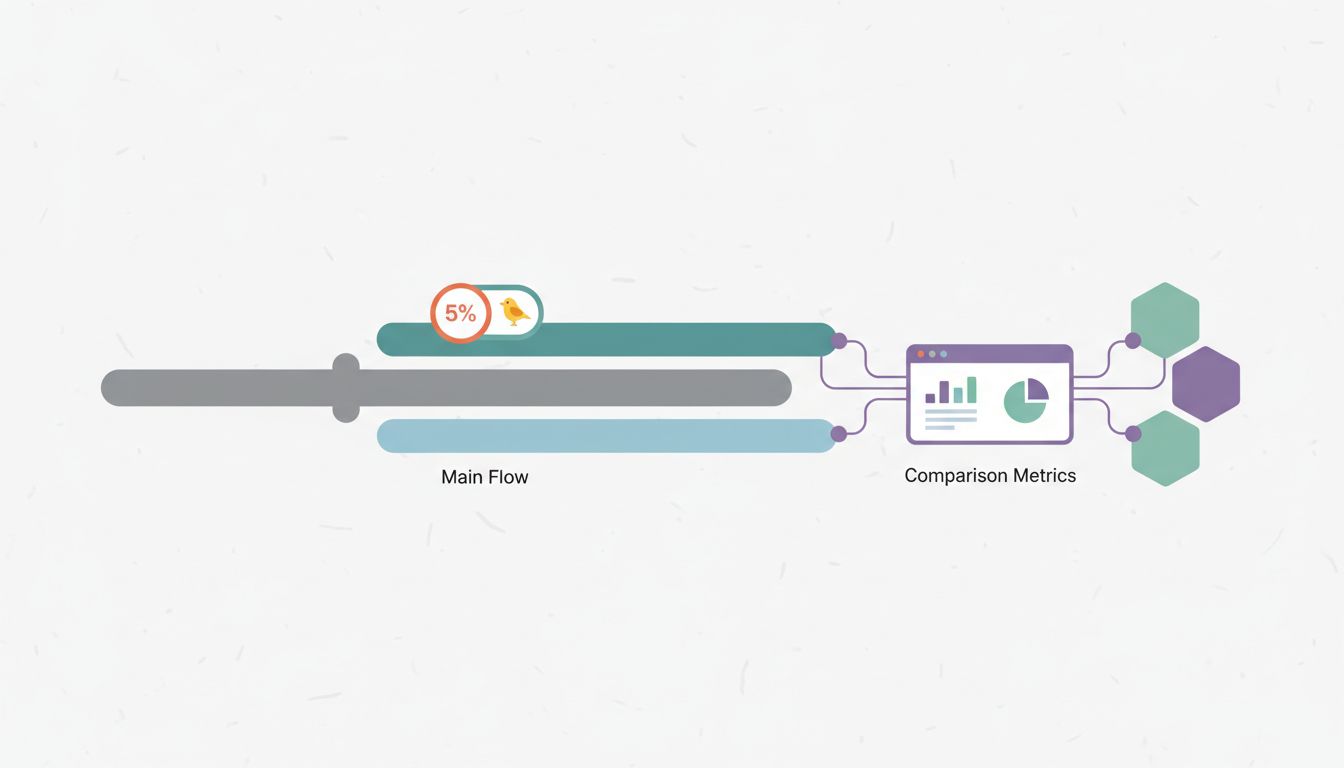

The most reliable way to know how a new model will behave in production is to run it in production without committing to it. Shadow deployments route live traffic to the new model in parallel with the existing one. The new model runs inference, logs its outputs, but doesn’t serve them to users. You get real production behavior without real production consequences.

Canary releases are the next step: send a small percentage of live traffic to the new model and compare outcomes. If the new model performs well on 5% of traffic, you have evidence for a broader rollout. If it degrades on a specific user segment or request type you didn’t anticipate, you find out before it affects everyone.

This isn’t novel advice, but it’s consistently under-applied. Teams spend months building and evaluating a model, then deploy it all at once on a Thursday afternoon because there’s pressure to ship. The failure mode here is predictable, and as bugs that only surface in production suggest, the cost of that approach usually shows up at the worst possible time.

The Ongoing Work After Deployment

Shipping a model is not a milestone. It’s a starting point for an ongoing operational commitment. Models need to be retrained as data drifts. Feature pipelines need to be maintained as upstream systems change. Monitoring thresholds need to be recalibrated as baselines shift.

Many teams staff for development and understaff for operation. The ML engineer who built the model moves on to the next project. The model runs untouched for eighteen months, slowly degrading in ways nobody is watching, until a business stakeholder asks why a particular metric has been trending down. The post-mortem eventually traces back to a data pipeline change from a year ago that nobody connected to the model.

The organizational implication is that model deployment requires owning the model, not just shipping it. That means dedicated monitoring, scheduled retraining cadences, and clear accountability for production behavior. Teams that treat deployment as a handoff from research to infrastructure tend to find out, expensively, that the handoff doesn’t actually work.