Most engineers who work with embeddings carry a quiet misconception: that when two vectors are close together in space, it means the underlying concepts are somehow alike. That’s a seductive framing, but it’s wrong in a precise and important way. Embeddings don’t capture meaning. They capture statistical co-occurrence patterns baked out of massive text corpora. The difference between those two things should change how you build systems that rely on them.

What an Embedding Actually Encodes

An embedding is a list of numbers, typically hundreds to thousands of dimensions, produced by a model trained to predict context. The canonical example is Word2Vec, which was trained on the principle that words appearing in similar contexts should land near each other in vector space. The famous demo: king - man + woman ≈ queen. People interpreted this as evidence that the model had learned concepts. What it actually learned was that those words appear in structurally similar grammatical and topical contexts.

This is not a minor distinction. The word “nurse” historically embedded closer to “woman” than “doctor” did, not because of anything inherent to nursing, but because the training data reflected decades of gendered language patterns. The model learned the world as it was written, not the world as it is. That’s the system working exactly as designed. The problem arises when we treat the output as a neutral measure of semantic truth.

Modern sentence and document embeddings from models like OpenAI’s text-embedding-ada-002 or the open-source Sentence-BERT family are more sophisticated, but they carry the same fundamental character. They encode patterns of linguistic habit at massive scale.

Why Cosine Similarity Is Weirder Than It Looks

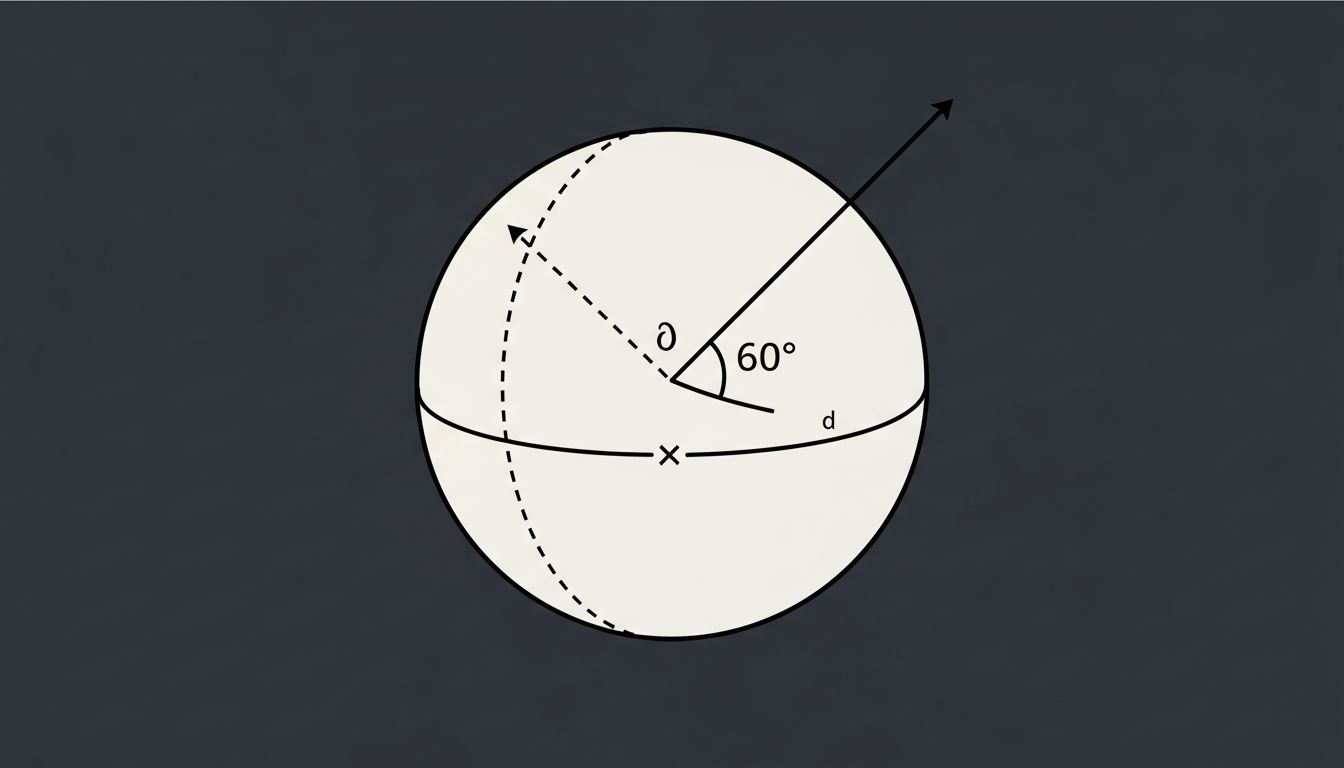

The standard tool for comparing embeddings is cosine similarity, which measures the angle between two vectors rather than their distance. A score of 1.0 means the vectors point in exactly the same direction. A score near 0 means they’re orthogonal, essentially unrelated.

Here’s where it gets strange. In high-dimensional spaces, most random vectors are nearly orthogonal to each other. This is a well-documented property of high-dimensional geometry, sometimes called the “curse of dimensionality.” When you’re working in 1,536 dimensions (the size of Ada’s output), the cosine similarity scores for most pairs of things cluster in a surprisingly narrow band. The spread between “somewhat related” and “closely related” can be just a few hundredths of a point.

This means that small differences in similarity score carry enormous practical weight, but the absolute values of those scores are nearly meaningless without calibration. A score of 0.82 versus 0.79 might be the difference between a retrieved document being relevant or garbage, but nothing about those numbers tells you that intuitively. Engineers often set thresholds by feel or trial and error, which is a reasonable approach, but it exposes how much empirical guesswork underlies systems that get described as “finding semantically similar content.”

The Nearest Neighbor Problem Is a Proxy Problem

Retrieval-augmented generation pipelines, semantic search systems, and recommendation engines all depend on finding the nearest neighbors to a query vector. The bet is that geometric proximity in embedding space corresponds to usefulness for the task at hand.

Sometimes this works beautifully. Sometimes it produces results that are superficially plausible but substantively wrong, and the failure mode is invisible without careful evaluation. An embedding model trained on general web text might surface documents that sound like they’re about your query topic while missing the actually relevant technical document because it was written in more specialized language that the model mapped to a different region of the space.

This is why what actually happens when you deploy a model to production often looks different from what the benchmarks suggested. Benchmark evaluations use curated datasets where the nearest neighbors are well-defined. Production queries are messier, and the mismatch between the embedding space’s notion of similarity and the user’s notion of relevance is where most RAG systems quietly degrade.

The Counterargument

The reasonable pushback here is that this is all just implementation detail. Embeddings work well enough in practice, and the theoretical critique doesn’t translate into meaningful production failures for most use cases. Semantic search is genuinely better than keyword search for a wide class of queries. Vector databases have enabled real applications that didn’t exist before.

All true. But “works well enough” is a different claim than “measures meaning,” and conflating the two leads to real mistakes. Teams build embedding-based systems for tasks where the underlying assumptions don’t hold, they trust similarity scores without calibrating them to their specific domain, and they debug failures by tweaking prompts instead of examining whether the embedding space actually represents what they need it to represent. The practical value of the technology is not in dispute. The conceptual framing around it is.

Precision in What We Claim

Embeddings are extraordinarily useful engineering tools. Vector search has made a category of problems tractable that were previously intractable. But they are tools that encode statistical habit, not semantic truth, and the systems built on them are only as reliable as the match between that statistical structure and the task being solved.

The engineers who build the most robust systems are the ones who treat embedding similarity as a heuristic to be validated rather than a ground truth to be trusted. They evaluate their retrieval pipelines against real-world queries in their domain. They notice when the model’s notion of “close” diverges from their users’ notion of “relevant.” They don’t mistake a high cosine score for a guarantee of meaning.

That’s not skepticism about the technology. It’s just accuracy about what the technology is.