The Simple Version

A language model decides what each word in your prompt means by measuring how much every other word influences it. Once you understand that, most prompt engineering advice stops being folklore and starts being obvious.

Why Prompt Advice Feels Like Superstition

Ask around and you’ll collect a grab bag of prompt tips: start with “You are an expert,” add “think step by step,” put the important instruction at the end, use XML tags, write in all caps for emphasis. Some of this works. Almost none of the people dispensing it can tell you why.

That’s cargo-culting. You’ve observed that planes land when the guys in the tower wave the paddles, so you build a tower and wave paddles. The ritual looks right. The planes don’t come.

The frustrating part is that the techniques aren’t wrong, exactly. “Let’s think step by step” genuinely improves performance on multi-step reasoning tasks. Kojima et al. documented that exact phrasing in a 2022 paper on zero-shot reasoning, building on the chain-of-thought prompting work that Wei et al. at Google Brain published the same year. But if you don’t understand why it works, you’ll apply it everywhere, including places where it actively degrades output, and you’ll have no way to diagnose what went wrong.

What Attention Actually Does

Transformer models, which underlie every major language model you’ve used, process text through a mechanism called self-attention. The 2017 paper “Attention Is All You Need” introduced the architecture that most modern LLMs are built on.

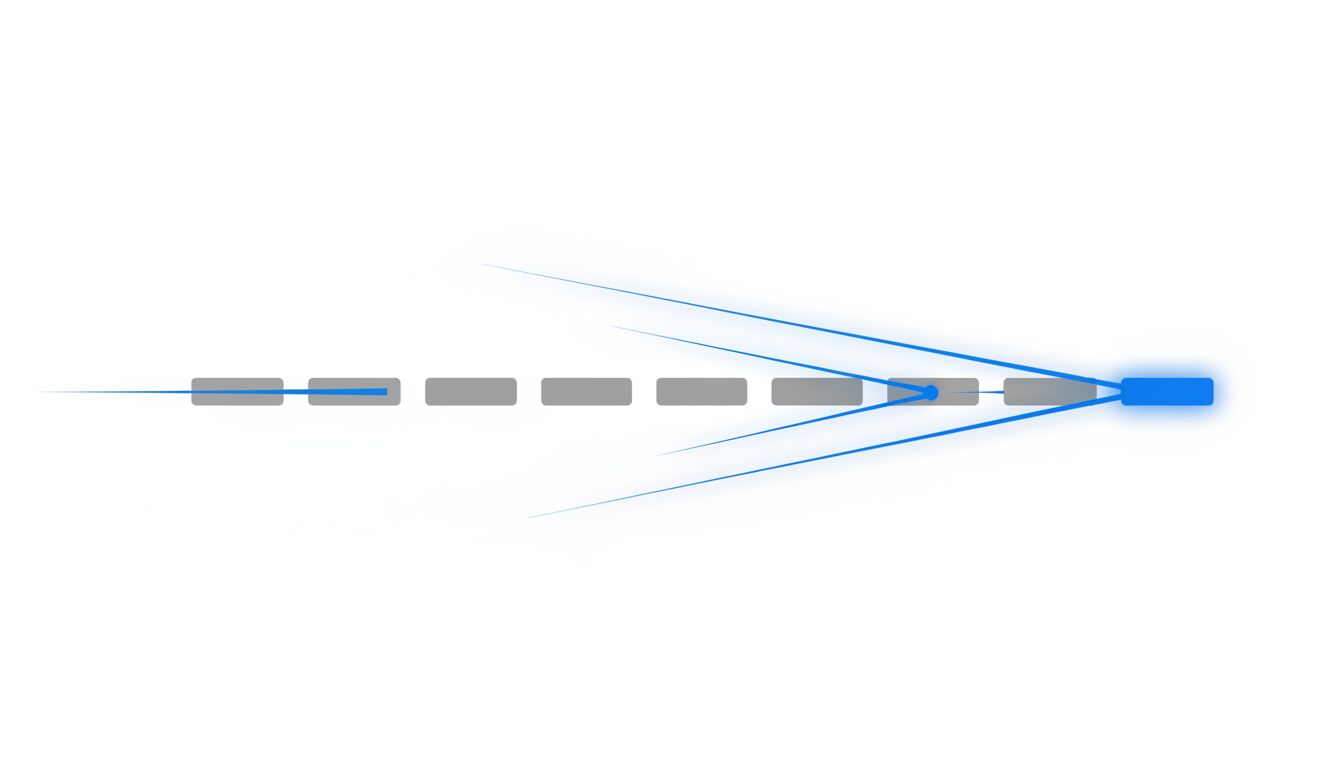

Here’s the intuition. When a model reads your prompt, it doesn’t process words in isolation. For every token (roughly, every word or word-fragment), the model computes a score representing how much that token should “attend to” every other token in the context. These scores determine the representation each word gets before the model predicts the next token.

Consider the sentence: “The trophy didn’t fit in the suitcase because it was too big.” What does “it” refer to? The trophy. In attention terms, “it” attends strongly to “trophy” given everything else in the sentence, including “big.” The model does this dynamically, across every token pair in your input.
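To make the scoring step concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. The three-dimensional vectors are invented for illustration, and each vector stands in as its own query, key, and value. Real models learn separate projections for those roles and run many attention heads in parallel, which this sketch leaves out.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Toy self-attention: each vector acts as its own query, key, and value."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Score this token against every token in the context, itself included.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in vectors]
        weights = softmax(scores)
        # The new representation is a weighted mix of all token vectors.
        mixed = [sum(w * vec[i] for w, vec in zip(weights, vectors))
                 for i in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Made-up embeddings, chosen so "it" points roughly the same way as "trophy".
tokens = ["trophy", "suitcase", "it"]
embeddings = [[2.0, 0.0, 0.0], [0.0, 2.0, 0.0], [1.5, 0.2, 0.0]]

_, weights = self_attention(embeddings)
it_weights = dict(zip(tokens, weights[2]))  # how much "it" attends to each token
```

With these hand-picked numbers, “it” puts more weight on “trophy” than on “suitcase.” In a trained model that preference comes from learned parameters, not from vectors someone chose by hand.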

This is also why context length is expensive. Every additional token has to be scored against every other token, so the cost of naive attention grows quadratically with sequence length (and a lot of engineering effort goes into computing or approximating attention more cheaply).
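The quadratic growth is easy to see with a little arithmetic. This sketch only counts query-key scores for a single attention head in a single layer. The function name is made up for illustration, and the causal masking used in decoder-only models roughly halves the count without changing the growth rate.

```python
def attention_pairs(num_tokens):
    """Number of (query, key) scores in naive full self-attention."""
    return num_tokens * num_tokens

# Doubling the context length quadruples the number of scores.
for n in (1_000, 2_000, 4_000):
    print(f"{n} tokens -> {attention_pairs(n)} scores")
```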

What This Tells You About Your Prompts

Several things that prompt engineers treat as magic tricks fall out naturally from how attention works.

Recency and primacy effects are real but context-dependent. The model doesn’t just read your prompt once and summarize it. Every token attends to every other token, but positional encodings and training dynamics mean that tokens at the beginning and end of a long prompt tend to have stronger influence on the output than tokens buried in the middle. This is sometimes called the “lost in the middle” problem, after a 2023 paper by Liu et al., a team that included researchers at Stanford and UC Berkeley. Putting your key instruction at the start or end of a long prompt isn’t superstition; it’s working with a real asymmetry.
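One way to act on that asymmetry is to bracket long context with the instruction. The helper below is a hypothetical sketch, not a standard API, and the “Reminder:” wording is an arbitrary choice.

```python
def build_long_prompt(key_instruction, documents):
    """Place the key instruction both before and after the bulk context."""
    body = "\n\n".join(documents)
    return f"{key_instruction}\n\n{body}\n\nReminder: {key_instruction}"

instruction = "Answer using only the documents provided."
prompt = build_long_prompt(instruction, ["Document one text.", "Document two text."])
```

Whether the closing reminder helps depends on the model and on the length of the context, so treat it as something to test, not a guarantee.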

Repetition is a form of emphasis. If the model computes attention over all tokens and you’ve stated a constraint three times, three groups of tokens are all pulling toward that constraint. Saying “respond only in JSON, no prose, only JSON” isn’t redundant; it’s loading more weight onto the signal. This also explains why contradictions in a prompt are so destructive. When you tell a model to “be concise” in the system prompt and then provide a verbose example in the few-shot section, you’ve created opposing attractors and the output becomes unpredictable.
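A deliberately crude way to picture this: assume attention were spread evenly over every token in the context. Real attention is never uniform, so the numbers below are only counting arithmetic, and the function name is invented for this sketch.

```python
def constraint_share(constraint_tokens, other_tokens, repeats=1):
    """Fraction of evenly spread attention that lands on the constraint's tokens."""
    on_constraint = constraint_tokens * repeats
    return on_constraint / (on_constraint + other_tokens)

# A 5-token constraint inside a prompt with 495 other tokens.
stated_once = constraint_share(5, 495, repeats=1)
stated_three_times = constraint_share(5, 495, repeats=3)
```

Under that assumption, stating the constraint three times roughly triples its share of the context. The same arithmetic shows that adding unrelated tokens shrinks it.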

Format signals are semantic signals. Using XML-style tags like <instructions> or markdown headers isn’t just organizational tidiness. Those characters appear in training data with consistent meaning; tag-like tokens such as <instructions> co-occur, across enormous amounts of training text, with content that follows a certain structure. When you use them, you’re not just adding labels for your own reading clarity; you’re activating patterns the model has seen before. The formatting is part of the meaning.
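In practice this can be as simple as wrapping each part of the prompt in a named tag. The helpers and tag names below are illustrative assumptions; no model requires these particular names.

```python
def tag(name, content):
    """Wrap content in an XML-style open/close tag pair."""
    return f"<{name}>\n{content.strip()}\n</{name}>"

def build_tagged_prompt(instructions, context, question):
    """Assemble a prompt whose sections are explicitly delimited."""
    sections = [
        tag("instructions", instructions),
        tag("context", context),
        tag("question", question),
    ]
    return "\n\n".join(sections)

tagged_prompt = build_tagged_prompt(
    "Answer using only the context.",
    "Paris is the capital of France.",
    "What is the capital of France?",
)
```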

Chain-of-thought works because reasoning is generative. When you prompt a model to show its work, you’re asking it to generate intermediate tokens that then become context for the final answer. Each reasoning step attends to the previous ones. You’re essentially making the model build a scaffold and then stand on it, rather than jumping directly to a conclusion. This is why it helps for problems with multiple interdependent steps and does nothing (or hurts) for simple factual retrieval.
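The scaffold idea is visible in the shape of the generation loop itself. In this sketch, `scripted_model` is a hypothetical stand-in that replays fixed reasoning steps keyed on the most recent token; a real model conditions on the entire context. The loop is the part that matters: every generated token is appended to the context before the next one is produced.

```python
def generate(prompt_tokens, next_token, max_new_tokens=20, stop="<end>"):
    """Autoregressive loop: each new token becomes context for the next."""
    context = list(prompt_tokens)
    for _ in range(max_new_tokens):
        token = next_token(context)  # sees the prompt plus everything generated so far
        if token == stop:
            break
        context.append(token)
    return context[len(prompt_tokens):]

# Hypothetical scripted "model": each step depends on the step before it.
STEPS = {
    "Q: 17*3+4?": "17*3=51",
    "17*3=51": "51+4=55",
    "51+4=55": "answer: 55",
    "answer: 55": "<end>",
}

def scripted_model(context):
    return STEPS[context[-1]]

reasoning = generate(["Q: 17*3+4?"], scripted_model)
```

The final answer is produced only after the intermediate steps are already sitting in the context, which is the whole point of asking for them.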

As a complement to this, “What an LLM Does With Your Prompt Before Responding” covers the tokenization and preprocessing stage that happens before attention even begins, which is another layer people frequently misunderstand.

Where This Changes Your Practice

Understanding attention gives you a debugging frame, not just a recipe list.

When a model ignores part of your instruction, the first question becomes: is the relevant signal getting diluted by competing context? A long, meandering system prompt with the key constraint buried in paragraph four is not the same as a short, focused one with that constraint at the top. The tokens are there, but their influence is weaker.

When a model drifts in long conversations, you’re watching attention cope with a context window that’s filling up. The early instructions are still technically present, but hundreds or thousands of newer tokens are competing for influence. Re-stating key constraints mid-conversation isn’t nagging; it’s refreshing the signal.
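Refreshing the signal can be automated. The sketch below assumes chat history is stored as a list of role/content dictionaries, a common convention but not a universal one. The function name and the every-fifth-turn default are arbitrary choices for illustration.

```python
def add_user_turn(history, user_text, constraint, remind_every=5):
    """Append a user turn, re-stating the constraint on every Nth user turn."""
    user_turns = sum(1 for m in history if m["role"] == "user") + 1
    if user_turns % remind_every == 0:
        user_text = f"{user_text}\n\n(Reminder: {constraint})"
    return history + [{"role": "user", "content": user_text}]

history = []
for turn in range(1, 6):
    history = add_user_turn(history, f"question {turn}", "respond only in JSON")
```

Here only the fifth user message carries the reminder. The right interval depends on how quickly a given model drifts, which you can only find out by watching its output.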

When few-shot examples produce inconsistent results, check whether the examples you’ve provided are actually consistent with each other. Conflicting examples don’t average out into some useful middle ground; they generate noise in the attention patterns the model uses to predict your intended format.
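A consistency check like this is cheap to script. The sketch below assumes each example is a dictionary with an `output` string that should be a JSON object, and it flags any example whose output fails to parse or uses different keys from the first example. The function name and data shape are assumptions made for illustration.

```python
import json

def inconsistent_examples(examples):
    """Return indexes of examples whose output shape differs from the first one."""
    def key_set(output):
        try:
            parsed = json.loads(output)
        except json.JSONDecodeError:
            return None  # not valid JSON at all
        return frozenset(parsed) if isinstance(parsed, dict) else None

    if not examples:
        return []
    shapes = [key_set(example["output"]) for example in examples]
    reference = shapes[0]
    return [i for i, shape in enumerate(shapes)
            if shape is None or shape != reference]

examples = [
    {"input": "great film", "output": '{"label": "positive"}'},
    {"input": "dull plot", "output": '{"label": "negative"}'},
    {"input": "fine, I guess", "output": "Neutral, leaning positive."},
    {"input": "loved it", "output": '{"sentiment": "positive"}'},
]
flagged = inconsistent_examples(examples)
```

Here the third example is flagged because it is prose, not JSON, and the fourth because it uses a different key. Both are the kind of mismatch that is easy to miss when skimming examples by eye.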

None of this requires you to read papers or implement anything. It requires you to have a mental model of what the model is actually doing, so that when something breaks, you’re reasoning about causes rather than shuffling incantations.

The people who are genuinely good at working with language models aren’t the ones who’ve memorized the most prompt templates. They’re the ones who can look at a bad output and say, with some confidence, why the model went wrong. Attention is the mechanism that makes that diagnosis possible.