The oldest, ugliest code in your organization is probably also the most important code. Not despite its age, but because of it. Software that never gets rewritten has often survived because everything else depends on it, and everyone who tried to replace it eventually discovered that the blast radius was larger than they thought.

This is not a celebration of technical debt. It’s an observation about how criticality and legibility tend to drift apart over time, and why that gap matters more now that AI-assisted development is accelerating the pace at which new systems get built on top of old ones.

Rewrite Attempts Reveal Hidden Dependencies

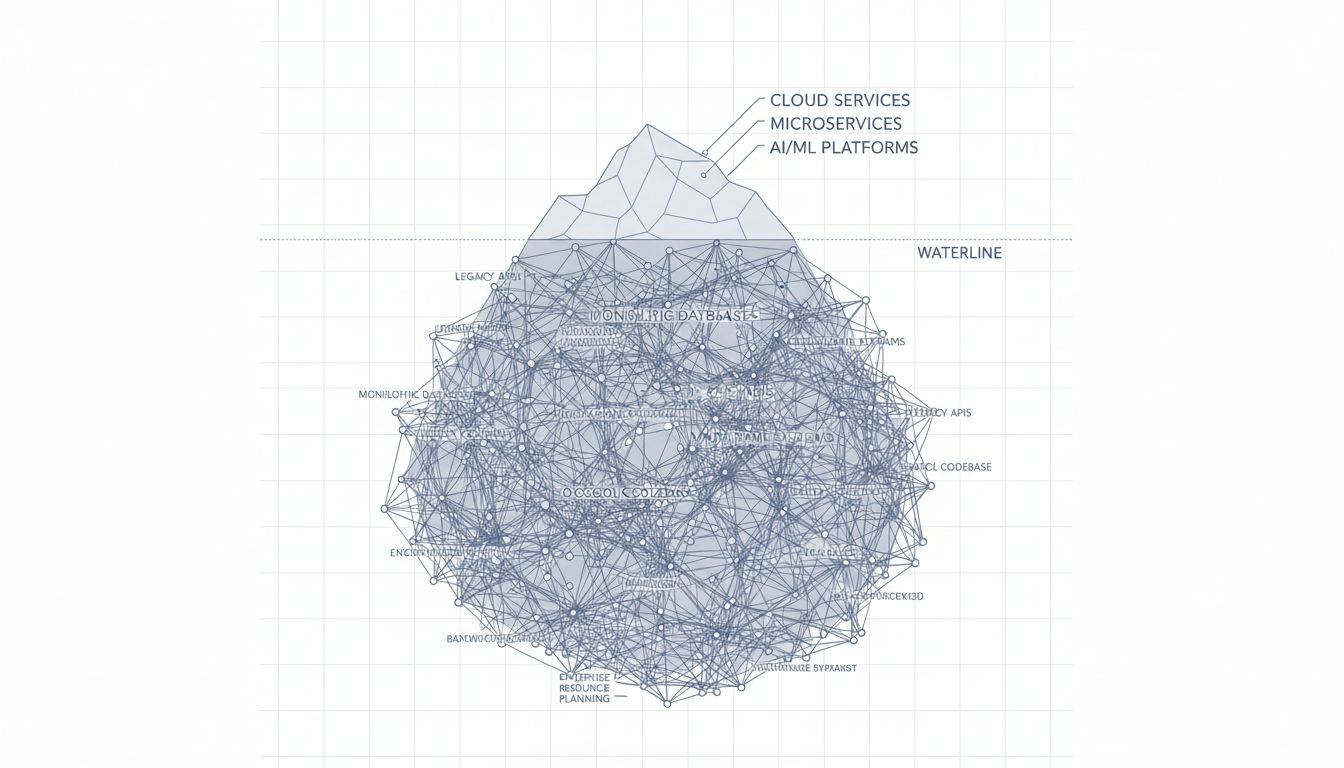

Every senior engineer has a story about the rewrite that got cancelled. The pattern is almost always the same: a team identifies a piece of legacy code that looks straightforward to replace, scopes the project based on what they can see, and then starts discovering the iceberg below the waterline.

This happens because old code accumulates implicit contracts. A payroll system written in the 1990s doesn’t just calculate pay. Over two decades, someone added logic to handle a union agreement that expired in 2003 but whose edge cases still affect certain pension calculations. Someone else hard-coded a rounding behavior to match a specific version of a government form. None of this is documented because the people who knew about it retired, and the code itself became the documentation.

When you try to rewrite the system, you discover these contracts not from reading the code, but from breaking them in production. The rewrite that was scoped for six months takes three years, and sometimes it just stops.
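One way to surface some of these contracts before production does is a characterization test: run the old and new code on the same inputs and flag every disagreement. The sketch below is illustrative only. `legacy_net_pay`, `rewritten_net_pay`, and the rounding rules are hypothetical stand-ins, not taken from any real payroll system.

```python
from decimal import Decimal, ROUND_HALF_EVEN, ROUND_HALF_UP

CENT = Decimal("0.01")


def legacy_net_pay(gross: Decimal, rate: Decimal) -> Decimal:
    """Hypothetical legacy routine: rounds half up, as an old form required."""
    return (gross * (1 - rate)).quantize(CENT, rounding=ROUND_HALF_UP)


def rewritten_net_pay(gross: Decimal, rate: Decimal) -> Decimal:
    """Hypothetical rewrite: switches to banker's rounding as a 'cleanup'."""
    return (gross * (1 - rate)).quantize(CENT, rounding=ROUND_HALF_EVEN)


def find_contract_breaks(old, new, cases):
    """Return (inputs, old_result, new_result) for every case that differs."""
    breaks = []
    for args in cases:
        old_result, new_result = old(*args), new(*args)
        if old_result != new_result:
            breaks.append((args, old_result, new_result))
    return breaks


# Hypothetical inputs; in practice these would come from recorded production data.
cases = [
    (Decimal("1000.00"), Decimal("0.20")),
    (Decimal("100.25"), Decimal("0.10")),
    (Decimal("100.15"), Decimal("0.10")),
]

breaks = find_contract_breaks(legacy_net_pay, rewritten_net_pay, cases)
for args, old_result, new_result in breaks:
    print(f"{args}: legacy={old_result} rewrite={new_result}")
```

With these inputs only the 100.25 case differs (90.23 from the legacy routine, 90.22 from the rewrite). A one-cent gap like that is exactly the kind of undocumented behavior that only shows up when old and new outputs are compared directly.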

Longevity Is a Proxy for Load-Bearing Structure

There’s a selection effect at work. Software gets rewritten when the cost of rewriting is lower than the cost of maintaining it, and when stakeholders believe the new version will be meaningfully better. That calculation rarely favors critical infrastructure.

Consider the COBOL systems still running inside major financial institutions and government agencies. The reason they haven’t been replaced isn’t purely inertia or budget constraints. It’s that they handle transaction volumes and edge-case correctness requirements that newer systems have repeatedly failed to match during migration attempts. The U.S. Social Security Administration runs COBOL. So does a substantial portion of global ATM and banking infrastructure. These systems process trillions of dollars annually, and many serious replacement attempts have either stalled or been quietly scaled back.

The longevity isn’t a bug. It’s evidence that the software is doing something real that proved hard to replicate.

The Abstraction Gap Grows Over Time

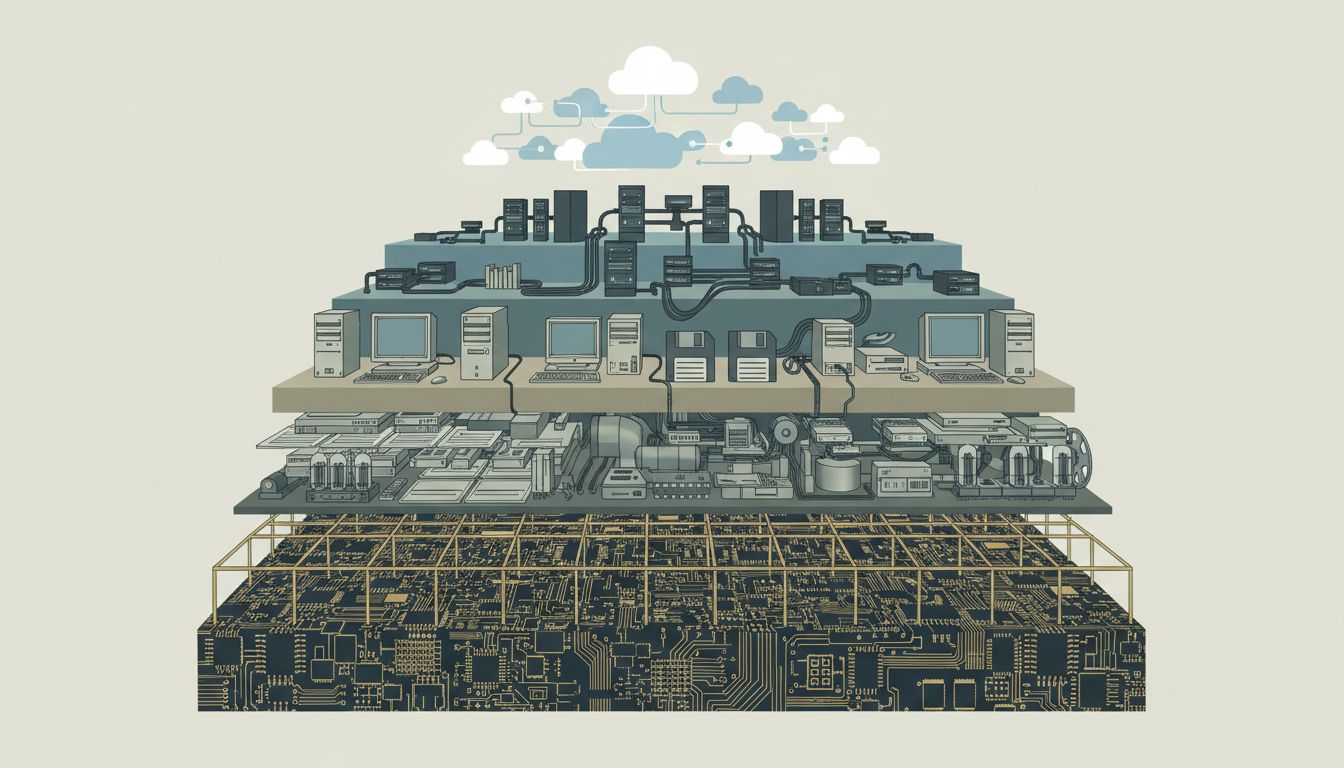

New software tends to be built at higher levels of abstraction than the systems underneath it. This is generally good: it lets teams move faster and reason about smaller surfaces. But it also means that the people writing the new code often have no mental model of what’s happening several layers down.

A React frontend developer building a dashboard has no particular reason to understand the stored procedures generating the data beneath three API layers. That’s fine until the stored procedures need to change, at which point nobody in the current organization can confidently say what depends on them or how. As the article on deleting a database column illustrates, even a seemingly small structural change in a data layer can cascade in ways that surprise teams who thought they had good visibility into their own system.
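The question “what depends on this?” can at least be approximated wherever dependencies are written down. Here is a minimal sketch, assuming a hand-maintained map from each component to the things it calls directly. Every component name is hypothetical, and a real map would be incomplete in precisely the ways this article describes.

```python
from collections import deque

# Hypothetical map: component -> components it calls directly.
DEPENDS_ON = {
    "dashboard_ui": ["reporting_api"],
    "reporting_api": ["aggregation_service"],
    "aggregation_service": ["sp_monthly_totals"],
    "finance_export": ["sp_monthly_totals"],
    "sp_monthly_totals": [],
}


def transitive_dependents(target, depends_on):
    """Return every component that reaches `target` directly or indirectly."""
    # Invert the map so we can walk from a callee up to its callers.
    callers_of = {}
    for caller, callees in depends_on.items():
        for callee in callees:
            callers_of.setdefault(callee, set()).add(caller)

    # Breadth-first walk upward; `seen` also guards against cycles.
    seen = set()
    queue = deque([target])
    while queue:
        node = queue.popleft()
        for caller in callers_of.get(node, ()):
            if caller not in seen:
                seen.add(caller)
                queue.append(caller)
    return seen


affected = transitive_dependents("sp_monthly_totals", DEPENDS_ON)
print(sorted(affected))
```

In this toy map, changing the stored procedure touches all four other components, including the dashboard three layers up. The traversal is the easy part. The hard part is that the map only contains the dependencies someone remembered to record.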

The older the code, the more likely it sits at the bottom of one of these abstraction stacks, invisible but structural.

The Counterargument

The obvious pushback is that survivorship bias is doing most of the work here. Not all legacy code is critical. A lot of it is just forgotten. Internal tooling, one-off scripts, deprecated integrations that nobody cleaned up: these stick around too, and they’re not load-bearing in any meaningful sense.

That’s fair. The claim isn’t that old code is important because it’s old. The claim is that code which has been repeatedly considered for replacement and survived those conversations tends to be critical. There’s a difference between code nobody has looked at in ten years and code that a team tried to replace in 2015, 2018, and 2022, and each time found they couldn’t. The latter is the interesting category.

You can usually tell the difference by asking: has anyone tried to get rid of this? If the answer is yes, and it’s still here, that’s signal.

Build Accordingly

The practical implication is straightforward. When you’re designing new systems that sit on top of existing infrastructure, treat that infrastructure with more respect than its appearance suggests it deserves. Don’t assume that because something is old it can be easily abstracted away or eventually replaced. And when you’re working with AI tools that generate code quickly and confidently, remember that they have no model of the institutional history encoded in your legacy systems. They can’t see the dead union contract in the payroll logic.

The software that never gets rewritten is usually telling you something. The honest interpretation is that it’s doing real work that proved harder to replicate than anyone expected. Build on top of it carefully, document the contracts it enforces, and be skeptical of anyone who says replacing it will be straightforward. They are almost certainly not accounting for the iceberg.