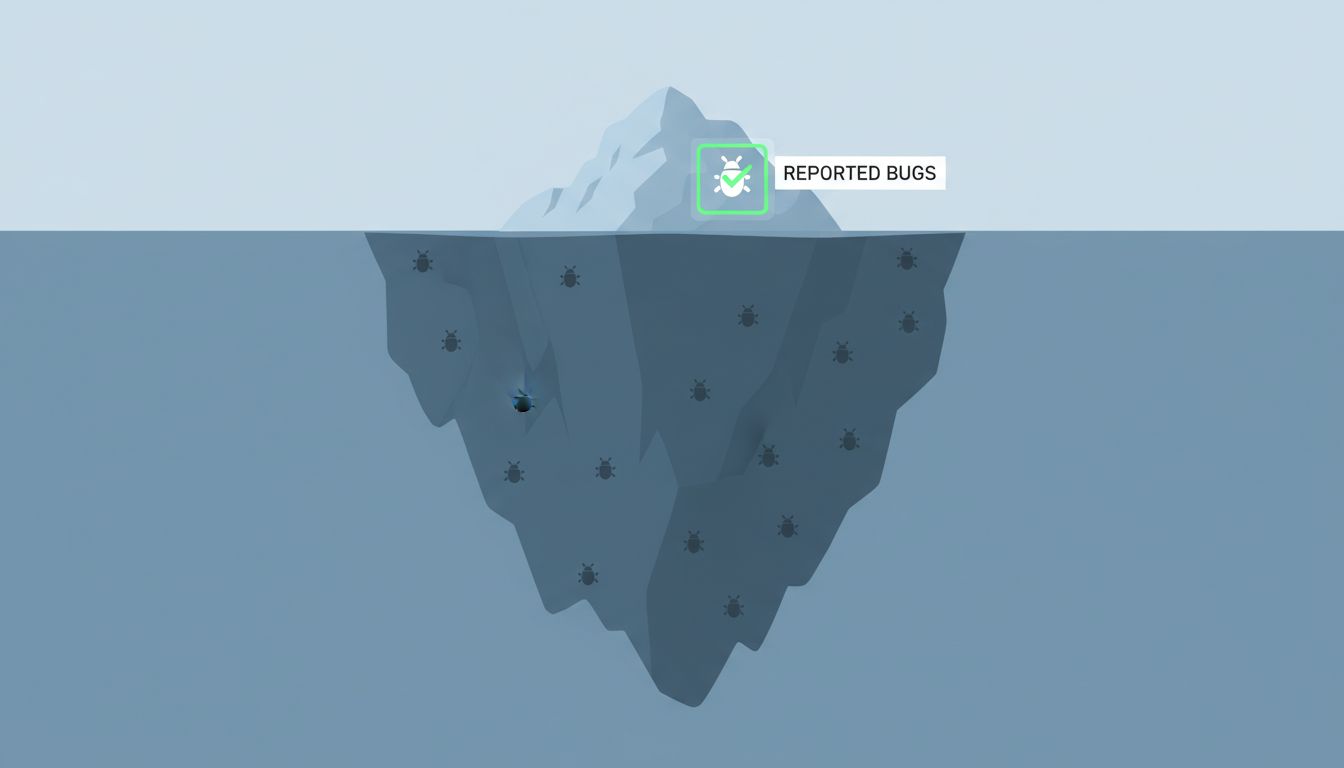

Your test suite is not your quality floor. Your users are. And most of them will never tell you.

This is the uncomfortable truth that engineering teams paper over with coverage metrics and CI green lights: the majority of bugs that actually affect users in production are never surfaced through formal channels. They’re absorbed quietly, worked around instinctively, or just accepted as the cost of using software. The ticket never gets filed. The Slack message never gets sent. The user just stops doing the thing that broke, and you never find out.

Why users don’t report bugs

Filing a bug report is work. It requires a user to believe a few things simultaneously: that the problem is real and reproducible, that someone will read the report, that something will actually change, and that spending ten minutes writing it up is worth their time. Most users don’t believe all four of those things, and honestly, their skepticism is often justified.

What users actually do when they hit friction is adapt. They refresh the page. They use a different browser. They skip the feature entirely and find another way to get what they need. They tell a colleague the tool is “a bit buggy” and move on. This behavior is invisible to your monitoring unless you’re explicitly looking for it. The traces that bugs leave when users don’t file tickets are indirect: abandonment rates on specific flows, feature usage drop-offs, and support queries that circle around a problem without naming it directly.

The research on this is consistent across industries: most customer dissatisfaction never reaches the company directly. This isn’t unique to software, but software has a particular version of the problem because the gap between “user who hit a bug” and “engineer who could fix it” is so wide, and so many institutional layers sit between the two.

Tests can only catch what you imagined

A test suite is a formalization of your assumptions. You write tests for the paths you thought of, the edge cases you anticipated, the regressions you already experienced. This is genuinely valuable work. But it’s structurally incapable of catching bugs that arise from combinations of behaviors you didn’t anticipate.

Users are creative in ways that test suites are not. They paste content copied from Excel with invisible Unicode characters. They use your mobile app on a three-year-old Android device on a 3G connection while the GPS is active. They navigate backwards through a multi-step form using the browser’s back button instead of your custom navigation. They leave a session open for six hours and come back to submit it. Your tests didn’t cover those paths because no one thought to write them. The users found the bugs anyway.
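To make the first of those concrete, here is a minimal Python sketch of what "invisible Unicode characters" looks like once you go hunting for it. The function names and the choice of which characters count as invisible are assumptions for illustration: it treats Unicode format characters (general category `Cf`, such as the zero-width space) plus a few no-break space variants as suspect. Real pasted content may need a broader or narrower rule.

```python
import unicodedata
from typing import List, Tuple

# Assumption: these no-break space variants are treated as "invisible" too,
# because they look like a normal space but do not compare equal to one.
ODD_SPACES = {"\u00a0", "\u2007", "\u202f"}


def find_invisible(text: str) -> List[Tuple[int, str]]:
    """Return (index, Unicode name) for each suspect character in text."""
    hits = []
    for i, ch in enumerate(text):
        # Category "Cf" covers format characters such as ZERO WIDTH SPACE.
        if unicodedata.category(ch) == "Cf" or ch in ODD_SPACES:
            hits.append((i, unicodedata.name(ch, f"U+{ord(ch):04X}")))
    return hits


def strip_invisible(text: str) -> str:
    """Drop format characters and turn odd spaces into plain spaces.

    Caveat: this also removes ZERO WIDTH JOINER, which some emoji and
    scripts rely on, so it is only suitable for fields like IDs or amounts.
    """
    cleaned = []
    for ch in text:
        if unicodedata.category(ch) == "Cf":
            continue
        cleaned.append(" " if ch in ODD_SPACES else ch)
    return "".join(cleaned)


pasted = "1\u200b234\u00a0USD"  # looks like "1234 USD" on screen
print(find_invisible(pasted))
print(strip_invisible(pasted))
```

A value like `pasted` renders normally in a spreadsheet cell and in most form fields, which is exactly why nobody thinks to write the test for it.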

This is related to the problem described in “Your Staging Environment Is Lying to You”: the controlled environment you use to validate software is fundamentally different from the chaotic environment in which it runs.

The signal you’re ignoring

Here’s what’s actionable about this. The bugs your users are silently absorbing are leaving traces, and those traces are findable if you look for them deliberately.

Session replay tools show you where users rage-click, where they hesitate, where they retry the same action multiple times. Error monitoring tools like Sentry capture exceptions that nobody reported. Analytics show you which funnels have unusual drop-off rates at specific steps. Support conversations, even the ones that don’t name a bug explicitly, cluster around problem areas if you read them as a corpus rather than as individual tickets.
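If you only have raw click events rather than a session replay product, a rough version of rage-click detection is easy to sketch. The following Python is an illustration under stated assumptions, not how any particular tool defines the term: the event shape `(session_id, element_id, timestamp_s)`, the function name, and the thresholds (three clicks on the same element within two seconds) are all hypothetical.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, Iterable, List, Tuple

Click = Tuple[str, str, float]  # (session_id, element_id, timestamp in seconds)


def find_rage_clicks(
    clicks: Iterable[Click],
    min_clicks: int = 3,      # assumed threshold, tune for your product
    window_s: float = 2.0,    # assumed time window, tune for your product
) -> List[Tuple[str, str]]:
    """Return (session_id, element_id) pairs with a burst of repeated clicks.

    Assumes clicks arrive in timestamp order within each session.
    """
    recent: Dict[Tuple[str, str], Deque[float]] = defaultdict(deque)
    flagged: List[Tuple[str, str]] = []
    already_flagged = set()

    for session_id, element_id, ts in clicks:
        key = (session_id, element_id)
        window = recent[key]
        window.append(ts)
        # Discard clicks that have fallen out of the sliding time window.
        while ts - window[0] > window_s:
            window.popleft()
        if len(window) >= min_clicks and key not in already_flagged:
            already_flagged.add(key)
            flagged.append(key)
    return flagged


events = [
    ("s1", "save-button", 0.0),
    ("s1", "save-button", 0.4),
    ("s1", "save-button", 0.9),   # third click in under a second
    ("s2", "save-button", 0.0),
    ("s2", "save-button", 5.0),
    ("s2", "save-button", 10.0),  # three clicks, but spread out
]
print(find_rage_clicks(events))
```

The output is a list of places to go look, not a list of bugs. A flagged element might be a broken handler, a slow response with no loading state, or just an impatient user.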

The practice of reading these signals regularly, not just when something catastrophic happens, changes your relationship to production bugs entirely. You stop waiting for users to tell you what’s broken and start seeing what’s broken directly. Set up a weekly ritual: look at your top JS errors, look at your worst-converting funnels, read the last 20 support messages. You’ll find bugs you didn’t know existed.
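The funnel part of that ritual can start as a few lines of Python over numbers exported from whatever analytics tool you use. This is a sketch under assumptions: the step names and counts are invented for illustration, and the input is assumed to be an ordered list of how many users reached each step.

```python
from typing import List, Tuple

Funnel = List[Tuple[str, int]]  # ordered (step_name, users_who_reached_it)


def step_conversion(funnel: Funnel) -> List[Tuple[str, str, float]]:
    """Return (from_step, to_step, conversion_rate) for each adjacent pair."""
    rates = []
    for (prev_name, prev_users), (next_name, next_users) in zip(funnel, funnel[1:]):
        # Guard against an empty step so the report never divides by zero.
        rate = next_users / prev_users if prev_users else 0.0
        rates.append((prev_name, next_name, rate))
    return rates


def worst_step(funnel: Funnel) -> Tuple[str, str, float]:
    """Return the transition with the lowest step-to-step conversion."""
    return min(step_conversion(funnel), key=lambda r: r[2])


# Hypothetical checkout funnel; the numbers are made up.
checkout = [("cart", 1000), ("shipping", 800), ("payment", 400), ("confirm", 380)]
for prev_name, next_name, rate in step_conversion(checkout):
    print(f"{prev_name} -> {next_name}: {rate:.0%}")
print("worst:", worst_step(checkout))
```

A single snapshot matters less than the trend. If the same transition gets worse week over week, or is much worse on one browser or device, that is usually a bug nobody filed.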

The other lever is reducing the friction to report. In-app feedback that appears contextually, immediately after a user hits an error state, converts dramatically better than a generic “report a bug” link buried in a menu. You’re catching them at the moment of frustration, when the motivation to say something is highest.

The counterargument

The obvious pushback here is that this logic could be used to justify underinvesting in tests. It shouldn’t be. Tests give you fast, cheap, repeatable feedback on regressions and logic errors. They’re essential for moving quickly without constantly breaking things. Nothing in this argument is a case for writing fewer tests.

The point is about mental models, not resource allocation. If you believe your test coverage is a reasonable proxy for your production bug rate, you’re going to underinvest in production observability, in user feedback infrastructure, and in the kind of qualitative reading of user behavior that surfaces the bugs that never get filed. You’ll keep shipping with confidence right up until a churned customer tells you on their way out the door that a feature you thought was working hadn’t worked for them in months.

What this means for how you build

Treat production as the only ground truth. Your tests are a filter, not a guarantee. Your users are running an experiment on your software right now, and most of the results are being quietly discarded rather than reported back to you.

Build the infrastructure to hear what they’re not saying. Watch where they stop. Read what they write to support. Instrument the flows that matter. Make it easy to report something broken.

Your test suite tells you what you built. Your users tell you whether it works. You need both signals, and right now most teams are only listening to one.