The Simple Version

When you rewrite a prompt to get a better response from an AI, you’re doing the same thing as a researcher tweaking a model’s temperature or top-p settings: steering the distribution of outputs. The tools look different, but the underlying act is the same.

Why This Framing Matters

Prompt engineering has accumulated a lot of mystique. There are courses, certifications, and job listings for it. Some of that is warranted. Some of it obscures something fairly mechanical happening underneath.

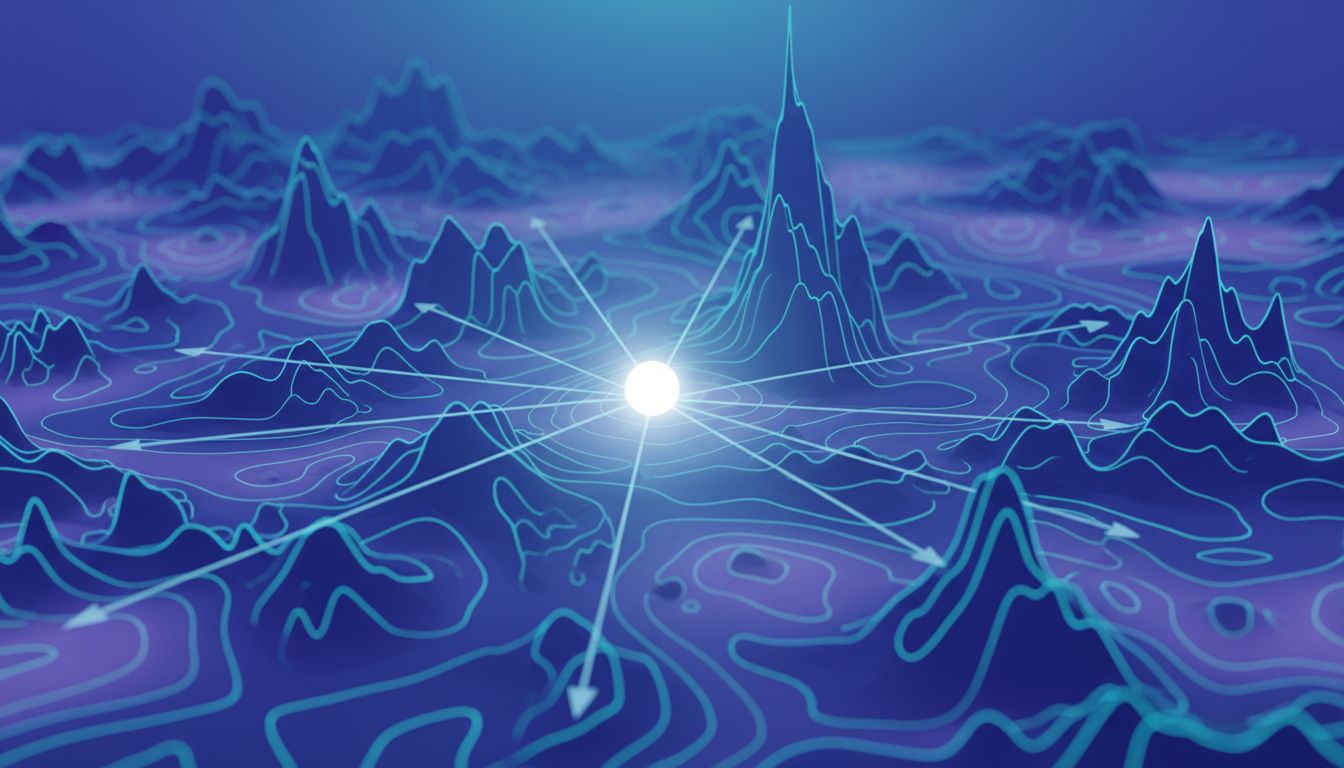

Here’s what’s actually going on. A large language model doesn’t read your prompt the way you read a sentence. It converts your words into tokens, maps those tokens into a high-dimensional vector space, and uses attention mechanisms to figure out which parts of the input should influence the output most strongly. The prompt isn’t a command. It’s a coordinate in a vast space of possible contexts, and your output is the model’s best guess at what text should follow that coordinate.

When you change the prompt, you shift that coordinate. Change it enough, and you end up in a different neighborhood of the output distribution entirely. That’s not fundamentally different from what happens when a researcher adjusts sampling temperature (which controls how much randomness the model introduces when picking its next token) or, at a deeper level, updates the model’s weights through fine-tuning.
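A toy way to see "changing the context moves the output distribution" is a bigram counter. It is nothing like a transformer (no embeddings, no attention), and the corpus below is invented for illustration, but the core idea carries over: the next-token probabilities are conditioned on what came before.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus, for illustration only.
CORPUS = (
    "the cat sat on the mat . "
    "the cat chased the mouse . "
    "the dog sat on the rug . "
    "a dog chased a cat ."
).split()


def build_bigram_counts(tokens):
    """Count how often each token follows each one-token context."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def next_token_distribution(counts, context):
    """Normalize the follow-up counts for one context into probabilities."""
    followers = counts[context]
    total = sum(followers.values())
    return {tok: n / total for tok, n in followers.items()}


counts = build_bigram_counts(CORPUS)

# Same "model", two different one-word contexts, two different distributions.
print("after 'cat':", next_token_distribution(counts, "cat"))
print("after 'dog':", next_token_distribution(counts, "dog"))
```

A real model conditions on the whole prompt rather than one word, which is why small wording changes can move the distribution in ways this toy cannot show.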

The Knobs You Don’t See

Model APIs such as those for GPT-4 and Claude expose a small set of explicit sampling parameters to users: temperature, top-p, max tokens, and, on some providers, frequency and presence penalties. These are the obvious levers. Temperature near zero makes the model close to deterministic and conservative, and sometimes repetitive. Push it toward one and the outputs get more varied and creative, sometimes usefully, sometimes not.
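For readers who want to see what temperature and top-p do mechanically, here is a minimal sketch in plain Python. The logits are made up, and real inference stacks apply these steps to vocabularies of many thousands of tokens, often with extra details; treat this as the textbook version, not any provider's implementation.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities after dividing by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive; handle 0 as greedy argmax")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs, top_p):
    """Keep the smallest set of most likely tokens whose mass reaches top_p,
    zero out the rest, and renormalize."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = [], 0.0
    for i in order:
        kept.append(i)
        mass += probs[i]
        if mass >= top_p:
            break
    filtered = [0.0] * len(probs)
    for i in kept:
        filtered[i] = probs[i] / mass
    return filtered


LOGITS = [2.0, 1.0, 0.5, -1.0]  # hypothetical scores for four candidate tokens

for t in (0.2, 1.0):
    probs = softmax_with_temperature(LOGITS, t)
    print(f"T={t}:", [round(p, 3) for p in probs])

nucleus = top_p_filter(softmax_with_temperature(LOGITS, 1.0), top_p=0.9)
print("top-p 0.9:", [round(p, 3) for p in nucleus])
```

Lower temperature concentrates probability on the top-scoring token; top-p then cuts off the unlikely tail before sampling.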

But here’s the thing most people don’t realize: your prompt text is also a parameter. It’s just encoded differently. Telling a model “you are a concise technical writer” in the system prompt shifts its output distribution toward shorter, denser responses in much the same way that lowering temperature shifts it toward higher-probability tokens. Asking the model to “think step by step” (a zero-shot form of chain-of-thought prompting; the technique was introduced in a 2022 Google paper by Wei et al., and this phrasing was popularized by Kojima et al. the same year) pushes the model to generate intermediate reasoning steps before committing to an answer. The phrase itself acts as a soft switch.

This is why seemingly trivial prompt changes produce large output differences. Swapping “list” for “enumerate” can shift response formatting. Adding “be concise” competes with a verbose training distribution and usually wins. These aren’t stylistic preferences you’re communicating to a conscious reader. They’re weights you’re applying to a probability machine.

Where the Analogy Breaks Down

The parameter-tuning framing is useful but not perfect. A few places where it gets complicated.

First, prompt effects are non-linear and hard to predict. Adjusting temperature by 0.1 typically produces a small, smooth change in output variability. Changing one word in a prompt can cascade through attention layers in ways that are nearly impossible to anticipate. The input space for prompts is astronomically larger than the input space for numeric hyperparameters, which makes systematic search much harder.
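The smooth half of that contrast is easy to check numerically. The sketch below uses made-up logits and measures the entropy of the next-token distribution as temperature moves in steps of 0.1. The prompt half cannot be reproduced without a real model, so it is not attempted here.

```python
import math


def softmax(logits, temperature):
    """Temperature-scaled softmax over a list of raw scores."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def entropy_bits(probs):
    """Shannon entropy in bits; higher means more spread-out outputs."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


LOGITS = [3.0, 1.5, 0.5, 0.0, -2.0]  # hypothetical scores for five tokens

temperatures = [round(0.5 + 0.1 * i, 1) for i in range(11)]  # 0.5 through 1.5
entropies = [entropy_bits(softmax(LOGITS, t)) for t in temperatures]

for t, h in zip(temperatures, entropies):
    print(f"T={t:.1f}  entropy={h:.3f} bits")
```

Each 0.1 step nudges entropy upward by a modest amount with no jumps, which is what makes a numeric knob like temperature easy to sweep.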

Second, prompts interact with training data in opaque ways. When you write “respond like a senior engineer,” you’re triggering patterns that exist because the model saw a lot of text written by (or about) senior engineers during training. The effect depends on what that training data actually contained. You’re not directly programming behavior; you’re activating latent patterns. This is why hallucinations can often be traced back to what the prompt implicitly told the model to expect.

Third, prompts have a context window budget. You can’t just keep adding instructions indefinitely. In fact, there are documented cases where adding more context actively hurts performance because later tokens can dilute or confuse the attention signal from earlier ones. This has no clean analog in traditional hyperparameter tuning.
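One way to make the budget concrete is to rank instructions by importance and stop adding them once a token limit is reached. The sketch below is an assumption-heavy illustration: `count_tokens` is a crude whitespace stand-in for a real tokenizer, and the budget and instruction list are invented.

```python
def count_tokens(text):
    """Crude stand-in for a tokenizer: one token per whitespace-separated word.
    Real tokenizers split text differently, so treat counts as rough."""
    return len(text.split())


def fit_to_budget(instructions, budget):
    """Greedily keep instructions, most important first, within a token budget.

    An item that does not fit is dropped, but later (smaller) items are
    still considered. Returns (kept, dropped).
    """
    kept, dropped, used = [], [], 0
    for item in instructions:
        cost = count_tokens(item)
        if used + cost <= budget:
            kept.append(item)
            used += cost
        else:
            dropped.append(item)
    return kept, dropped


# Hypothetical instructions, ordered from most to least important.
INSTRUCTIONS = [
    "You are a concise technical writer.",
    "Answer in plain text.",
    "Cite the section you are quoting from when possible.",
    "Use short sentences.",
]

kept, dropped = fit_to_budget(INSTRUCTIONS, budget=14)
print("kept:", kept)
print("dropped:", dropped)
```

Staying under the limit is only part of the problem. Since extra context can hurt even when it fits, it is worth testing whether each kept instruction actually improves the output.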

What This Means Practically

If you accept that prompts are a form of parameter tuning, a few practical conclusions follow.

Treat prompt development like experimentation, not writing. The intuition that a well-written prompt is one that sounds clear and professional to a human reader is wrong, or at least incomplete. What matters is how the prompt shifts output distributions. That requires testing, not just careful prose. Keep a log of what you changed and what happened.
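A log like that can be as simple as a table with one row per run. The sketch below is one possible shape, not a standard tool: `fake_generate` is a hypothetical placeholder for whatever model client you use, and the column names are arbitrary.

```python
import csv
import io
from datetime import datetime, timezone


def fake_generate(prompt, temperature):
    """Hypothetical stand-in for a model call; swap in a real client."""
    return f"[{len(prompt.split())} prompt words @ T={temperature}]"


def run_experiment(variants, temperatures, generate, trials=3):
    """Run every prompt variant at every temperature, several times each,
    and return one log row per call."""
    rows = []
    for name, prompt in variants.items():
        for temperature in temperatures:
            for trial in range(trials):
                output = generate(prompt, temperature)
                rows.append({
                    "timestamp": datetime.now(timezone.utc).isoformat(),
                    "variant": name,
                    "temperature": temperature,
                    "trial": trial,
                    "prompt": prompt,
                    "output": output,
                    "output_words": len(output.split()),
                })
    return rows


def to_csv(rows):
    """Serialize log rows to CSV text so runs can be diffed or charted later."""
    if not rows:
        return ""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


VARIANTS = {
    "baseline": "Summarize the report.",
    "concise": "Summarize the report. Be concise.",
}

rows = run_experiment(VARIANTS, temperatures=[0.2, 0.7], generate=fake_generate)
print(to_csv(rows))
```

Running several trials per setting matters because a single sample from a distribution says little about how the distribution itself moved.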

Be skeptical of prompt “recipes” that travel without context. A chain-of-thought prompt that works well for GPT-4 at temperature 0.7 may behave differently on Claude or on an older model. The underlying architecture, training data, and default sampling parameters all interact with your prompt. Copy-pasting techniques from a blog post is like copying hyperparameters from someone else’s training run on a different dataset.

The real expertise is understanding the model, not the words. The best prompt engineers aren’t people who write beautifully. They’re people who have developed accurate mental models of how a given LLM responds to different input structures. That’s closer to the intuition a good ML researcher has about how a model behaves under different training conditions than it is to copywriting skill.

The Career Implications

This framing also puts the “prompt engineer” job title in a clearer light. The role is real and genuinely valuable right now, for the same reason that knowing how to tune a complex instrument matters when you’re playing it live. But treating it as a stable, discrete profession may be optimistic.

As models improve, they become more robust to sloppy prompts. As tooling matures, more of the systematic work (iterating through prompt variations, evaluating outputs, managing context windows) gets automated. The judgment layer stays valuable. The craft layer gets commoditized.

That’s not a knock on people doing this work today. It’s an honest read of where the leverage is. Understanding that you’re tuning a probability machine, not writing instructions for a person, is what separates the practitioners who will adapt as the tools change from the ones who won’t.