In 2016, GitHub suffered a production incident that their test suite had no realistic chance of catching. A network partition during a database failover caused two nodes to briefly disagree about who was the primary. For a short window, data was written to the wrong node. By the time the system corrected itself, some commits had been lost and some repositories were showing incorrect information to users. The tests were passing. The staging environment was fine. The bug existed in the gap between what they tested and how the real world actually behaves.

This is not a story about GitHub being negligent. It’s a story about a category of bug that your tests are structurally incapable of finding, and what that means for how you build software.

The Setup

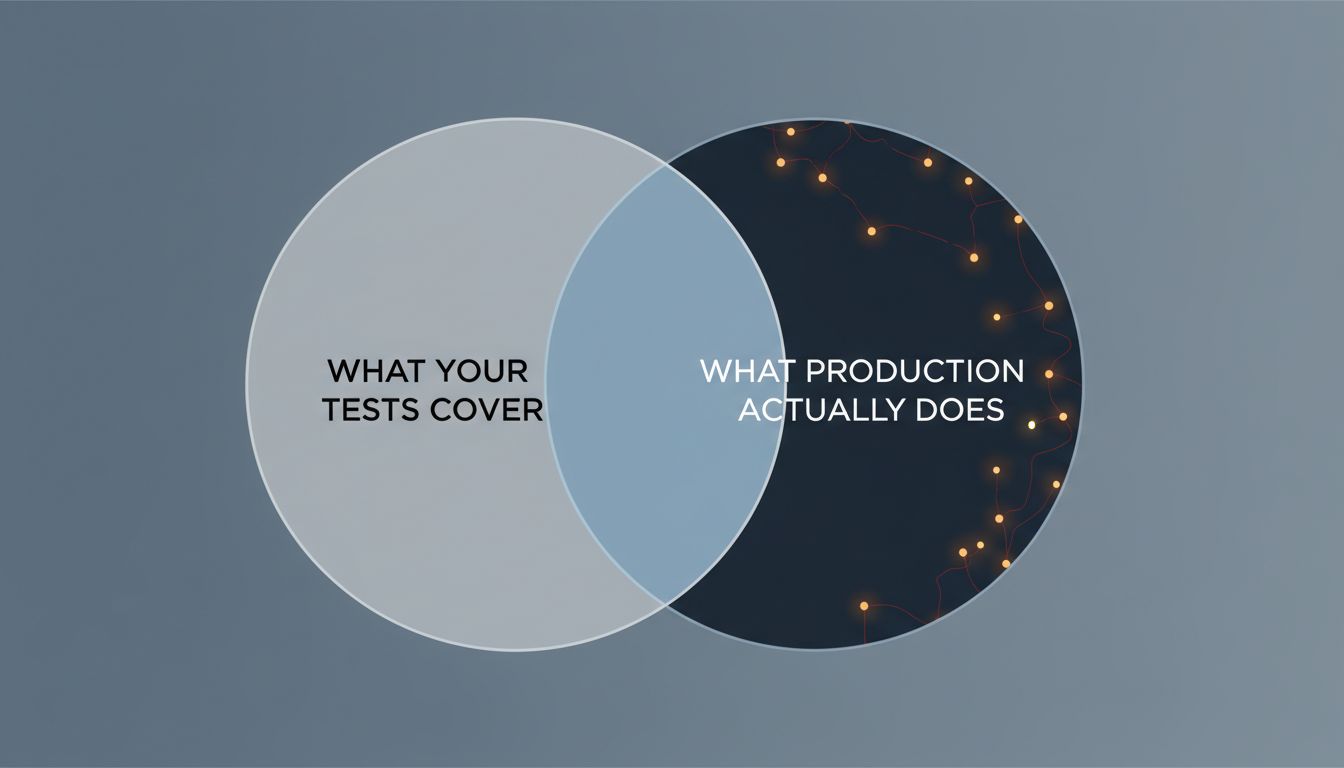

Every test suite is a model. When you write a unit test, you’re saying: “I believe this function behaves this way, given these inputs, in this environment.” When you write an integration test, you’re making slightly broader claims. When you spin up a staging environment, you’re trying to approximate production.

The problem is that production isn’t an environment. It’s a population of environments, users, timing conditions, network states, and data shapes that you can never fully enumerate in advance. Your test suite is a sketch of that population. A good sketch, hopefully. But a sketch.

For most bugs, this is fine. Logic errors, off-by-ones, null pointer exceptions in predictable code paths: these show up in tests because the tests are a reasonable model of how the code will be exercised. You write a test, the bug fails it, you fix the bug.

But a specific class of bugs doesn’t appear until production, and they keep appearing in production even after you’ve added more tests. These bugs are not just hard to catch. They’re pointing at something real about the gap between your model and reality.

What Happened at Knight Capital

In August 2012, Knight Capital Group deployed new trading software. The deployment was botched in a specific way: one of their eight production servers wasn’t updated with the new code, and that server still had an old, dormant feature activated by a flag that the new code repurposed for something different.

For 45 minutes, that server sent out erroneous orders. Knight Capital lost $440 million. The firm essentially ceased to exist as an independent entity.

The bug was not subtle in retrospect. But the test suite couldn’t catch it because the test suite didn’t know that one server would be running different code than the others. The tests assumed a homogeneous deployment. Production was not homogeneous. That gap, between assumed uniformity and actual partial deployment, was where the catastrophe lived.

No unit test could have found this. No integration test that ran on a single environment would have found this. The failure mode required the specific configuration that only existed in production, during the actual deployment, with real traffic flowing through it.

Why This Keeps Happening

The GitHub and Knight Capital cases are dramatic, but the same dynamic plays out at smaller scales constantly. You’ve probably seen it yourself. A race condition that only surfaces under real user concurrency. A caching bug that only appears after a specific sequence of reads and writes that your synthetic load tests don’t replicate. A third-party API that behaves slightly differently in production than in the sandbox you test against.

These bugs share a structure. They require state or timing or environmental configuration that your tests don’t model, not because your test suite is poorly written, but because accurately modeling production is either impossible or prohibitively expensive.

The temptation, after each of these incidents, is to add more tests. Write a test that covers this exact scenario. But this is often the wrong response, or at least an incomplete one. Six reasons your staging environment is lying to you goes into the structural reasons why you can’t always close this gap by improving the pre-production environment. The issue is deeper than test coverage.

What You Can Actually Do

The production-only bug is not a testing failure in the conventional sense. It’s an observability and deployment architecture failure. Here’s how to reframe your response.

Treat production as the primary source of truth about your system’s behavior. This sounds obvious, but most teams treat production data as a post-mortem resource rather than an ongoing input. If you’re not collecting detailed telemetry in production, you’re flying blind. Distributed traces, structured logs with request context, and real-time error aggregation aren’t nice-to-haves. They’re the only way you’ll understand bugs that live in production state.

Make deployment incremental and reversible. Knight Capital’s mistake wasn’t writing bad code. It was deploying to all servers simultaneously with no ability to quickly roll back or limit blast radius. Feature flags, canary deployments, and blue-green infrastructure don’t prevent bugs, but they convert catastrophic failures into contained ones. A bug that affects 1% of traffic for 10 minutes is a completely different incident than one that affects 100% of traffic for 45 minutes.

Stop treating the production incident as proof that you need more pre-production tests. Sometimes that’s true. Often, the right response is to improve your production observability so you catch the next unforeseen failure faster, not to try to enumerate all possible failures in advance. You cannot win a game of whack-a-mole against an infinite problem space.

Look for what the bug reveals about your mental model. When a production-only bug surfaces, ask not just “how do I fix this” but “what did I believe about this system that turned out to be wrong?” GitHub’s incident revealed an assumption about network partition behavior during failovers. Knight Capital’s incident revealed an assumption about deployment uniformity. Each of those assumptions lived somewhere in the test suite, encoded as things the tests didn’t bother to vary. When you find and correct the assumption, you’re doing more durable work than just adding a regression test.

The Lesson Worth Keeping

The bugs that only appear in production are not anomalies. They are the predictable consequence of testing a model of your system while shipping the actual system. The model will always be incomplete. That’s not a failure of your engineering process. It’s a property of complex systems.

What separates teams that handle this well from teams that keep getting blindsided isn’t a better test suite (though good tests still matter). It’s an honest accounting of what their tests can and cannot tell them, combined with the production instrumentation to learn quickly when reality departs from the model.

Your tests are a statement about what you currently understand. Production is the feedback loop that tells you where your understanding is wrong. What actually happens to an AI model after deploy covers a related version of this problem in machine learning systems, where the gap between training data and production data causes exactly the same kind of drift. The form changes but the structure is identical: a model of the world that stops matching the world.

Build better tests, yes. But also build systems that fail gracefully, deploy incrementally, and tell you clearly what’s happening while they’re running. The goal isn’t to eliminate the gap between your model and production. It’s to make sure that when the gap shows up, you find out immediately and can act on it before it becomes the next case study.