Most people treat a checksum like a lock. If the hash matches, the file is safe. If it doesn’t, something went wrong in transit and you should try again. This mental model is almost entirely wrong, and the gap between what checksums actually do and what people think they do is where a lot of security confusion lives.

A checksum is not a security mechanism. It is a receipt.

What a Hash Actually Computes

When you download a file and verify its SHA-256 hash against the one posted on the distribution page, you are answering one question: do the bits on your disk match the bits someone measured at a specific point in time? That’s it. The hash tells you nothing about whether those original bits were malicious, whether the server was compromised before the hash was computed, or whether the page hosting the hash is trustworthy.

SHA-256, like its predecessor SHA-1 (now deprecated for most security uses), works by feeding your file through a deterministic mathematical function that produces a fixed-length fingerprint: 256 bits, usually shown as 64 hexadecimal characters. Change a single bit in the source file and the output changes beyond recognition, with roughly half of the output bits flipping. This property, called the avalanche effect, is what makes checksums reliable for detecting accidental corruption: even the smallest change produces a visibly different hash. Corruption from cosmic rays, failing drives, and network packet errors is genuinely detectable this way.
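To see the avalanche effect directly, here is a small sketch using Python’s standard `hashlib`. It hashes a placeholder input and a copy with one flipped bit, then counts how many of the 256 output bits differ. The input bytes are arbitrary stand-ins, not taken from any real release.

```python
import hashlib


def sha256_bits_differing(a: bytes, b: bytes) -> int:
    """Count how many of the 256 output bits differ between SHA-256(a) and SHA-256(b)."""
    da = int.from_bytes(hashlib.sha256(a).digest(), "big")
    db = int.from_bytes(hashlib.sha256(b).digest(), "big")
    # XOR leaves a 1 wherever the two digests disagree; count those bits.
    return bin(da ^ db).count("1")


original = b"release-1.2.3.tar.gz contents"
# Flip only the lowest bit of the first byte.
corrupted = bytes([original[0] ^ 0x01]) + original[1:]

print(hashlib.sha256(original).hexdigest())
print(hashlib.sha256(corrupted).hexdigest())
print(sha256_bits_differing(original, corrupted), "of 256 bits differ")
```

Expect a count somewhere near 128, about half the output; the exact number depends on the input.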

Accidental corruption, though, is not the threat most people are worried about when they carefully copy a hash string and compare it character by character.

The Attack Surface the Hash Doesn’t Cover

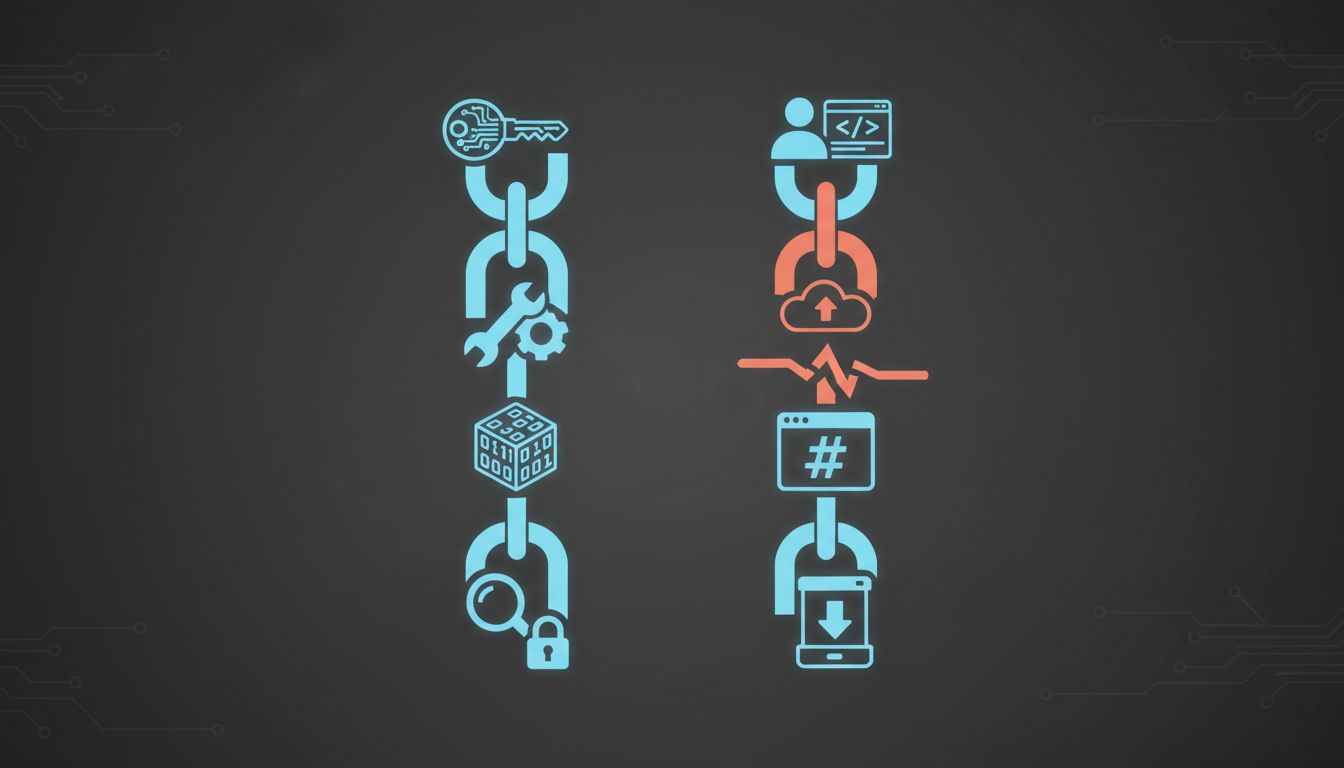

Consider the actual chain of trust when you download software. The developer builds the binary. It gets uploaded to a distribution server. That server generates or is given a hash. The hash is posted on a webpage. You visit the webpage, copy the hash, download the binary, and compare.

A motivated attacker who has compromised the distribution server can replace both the binary and the hash simultaneously. Your verification will succeed. The hash will match perfectly, because it was computed against the malicious file. This is not a theoretical scenario. The SolarWinds breach, disclosed in 2020, was a more sophisticated version of the same problem: attackers got into the build process itself, so trojanized Orion updates went out through official channels carrying valid signatures.

The hash comparison you ran was technically correct. It proved the file you downloaded matched the file on the server. What it couldn’t prove was that the file on the server was the one the developer intended to ship.

This is the distinction that matters. A checksum answers the question “did my download get corrupted in transit?” It does not answer “should I trust what I downloaded?”
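The attack fits in a few lines. This is a toy model rather than real infrastructure: `publish` is a hypothetical stand-in for a distribution server that hosts both the file and the page showing its hash, and the byte strings are placeholders.

```python
import hashlib


def verify_against_posted_hash(file_bytes, posted_hex):
    """The usual download check: does SHA-256 of the file equal the posted hex string?"""
    return hashlib.sha256(file_bytes).hexdigest() == posted_hex.strip().lower()


def publish(binary):
    """Hypothetical distribution server: one host serves the binary and its hash page."""
    return {"binary": binary, "hash_page": hashlib.sha256(binary).hexdigest()}


# What the developer intended to ship.
server = publish(b"legitimate installer")

# An attacker who controls the server replaces the binary AND regenerates the hash.
server = publish(b"trojanized installer")

# The user fetches both from that same server and runs the standard check.
downloaded = server["binary"]
posted = server["hash_page"]
print(verify_against_posted_hash(downloaded, posted))  # True: the check passes anyway
```

The same function still catches accidental damage: alter any byte of `downloaded` and it returns False. It simply cannot tell a corrupted file apart from a deliberately replaced one whose hash was replaced too.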

What Actually Provides the Trust Guarantee

Code signing does something fundamentally different. When a developer signs a binary with their private key, they create a cryptographic proof that someone in possession of that key signed off on that exact file. Verification requires the developer’s public key, which can be obtained independently. A compromised distribution server cannot forge this signature without the private key, which the developer (presumably) controls separately.

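This is why Apple’s code signing and notarization, the signature checks built into Linux package managers like apt and dnf, and Windows Authenticode exist. They decouple the trust anchor from the distribution channel. The signature travels with the file, or, as with apt’s signed repository metadata, with a signed list of hashes that vouches for it. The verification key comes from somewhere else.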
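A rough sketch of the signing idea, using only Python’s standard library. Since the standard library has no public-key signature API, this hand-rolls textbook RSA over a SHA-256 digest with tiny, publicly known primes. It is insecure by design and only shows the shape of the protocol; real signing uses vetted schemes such as RSA-PSS or Ed25519 through a maintained library. The key pairs, file contents, and helper names are all hypothetical.

```python
import hashlib

# TOY textbook RSA, for illustration only: no padding, tiny publicly known primes.
# Real code signing uses vetted schemes (RSA-PSS, ECDSA, Ed25519) from a maintained library.
E = 65537  # common public exponent


def make_keypair(p, q):
    """Return (public, private) keys as (n, e) and (n, d) tuples from two distinct primes."""
    n = p * q
    d = pow(E, -1, (p - 1) * (q - 1))  # modular inverse of E (Python 3.8+)
    return (n, E), (n, d)


def digest_int(data, n):
    """SHA-256 of the file as an integer, reduced mod n (acceptable only in a toy)."""
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % n


def sign(private_key, data):
    n, d = private_key
    return pow(digest_int(data, n), d, n)


def verify(public_key, data, signature):
    n, e = public_key
    return pow(signature, e, n) == digest_int(data, n)


# Hypothetical developer key pair from the Mersenne primes 2**89 - 1 and 2**107 - 1.
dev_public, dev_private = make_keypair(2**89 - 1, 2**107 - 1)
release = b"legitimate installer"
release_sig = sign(dev_private, release)  # published next to the file

# The user obtained dev_public independently, not from the download server.
print(verify(dev_public, release, release_sig))  # True

# An attacker on the server swaps the file; the old signature no longer fits...
trojan = b"trojanized installer"
print(verify(dev_public, trojan, release_sig))  # False

# ...and signing with the attacker's own key does not help either.
attacker_public, attacker_private = make_keypair(2**61 - 1, 2**127 - 1)
print(verify(dev_public, trojan, sign(attacker_private, trojan)))  # False
```

The point is the asymmetry: anyone holding `dev_public` can check a signature, but producing one that verifies requires `dev_private`, which never touches the distribution server.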

The analogy worth holding onto: a checksum is like confirming that a sealed envelope arrived with the seal intact. A cryptographic signature is like confirming that the seal belongs to the person you expected to send it. Both are useful. Only one of them actually authenticates the sender.

For open source software where signing infrastructure is sometimes absent, reproducible builds offer a partial answer. If multiple independent parties can build identical binaries from the same source, a mismatch between the official binary and community-reproduced versions becomes detectable. The Reproducible Builds project has been quietly pushing this practice across the Linux ecosystem for years, and it’s one of the more underappreciated developments in software supply chain security.
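Checking a release against independent rebuilds comes down to comparing digests. The sketch below assumes the rebuilt artifacts are already in hand as bytes; the builder names and contents are hypothetical placeholders, and a real setup would collect each rebuild from a genuinely separate party.

```python
import hashlib


def builders_disagreeing(official, rebuilds):
    """Return the (hypothetical) builders whose rebuilt artifact differs from the official one.

    `rebuilds` maps a builder name to the bytes it produced from the same tagged source.
    """
    official_digest = hashlib.sha256(official).hexdigest()
    return sorted(
        builder
        for builder, artifact in rebuilds.items()
        if hashlib.sha256(artifact).hexdigest() != official_digest
    )


# Three independent parties rebuild from the same source and get identical bytes...
rebuilds = {
    "builder-a": b"binary built from v1.0 source",
    "builder-b": b"binary built from v1.0 source",
    "builder-c": b"binary built from v1.0 source",
}
# ...but the official download carries something extra.
official = b"binary built from v1.0 source + injected payload"

print(builders_disagreeing(official, rebuilds))  # ['builder-a', 'builder-b', 'builder-c']
print(builders_disagreeing(rebuilds["builder-a"], rebuilds))  # []
```

When the independent builders all agree with one another but not with the official artifact, the official build is the one to investigate. Explaining why two builds differ is a separate job; the Reproducible Builds project maintains diffoscope for that.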

Where Checksums Remain Genuinely Valuable

None of this means checksums are useless. For their intended purpose, they remain indispensable.

Large file transfers across unreliable networks fail silently all the time. A 10GB dataset, a disk image, a firmware update pushed across a cellular link: these are contexts where bit-level corruption is a real operational hazard, not a theoretical one. Checksums catch these failures reliably and cheaply. Database backup systems, scientific data repositories, and archival storage all depend heavily on periodic hash verification to detect silent data rot, a problem that compounds over years on spinning drives.
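For the large-file and archival cases, the hash has to be computed as a stream so a 10GB image never needs to fit in memory. This Python sketch reads files in 1 MiB chunks (an arbitrary choice), records a baseline manifest, and later re-hashes everything to flag silent changes. The function names and the in-memory dict manifest are illustrative assumptions; a real archive would persist the manifest somewhere separate from the data it describes.

```python
import hashlib
import os
import tempfile
from pathlib import Path

CHUNK_SIZE = 1 << 20  # 1 MiB per read keeps memory use flat for multi-GB files


def sha256_file(path):
    """Stream a file through SHA-256 without loading it into memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(CHUNK_SIZE), b""):
            h.update(chunk)
    return h.hexdigest()


def build_manifest(paths):
    """Record a baseline digest per file, e.g. right after a backup completes."""
    return {str(p): sha256_file(p) for p in paths}


def scrub(manifest):
    """Re-hash every file; return the paths whose contents no longer match the baseline."""
    return sorted(p for p, expected in manifest.items() if sha256_file(p) != expected)


with tempfile.TemporaryDirectory() as workdir:
    image = Path(workdir, "disk.img")
    image.write_bytes(os.urandom(3 * CHUNK_SIZE + 7))  # spans several chunks
    notes = Path(workdir, "notes.txt")
    notes.write_bytes(b"archived notes")

    manifest = build_manifest([image, notes])
    clean_run = scrub(manifest)  # nothing has changed yet

    # Simulate silent rot: flip a single bit in the middle of the image.
    data = bytearray(image.read_bytes())
    data[CHUNK_SIZE + 1] ^= 0x04
    image.write_bytes(bytes(data))
    rotted = scrub(manifest)

print(clean_run)  # []
print(rotted == [str(image)])  # True
```

Running `scrub` on a schedule is the cheap, reliable part; deciding what to do about a flagged file (restore from another copy, re-transfer) is left to the surrounding system.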

The confusion arises because the same tool gets recruited for a security job it was never designed to do. This pattern appears throughout computing (most encryption advice, for instance, ends up solving the wrong problem), and the mismatch between a tool’s design and its deployed use case tends to create exactly the vulnerabilities that practitioners think they’ve closed.

The Lesson Is About Trust Chains, Not Hash Functions

The checksum verification ritual that ships with most Linux distributions and many open source projects is not security theater. It’s useful integrity checking wrapped in a presentation that implies security guarantees it cannot deliver.

The actual question worth asking before running any downloaded software is not “does the hash match?” but “where did the hash come from, and is that source independent of the file I’m verifying?” If the answer to the second part is “the same webpage, served from the same domain, probably the same server,” then you’ve confirmed your download wasn’t corrupted in transit. You have not confirmed much else.
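The first half of that question can be turned into a crude automated check. This hypothetical helper only compares hostnames, so it flags the obvious case (file and hash served by the same host) and nothing more: different hostnames can still resolve to the same compromised server, so a False result does not prove independence. The URLs are placeholders.

```python
from urllib.parse import urlsplit


def served_from_same_host(binary_url, hash_url):
    """Crude heuristic: True if the file and its hash come from the same hostname.

    True means a single compromised host could have replaced both. False does NOT
    prove independence (different names can point at the same server).
    """
    binary_host = urlsplit(binary_url).hostname  # lowercased by urlsplit
    hash_host = urlsplit(hash_url).hostname
    return binary_host is not None and binary_host == hash_host


print(served_from_same_host(
    "https://downloads.example.com/tool-1.0.tar.gz",
    "https://downloads.example.com/tool-1.0.tar.gz.sha256",
))  # True: one server controls both
print(served_from_same_host(
    "https://downloads.example.com/tool-1.0.tar.gz",
    "https://checksums.example.org/tool-1.0.tar.gz.sha256",
))  # False: a different host, which is necessary but not sufficient
```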

For most personal software downloads, this level of verification is probably sufficient. Attackers with the capability to compromise major distribution servers and replace both binaries and hashes simultaneously are not typically targeting individual users. But for organizations deploying software at scale, for developers building update mechanisms, and for anyone handling genuinely sensitive systems, understanding what the checksum actually proves is the prerequisite for building a trust model that holds.