A database query takes somewhere between one and fifty milliseconds under normal conditions. That sounds fast. On the scale of what a modern CPU can accomplish, it is geological time. While your application waits for that response, something specific is happening inside your runtime. Most developers have only a vague sense of what that something is, and the vagueness is costing them.

The Thread Is Probably Just Sitting There

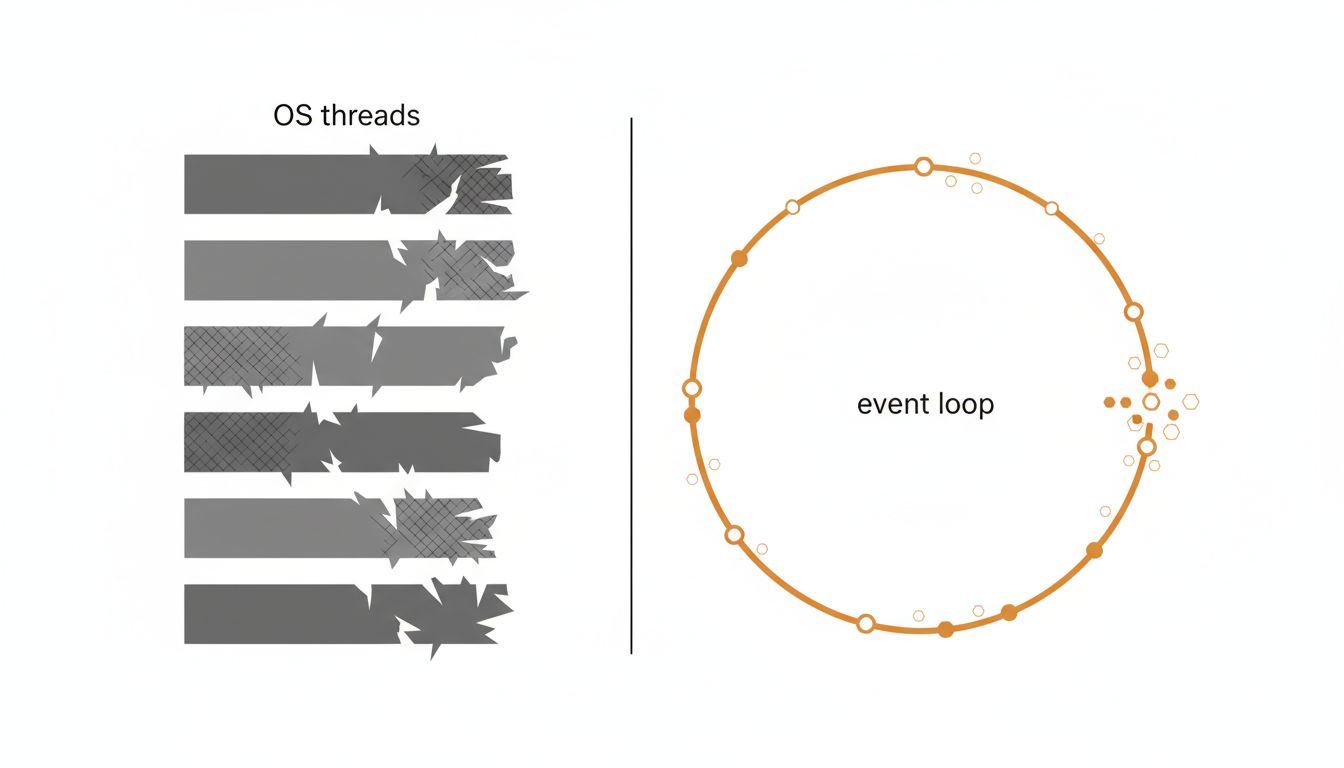

In a traditional synchronous application, the answer is blunt: your thread is blocked. The operating system has suspended it, moved it off the CPU, and parked it in a wait queue. It will stay there until the database sends back a response, at which point the OS will reschedule it, move it back onto a CPU core, and let execution continue.

This is not a bug. It is how blocking I/O was designed to work. The problem is what it implies at scale. If handling one request requires one thread, and each thread can be blocked for 20 milliseconds waiting on the database, your server’s capacity becomes a simple division problem: the number of threads you can afford to run (in memory, in scheduling overhead) divided by average wait time. On a Java server with default thread stack sizes, each thread consumes around 512KB to 1MB of memory before it does anything. Multiply by thousands of concurrent requests and you have a memory problem before you have a performance problem.

The thread-per-request model was a reasonable bet when web applications were simpler and concurrency demands were lower. It aged poorly.

What Asynchronous Runtimes Actually Do Instead

The alternative is event-driven, non-blocking I/O. Node.js popularized this model. Rust’s async/await, Python’s asyncio, Java’s virtual threads, and Go’s goroutines all represent different points on the same design continuum.

The core idea: instead of blocking a thread while waiting, register a callback or suspend a lightweight coroutine, then free the thread to do other work. When the database responds, the event loop picks up the registered continuation and resumes execution.

In Node.js, there is exactly one JavaScript thread (ignoring worker threads). When your code issues a database query, libuv (the underlying C library) hands the I/O operation to the operating system using non-blocking syscalls or a thread pool, then returns control to the event loop. The event loop can now process other requests, timers, or I/O completions. Your query’s callback fires when the OS signals that data has arrived.

Go takes a different approach. Goroutines are multiplexed onto OS threads by the Go runtime scheduler. When a goroutine blocks on I/O, the runtime parks it and runs another goroutine on the same OS thread. The programmer writes code that looks synchronous. The runtime handles the complexity underneath. This is why Go can handle tens of thousands of concurrent goroutines on a few OS threads without the memory overhead of equivalent Java threads.

Java’s Project Loom, which delivered virtual threads in JDK 21, applies a similar principle to the JVM. Virtual threads are cheap to create and park. When a virtual thread blocks on a database call, the JVM unmounts it from its carrier thread, which then picks up another virtual thread. The promise is that you get the familiar synchronous programming model without the scaling cost.

The Hidden Work: Connection Pools and the C10K Problem

Even in async runtimes, the database connection itself is a constrained resource. Most databases impose connection limits. PostgreSQL, by default, caps connections at 100. Each connection has real overhead on the database server: memory, a dedicated process or thread (depending on the database), and lock state.

This is why connection pooling exists. A pool like PgBouncer or HikariCP maintains a fixed set of live connections and queues application requests against them. Your async application might handle 10,000 concurrent requests while holding only 20 actual database connections. Requests that arrive when all connections are busy wait in the pool’s queue rather than spawning new connections.

The practical consequence: async I/O does not eliminate waiting. It moves where the waiting happens. Instead of blocking OS threads, you block lightweight coroutines or queue entries. The difference is overhead, both memory and scheduling cost. A blocked virtual thread in Java costs a few hundred bytes. A blocked OS thread costs orders of magnitude more.

This connects to a broader pattern in systems design: the bottleneck does not disappear, it migrates. Push it out of the OS thread scheduler and it appears in the connection pool. Push it out of the connection pool with more connections and it appears as database CPU saturation. Understanding where your program is actually waiting, not where you assume it is waiting, is prerequisite to fixing it. Your server’s latency numbers are probably wrong is a related read on why measurement here is harder than it looks.

Why This Matters for Architecture, Not Just Performance

The blocking versus non-blocking distinction shapes more than throughput numbers. It shapes how you reason about failure modes.

In a thread-per-request system, a slow database query has a clean blast radius. The threads waiting on that query are occupied. New requests that need the database queue up. Eventually you exhaust your thread pool and the server stops accepting work. It is a comprehensible cascade.

In an async system, the failure modes are subtler. A slow database does not immediately exhaust threads, so the system may appear healthy while request latencies climb. The event loop stays responsive. New connections get accepted. The queue grows in memory rather than in visible thread exhaustion. This can make async systems harder to monitor and diagnose, not easier.

The other architectural implication is that async code is contagious. In most languages, once you introduce an async function, everything that calls it must also be async, or you need a bridge. This is the “colored function” problem that Go deliberately avoided and that remains a genuine pain in Python and JavaScript. The runtime model you choose at the start of a project is not easily changed later.

The Honest Conclusion

Your program, while waiting for a database response, is either blocking a thread and wasting OS resources, running an event loop that is handling other work, or suspended as a lightweight coroutine waiting to be resumed. Which of these is happening depends entirely on the runtime and the I/O model you chose, not on anything the database does.

The performance argument for non-blocking I/O is real but not universal. For applications with high concurrency and I/O-bound workloads, async runtimes are a genuine improvement. For applications with modest concurrency and mostly CPU-bound work, the added complexity of async code buys little and adds cognitive overhead.

The engineers who get this right are not the ones who reflexively reach for async. They are the ones who can describe, specifically, what their program is doing during every millisecond of that wait, and who have made a deliberate choice about whether that is acceptable.