The Collision That Isn’t

The mental model most people carry about network congestion is wrong in an interesting way. When two packets arrive at the same router interface at the same moment, there’s no collision in the dramatic sense, no electrical spark, no mutual destruction. The router doesn’t panic. What happens instead is almost boring: one packet waits.

But the mechanisms underneath that simple statement are worth understanding, because they determine whether your video call stutters, whether your cloud database scales, and whether a financial exchange can process thousands of trades per second without dropping orders.

How a Router Actually Processes Packets

A modern router is not a single pipe. It’s built around a switching fabric connecting input ports to output ports, and each port has its own hardware queues. When a packet arrives, it gets parsed at line rate: the router reads the destination IP address, looks it up in the forwarding table (in high-end hardware, often held in a specialized memory called a TCAM, or ternary content-addressable memory, which compares the lookup key against every row in parallel), and determines which output port should receive it.

TCAM is worth a brief detour. Unlike regular memory, which you address to retrieve content, a TCAM lets you supply content and get back the address of the matching row. The “ternary” part means each stored bit can be 0, 1, or “don’t care,” which is what lets a single row represent an entire address prefix. A router can look up a 32-bit IPv4 destination against hundreds of thousands of routing entries in a single operation, typically within a few nanoseconds. This is why your packets aren’t sitting in lookup limbo while the router thinks.
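To make the content-addressed lookup concrete, here is a minimal software sketch of a ternary match. It assumes each row is stored as a value plus a mask (mask bits of 0 are the “don’t care” positions) and that rows are ordered longest prefix first. The prefixes and port names are invented for illustration, and the loop only stands in for the parallel comparison that real TCAM hardware performs.

```python
import ipaddress

def make_entry(prefix, port):
    """Turn 'a.b.c.d/len' into a (value, mask, prefix_len, port) ternary row."""
    net = ipaddress.ip_network(prefix)
    return (int(net.network_address), int(net.netmask), net.prefixlen, port)

# Hypothetical forwarding table; prefixes and port names are made up.
TABLE = sorted(
    (make_entry(prefix, port) for prefix, port in [
        ("0.0.0.0/0", "port0"),      # default route
        ("10.0.0.0/8", "port1"),
        ("10.1.0.0/16", "port2"),
        ("10.1.2.0/24", "port3"),
    ]),
    key=lambda row: row[2],
    reverse=True,                    # longest prefix first, so the first hit wins
)

def lookup(dst):
    """Return the output port for a destination address string."""
    addr = int(ipaddress.ip_address(dst))
    # Hardware compares all rows at once; this loop is the software stand-in.
    for value, mask, _prefix_len, port in TABLE:
        if addr & mask == value:
            return port
    raise LookupError(dst)

print(lookup("10.1.2.3"))   # port3
print(lookup("10.9.9.9"))   # port1
print(lookup("8.8.8.8"))    # port0
```

Ordering rows by prefix length is what turns “first match” into “longest-prefix match” in this sketch.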

The problem isn’t lookup. The problem is output port contention.

The Queue Is the Whole Story

Imagine two packets arrive simultaneously on different input ports but both need to leave through the same output port. The output port can only transmit one packet at a time (serialization is fundamental to how Ethernet and most other link-layer technologies work). So the second packet goes into a queue.

That queue has a finite size. On a typical enterprise router, output queues might hold somewhere between a few hundred and several thousand packets depending on configuration. When the queue fills up, something has to give. This is where routers make a real decision, and the decision matters enormously.

The naive approach is tail drop: when the queue is full, drop the next packet that arrives. Simple, fair in a rough sense, but it has a catastrophic failure mode. TCP, which governs most internet traffic, uses packet loss as a signal to slow down. If many TCP connections are all filling a queue simultaneously and all experience loss at the same moment, they all slow down at the same moment, then all ramp back up together, then fill the queue again. This produces what’s called TCP global synchronization: the queue oscillates between empty and full, wasting capacity in a rhythm nobody designed.
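A toy model shows why tail drop hits every flow at once. This sketch assumes four hypothetical flows interleaving their packets into a queue of eight slots that does not drain during the burst; the class and function names are illustrative only.

```python
from collections import Counter, deque

class TailDropQueue:
    """Bounded FIFO that rejects arrivals once it is full."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.packets = deque()

    def enqueue(self, packet):
        if len(self.packets) >= self.capacity:
            return False          # queue full: the arriving packet is dropped
        self.packets.append(packet)
        return True

    def dequeue(self):
        return self.packets.popleft() if self.packets else None

def burst(queue, flows, packets_per_flow):
    """Interleave packets from each flow and count drops per flow."""
    drops = Counter()
    for seq in range(packets_per_flow):
        for flow in flows:
            if not queue.enqueue((flow, seq)):
                drops[flow] += 1
    return dict(drops)

losses = burst(TailDropQueue(capacity=8), ["a", "b", "c", "d"], 5)
print(losses)   # {'a': 3, 'b': 3, 'c': 3, 'd': 3}
```

Every flow sees loss in the same burst, which is the precondition for all of them backing off in lockstep.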

Active Queue Management and the Smarter Approach

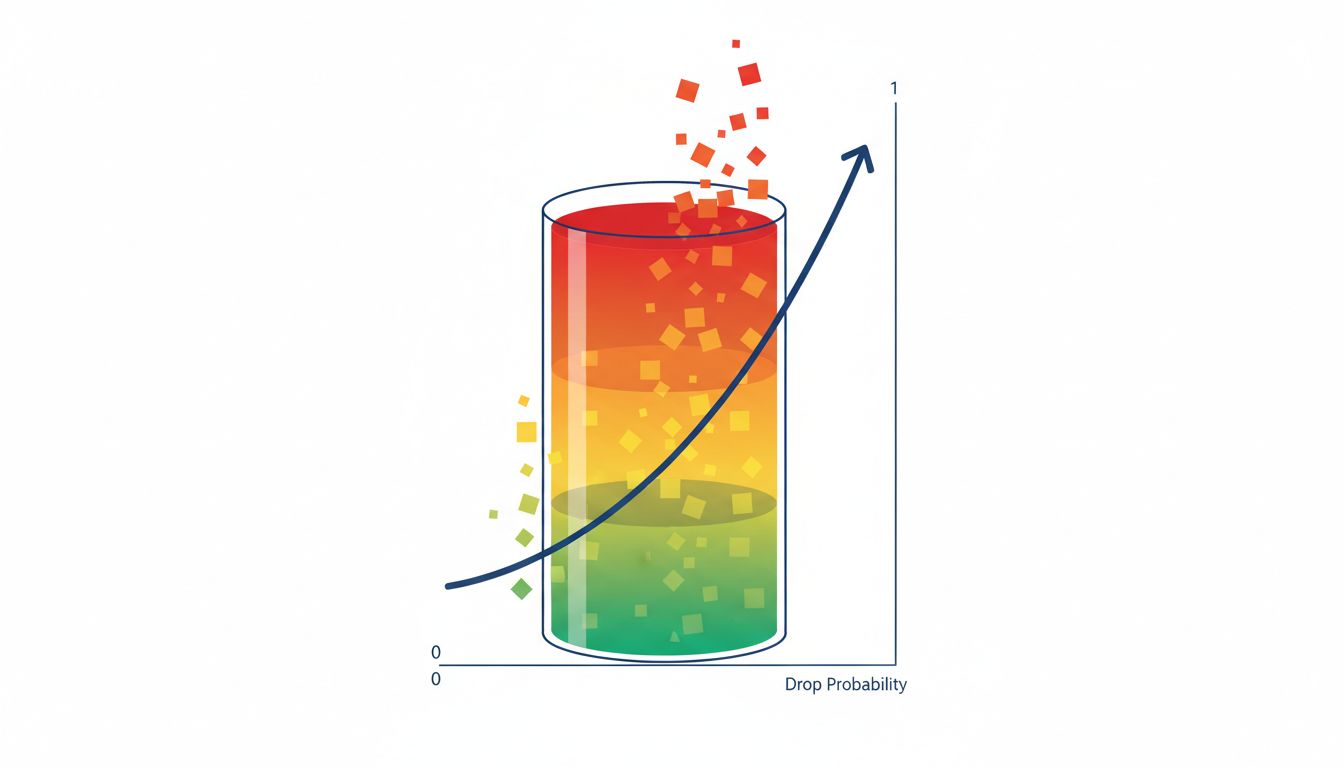

The solution, developed through significant research in the 1990s, is to start dropping (or marking) packets before the queue fills up, and to do it probabilistically so not all connections get the signal simultaneously. The original algorithm is RED (Random Early Detection); the variant most widely deployed in commercial routers is WRED, Weighted Random Early Detection.

WRED monitors the average queue depth (a moving average, so brief bursts don’t trigger drops) and defines two thresholds. Below the lower threshold, no packets are dropped. Between the two thresholds, packets are dropped with increasing probability as the queue grows. Above the upper threshold, all packets are dropped. Because the drops are randomized, different TCP connections get their congestion signals at different times and adjust asynchronously. The queue tends to stay away from both extremes, neither draining completely nor overflowing, and throughput stays high.

The “Weighted” part refers to traffic prioritization. WRED can apply different drop profiles to different traffic classes, so packets carrying voice traffic (which is sensitive to latency and has no retransmission mechanism) get more favorable treatment than bulk file transfers (which can tolerate some loss and retry).
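The threshold logic and the per-class profiles can be sketched in a few lines. This is a simplified illustration, not any vendor’s implementation: the profile numbers, the class names, and the averaging weight are assumptions, and the sketch omits refinements found in the original RED algorithm such as spacing drops by the number of packets since the last one.

```python
import random

# Hypothetical per-class profiles:
# (min_threshold, max_threshold, max_drop_probability), thresholds in packets.
# The numbers are illustrative, not vendor defaults.
PROFILES = {
    "voice": (35, 40, 0.02),   # dropped late and rarely
    "bulk": (20, 40, 0.10),    # dropped earlier and more often
}

def update_average(avg, current_depth, weight=0.002):
    """Exponentially weighted moving average of the queue depth."""
    return (1 - weight) * avg + weight * current_depth

def drop_probability(avg_depth, traffic_class):
    """Drop probability for one arriving packet of the given class."""
    min_th, max_th, max_p = PROFILES[traffic_class]
    if avg_depth < min_th:
        return 0.0                     # below the lower threshold: never drop
    if avg_depth >= max_th:
        return 1.0                     # at or above the upper threshold: always drop
    # Linear ramp from 0 to max_p between the two thresholds.
    return max_p * (avg_depth - min_th) / (max_th - min_th)

def should_drop(avg_depth, traffic_class, rng=random.random):
    """Randomized decision; rng is injectable so the sketch can be tested."""
    return rng() < drop_probability(avg_depth, traffic_class)
```

At an average depth of 30 packets, this sketch drops bulk traffic with probability 0.05 and voice traffic not at all, which is the “weighted” behavior in miniature.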

Head-of-Line Blocking and the Virtual Queue Problem

Output queuing seems clean until you think about what happens on the input side. In a high-speed router with many ports, the switching fabric itself becomes a bottleneck. Packets queue at input ports waiting to cross the fabric to their output ports, and this creates a problem called head-of-line blocking.

Suppose the first packet in an input queue is destined for an output port that’s currently busy. It sits there. Behind it might be ten packets all destined for a completely free output port, but they can’t move because the first packet is blocking the line. The result: a router that’s internally congested even when most of its output ports are idle.

The fix is virtual output queuing (VOQ), where each input port maintains a separate queue for each output port rather than a single shared queue. Packets destined for a free output port can move immediately, regardless of what’s blocking traffic to other ports. This is standard in high-performance core routers, where you simply cannot afford to waste fabric capacity.
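The difference between a single input FIFO and per-output queues shows up even in a one-slot toy example. In this sketch, packets are represented only by their destination port, `busy_outputs` is a hypothetical set of ports that cannot accept a packet in the current slot, and the function names are made up for illustration.

```python
from collections import deque

def fifo_slot(input_queue, busy_outputs):
    """Single FIFO: only the head packet is eligible to cross the fabric."""
    if input_queue and input_queue[0] not in busy_outputs:
        return input_queue.popleft()
    return None                      # head is blocked, so nothing moves

def voq_slot(voqs, busy_outputs):
    """VOQ: any non-empty queue whose output port is free may send."""
    for output, queue in voqs.items():
        if queue and output not in busy_outputs:
            queue.popleft()
            return output
    return None

arrivals = ["A", "B", "B", "B"]      # destination port of each packet, in order
busy = {"A"}                         # output A cannot accept a packet this slot

fifo = deque(arrivals)
voqs = {"A": deque(), "B": deque()}
for dest in arrivals:
    voqs[dest].append(dest)

print(fifo_slot(fifo, busy))   # None: the packet for A blocks three packets for B
print(voq_slot(voqs, busy))    # B: traffic for the free port moves immediately
```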

Coordinating which packets cross the fabric, and when, is handled by a scheduler that runs once per fabric time slot, which at high line rates can mean millions of times per second. Each run solves a matching problem (connect input queues to output ports in a way that maximizes throughput) under tight time constraints. The algorithms used here, iSLIP being one of the well-known ones developed at Stanford, are designed to find good matches in a fixed number of rounds rather than requiring an optimal but computationally expensive solution.
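A single request-grant-accept round in the style of iSLIP can be sketched as follows. This is a simplified illustration under stated assumptions: ports are numbered from 0, `requests[i]` is the set of outputs for which input `i` has a queued packet, only one iteration is run per slot, and the function name is hypothetical. Production schedulers run several iterations and are implemented in hardware.

```python
def islip_iteration(requests, grant_ptr, accept_ptr):
    """One request-grant-accept round; returns {input: output} matches.

    grant_ptr[o] and accept_ptr[i] are round-robin pointers, updated in place
    only when a grant is accepted.
    """
    n = len(requests)

    # Grant phase: each output grants to the first requesting input
    # at or after its round-robin pointer.
    grants = {}                       # input -> list of outputs that granted to it
    for out in range(n):
        for k in range(n):
            inp = (grant_ptr[out] + k) % n
            if out in requests[inp]:
                grants.setdefault(inp, []).append(out)
                break

    # Accept phase: each input accepts the first granting output
    # at or after its own round-robin pointer.
    matches = {}
    for inp, granted in grants.items():
        for k in range(n):
            out = (accept_ptr[inp] + k) % n
            if out in granted:
                matches[inp] = out
                # Move both pointers one past the matched port.
                grant_ptr[out] = (inp + 1) % n
                accept_ptr[inp] = (out + 1) % n
                break
    return matches

requests = [{0, 1}, {0}, {2}]         # input 0 wants outputs 0 and 1, and so on
grant_ptr = [0, 0, 0]
accept_ptr = [0, 0, 0]

print(islip_iteration(requests, grant_ptr, accept_ptr))  # {0: 0, 2: 2}
print(islip_iteration(requests, grant_ptr, accept_ptr))  # {1: 0, 0: 1, 2: 2}
```

In the first slot, outputs 0 and 1 both grant to input 0, so output 1 goes unused. Because output 0’s pointer then advances past input 0, the second slot finds a full three-way match. That pointer desynchronization is the core idea of the round-robin approach.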

What Happens When Things Go Truly Wrong

All of this machinery works well under moderate congestion. Under severe congestion, routers make harder choices.

Bufferbloat became a recognized problem in the early 2010s when researchers noticed that many consumer routers and home internet equipment had very large buffers. Large buffers sound like a good thing (fewer drops) but they cause a different pathology: packets queue for hundreds of milliseconds before being transmitted or dropped. From the application’s perspective, the connection hasn’t failed, it’s just unbearably slow. The buffer is full of packets that will be delivered eventually, but so late that they’re useless for anything latency-sensitive. A video call with 400ms of one-way latency is not a video call.
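The arithmetic behind those delays is simple: a full buffer takes its size divided by the link rate to drain. The buffer size and link speeds below are assumed values chosen for illustration, not measurements of any particular device.

```python
def drain_time_ms(buffer_bytes, link_bits_per_sec):
    """Worst-case queuing delay: time to transmit a completely full buffer."""
    return buffer_bytes * 8 / link_bits_per_sec * 1000

buffer_bytes = 256 * 1024            # hypothetical 256 KiB buffer

print(round(drain_time_ms(buffer_bytes, 5_000_000)))          # 419 (ms at 5 Mbit/s)
print(round(drain_time_ms(buffer_bytes, 1_000_000_000), 1))   # 2.1 (ms at 1 Gbit/s)
```

The same buffer that is harmless on a gigabit link adds roughly 400 ms on a slow uplink, which is why the problem surfaced in home equipment first.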

The response to bufferbloat was a new generation of AQM (Active Queue Management) algorithms, with CoDel (Controlled Delay) being particularly influential. Unlike WRED, CoDel doesn’t manage queue length directly. It manages queue delay, and starts dropping packets only when the sojourn time (how long a packet spends in the queue) stays above a target, 5 milliseconds by default, for a full measurement interval, 100 milliseconds by default. This keeps the queue responsive without shrinking buffers so aggressively that bursts cause unnecessary drops.
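The delay-based decision can be sketched as a small state machine consulted each time a packet is dequeued. This is a deliberately simplified illustration, not the reference algorithm: it keeps the 5 ms target, the 100 ms interval, and the rule that drops speed up with the square root of the drop count, but leaves out details such as the small-queue exemption and the carry-over of the drop count between dropping episodes. The class and method names are hypothetical.

```python
import math

TARGET_MS = 5       # acceptable standing queue delay
INTERVAL_MS = 100   # how long delay must stay above target before dropping

class SimpleCoDel:
    """Simplified CoDel-style drop decision, called once per dequeued packet."""

    def __init__(self):
        self.deadline = None      # time by which delay must recover to avoid drops
        self.dropping = False
        self.count = 0            # drops in the current dropping episode
        self.drop_next = 0.0

    def should_drop(self, sojourn_ms, now_ms):
        if sojourn_ms < TARGET_MS:
            # Delay is acceptable again: leave the dropping state.
            self.deadline = None
            self.dropping = False
            return False
        if self.deadline is None:
            # First packet above target: start the clock, do not drop yet.
            self.deadline = now_ms + INTERVAL_MS
            return False
        if not self.dropping:
            if now_ms >= self.deadline:
                # Delay stayed high for a whole interval: start dropping.
                self.dropping = True
                self.count = 1
                self.drop_next = now_ms + INTERVAL_MS / math.sqrt(self.count)
                return True
            return False
        if now_ms >= self.drop_next:
            # Still too slow: drop again, with a shorter gap each time.
            self.count += 1
            self.drop_next += INTERVAL_MS / math.sqrt(self.count)
            return True
        return False

codel = SimpleCoDel()
decisions = [codel.should_drop(20, t) for t in (0, 50, 100, 150, 200, 250)]
print(decisions)   # [False, False, True, False, True, False]
```

With a constant 20 ms sojourn time, nothing is dropped for the first 100 ms, and after that the drops arrive at gradually shrinking intervals until the delay falls back under the target.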

The Subtle Role of Traffic Shaping

Routers don’t just react to congestion passively. At network edges, where a customer’s traffic meets a carrier’s backbone, routers actively shape traffic to enforce rate limits and prioritization policies.

A token bucket is the canonical mechanism. The router maintains a conceptual bucket that fills with tokens at a defined rate (say, one token per byte of allowed throughput, added continuously at the contracted rate) up to a fixed capacity. Each packet costs tokens proportional to its size. If the bucket has enough tokens, the packet goes through immediately. If not, it either waits (shaping) or gets dropped (policing). This allows bursting up to the bucket size while enforcing a long-run average rate.
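A minimal policer built on this idea might look like the following. The sketch assumes one token per byte, a bucket that starts full, and a caller that supplies the current time in seconds; the class name and the rate and burst values are illustrative.

```python
class TokenBucket:
    """Token-bucket policer: one token per byte, refilled at a fixed rate."""

    def __init__(self, rate_bytes_per_sec, burst_bytes):
        self.rate = rate_bytes_per_sec
        self.capacity = burst_bytes
        self.tokens = burst_bytes     # assume the bucket starts full
        self.last = 0.0               # time of the previous update, in seconds

    def allow(self, packet_bytes, now):
        """Return True if the packet conforms; False means drop (policing)."""
        elapsed = now - self.last
        self.last = now
        # Refill for the elapsed time, never beyond the bucket capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= packet_bytes:
            self.tokens -= packet_bytes
            return True
        return False

bucket = TokenBucket(rate_bytes_per_sec=1000, burst_bytes=1500)
print(bucket.allow(1500, now=0.0))   # True: the initial burst is allowed
print(bucket.allow(1500, now=0.5))   # False: only 500 tokens have accumulated
print(bucket.allow(1500, now=1.5))   # True: the bucket has refilled to 1500
```

A shaper would use the same accounting but hold the non-conforming packet until enough tokens arrive instead of returning False.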

QoS (Quality of Service) policies layer on top: different traffic classes get different queues with different scheduling priorities. A well-configured network edge router might run five or six queues per interface, with strict priority for voice traffic and weighted fair queuing among the rest.
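The layering of strict priority over weighted sharing can be sketched like this. Note the substitution: the sketch uses a packet-count weighted round robin as a simple stand-in for weighted fair queuing, which accounts for packet sizes and is more involved. The class names and weights are hypothetical, and weights are assumed to be positive integers.

```python
from collections import deque

class EdgeScheduler:
    """Strict priority for voice, weighted round robin for everything else."""

    def __init__(self, weights):
        self.priority = deque()                        # voice queue
        self.weights = dict(weights)                   # packets per round, per class
        self.credits = dict(weights)
        self.queues = {name: deque() for name in weights}

    def enqueue(self, traffic_class, packet):
        if traffic_class == "voice":
            self.priority.append(packet)
        else:
            self.queues[traffic_class].append(packet)

    def dequeue(self):
        if self.priority:
            return self.priority.popleft()             # voice always goes first
        if not any(self.queues.values()):
            return None
        while True:
            for name, queue in self.queues.items():
                if queue and self.credits[name] > 0:
                    self.credits[name] -= 1
                    return queue.popleft()
            # Every backlogged class has used its share: start a new round.
            self.credits = dict(self.weights)

sched = EdgeScheduler({"video": 2, "bulk": 1})
for i in range(4):
    sched.enqueue("video", f"video{i}")
for i in range(2):
    sched.enqueue("bulk", f"bulk{i}")
sched.enqueue("voice", "voice0")

print([sched.dequeue() for _ in range(7)])
# ['voice0', 'video0', 'video1', 'bulk0', 'video2', 'video3', 'bulk1']
```

The voice packet jumps the line even though it arrived last, and the remaining classes share the link two to one.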

What This Means

When two packets arrive at the same router simultaneously, the actual answer to “what happens” is: one gets forwarded, and one waits in a queue, where it will be forwarded, dropped, or marked depending on queue depth, traffic priority, and the active queue management policy in effect. The decision about which packet waits and for how long is made by hardware and software that have been refined over decades of research.

The interesting insight is that network performance isn’t primarily about routing table lookup speed or even raw bandwidth. It’s about queue management. The difference between a network that feels fast under load and one that degrades badly comes down to how routers handle the moments when demand exceeds supply, which is to say, exactly the moments that matter most.

Every abstraction you build on top of a network, whether it’s a distributed database trying to maintain consistency, or microservices coordinating across availability zones, sits on top of this queue management layer. Understanding it doesn’t just satisfy curiosity; it changes how you think about latency budgets, retry logic, and why some network paths behave differently under load than their bandwidth numbers would suggest.