The Simple Version

Cloud tiers aren’t priced by value delivered. They’re priced to make you feel like you’re being prudent. The second-cheapest option often carries the worst ratio of cost to capability, and the companies that discover this tend to do so on their infrastructure bill.

Why the Middle Feels Safe

Picking the second-cheapest anything is a deeply human heuristic. Psychologists call it “compromise effect”: when given three options, people disproportionately choose the middle one because it signals neither recklessness nor recklessness-in-reverse. Wine lists are designed around this. So are cloud pricing pages.

The problem is that cloud services aren’t bottles of Bordeaux. Their tiers aren’t arranged by quality on a linear scale. They’re arranged to create maximum purchase regret at specific price points, usually the ones occupied by engineers who are being careful with company money.

A startup picking AWS’s mid-tier compute to avoid looking extravagant isn’t being fiscally conservative. It’s paying near-premium prices for infrastructure that will require significantly more engineering time to manage, scale, and debug around its limitations.

Where the Hidden Costs Actually Live

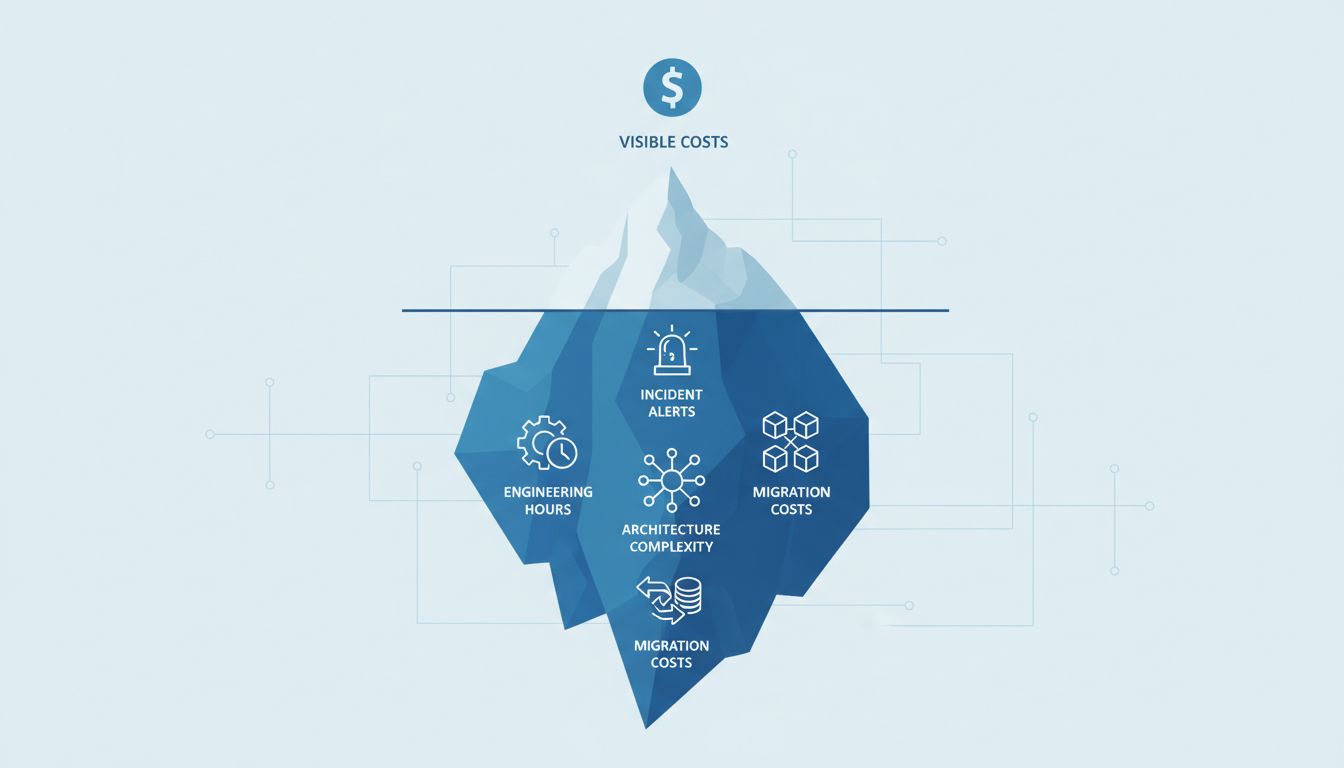

Cloud pricing has three components that most people mentally account for, and several they don’t.

The visible cost is the per-hour or per-request rate on the pricing page. This is what engineers compare when evaluating tiers. It’s also the least important number in the total cost of ownership calculation.

The invisible costs are operational. Consider storage tiers. AWS S3’s “Infrequent Access” tier costs less per gigabyte stored than Standard, but charges per retrieval. The pricing page makes this clear, yet many teams underestimate their access frequency and end up paying more than Standard would have cost. The same pattern applies to database instance classes, where a smaller instance might handle average load fine but require read replicas or caching layers that a larger instance wouldn’t, and those additions carry their own per-hour rates and operational complexity.

The deepest cost is engineering time. A database instance that runs hot at 80% CPU during business hours doesn’t just risk slowdowns. It requires someone to monitor it, tune queries against it, and eventually migrate off it. That work isn’t on the pricing page. The premium tier that idles at 40% CPU costs more per month and substantially less per quarter once you factor in the engineer-hours not spent managing it.

This is the core of the problem with mid-tier choices: they’re often at exactly the threshold where you’re paying for capability you almost have, not capability you actually have.

The Architecture Tax

There’s a less-discussed version of this problem that shows up at the systems level rather than the individual-service level.

When a team chooses infrastructure that’s slightly underpowered, they compensate architecturally. They add caches in front of databases that would handle load fine if the instance were larger. They spin up separate read replicas that a higher-tier managed database would handle internally. They write workarounds for rate limits that exist in lower-tier API plans but not premium ones.

Each of these compensations is itself a cloud resource with its own cost. More importantly, each one is a surface area for failure. Caches introduce cache invalidation bugs. Read replicas introduce replication lag. Workarounds accumulate. The engineers who wrote them leave. The documentation doesn’t keep up. What started as frugality quietly becomes the software you never sunset that becomes your biggest bill.

This architecture tax compounds. A team that chose a mid-tier managed Kubernetes service instead of a premium one may spend months building tooling to compensate for missing features, only to migrate up anyway when the team grows and operational pain becomes untenable. The migration itself is expensive. The months of compensatory tooling were sunk costs.

When the Premium Tier Actually Costs Less

The math tips clearly toward premium in a few identifiable situations.

First, when the service is on the critical path. A mid-tier database behind your core product is never really cheaper than a premium one, because the cost of a degraded user experience, a slow query that cascades, or an outage is orders of magnitude higher than the monthly price difference. Premium infrastructure on commodity workloads is wasteful. Premium infrastructure on core user flows is usually correct.

Second, when the team is small. A five-person engineering team spending two hours a week managing infrastructure limitations is losing 10% of its engineering capacity to a problem that a larger instance would eliminate for a few hundred dollars a month. The calculus changes dramatically at scale, which is why larger organizations sometimes legitimately optimize back down to cheaper tiers with dedicated ops capacity. But for small teams, building things slowly and deliberately on solid infrastructure almost always beats building fast on infrastructure you’ll outgrow.

Third, when the capability gap is qualitative, not quantitative. Many cloud services have tiers where the jump from second-to-top to top includes features that are fundamentally different in kind: automated backups with point-in-time recovery versus no backups, SLA-backed uptime versus best-effort, dedicated compute versus shared burst capacity. These aren’t just more of the same thing. They’re different products.

How to Think About It

The useful reframe is to stop evaluating cloud tiers by their sticker price and start evaluating them by what they shift onto your engineering team.

Every limitation in a cheaper tier is either something your users will feel or something your engineers will carry. The question isn’t “can we afford the premium tier?” It’s “what is the cheaper tier actually costing us in engineering time, incident risk, and architectural debt, and does that exceed the price difference?”

In most cases, when teams do that math honestly, the premium tier wins. Not always. Not on every service. But on the ones that matter most, the second-cheapest option is frequently the most expensive choice you’ll make.