Most people who struggle with AI outputs are solving the wrong problem. They tweak their phrasing, add more detail, try a different tone, and cycle through variations like they’re adjusting a search query. Sometimes that works. More often, it’s a symptom of a deeper issue: they don’t have an accurate picture of what the model actually is, so they can’t reason clearly about why it’s failing or how to fix it.

Get the mental model right, and prompt improvement becomes intuitive rather than trial-and-error.

The Three Wrong Models Everyone Starts With

When people first use a capable language model, they tend to slot it into one of three familiar categories. Each one is wrong in a specific, predictable way.

The first is the search engine model. In this frame, a good prompt is a good query: precise, keyword-rich, specific. This produces prompts that are technically detailed but contextually thin. You’ll get outputs that answer the literal question while missing everything that made the question interesting. Search engines retrieve. Models generate. The difference matters enormously.

The second is the human expert model. Here, the model is treated like a knowledgeable colleague who just needs clear instructions. This feels intuitive because the model communicates in natural language and responds to social cues. But a human expert has genuine beliefs, real-world experience, and an actual understanding of what they don’t know. The model has none of these things. It produces confident-sounding text by predicting what plausible responses look like, not by reasoning from first principles. When you treat it like a human expert, you stop applying appropriate skepticism to its outputs.

The third is the magic oracle model. This is the frame where you write a vague, ambitious prompt and expect the model to somehow know what you actually want. “Write me a great marketing email” is this failure mode in its purest form. Oracles don’t exist. The model will produce something that satisfies the statistical shape of a marketing email, and it will probably be mediocre, because mediocre is the mean.

What the Model Actually Is

A language model is, at its core, a very sophisticated next-token predictor trained on a large corpus of text. That description is reductive but useful, because it tells you something actionable: the model is shaped by the distribution of text it was trained on, and it will naturally produce outputs that look like the center of that distribution.

This has real consequences. Ask for a “professional email” and you’ll get something that looks like the average of thousands of professional emails, which is to say something flat and forgettable. Ask for an essay and you’ll get something that resembles what essays tend to look like, complete with their most common structural tics and hedges.
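To make the "center of the distribution" idea concrete, here is a deliberately tiny sketch. It assumes a toy bigram counter over whole words, which is far simpler than a real language model (those are neural networks over subword tokens). The corpus and the function names `train_bigram` and `most_likely_next` are invented for illustration. The point is only that a predictor trained on text falls back on whatever continuation was most common.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count, for each word, which words followed it in the corpus."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        tokens = sentence.split()
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts

def most_likely_next(counts, token):
    """Return the most frequent follower of `token`, or None if unseen."""
    followers = counts.get(token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Hypothetical mini-corpus of email openers.
corpus = [
    "i hope this email finds you well",
    "i hope this email finds you well",
    "i hope this message finds you well",
    "i hope this note reaches you soon",
]
model = train_bigram(corpus)

# The prediction after "this" is simply the most common continuation.
next_word = most_likely_next(model, "this")
```

With this corpus, the predictor picks the stock phrasing because it is the majority pattern. Nothing in the toy prefers the less common openers unless the context changes.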

The better mental model is something like: a very well-read collaborator who, by default, has no persistent memory, no ability to verify facts, no genuine preferences, and a strong tendency to tell you what you seem to want to hear. That last part is especially important. Most chat models are tuned partly on human feedback, which can create a bias toward responses that feel satisfying rather than responses that are true or useful. The model may agree with incorrect premises you embed in your prompts. It can produce confident nonsense when you ask about things outside its training data. It may validate bad ideas if you seem attached to them.

Knowing this changes how you write prompts. You stop trusting its confidence. You start giving it explicit permission to push back or say it doesn’t know. You treat its first response as a draft, not a verdict.
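One way to build the "permission to push back" habit into a workflow is to append a standing clause to every task. The sketch below assumes nothing beyond plain string handling. The wording of `UNCERTAINTY_CLAUSE` and the helper name `with_pushback_permission` are illustrative choices, not a tested formula.

```python
# Hypothetical wording; adjust to your own task and tone.
UNCERTAINTY_CLAUSE = (
    "If any premise in this request looks wrong, say so before answering. "
    "If you do not know something or cannot verify it, say that plainly "
    "instead of guessing."
)

def with_pushback_permission(task: str) -> str:
    """Return the task text followed by an explicit permission to disagree."""
    return f"{task.strip()}\n\n{UNCERTAINTY_CLAUSE}"

prompt = with_pushback_permission(
    "Explain why our churn rate doubled after the pricing change."
)
```

The example task embeds a premise (that the pricing change caused the churn). The added clause gives the model room to question that premise rather than build on it.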

Context Is Not Just Background, It’s Constraint

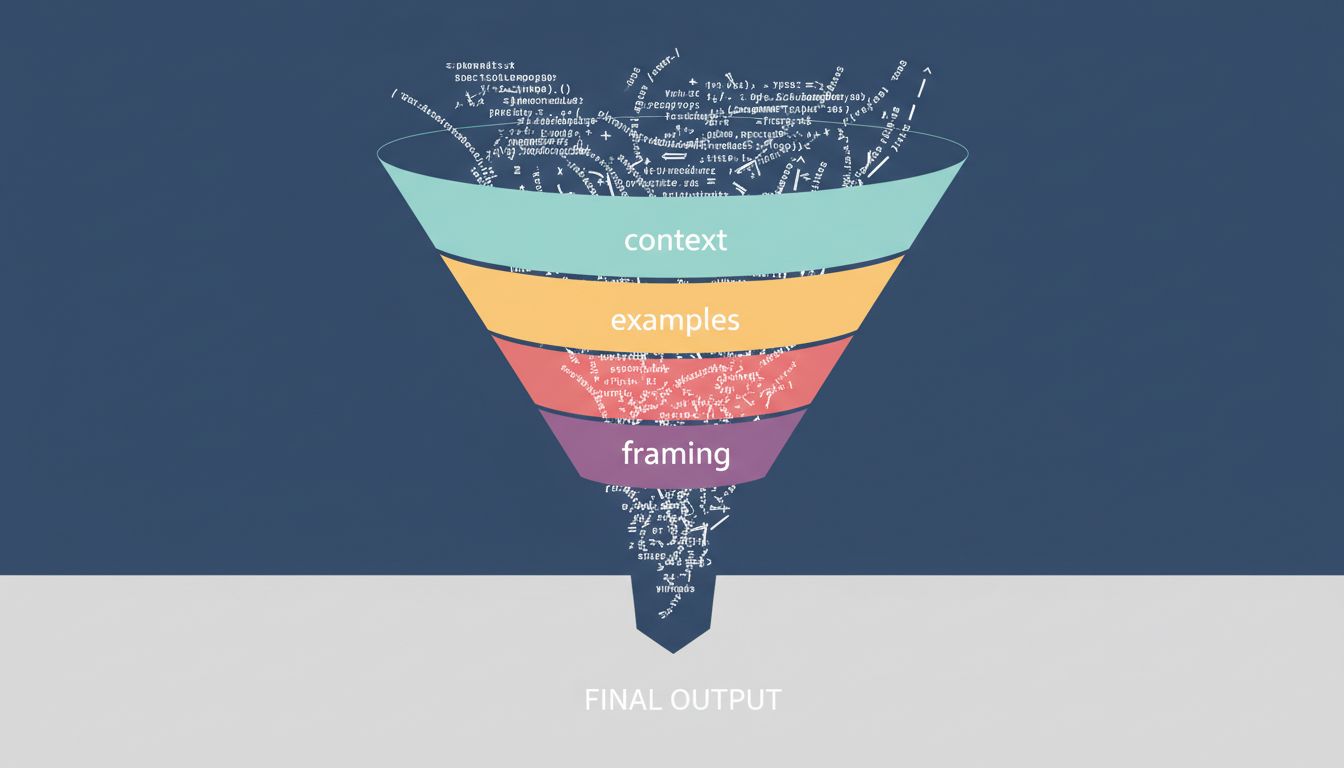

One of the highest-leverage things you can do is understand that context isn’t decoration. It’s the mechanism by which you shape the probability space the model is sampling from.

When you write a prompt, you’re not instructing the model. You’re establishing a context that makes certain kinds of responses more or less likely. A prompt that starts with “You are a skeptical editor reviewing a first draft” produces meaningfully different outputs than one that starts with “You are a helpful writing assistant,” even if the task description is identical. The framing shifts what the model treats as an appropriate response.
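The framing comparison can be sketched as two requests that share a task and differ only in the role. This assumes the system/user message layout that many chat-style APIs use. The exact field names vary by provider, and `build_messages` is a hypothetical helper rather than any library's function.

```python
def build_messages(role_description: str, task: str) -> list[dict[str, str]]:
    """Pair a role framing with a task in a generic chat-message layout."""
    return [
        {"role": "system", "content": role_description},
        {"role": "user", "content": task},
    ]

task = "Give feedback on the opening paragraph of my product announcement."

# Same task, two different framings.
editor = build_messages(
    "You are a skeptical editor reviewing a first draft.", task
)
assistant = build_messages(
    "You are a helpful writing assistant.", task
)
```

Sending both to the same model and reading the replies side by side is a quick way to see how much the framing alone changes the response.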

This is why specificity works so well, and why it works for reasons that have nothing to do with being precise for the model’s sake. Specific context narrows the distribution. “Write a subject line for a re-engagement email targeting lapsed SaaS users who last logged in 60 days ago” constrains the output space far more than “write a re-engagement email subject line.” You’re not giving instructions so much as you’re creating a situation in which only certain kinds of responses make sense.

The same principle applies to examples. Showing the model the format, tone, or style you want is usually more reliable than describing it. This is why few-shot prompting (giving the model two or three examples before the actual task) often outperforms zero-shot prompting for complex or stylistically specific tasks. You’re not teaching the model anything. You’re steering its predictions.
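A few-shot prompt is mostly careful string assembly. The sketch below assumes a simple "Input:" / "Output:" layout, which is one common convention among many. The function name `few_shot_prompt` and the example subject lines are made up for illustration.

```python
def few_shot_prompt(examples, new_input, instruction=""):
    """Build a prompt from (input, output) pairs, ending on an open slot."""
    parts = []
    if instruction:
        parts.append(instruction)
    for example_input, example_output in examples:
        parts.append(f"Input: {example_input}\nOutput: {example_output}")
    # Leave the final output blank so the model continues the pattern.
    parts.append(f"Input: {new_input}\nOutput:")
    return "\n\n".join(parts)

# Hypothetical examples showing the tone we want.
examples = [
    ("Lapsed user, last login 60 days ago", "Your dashboard misses you"),
    ("Trial ended, never upgraded", "Still curious? Your trial data is saved"),
]

prompt = few_shot_prompt(
    examples,
    "Paid user, no logins this quarter",
    instruction="Write a short re-engagement email subject line.",
)
```

The examples carry the style information, so the instruction can stay short.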

Iteration Is a Skill, Not a Workaround

A lot of people treat multiple rounds of prompting as a failure state. If they needed to revise, they assume their first prompt was bad. This is backwards.

For any task with genuine complexity, iterating is the correct strategy. The first response is information. It tells you where the model’s interpretation diverged from yours, what context you forgot to provide, what constraints you didn’t make explicit. A bad first output is a diagnosis, not a rejection.

The useful habit is to treat the first response as a probe. Read it not just for quality but for what it reveals about what the model understood your request to be. Then respond to that understanding directly. “You interpreted this as X, but I actually need Y” is more useful than rewriting your original prompt from scratch.

This also means that prompt engineering is closer to regular engineering than most people expect. You’re iterating toward a specification, debugging mismatches between intent and output, and building up context across turns the same way you’d build up state in a program. The underlying discipline is the same.
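The "context as state" comparison can be made literal. The sketch below assumes the conversation is kept as a list of role-tagged messages, as many chat interfaces do. `add_correction` is a hypothetical helper, and the reply text is invented, not real model output.

```python
def add_correction(history, model_reply, understood_as, actually_need):
    """Return a new history with the reply and a targeted correction added."""
    correction = (
        f"You interpreted this as {understood_as}, "
        f"but I actually need {actually_need}."
    )
    # Build a new list so the earlier state is left untouched.
    return history + [
        {"role": "assistant", "content": model_reply},
        {"role": "user", "content": correction},
    ]

history = [
    {"role": "user", "content": "Summarize this report for the board."},
]

# Invented reply standing in for a first response that missed the mark.
first_reply = "Here is a detailed ten-paragraph summary of the report."

history_v2 = add_correction(
    history,
    first_reply,
    understood_as="a request for a full recap",
    actually_need="three bullet points a board member can read in a minute",
)
```

Keeping the first reply in the history matters: the correction refers to it, so the next response starts from the diagnosed mismatch instead of from zero.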

Fix the Mental Model First, Then the Prompt

The practical takeaway comes in a specific order. Before you adjust your prompt, ask which mental model you’re operating from. Are you treating the output like a search result you just need to refine? Are you trusting confidence that the model hasn’t earned? Are you expecting it to infer what you want from thin context?

Give the model a role that fits your actual need, not a generic helpful assistant. Give it explicit permission to be uncertain or to ask clarifying questions. Provide examples of what good output looks like. Build context that makes the right kind of response the most natural one.

None of this is complicated once you stop thinking about the model as something it isn’t. The prompts that work aren’t the ones written by people who found the right magic words. They’re written by people who have an accurate enough picture of how the system works to reason about it clearly. That’s a skill you can develop, and it transfers to every model you use, not just the one you’re working with today.