Most developers encounter embeddings as a means to an end. You convert text to vectors, stuff them in a vector database, retrieve the relevant chunks, and hand them to a language model. Step two of five. Move on. But that mental model undersells what’s actually happening, and it leads to systems that are harder to debug, easier to fool, and more brittle than they need to be.

Here are six places embeddings are doing real work in your pipeline that you might be treating as incidental.

1. They’re Making Semantic Decisions Before Your LLM Sees Anything

When you retrieve documents using cosine similarity between embeddings, you’re not doing a neutral lookup. You’re running a classifier. The embedding model has already encoded a theory of what concepts are related, which words are synonymous, and which topics cluster together. That theory was baked in during training on whatever corpus the embedding model saw.

This means that if your embedding model was trained primarily on general web text, it may treat “heart failure” and “cardiac arrest” as near-synonyms while treating “Python” (the language) and “Python” (the snake) as neighbors depending on context. Your retrieval results are a direct function of those learned relationships. If the embedding model doesn’t share your domain’s vocabulary, the retrieval step will silently return plausible-but-wrong chunks, and your LLM will confidently answer based on them. How LLMs handle context they were never trained on covers what happens downstream, but the damage is often done before the model even enters the picture.

2. Dimensionality Is a Design Choice, Not a Default

Embedding vectors have dimensionality, typically 768, 1536, or 3072 floats per vector depending on the model. Many teams use whatever the API returns without thinking about what that number costs them at scale.

Consider a modest knowledge base: one million document chunks, each embedded as a 1536-dimensional float32 vector. That’s roughly 6GB of raw vectors before any indexing overhead. Approximate nearest-neighbor indexes like HNSW (Hierarchical Navigable Small World graphs) add memory on top. For many teams this is manageable. For teams doing real-time retrieval with latency constraints, it becomes a genuine systems problem. Matryoshka Representation Learning, which models like OpenAI’s text-embedding-3 series use, lets you truncate vectors to smaller dimensions with graceful quality degradation. Using 256 dimensions instead of 1536 can cut storage and query time dramatically with a loss in retrieval quality that may be acceptable for your use case. The point is you should measure that tradeoff, not ignore it.

3. Your Chunking Strategy Is Part of the Embedding

Embeddings encode meaning at the chunk level. A 500-token chunk and a 100-token chunk from the same document will produce different vectors and retrieve under different query conditions. This is obvious once stated but surprisingly under-examined in practice.

The pathology looks like this: a user asks a narrow, specific question. Your retrieval system finds chunks that are topically adjacent but too broad to actually answer the question, because each chunk was built around a section heading rather than a specific claim. The embedding for that chunk represents the section’s general topic, not its specific content. Smaller, more focused chunks retrieve better for specific queries. Larger chunks give the LLM more context when they do retrieve. There’s no universally correct chunk size, which is exactly why it needs to be a deliberate decision with actual evaluation behind it, not a default pulled from a tutorial.

4. Embedding Distance Doesn’t Mean What You Think It Means

Cosine similarity between two vectors is a number between -1 and 1. A score of 0.85 feels high. A score of 0.60 feels low. But these numbers are model-specific and not inherently meaningful across queries or models.

In practice, many embedding models produce scores clustered in a narrow range (say, 0.70 to 0.95) for any plausible query-document pair, because the vectors are all pointing roughly in the same high-dimensional direction. A similarity score of 0.72 might mean “weakly related” for one model and “highly relevant” for another. Using a fixed similarity threshold to filter retrieval results, without calibrating that threshold against your specific model and dataset, is a common source of retrieval failures. Teams that do calibrate this, by sampling query-document pairs and labeling relevance manually, consistently get better precision out of the same embedding model.

5. They’re Load-Bearing in Classification and Routing Tasks

Beyond RAG (retrieval-augmented generation), embeddings frequently handle routing decisions in production systems. Which agent should handle this request? Is this a billing question or a technical support question? Should this input be flagged for review? These are classification problems, and many teams solve them by training a lightweight classifier on top of frozen embeddings.

This approach is genuinely effective and underused. A small logistic regression or a k-nearest-neighbor classifier trained on a few hundred labeled examples, using embeddings as features, can route queries with high accuracy and run in milliseconds. The alternative, asking an LLM to classify every incoming request, costs money and adds latency. The embedding-based classifier gets you 80% of the way there at a fraction of the cost. The catch: the embedding model you choose for this task matters as much as the classifier. If the embeddings don’t separate your categories in vector space, no classifier can fix that.

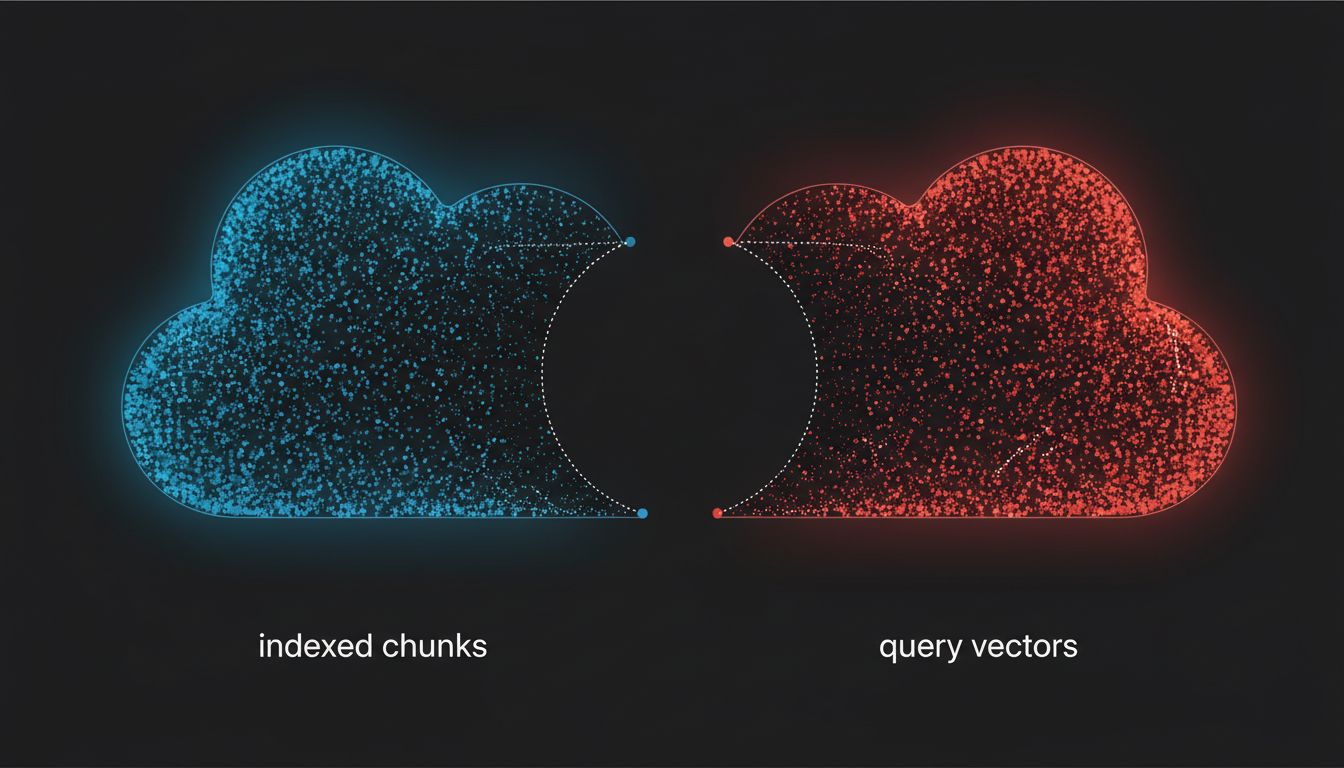

6. Embedding Models Go Stale and Nobody Notices

Language models get updated and teams notice, because the outputs change visibly. Embedding models get updated and teams often don’t notice until retrieval quality degrades, because the failure is silent. Your vectors are still numbers. The similarity scores are still numbers. The LLM still produces fluent answers. But the relationship between your indexed vectors and the query vectors has shifted, because you re-embedded queries with the new model while your stored chunks were embedded with the old one.

This is not hypothetical. OpenAI deprecated earlier embedding models and encouraged migration to newer versions. Teams that updated their query embedding without re-embedding their stored knowledge base introduced a subtle mismatch that degraded retrieval quality without triggering any obvious error. The fix is straightforward: version your embeddings, store which model produced them, and when you update the model, re-index everything. Treating embeddings as immutable artifacts rather than model-dependent outputs is the root of this problem.

The broader point is this: embeddings are not a commodity preprocessing step. They encode a worldview about language, similarity, and meaning. Every place they appear in your pipeline, they’re making decisions. The teams that get the most out of their AI systems are the ones who treat those decisions as worth examining.