There is a version of prompt engineering that is genuinely hard and technically interesting. There is also a version being sold to non-technical managers as a distinct discipline requiring specialized hires, dedicated teams, and, in some cases, six-figure salaries for people whose primary credential is being good at asking ChatGPT questions. These two versions are not the same thing, and conflating them has caused real confusion about what’s actually happening when you tweak a prompt.

What You’re Actually Doing When You Prompt

A language model is, at its core, a function that maps an input sequence to a probability distribution over possible next tokens. Once training ends, the model’s weights are fixed. When you write a prompt, you are not programming the model. You are choosing a point in an enormously high-dimensional input space and hoping it falls in a region where the model’s learned behavior is useful to you.
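To make “a probability distribution over possible next tokens” concrete, here is a toy sketch of the final step. The model emits a raw score (a logit) for every token in its vocabulary, and a softmax turns those scores into probabilities. The three-token vocabulary and the logit values are invented for illustration. Real vocabularies run to tens of thousands of tokens, and the logits come out of the frozen network rather than a hand-written dict. Your prompt only changes which logits arrive. It never changes the function that produces them.

```python
import math


def next_token_distribution(logits: dict[str, float], temperature: float = 1.0) -> dict[str, float]:
    """Softmax over per-token scores: the last step of next-token prediction."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = {tok: score / temperature for tok, score in logits.items()}
    # Subtracting the largest score avoids overflow and leaves the result unchanged.
    top = max(scaled.values())
    weights = {tok: math.exp(s - top) for tok, s in scaled.items()}
    total = sum(weights.values())
    return {tok: w / total for tok, w in weights.items()}


# Hypothetical logits a fixed model might produce after "The capital of France is".
logits = {"Paris": 6.0, "Lyon": 2.5, "London": 1.0}
probs = next_token_distribution(logits)
for token, p in sorted(probs.items(), key=lambda kv: -kv[1]):
    print(f"{token}: {p:.3f}")
```

Lowering `temperature` sharpens the distribution toward the top token. That is an inference-time setting, separate from the prompt, but the same point holds: neither one touches the weights.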

This is structurally the same kind of black-box search that engineers run when they tune hyperparameters in any machine learning pipeline. You have a system whose internals you don’t change, you have knobs you can turn on the input side, and you iterate toward better outputs. The fact that the knobs are made of words instead of numbers doesn’t make the process categorically different. It makes the process more accessible to people without ML backgrounds, which is valuable, but it also makes it easier to dress up as something more novel than it is.

The “engineering” framing implies a level of systematic rigor that most prompt work doesn’t involve. Real hyperparameter tuning in ML has a defined search space, reproducible experiments, and measurable objectives. Most prompt engineering is closer to trial and error with a narrative applied after the fact: you try something, it works better, and you write it up as a technique.
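For contrast, here is roughly what the rigorous version of that loop looks like: an explicit search space over prompt variants, a fixed labeled eval set, and a single measurable objective. Everything here is hypothetical, including the ticket-classification task, the data, and `fake_model`, which is a deterministic stand-in for an LLM call so the sketch runs offline. With a real API you would swap in a client call and pin the model version and sampling settings, as far as the provider allows, so runs are reproducible.

```python
import itertools
from typing import Callable

# Hypothetical few-shot pool, kept separate from the eval set to avoid leakage.
FEW_SHOT_POOL = [
    ("My card was charged twice.", "billing"),
    ("The 2FA code keeps getting rejected.", "access"),
]

# Hypothetical labeled eval set: (ticket text, expected label).
EVAL_SET = [
    ("The invoice is 30 days overdue.", "billing"),
    ("I can't log in to my account.", "access"),
    ("Please cancel my subscription.", "billing"),
    ("The password reset email never arrived.", "access"),
]

# A defined search space: every knob, and every value it is allowed to take.
SEARCH_SPACE = {
    "instruction": [
        "Classify the support ticket.",
        "Classify the support ticket as 'billing' or 'access'.",
    ],
    "num_examples": [0, 2],
}


def build_prompt(instruction: str, num_examples: int, text: str) -> str:
    """Assemble one prompt variant from a point in the search space."""
    shots = [f"Ticket: {t}\nLabel: {y}" for t, y in FEW_SHOT_POOL[:num_examples]]
    return "\n\n".join([instruction, *shots, f"Ticket: {text}\nLabel:"])


def fake_model(prompt: str) -> str:
    """Deterministic stand-in for an LLM call. It only answers within the
    label set if the prompt names the labels or shows labeled examples."""
    if "'billing' or 'access'" not in prompt and "Label: " not in prompt:
        return "unknown"
    ticket = prompt.rsplit("Ticket: ", 1)[1].lower()
    return "access" if any(w in ticket for w in ("log in", "password", "2fa")) else "billing"


def accuracy(model: Callable[[str], str], config: dict) -> float:
    """The measurable objective: exact-match accuracy on the fixed eval set."""
    hits = sum(model(build_prompt(text=t, **config)) == y for t, y in EVAL_SET)
    return hits / len(EVAL_SET)


def grid_search(model: Callable[[str], str]) -> list[tuple[float, dict]]:
    """Score every configuration; return (score, config) pairs, best first."""
    keys = list(SEARCH_SPACE)
    results = [
        (accuracy(model, dict(zip(keys, values))), dict(zip(keys, values)))
        for values in itertools.product(*(SEARCH_SPACE[k] for k in keys))
    ]
    return sorted(results, key=lambda r: r[0], reverse=True)


for score, config in grid_search(fake_model):
    print(f"{score:.2f}  {config}")
```

The useful output is the whole ranked table, not just the winner. Here, naming the labels and showing examples each fix the failure independently, which is the kind of finding that ad hoc trial and error tends to miss.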

The Techniques Are Real. The Branding Is Inflated.

None of this means that craft and knowledge don’t matter when writing prompts. They do. Chain-of-thought prompting, which asks a model to reason step by step before giving a final answer, demonstrably improves performance on multi-step reasoning tasks such as arithmetic word problems, at least for sufficiently large models. Wei et al. documented this rigorously in a 2022 paper from Google. Few-shot examples, role framing, and explicit output formatting all have measurable effects on model behavior. These are real techniques worth knowing.
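A sketch of how those techniques combine in practice: role framing and an explicit output format in the system message, one worked example with its reasoning written out (the few-shot chain-of-thought pattern), and a parser that relies on the requested format. The messages use the role/content shape that many chat APIs accept, but no API is called here. The example problem and the `Answer:` convention are assumptions for illustration.

```python
from typing import Optional

# Role framing plus an explicit output format for the final answer.
SYSTEM = (
    "You are a careful math tutor. Reason step by step, then give the final "
    "answer on its own last line in the form 'Answer: <number>'."
)

# One hypothetical worked example; the written-out reasoning is the chain of thought.
FEW_SHOT = [
    (
        "Pens cost $2 for a pack of 3. How much do 12 pens cost?",
        "12 pens is 12 / 3 = 4 packs. Each pack costs $2, so 4 * 2 = 8.\nAnswer: 8",
    ),
]


def build_messages(question: str) -> list[dict[str, str]]:
    """Assemble the system prompt, few-shot demonstrations, then the real question."""
    messages = [{"role": "system", "content": SYSTEM}]
    for example_q, example_a in FEW_SHOT:
        messages.append({"role": "user", "content": example_q})
        messages.append({"role": "assistant", "content": example_a})
    messages.append({"role": "user", "content": question})
    return messages


def parse_answer(completion: str) -> Optional[str]:
    """Return the value after the last 'Answer:' line, or None if the format was ignored."""
    for line in reversed(completion.strip().splitlines()):
        if line.startswith("Answer:"):
            return line[len("Answer:"):].strip()
    return None


messages = build_messages("A train travels 60 km per hour for 2.5 hours. How far does it go?")
print(len(messages), messages[-1]["role"])
print(parse_answer("60 * 2.5 = 150.\nAnswer: 150"))
```

The parser is the part that tends to get skipped. Asking for a format only helps if you check it, and a `None` from `parse_answer` is a failure you can count.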

But here’s what’s true simultaneously: these techniques are highly model-specific, version-sensitive, and prone to breaking without warning when the underlying model changes. A prompt carefully tuned for GPT-4 may perform differently on GPT-4 Turbo, and differently again on GPT-4o, because each is a different model, and even a single model name can be repointed to a newer snapshot over time. Your prompt has an expiration date, and that date is set by someone else’s deployment schedule.

This is a meaningful constraint that the “prompt engineering as profession” framing tends to downplay. If your core expertise is knowing exactly how to phrase requests to a specific model version, that expertise has a shelf life measured in months. That’s not an engineering discipline. That’s a workflow skill, like knowing keyboard shortcuts for a specific version of Photoshop.

Where It Actually Gets Hard

There is a legitimate, technically demanding version of this work, and it lives at the intersection of model behavior, evaluation, and systems design. Writing prompts for a consumer chatbot is not that. Writing prompts that reliably extract structured data from unstructured legal documents, at scale, with measurable accuracy, and with failure modes you can detect and handle, is a different problem entirely.
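The “failure modes you can detect and handle” part is the easiest piece to show concretely. A minimal sketch, assuming the prompt asks the model to return a JSON object with four contract fields: every response is treated as untrusted input and checked before anything downstream sees it. The schema, field names, and `validate_extraction` are hypothetical; a production system would more likely lean on a schema library such as JSON Schema or Pydantic than on hand-rolled checks.

```python
import json
from dataclasses import dataclass, field
from datetime import date

# Hypothetical schema for a contract-extraction task: field name -> expected type.
SCHEMA = {"party_a": str, "party_b": str, "effective_date": str, "term_months": int}


@dataclass
class Extraction:
    data: dict = field(default_factory=dict)
    errors: list = field(default_factory=list)

    @property
    def ok(self) -> bool:
        return not self.errors


def validate_extraction(raw_output: str) -> Extraction:
    """Check a model's raw output against SCHEMA, collecting every problem found."""
    result = Extraction()
    try:
        parsed = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        result.errors.append(f"not valid JSON: {exc.msg}")
        return result
    if not isinstance(parsed, dict):
        result.errors.append("top-level JSON value is not an object")
        return result

    for name, expected in SCHEMA.items():
        if name not in parsed:
            result.errors.append(f"missing field: {name}")
        elif type(parsed[name]) is not expected:  # exact check, so True is not accepted as an int
            result.errors.append(f"wrong type for {name}: {type(parsed[name]).__name__}")

    # Semantic check on top of the type check: dates must be ISO 8601 (YYYY-MM-DD).
    if isinstance(parsed.get("effective_date"), str):
        try:
            date.fromisoformat(parsed["effective_date"])
        except ValueError:
            result.errors.append("effective_date is not an ISO date")

    # Fields nobody asked for are a cheap signal that the model improvised.
    extras = sorted(set(parsed) - set(SCHEMA))
    if extras:
        result.errors.append(f"unexpected fields: {extras}")

    if result.ok:
        result.data = parsed
    return result


for raw in (
    '{"party_a": "Acme Corp", "party_b": "Globex LLC", "effective_date": "2024-03-01", "term_months": 24}',
    "Sure! Here is the JSON you asked for.",
):
    r = validate_extraction(raw)
    print("OK" if r.ok else f"REVIEW: {r.errors}")
```

The design choice that matters is that a failure produces a list of specific errors instead of an exception or a silently half-filled record, so bad outputs can be routed to review and counted.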

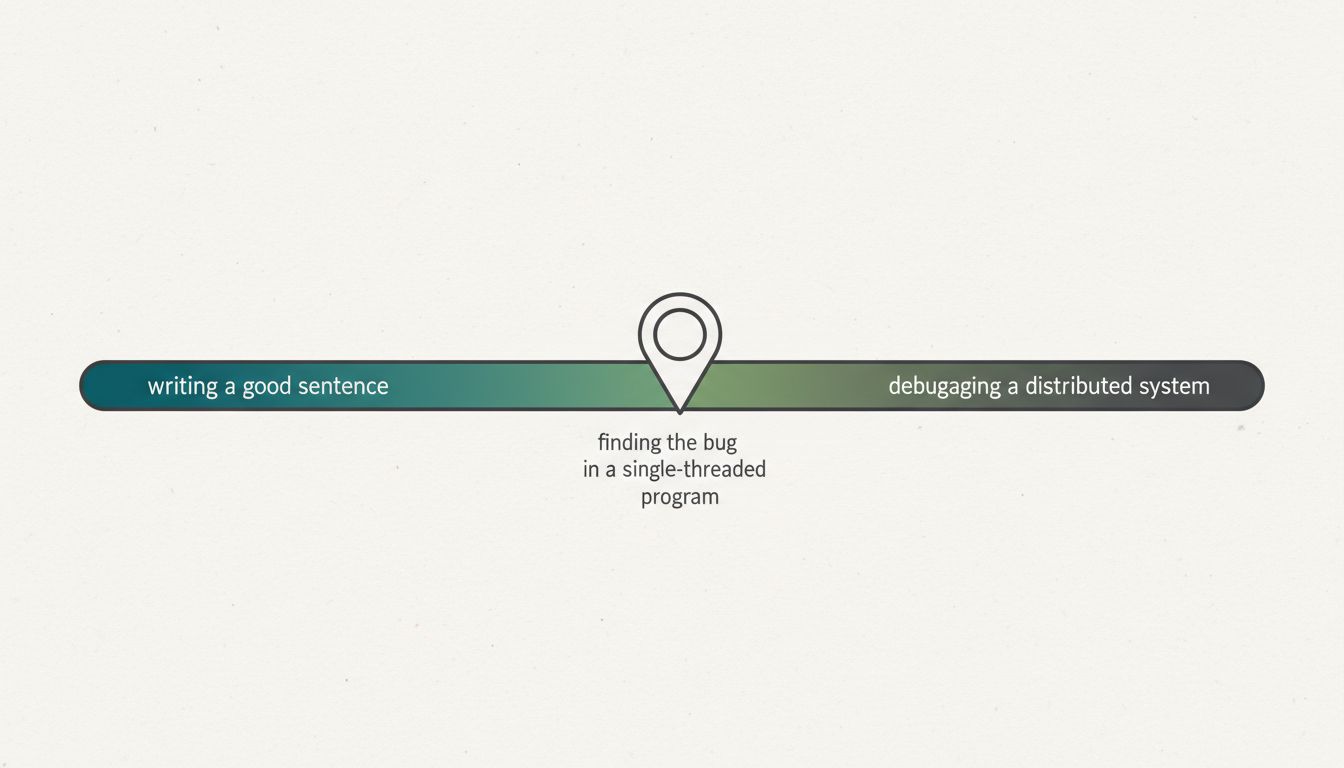

That version of the work requires you to understand how the model fails, not just how it succeeds. It requires building evaluation sets that test for what you actually care about. It requires thinking about latency, cost per token, and what happens when the model hallucinates in ways your downstream system can’t catch. At this level, prompt engineering is debugging a system you can’t read, and the debugging, not the prompt-writing, is where the genuine skill lives.
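A sketch of the evaluation side, assuming a small hand-labeled set of contracts and the model’s predictions for each. Rather than reporting one accuracy number, it sorts every field into a failure mode: missing, wrong, or unsupported (the predicted value appears nowhere in the source, a crude hallucination signal). The cases, company names, and the verbatim-substring check are illustrative assumptions, and the per-million-token prices passed to `estimated_cost_usd` are placeholders rather than any provider’s actual rates.

```python
from collections import Counter
from typing import Optional

# Hypothetical eval set: source text, hand-labeled gold fields, and the model's output.
CASES = [
    {
        "source": "This Agreement between Acme Corp and Globex LLC is effective 2024-03-01.",
        "gold": {"party_a": "Acme Corp", "party_b": "Globex LLC"},
        "predicted": {"party_a": "Acme Corp", "party_b": "Globex LLC"},
    },
    {
        "source": "Agreement between Initech Inc. and Umbrella Ltd., effective 2023-07-15.",
        "gold": {"party_a": "Initech Inc.", "party_b": "Umbrella Ltd."},
        "predicted": {"party_a": "Initech Inc.", "party_b": "Umbrella Corporation"},
    },
    {
        "source": "Services agreement between Hooli and Pied Piper, effective 2022-01-10.",
        "gold": {"party_a": "Hooli", "party_b": "Pied Piper"},
        "predicted": {"party_a": "Pied Piper"},
    },
]


def classify_field(source: str, gold: str, predicted: Optional[str]) -> str:
    """Sort one extracted field into a failure mode."""
    if predicted is None:
        return "missing"
    if predicted == gold:
        return "correct"
    if predicted not in source:
        return "unsupported"  # not in the document at all: likely invented
    return "wrong"  # grounded in the text but still incorrect, e.g. parties swapped


def evaluate(cases: list[dict]) -> Counter:
    """Tally failure modes across every gold field in every case."""
    tally: Counter = Counter()
    for case in cases:
        for name, gold in case["gold"].items():
            tally[classify_field(case["source"], gold, case["predicted"].get(name))] += 1
    return tally


def estimated_cost_usd(input_tokens: int, output_tokens: int,
                       usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Cost of one call; the per-million-token prices are placeholders, not real rates."""
    return (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000


print(evaluate(CASES))
print(f"${estimated_cost_usd(4_000, 200, 1.0, 4.0) * 10_000:,.2f} for 10,000 documents")
```

Note that the swapped-parties case passes the grounding check. That is exactly the kind of error that is plausible, grounded in the text, and invisible downstream unless the eval set was built to catch it.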

The engineers who do this well are often not called prompt engineers. They’re ML engineers, or software engineers with strong applied ML instincts, who happen to be working with LLM APIs. The prompting is one component of what they build, not the identity of their role.

The Marketing Did Serve a Purpose

It’s worth being fair here. The “prompt engineering” framing, whatever its technical imprecision, did something useful: it gave non-engineers a legible entry point into working with AI systems. Telling a product manager or a lawyer that they could meaningfully improve AI outputs by thinking carefully about how they structured their requests was both true and empowering. It reduced the mystification around these systems.

The problem is when that entry point gets mistaken for a destination. Companies that are hiring teams of “prompt engineers” whose work doesn’t involve evaluation frameworks, doesn’t feed back into model selection or fine-tuning decisions, and doesn’t connect to measurable product outcomes are paying for something much closer to user research than engineering. That’s not inherently wasteful, but it should be priced and scoped accordingly.

The deeper issue is that the label inflates expectations on both sides of the hire. It makes organizations think they’ve closed their AI capability gap when they’ve hired someone who is good at writing instructions. And it puts people in roles titled “engineer” without the infrastructure, tooling, or feedback loops that engineering work requires.

What To Call It Instead

The useful reframe is this: prompting is a skill that lives inside larger disciplines, not a discipline unto itself. A researcher uses it inside research. An engineer uses it inside software development. A designer uses it inside product work. The skill is real and worth developing, in the same way that writing a clear specification is. But you wouldn’t hire a “spec engineer.”

The organizations getting the most value from this work are treating prompting as one technique among many in an applied AI toolkit, not as a job function. They’re pairing it with evaluation, with fine-tuning where it makes sense, and with genuine understanding of what these models can and cannot do reliably. That’s a harder sell than “we need prompt engineers,” but it’s a more accurate description of what good AI integration actually looks like.