Prompt engineering has a credibility problem that it created itself. On one side, practitioners treat it as a serious discipline with reproducible techniques. On the other, skeptics dismiss it as glorified trial-and-error. Both positions miss the more interesting truth: prompt engineering works for reasons that are partially understood, and it fails for reasons that are almost always predictable.

The techniques are real. The brittleness is also real. Getting value from the practice means understanding both at the same time.

Why Prompting Techniques Actually Work

When you add “Let’s think step by step” to a prompt, you tend to get better answers on multi-step reasoning tasks. This was demonstrated empirically in the 2022 paper “Large Language Models are Zero-Shot Reasoners” by Kojima et al., and the effect held up across multiple model families. It is not a placebo. Something structural is happening.

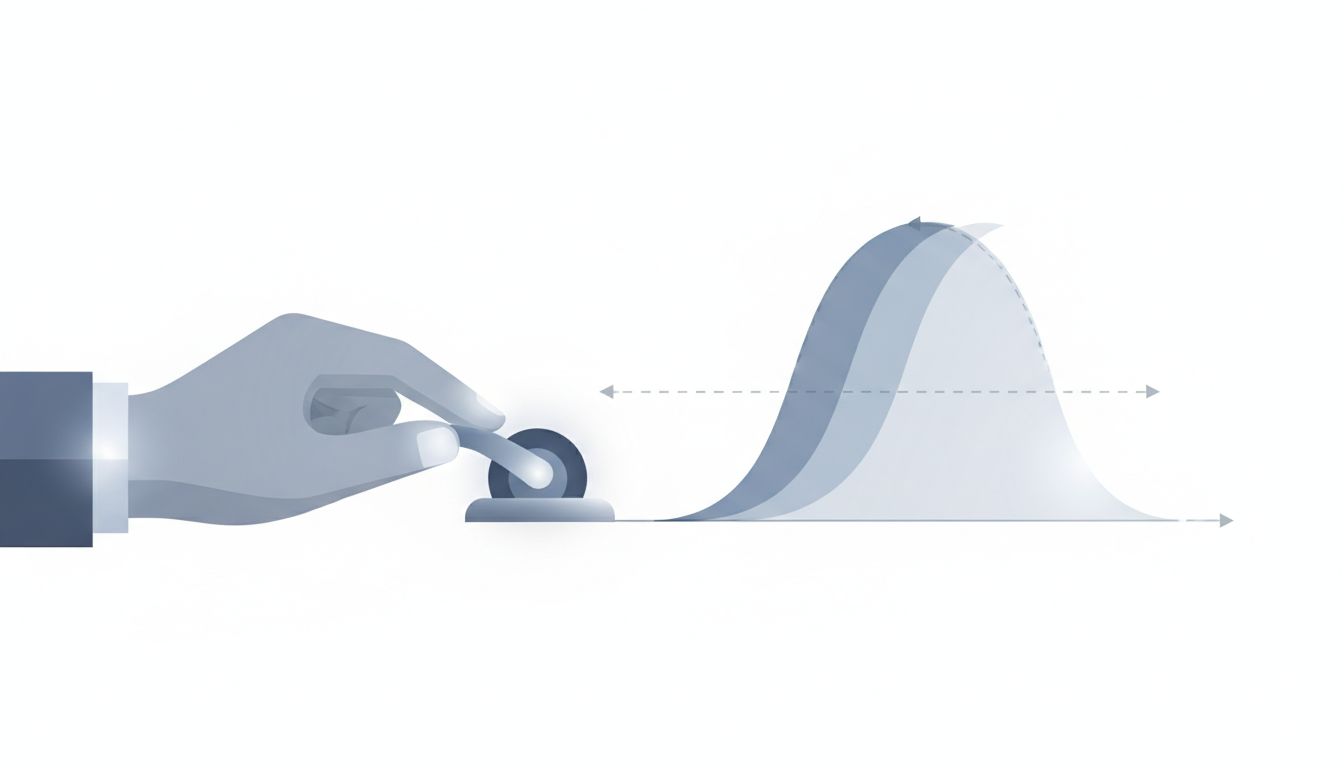

The best explanation is that these models learn from human text, and human text that works through a problem step-by-step tends to reach correct conclusions more often than text that jumps to answers. When you instruct the model to reason before concluding, you’re biasing the generation toward the part of the training distribution where careful reasoning lives. You’re not adding intelligence. You’re steering toward where intelligence already exists in the model’s learned patterns.
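A minimal sketch of the two-stage zero-shot setup, in Python. The trigger phrase is the one reported by Kojima et al.; `generate` is a hypothetical stand-in for whatever model client you use, and the wording of the answer-extraction prompt is an illustrative assumption loosely modeled on the paper's second step, not a quote from it.

```python
from __future__ import annotations

from typing import Callable

# Hypothetical model interface: prompt text in, generated text out.
Generate = Callable[[str], str]

# Trigger phrase from Kojima et al. (2022).
REASONING_TRIGGER = "Let's think step by step."


def zero_shot_cot(question: str, generate: Generate) -> tuple[str, str]:
    """Two-stage zero-shot chain-of-thought: elicit reasoning, then extract an answer."""
    # Stage 1: ask the model to reason before it concludes.
    reasoning_prompt = f"Q: {question}\nA: {REASONING_TRIGGER}"
    reasoning = generate(reasoning_prompt)
    # Stage 2: feed the reasoning back and ask only for the final answer.
    # (Extraction wording is an assumption for illustration.)
    answer_prompt = f"{reasoning_prompt} {reasoning}\nTherefore, the answer is"
    answer = generate(answer_prompt).strip()
    return reasoning, answer
```

Splitting reasoning from answer extraction lets the model generate its intermediate steps first while keeping the final answer short and easy to parse.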

Similarly, few-shot prompting works because examples compress a lot of implicit instruction. Telling a model to “write a professional but warm email” is ambiguous. Showing it three examples of professional-but-warm emails is not. The examples constrain the output space far more precisely than adjectives can.
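To make the contrast concrete, here is a sketch of assembling a few-shot prompt in Python. The `Example` type, the `Input:`/`Output:` delimiters, and `build_few_shot_prompt` are hypothetical choices for illustration; any consistent layout serves the same purpose.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Example:
    input_text: str
    output_text: str


def build_few_shot_prompt(instruction: str, examples: list[Example], query: str) -> str:
    """Assemble an instruction, worked examples, and the new input into one prompt."""
    parts = [instruction.strip(), ""]
    for i, ex in enumerate(examples, start=1):
        # Identical delimiters on every example let the model infer the input -> output pattern.
        parts.append(f"Example {i}\nInput: {ex.input_text}\nOutput: {ex.output_text}\n")
    # End on an open "Output:" so the model continues in the demonstrated format.
    parts.append(f"Input: {query}\nOutput:")
    return "\n".join(parts)
```

The instruction alone still carries the ambiguous adjectives; the examples are what pin down what “professional but warm” actually looks like.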

Role-prompting, system instructions, and formatting cues all function through similar mechanisms: they shift the probability distribution over the model’s next tokens toward regions that produce better outputs for your specific task. This is a real lever. It’s just not the only lever, and it’s not always the most important one.

The Ceiling You Can’t See Coming

Here’s the structural problem: prompts are optimized against a specific model at a specific point in time. When the model changes, all bets are partially off.

This happens more than people expect. OpenAI, Anthropic, and Google all update their production models, sometimes with minimal notice. A prompt carefully tuned on GPT-4 in early 2023 may behave differently on the version served under the same name today, because the weights behind that name can change. The interface stayed the same. The model did not.

This isn’t hypothetical. Developers building on the OpenAI API have repeatedly documented output drift after silent model updates, where applications that worked correctly began producing different formats, different verbosity levels, or different failure modes. The prompts hadn’t changed. The model had.

The deeper issue is that prompt engineering, as usually practiced, has no unit tests. You tune against examples you’ve seen, check that things look right, and ship. But you rarely have systematic coverage of edge cases, and you almost never have a regression suite that catches drift when the underlying model shifts beneath you. This is the same category of risk as depending heavily on an external API without monitoring it for behavior changes, and like that risk, it tends to surface as costs you didn’t budget for.
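A regression suite does not need to be elaborate to catch this kind of drift. The Python sketch below re-runs a fixed set of inputs against a candidate model and compares the results with stored baseline outputs, flagging two of the symptoms developers report: outputs that stop parsing as JSON, and large changes in verbosity. The function names, the JSON assumption, and the 1.5x length threshold are hypothetical choices, not a standard.

```python
from __future__ import annotations

import json
from typing import Callable

# Hypothetical model interface: prompt text in, generated text out.
Generate = Callable[[str], str]


def is_valid_json(text: str) -> bool:
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False


def drift_report(
    inputs: list[str],
    baseline: dict[str, str],
    candidate: Generate,
    max_length_ratio: float = 1.5,
) -> list[str]:
    """Re-run fixed inputs and flag outputs whose format or verbosity drifted from the baseline."""
    problems = []
    for prompt in inputs:
        old, new = baseline[prompt], candidate(prompt)
        # Format drift: the baseline parsed as JSON, the new output does not.
        if is_valid_json(old) and not is_valid_json(new):
            problems.append(f"format drift: {prompt!r} no longer returns valid JSON")
        # Verbosity drift: output length changed by more than the allowed ratio either way.
        ratio = (len(new) + 1) / (len(old) + 1)  # +1 avoids division by zero
        if ratio > max_length_ratio or ratio < 1 / max_length_ratio:
            problems.append(f"verbosity drift: {prompt!r} length ratio {ratio:.2f}")
    return problems
```

A real suite would add task-specific checks, but even crude ones like these turn a silent model update into a visible diff.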

Where Prompting Can’t Reach

Prompt engineering also has a harder ceiling: it cannot give a model knowledge or capabilities it doesn’t have.

If a model’s training data has a cutoff date, no prompt retrieves information from after that date. If a model consistently fails at a specific class of spatial reasoning problem, elaborate prompting reduces the failure rate but rarely eliminates it. You’re working within a fixed capability envelope, not expanding it.

This is where the hype around prompt engineering does genuine damage. Teams spend weeks iterating on prompts for tasks where the model is fundamentally not reliable enough, convincing themselves that one more tweak will cross the threshold. Sometimes it does. More often, they’ve hit the model’s actual capability ceiling and are mistaking marginal prompt improvements for a path to production-grade reliability.

Retrieval-augmented generation is sometimes proposed as the fix for knowledge gaps, but the architecture has its own failure modes, such as retrieving irrelevant passages or producing answers the retrieved text doesn’t support, so you’re often trading one class of error for another rather than eliminating the problem.

The honest diagnostic question is: are you prompting your way around a model limitation, or are you clarifying a task the model is already capable of? The first path has a low ceiling. The second path is where prompt engineering earns its reputation.

What Durable Prompt Engineering Looks Like

The teams that get sustained value from prompt engineering treat it less like a creative exercise and more like a software engineering problem.

They maintain versioned prompt libraries alongside the code that calls them. They write evals — test suites that check prompt outputs against expected results on a representative set of inputs — and they run those evals when they change a prompt or when they upgrade a model. They track output quality over time so that model drift shows up as a signal rather than a mystery.
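A minimal sketch of that workflow in Python, assuming a hypothetical `call(model_id, prompt)` client function: each prompt carries an explicit version, each eval case carries its own pass/fail check, and every run is tagged with both the prompt version and the model identifier so results can be compared over time. The names and the record format are illustrative, not taken from any particular eval framework.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable

# Hypothetical client signature: (model_id, prompt) -> completion text.
ModelCall = Callable[[str, str], str]


@dataclass(frozen=True)
class PromptVersion:
    """One entry in a versioned prompt library, kept in source control with the calling code."""
    name: str
    version: str
    template: str  # str.format placeholders, e.g. "Summarize: {text}"


@dataclass(frozen=True)
class EvalCase:
    """A representative input plus a check that decides whether an output is acceptable."""
    inputs: dict[str, str]
    check: Callable[[str], bool]


def run_eval(prompt: PromptVersion, model_id: str, cases: list[EvalCase], call: ModelCall) -> dict[str, object]:
    """Score one (prompt version, model) pair and return a record suitable for tracking over time."""
    passed = 0
    for case in cases:
        output = call(model_id, prompt.template.format(**case.inputs))
        if case.check(output):
            passed += 1
    return {
        "prompt": f"{prompt.name}@{prompt.version}",
        "model": model_id,
        "pass_rate": passed / len(cases),
    }
```

Storing one record per run means that a pass-rate drop after a model upgrade, with the prompt version unchanged, points at drift rather than at a prompt edit.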

They also make deliberate decisions about where prompt optimization is worth the investment. Prompting is cheap to iterate on, which makes it the right first tool when you’re exploring whether a model can do something at all. But for production systems where reliability matters, prompting is usually the starting point, not the finished solution. Fine-tuning, stricter output validation, or task decomposition often picks up where prompting plateaus.
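Stricter output validation can be as simple as refusing to trust a reply until code has checked it. The Python sketch below assumes a hypothetical ticket-classification task whose replies must be a JSON object with a string `category` and an integer `priority` from 1 to 3; `generate` is again a stand-in for your model client. Invalid replies trigger a retry that tells the model what was wrong, and repeated failure raises an error instead of passing bad data downstream.

```python
from __future__ import annotations

import json
from typing import Callable

# Hypothetical model interface: prompt text in, generated text out.
Generate = Callable[[str], str]


class ValidationError(ValueError):
    pass


def parse_ticket(raw: str) -> dict:
    """Validate a reply against the hypothetical schema {"category": str, "priority": 1-3}."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValidationError(f"not JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValidationError("expected a JSON object")
    if not isinstance(data.get("category"), str):
        raise ValidationError("'category' must be a string")
    priority = data.get("priority")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if not isinstance(priority, int) or isinstance(priority, bool) or priority not in (1, 2, 3):
        raise ValidationError("'priority' must be 1, 2, or 3")
    return data


def generate_validated(prompt: str, generate: Generate, max_attempts: int = 3) -> dict:
    """Call the model, validate in code, and retry with the validation error appended."""
    attempt_prompt = prompt
    for _ in range(max_attempts):
        raw = generate(attempt_prompt)
        try:
            return parse_ticket(raw)
        except ValidationError as err:
            # Tell the model what was wrong instead of silently accepting bad output.
            attempt_prompt = f"{prompt}\n\nYour previous reply was rejected ({err}). Reply with JSON only."
    raise ValidationError(f"no valid output after {max_attempts} attempts")
```

Because the validation lives in deterministic code, it keeps holding even if a model update changes how replies are formatted.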

The discipline also means being honest when a task isn’t a good fit. Not everything benefits from clever prompting. Some tasks need deterministic logic. Some need structured data the model was never trained on. Prompt engineering’s biggest failure mode isn’t a bad prompt. It’s applying prompting to a problem it was never going to solve, and iterating long enough that sunk cost makes it hard to stop.

Prompting as a Diagnostic Tool, Not a Solution

The most useful reframe is this: treat prompt engineering primarily as a way to understand what a model can do, not as the mechanism you rely on for production correctness.

When you explore a task through careful prompting, you learn where the model is reliable, where it’s inconsistent, and where it fails in ways that follow a pattern. That knowledge is genuinely valuable, and it informs better architectural decisions about when to use the model, how to constrain its outputs, and when to route around it entirely.
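One way to make this exploration systematic is to sample the same prompt several times (at a nonzero temperature) and measure how often the answers agree. The Python sketch below assumes a hypothetical `sample(prompt)` function that returns one completion per call, normalizes answers only by case and surrounding whitespace, and uses an arbitrary 0.9 agreement threshold. Because agreement measures consistency rather than correctness, it also compares the majority answer with an expected one, which separates random failures from failures that follow a pattern.

```python
from __future__ import annotations

from collections import Counter
from typing import Callable

# Hypothetical: returns one sampled completion per call (nonzero temperature assumed).
Sampler = Callable[[str], str]


def normalize(answer: str) -> str:
    return answer.strip().lower()


def consistency_probe(prompt: str, sample: Sampler, n: int = 10) -> tuple[str, float]:
    """Sample one prompt n times; return the majority answer and the share of samples agreeing with it."""
    answers = [normalize(sample(prompt)) for _ in range(n)]
    majority, count = Counter(answers).most_common(1)[0]
    return majority, count / n


def triage(cases: dict[str, str], sample: Sampler, n: int = 10, reliable_at: float = 0.9) -> dict[str, list[str]]:
    """Bucket prompts (mapped to expected answers) by how the model behaves on them."""
    buckets: dict[str, list[str]] = {"reliable": [], "inconsistent": [], "consistently_wrong": []}
    for prompt, expected in cases.items():
        majority, agreement = consistency_probe(prompt, sample, n)
        if agreement < reliable_at:
            buckets["inconsistent"].append(prompt)
        elif majority == normalize(expected):
            buckets["reliable"].append(prompt)
        else:
            buckets["consistently_wrong"].append(prompt)
    return buckets
```

Prompts in the consistently_wrong bucket are often the most informative: the model fails the same way every time, which is a hint that more prompting may not fix it.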

Prompt engineering works. It works because language models are steerable, because examples are better specifications than descriptions, and because structuring a task clearly reduces the space of plausible completions. It stops working when you treat it as a substitute for understanding the underlying system, or when you optimize so tightly for current behavior that any change in the model breaks your assumptions.

The engineers getting the most out of these systems are the ones who hold both truths at once. Prompting is a real skill. It’s also a layer built on something that can shift without warning. Design accordingly.