Retrieval-Augmented Generation is a genuine advancement in how we deploy large language models. If you’re building anything serious with AI right now, you probably should be using it. That part isn’t controversial.

What is controversial, and what I want to push back on, is the idea that RAG is a reliability fix. It isn’t. It’s a knowledge management strategy. The distinction matters enormously when you’re deciding what to build, how much to trust your outputs, and where your system will fail.

What RAG actually does

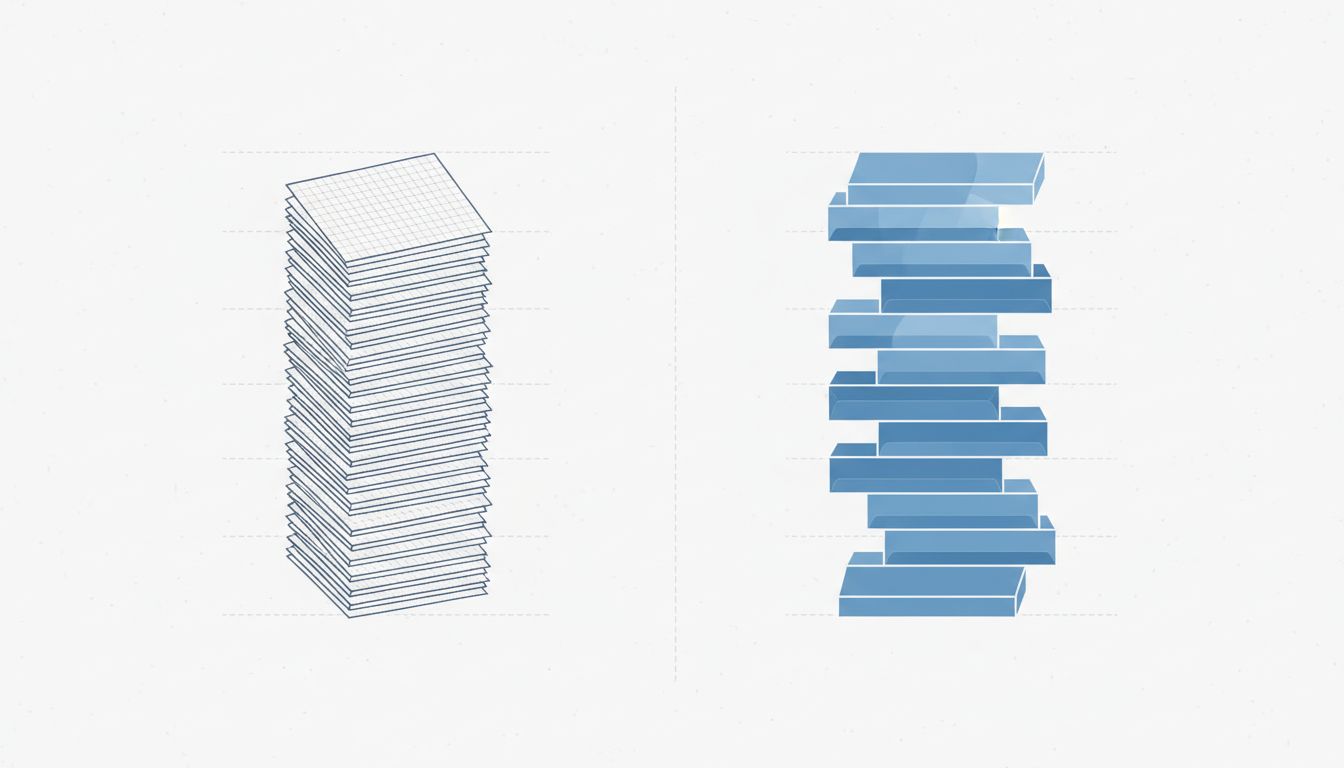

The core mechanic is straightforward. Instead of relying entirely on what a model learned during training, you retrieve relevant documents at inference time and include them in the prompt. The model generates its response grounded in that retrieved context rather than (or in addition to) its parametric memory.
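To make the mechanic concrete, here is a minimal sketch of retrieve-then-prompt. Everything in it is an illustrative assumption: `embed` is a toy word-count stand-in for a real embedding model, `DOCS` is a made-up knowledge base, and the function names are mine rather than any library's API.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts (a real system uses a learned model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[tuple[float, str]]:
    """Return the k highest-scoring (score, document) pairs for the query."""
    q = embed(query)
    scored = [(cosine(q, embed(d)), d) for d in docs]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]


def build_prompt(query: str, hits: list[tuple[float, str]]) -> str:
    """Paste the retrieved documents into the prompt ahead of the question."""
    context = "\n".join(f"[{i + 1}] {doc}" for i, (_, doc) in enumerate(hits))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical knowledge base, for illustration only.
DOCS = [
    "Refunds are issued within 14 days of purchase.",
    "Shipping takes 3 to 5 business days.",
    "Support is available by email on weekdays.",
]

hits = retrieve("When are refunds issued?", DOCS, k=2)
prompt = build_prompt("When are refunds issued?", hits)
print(prompt)
```

Note that the second retrieved document shares no words with the query and still lands in the prompt, because top-k retrieval always returns k results. The model has no way of knowing which of its notes were a stretch.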

If you need a clearer mental model: the LLM is a very capable reasoner, and RAG gives it a set of notes to reason from. Understanding how embeddings make retrieval work helps clarify the mechanics, but the important thing to grasp is that retrieval is a separate system with its own failure modes, operating before the model even starts generating.

That’s exactly where the trouble starts.

The retrieval step fails silently

Hallucinations from a vanilla LLM are frustrating, but at least they originate in one place. With RAG, you’ve introduced a retrieval step that can fail in ways that are harder to catch. If your vector search returns documents that are topically adjacent but not actually relevant, the model will often use them anyway. It doesn’t know the retrieval was bad. It just sees context and starts reasoning.

The result looks like a confident, well-sourced answer. It just happens to be wrong. This is worse than a direct hallucination in some ways because it’s harder to audit. Users see citations and assume accuracy. Teams see citations and assume the system worked. Neither assumption is justified.

Retrieval quality degrades with document volume, with poor chunking strategies, with queries that are ambiguous, and with knowledge bases that have internal contradictions. None of these are problems RAG was designed to solve.
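One way to make some of these failures less silent is to gate retrieved chunks on their similarity score and abstain when nothing clears the bar. The sketch below assumes hits arrive as `(score, text)` pairs; the `gate_hits` name and the `0.35` threshold are hypothetical, and a real threshold would have to be tuned against your own evals. It only catches obviously weak matches: a topically adjacent document can still score well.

```python
from dataclasses import dataclass


@dataclass
class GatedRetrieval:
    kept: list      # (score, text) pairs at or above the threshold
    dropped: list   # (score, text) pairs below the threshold

    @property
    def should_abstain(self) -> bool:
        """True when no chunk was strong enough to pass to the model."""
        return not self.kept


def gate_hits(hits, min_score: float = 0.35) -> GatedRetrieval:
    """Split hits by score so weak retrievals are visible instead of silent."""
    kept = [(s, d) for s, d in hits if s >= min_score]
    dropped = [(s, d) for s, d in hits if s < min_score]
    return GatedRetrieval(kept=kept, dropped=dropped)


# Illustrative scores only; none of these come from a real system.
weak = gate_hits([(0.21, "Office parking rules"), (0.18, "Cafeteria menu")])
mixed = gate_hits([(0.62, "Refund policy"), (0.20, "Cafeteria menu")])
print(weak.should_abstain, mixed.should_abstain)
```

Logging `dropped` alongside `kept` gives you a record of what the retriever wanted to hand over, which is useful when you later have to explain a bad answer.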

Garbage in, confident garbage out

RAG shifts the quality problem from the model to your data pipeline. If your knowledge base contains outdated documentation, conflicting versions, or poorly structured content, the model will synthesize those problems into coherent-sounding responses. The LLM’s fluency becomes a liability here: it irons out the contradictions in your source material and presents you with something that reads as authoritative.

This is the knowledge management problem in disguise. Organizations that struggled to maintain accurate internal documentation before RAG will struggle in exactly the same ways after. The AI doesn’t audit your content. It uses it. Deploying the model is where the real work begins, because the model is only as good as what you’re feeding it.
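Since the model won't audit your content, something else has to. As a rough sketch of what a recurring check might look for, the snippet below flags documents that are past a freshness cutoff and titles that exist in more than one version. The `Doc` fields, the `audit` function, and the 365-day cutoff are all assumptions for illustration; your metadata will look different.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Doc:
    title: str
    version: str
    updated: date


def audit(docs, today: date, max_age_days: int = 365) -> dict:
    """Report titles with stale copies and titles present in several versions."""
    stale = sorted({d.title for d in docs if (today - d.updated).days > max_age_days})
    versions = defaultdict(set)
    for d in docs:
        versions[d.title].add(d.version)
    multiple = sorted(t for t, v in versions.items() if len(v) > 1)
    return {"stale": stale, "multiple_versions": multiple}


# Hypothetical knowledge base entries.
KB = [
    Doc("vpn-setup", "1", date(2021, 1, 1)),
    Doc("vpn-setup", "2", date(2024, 6, 1)),
    Doc("expenses", "1", date(2024, 5, 1)),
]

report = audit(KB, today=date(2024, 12, 1))
print(report)
```

A check like this finds only structural problems. Two current documents that quietly disagree with each other still need a human reader.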

RAG doesn’t fix reasoning failures

Even when retrieval works perfectly and the retrieved documents are accurate, the model can still reason poorly from them. Complex multi-hop questions, where you need to synthesize information across several retrieved chunks, remain genuinely hard. The model may answer based on a single strong-looking chunk while ignoring contradicting evidence elsewhere in the context.

The broader point: RAG addresses one specific weakness (stale parametric knowledge) while leaving the other weaknesses (reasoning errors, context misuse, confident wrongness) largely intact. Teams that deploy RAG and reduce their human review because they assume the problem is solved are making a dangerous trade.

The counterargument

Fair-minded critics will say I’m setting up a straw man. Nobody serious claims RAG eliminates hallucinations entirely. The actual claim is that it reduces them, provides auditability through citations, and makes errors easier to catch and correct.

This is true. RAG systems do produce fewer factual errors on knowledge-retrieval tasks than equivalent models without retrieval. Evals bear this out consistently. And citations do make debugging easier, even if they create false confidence in some users.

I’ll also grant that many of the failure modes I’ve described, specifically bad retrieval and poor knowledge bases, are engineering problems with engineering solutions. Better chunking strategies, hybrid search, reranking models, and regular content audits all help substantially.
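As one example of what "hybrid search" can mean in practice, reciprocal rank fusion merges a keyword ranking and a vector ranking by summing `1 / (k + rank)` for each document across the lists. This is a sketch of that one technique, not the only way to do hybrid search; the document IDs are made up, and `k=60` is a commonly used default rather than a tuned value.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k: int = 60) -> list:
    """Fuse several ranked lists of document IDs into a single ranking."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for deterministic output.
    return sorted(scores, key=lambda d: (-scores[d], d))


keyword_ranking = ["a", "b", "c"]  # e.g. from a keyword (BM25-style) index
vector_ranking = ["b", "c", "d"]   # e.g. from an embedding index

fused = reciprocal_rank_fusion([keyword_ranking, vector_ranking])
print(fused)  # ['b', 'c', 'a', 'd']
```

Documents that both retrievers agree on rise to the top, which is the property that makes fusion helpful against one retriever's blind spots. It does nothing for the case where both retrievers are wrong together.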

But the marketing narrative around RAG has moved well ahead of the measured reality. Teams are adopting it as an answer to trust and reliability, not just accuracy, and that framing leads to under-investing in the things that actually build trustworthy systems: rigorous evals, human review workflows, and honest communication with users about what the system can and can’t do.

What to do with this

Use RAG. It’s a net improvement for almost any knowledge-intensive application. But treat it as what it is: a better way to give a model access to information, not a guarantee that it will use that information correctly.

That means instrumenting your retrieval step separately from your generation step so you can tell which one failed. It means auditing your knowledge base regularly, not just at launch. It means keeping human review in your loop for anything consequential, not removing it because the system now has citations.
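Here is a minimal sketch of what separate instrumentation could look like: each request produces a trace that records what was retrieved (with scores) apart from what was generated. The `Trace` fields and the `retrieve` and `generate` callables are hypothetical stand-ins for whatever your stack provides; I'm assuming `retrieve` returns `(score, doc_id, text)` tuples.

```python
import time
from dataclasses import dataclass, field


@dataclass
class Trace:
    query: str
    retrieved: list = field(default_factory=list)  # (score, doc_id) pairs
    retrieval_ms: float = 0.0
    answer: str = ""
    generation_ms: float = 0.0


def run_with_trace(query: str, retrieve, generate) -> Trace:
    """Run retrieval and generation as two separately recorded stages."""
    trace = Trace(query=query)

    start = time.perf_counter()
    hits = retrieve(query)  # assumed to return (score, doc_id, text) tuples
    trace.retrieval_ms = (time.perf_counter() - start) * 1000
    trace.retrieved = [(score, doc_id) for score, doc_id, _ in hits]

    start = time.perf_counter()
    trace.answer = generate(query, [text for _, _, text in hits])
    trace.generation_ms = (time.perf_counter() - start) * 1000
    return trace


# Stub stages so the sketch runs on its own; swap in real components.
def fake_retrieve(query):
    return [(0.8, "doc-1", "Refunds are issued within 14 days of purchase.")]


def fake_generate(query, chunks):
    return f"Based on {len(chunks)} chunk(s)"


trace = run_with_trace("When are refunds issued?", fake_retrieve, fake_generate)
print(trace.retrieved, trace.answer)
```

With traces like this stored per request, a reviewer looking at a wrong answer can check first whether the right document was retrieved at all, and only then ask whether the model misused it.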

The hallucinations haven’t gone away. They’ve just found a new place to hide.